metadata

license: other

base_model: black-forest-labs/FLUX.1-dev

tags:

- flux

- flux-diffusers

- text-to-image

- diffusers

- simpletuner

- lora

- template:sd-lora

inference: true

widget:

- text: unconditional (blank prompt)

parameters:

negative_prompt: blurry, cropped, ugly

output:

url: ./assets/image_0_0.png

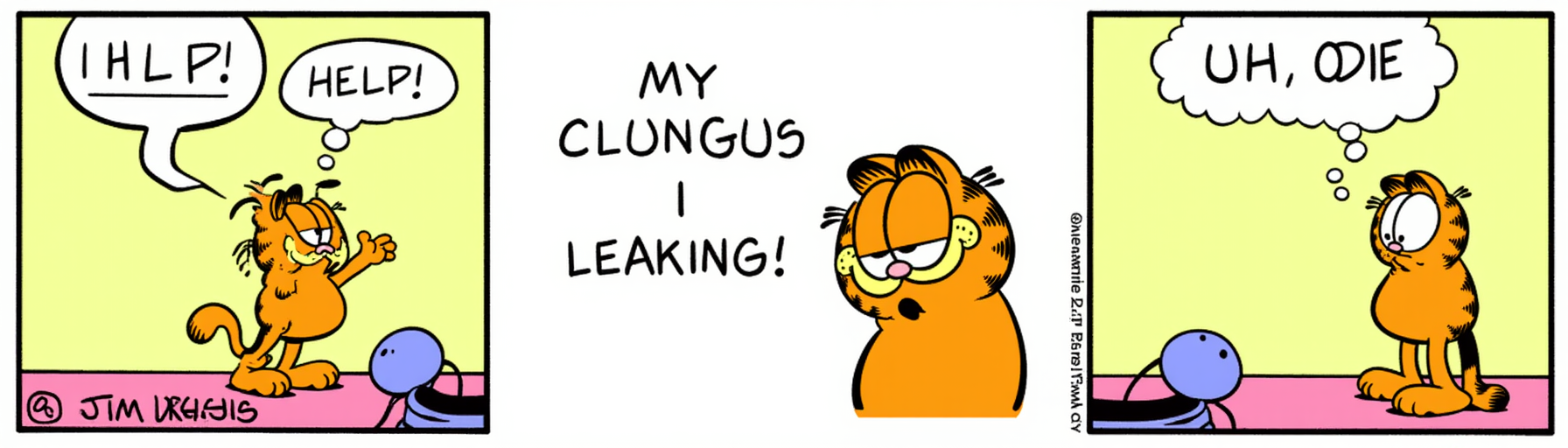

- text: >-

a comic strip of garfield, by jim davis. the first panel has garfield

saying Help!. the second panel has garfield saying My clungus is leaking!

and the third panel has Odie saying uh oh!

parameters:

negative_prompt: blurry, cropped, ugly

output:

url: ./assets/image_1_0.png

- text: >-

a comic strip by jim davis, showcasing odie in his full demonic form while

garfield cowers in the background

parameters:

negative_prompt: blurry, cropped, ugly

output:

url: ./assets/image_2_0.png

- text: a picture of garfield in walmart, shopping amongst the real people

parameters:

negative_prompt: blurry, cropped, ugly

output:

url: ./assets/image_3_0.png

- text: A photo-realistic image of a cat

parameters:

negative_prompt: blurry, cropped, ugly

output:

url: ./assets/image_4_0.png

simpletuner-lora

This is a LyCORIS adapter derived from black-forest-labs/FLUX.1-dev.

The main validation prompt used during training was:

A photo-realistic image of a cat

Validation settings

- CFG: 3.0

- CFG Rescale: 0.0

- Steps: 20

- Sampler: None

- Seed: 42

- Resolution: 1776x512

Note: The validation settings are not necessarily the same as the training settings.
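To reproduce a validation render, the settings above can be mapped onto pipeline arguments. The sketch below is an illustration, not part of the training code: the helper name `validation_kwargs` is hypothetical, the resolution string is assumed to read as width x height, and the keyword names are assumed to match those passed to the `pipeline(...)` call in the Inference section. CFG Rescale and Sampler are left out because the inference script exposes no matching argument.

```python
# Validation settings copied from the list above.
VALIDATION_SETTINGS = {
    "cfg": 3.0,
    "steps": 20,
    "seed": 42,
    "resolution": "1776x512",
}


def validation_kwargs(settings):
    """Translate the settings into pipeline keyword arguments (hypothetical helper).

    Assumes the resolution string is WIDTHxHEIGHT.
    """
    width, height = (int(part) for part in settings["resolution"].split("x"))
    return {
        "guidance_scale": settings["cfg"],
        "num_inference_steps": settings["steps"],
        "width": width,
        "height": height,
    }


kwargs = validation_kwargs(VALIDATION_SETTINGS)
print(kwargs)
```

The seed is not a pipeline keyword here; in the inference script it is applied through `torch.Generator(...).manual_seed(seed)`. Note that these values differ from the script's defaults of 30 steps, a guidance scale of 3.5, and 1024x1024.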

You can find some example images in the following gallery:

- Prompt: unconditional (blank prompt)
  Negative Prompt: blurry, cropped, ugly

- Prompt: a comic strip of garfield, by jim davis. the first panel has garfield saying Help!. the second panel has garfield saying My clungus is leaking! and the third panel has Odie saying uh oh!
  Negative Prompt: blurry, cropped, ugly

- Prompt: a comic strip by jim davis, showcasing odie in his full demonic form while garfield cowers in the background
  Negative Prompt: blurry, cropped, ugly

- Prompt: a picture of garfield in walmart, shopping amongst the real people
  Negative Prompt: blurry, cropped, ugly

- Prompt: A photo-realistic image of a cat
  Negative Prompt: blurry, cropped, ugly

The text encoder was not trained. You may reuse the base model text encoder for inference.

Training settings

- Training epochs: 2

- Training steps: 2000

- Learning rate: 0.0001

- Effective batch size: 2

- Micro-batch size: 2

- Gradient accumulation steps: 1

- Number of GPUs: 1

- Prediction type: flow-matching

- Rescaled betas zero SNR: False

- Optimizer: optimi-lion

- Precision: bf16

- Quantised: Yes (fp8-quanto)

- Xformers: Not used

- LyCORIS Config:

{
    "algo": "lokr",
    "multiplier": 1.0,
    "linear_dim": 10000,
    "linear_alpha": 1,
    "factor": 16,
    "apply_preset": {
        "target_module": [
            "Attention",
            "FeedForward"
        ],
        "module_algo_map": {
            "Attention": {
                "factor": 16
            },
            "FeedForward": {
                "factor": 8
            }
        }
    }
}
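The `factor` values control how LoKr splits each weight matrix into a Kronecker product of a small block and a larger block. The sketch below estimates the resulting parameter counts. It is a simplified illustration rather than the LyCORIS implementation: `split_dim` and `lokr_param_count` are hypothetical helpers, the split rule (largest divisor not exceeding `factor`) is an assumption, the 3072 and 12288 layer widths are assumed example shapes, and the large block is assumed to be stored as a full matrix because `linear_dim` is set far above any layer width.

```python
def split_dim(dim, factor):
    """Split dim into (small, large) with small * large == dim and small <= factor."""
    small = max(d for d in range(1, factor + 1) if dim % d == 0)
    return small, dim // small


def lokr_param_count(out_features, in_features, factor):
    """Count parameters in W1 (small block) plus W2 (large block),
    where the weight update is modelled as kron(W1, W2)."""
    out_small, out_large = split_dim(out_features, factor)
    in_small, in_large = split_dim(in_features, factor)
    return out_small * in_small + out_large * in_large


# Assumed example shapes, not read from the model.
attention = lokr_param_count(3072, 3072, factor=16)
feed_forward = lokr_param_count(12288, 3072, factor=8)

print("attention adapter params:", attention, "of", 3072 * 3072)
print("feed-forward adapter params:", feed_forward, "of", 12288 * 3072)
```

Under these assumptions a smaller factor leaves a larger second block, so the FeedForward modules (factor 8) receive more trainable parameters per layer than the Attention modules (factor 16).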

Datasets

garfield

- Repeats: 0

- Total number of images: 2206

- Total number of aspect buckets: 4

- Resolution: 512 px

- Cropped: False

- Crop style: None

- Crop aspect: None
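The batch and epoch figures in the training settings can be cross-checked against the dataset size. This is a back-of-the-envelope sketch: it assumes the effective batch size is the product of micro-batch size, gradient accumulation steps, and GPU count, and that `Repeats: 0` means each image appears once per epoch.

```python
# Values copied from the training settings and dataset summary.
micro_batch_size = 2
gradient_accumulation_steps = 1
num_gpus = 1
training_steps = 2000
total_images = 2206

# Samples consumed per optimizer step.
effective_batch_size = micro_batch_size * gradient_accumulation_steps * num_gpus

# Total samples processed, and how many passes over the dataset that represents.
samples_seen = training_steps * effective_batch_size
passes_over_dataset = samples_seen / total_images

print(effective_batch_size)           # 2
print(samples_seen)                   # 4000
print(round(passes_over_dataset, 2))  # 1.81
```

At roughly 1.81 passes, training would have stopped partway through the second epoch, which is in line with the reported "Training epochs: 2".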

Inference

import argparse

import torch

from helpers.models.flux.pipeline import FluxPipeline as DiffusionPipeline

from lycoris import create_lycoris_from_weights

from huggingface_hub import hf_hub_download

def generate_image(pipeline, prompt, output_file, num_inference_steps, width, height, guidance_scale, seed, device):
    # Move the pipeline to the target device
    pipeline.to(device)

    # Seed the generator so the same prompt and seed reproduce the same image
    generator = torch.Generator(device=device).manual_seed(seed)
    image = pipeline(
        prompt=prompt,
        num_inference_steps=num_inference_steps,
        generator=generator,
        width=width,
        height=height,
        guidance_scale=guidance_scale,
    ).images[0]

    # Save the image to the path supplied by the caller
    image.save(output_file, format="PNG")
    print(f"Image saved as {output_file}")


def main():
    parser = argparse.ArgumentParser(description="Generate images using a custom diffusion pipeline with LoRA weights.")
    parser.add_argument("--model_id", type=str, default='black-forest-labs/FLUX.1-dev', help="Model ID from Hugging Face Hub.")
    parser.add_argument("--adapter_id", type=str, default='pytorch_lora_weights.safetensors', help="LoRA weights file.")
    parser.add_argument("--lora_scale", type=float, default=1.0, help="Scale for LoRA weights.")
    parser.add_argument("--output_file", type=str, default="output.png", help="Output file name for the generated image.")
    parser.add_argument("--num_inference_steps", type=int, default=30, help="Number of inference steps.")
    parser.add_argument("--guidance_scale", type=float, default=3.5, help="Guidance scale for the generation.")
    parser.add_argument("--seed", type=int, default=1641421826, help="Random seed for reproducibility.")
    parser.add_argument("--device", type=str, default='cuda' if torch.cuda.is_available() else 'mps' if torch.backends.mps.is_available() else 'cpu', help="Device to run the model on.")
    args = parser.parse_args()

    # Download the adapter weights, then load the base model
    hf_hub_download(repo_id="terminusresearch/flux-lokr-garfield-nomask", filename=args.adapter_id, local_dir="./")
    pipeline = DiffusionPipeline.from_pretrained(args.model_id, torch_dtype=torch.bfloat16)

    # Apply the LyCORIS weights and merge them into the transformer
    wrapper, _ = create_lycoris_from_weights(args.lora_scale, args.adapter_id, pipeline.transformer)
    wrapper.merge_to()

    print("Model loaded successfully. Ready to generate images.")

    # Default resolution; change it at the prompt with "resolution:WIDTHxHEIGHT"
    width, height = 1024, 1024

    while True:
        user_input = input("Enter a prompt or 'quit' to exit: ")
        if user_input.lower() == 'quit':
            break

        # Check for resolution command
        if user_input.startswith("resolution:"):
            resolution = user_input.split(":")[1]
            width, height = map(int, resolution.split("x"))
            print(f"Resolution set to {width}x{height}")
            continue

        prompt = user_input
        output_file = args.output_file.replace(".png", f"_{prompt.replace(' ', '_')}.png")

        # Use the default or previously set resolution
        generate_image(
            pipeline=pipeline,
            prompt=prompt,
            output_file=output_file,
            num_inference_steps=args.num_inference_steps,
            width=width,
            height=height,
            guidance_scale=args.guidance_scale,
            seed=args.seed,
            device=args.device,
        )


if __name__ == "__main__":
    main()
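The interactive loop turns the raw prompt into a file name and parses `resolution:WIDTHxHEIGHT` without validation, so prompts containing punctuation or a malformed resolution command can cause errors. The following sketch shows one way to harden both steps. `parse_resolution` and `prompt_to_filename` are hypothetical helpers, not part of SimpleTuner, and the 80-character cap on the file name is an arbitrary choice.

```python
import re


def parse_resolution(command):
    """Parse 'resolution:WIDTHxHEIGHT' into (width, height), or raise ValueError."""
    match = re.fullmatch(r"resolution:\s*(\d+)\s*x\s*(\d+)", command.strip(), flags=re.IGNORECASE)
    if match is None:
        raise ValueError(f"Expected resolution:WIDTHxHEIGHT, got {command!r}")
    width, height = int(match.group(1)), int(match.group(2))
    if width == 0 or height == 0:
        raise ValueError("Width and height must be positive")
    return width, height


def prompt_to_filename(prompt, base="output.png", max_length=80):
    """Build a file name from the prompt using only letters, digits, '_' and '-'."""
    slug = re.sub(r"[^A-Za-z0-9_-]+", "_", prompt).strip("_")[:max_length]
    stem = base[:-4] if base.endswith(".png") else base
    return f"{stem}_{slug}.png" if slug else f"{stem}.png"


print(parse_resolution("resolution:1776x512"))
print(prompt_to_filename("garfield saying Help!. uh oh!"))
```

In the loop, a `ValueError` from `parse_resolution` could be caught and reported so that a typo does not end the session.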