---
language:
- en
tags:
- speech
---

# WavLM-Base for Speaker Diarization

[Microsoft's WavLM](https://github.com/microsoft/unilm/tree/master/wavlm)

The model was pre-trained on speech audio sampled at 16 kHz, with an utterance and speaker contrastive loss. When using the model, make sure that your speech input is also sampled at 16 kHz.
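If the input audio uses a different sampling rate, it has to be resampled before it reaches the feature extractor. The following is a minimal sketch of that step, not part of the original model card. The helper name `to_16k` is hypothetical, and the linear interpolation it uses is a crude stand-in with no anti-aliasing filter; a dedicated resampler from an audio library is the better choice in practice.

```python
import numpy as np

TARGET_SR = 16_000  # sampling rate the model expects


def to_16k(waveform, orig_sr, target_sr=TARGET_SR):
    """Resample a mono waveform to target_sr using linear interpolation.

    Hypothetical helper for illustration only: linear interpolation does not
    low-pass filter the signal, so downsampling can introduce aliasing.
    """
    waveform = np.asarray(waveform, dtype=np.float32)
    if orig_sr == target_sr:
        return waveform
    duration_s = len(waveform) / orig_sr
    num_out = int(round(duration_s * target_sr))
    old_times = np.arange(len(waveform)) / orig_sr  # timestamps of input samples
    new_times = np.arange(num_out) / target_sr      # timestamps of output samples
    return np.interp(new_times, old_times, waveform).astype(np.float32)


# One second of 44.1 kHz audio becomes 16,000 samples.
one_second = np.zeros(44_100, dtype=np.float32)
resampled = to_16k(one_second, orig_sr=44_100)
```

The resampled array can then be passed to the feature extractor in place of the raw audio.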

The model was pre-trained on 960h of [Librispeech](https://huggingface.co/datasets/librispeech_asr).

[Paper: WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900)

Authors: Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei

**Abstract**

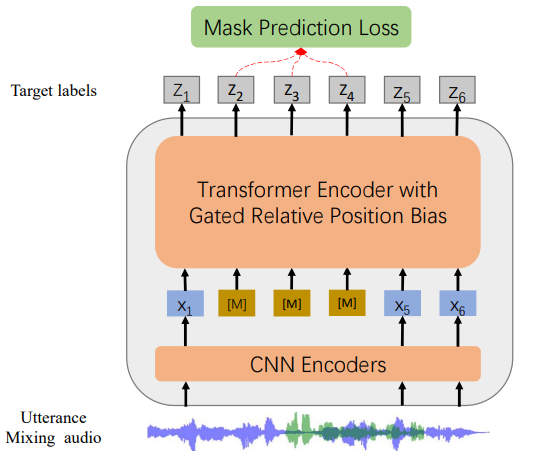

*Self-supervised learning (SSL) achieves great success in speech recognition, while limited exploration has been attempted for other speech processing tasks. As speech signal contains multi-faceted information including speaker identity, paralinguistics, spoken content, etc., learning universal representations for all speech tasks is challenging. In this paper, we propose a new pre-trained model, WavLM, to solve full-stack downstream speech tasks. WavLM is built based on the HuBERT framework, with an emphasis on both spoken content modeling and speaker identity preservation. We first equip the Transformer structure with gated relative position bias to improve its capability on recognition tasks. For better speaker discrimination, we propose an utterance mixing training strategy, where additional overlapped utterances are created unsupervisely and incorporated during model training. Lastly, we scale up the training dataset from 60k hours to 94k hours. WavLM Large achieves state-of-the-art performance on the SUPERB benchmark, and brings significant improvements for various speech processing tasks on their representative benchmarks.*

The original model can be found under https://github.com/microsoft/unilm/tree/master/wavlm.

# Fine-tuning details

The model is fine-tuned on the [LibriMix dataset](https://github.com/JorisCos/LibriMix), using only a single linear layer on top of the network to map its frame-level outputs to speaker activity.
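To make the idea of a linear layer over frame-level outputs concrete, here is a rough sketch in NumPy. It is not the actual fine-tuning code: the function name `linear_frame_head` is hypothetical, the weights are random, and the sizes (768-dimensional frames, 2 speakers) are assumptions chosen for illustration.

```python
import numpy as np


def linear_frame_head(hidden_states, weight, bias):
    """Apply one linear layer independently to every frame.

    hidden_states: (num_frames, hidden_size) frame-level network outputs
    weight:        (hidden_size, num_speakers)
    bias:          (num_speakers,)
    Returns logits of shape (num_frames, num_speakers).
    """
    return hidden_states @ weight + bias


rng = np.random.default_rng(0)
num_frames, hidden_size, num_speakers = 50, 768, 2  # assumed, illustrative sizes

hidden_states = rng.standard_normal((num_frames, hidden_size))
weight = rng.standard_normal((hidden_size, num_speakers)) * 0.01  # random stand-in weights
bias = np.zeros(num_speakers)

logits = linear_frame_head(hidden_states, weight, bias)
# A sigmoid turns each logit into an independent per-speaker activity probability.
probabilities = 1.0 / (1.0 + np.exp(-logits))
```

Because each speaker gets its own sigmoid output, several speakers can be marked active in the same frame, which is what overlapping speech requires.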

# Usage

## Speaker Diarization

```python
from transformers import Wav2Vec2FeatureExtractor, WavLMForAudioFrameClassification
from datasets import load_dataset
import torch

dataset = load_dataset("hf-internal-testing/librispeech_asr_demo", "clean", split="validation")

feature_extractor = Wav2Vec2FeatureExtractor.from_pretrained('microsoft/wavlm-base-sd')
model = WavLMForAudioFrameClassification.from_pretrained('microsoft/wavlm-base-sd')

# audio file is decoded on the fly
audio = dataset[0]["audio"]
# pass the sampling rate explicitly so a mismatch with the expected 16 kHz is caught
inputs = feature_extractor(audio["array"], sampling_rate=audio["sampling_rate"], return_tensors="pt")

# inference only, so gradients are not needed
with torch.no_grad():
    logits = model(**inputs).logits

# independent per-speaker activity probabilities for each frame
probabilities = torch.sigmoid(logits[0])

# labels is a binary array of shape (num_frames, num_speakers);
# more than one speaker can be active in the same frame
labels = (probabilities > 0.5).long()
```
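The per-frame `labels` from the snippet above are often easier to read as time segments per speaker. The sketch below shows one way to do that conversion. The helper `frames_to_segments` is hypothetical, and the 20 ms frame stride is an assumption (320 input samples per output frame at 16 kHz) that should be checked against the model configuration.

```python
FRAME_STRIDE_S = 0.02  # assumption: one output frame per 20 ms of audio


def frames_to_segments(labels, frame_stride_s=FRAME_STRIDE_S):
    """Turn a (num_frames, num_speakers) 0/1 matrix into segments.

    Returns a list of (speaker_index, start_seconds, end_seconds) tuples,
    one per contiguous run of active frames for each speaker.
    """
    segments = []
    if not labels:
        return segments
    num_speakers = len(labels[0])
    for speaker in range(num_speakers):
        start = None  # first frame of the currently open segment, if any
        for t, row in enumerate(labels):
            active = bool(row[speaker])
            if active and start is None:
                start = t  # speaker just became active
            elif not active and start is not None:
                segments.append((speaker, start * frame_stride_s, t * frame_stride_s))
                start = None
        if start is not None:  # speaker still active at the end of the audio
            segments.append((speaker, start * frame_stride_s, len(labels) * frame_stride_s))
    return segments


# Toy input: 5 frames, 2 speakers.
toy_labels = [[1, 0], [1, 1], [0, 1], [0, 0], [1, 0]]
toy_segments = frames_to_segments(toy_labels)
```

With the model output, the call would be `frames_to_segments(labels.tolist())`.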

# License

The official license can be found [here](https://github.com/microsoft/UniSpeech/blob/main/LICENSE).