---
language: it
license: apache-2.0
widget:
- text: Il [MASK] ha chiesto revocarsi l'obbligo di pagamento
---

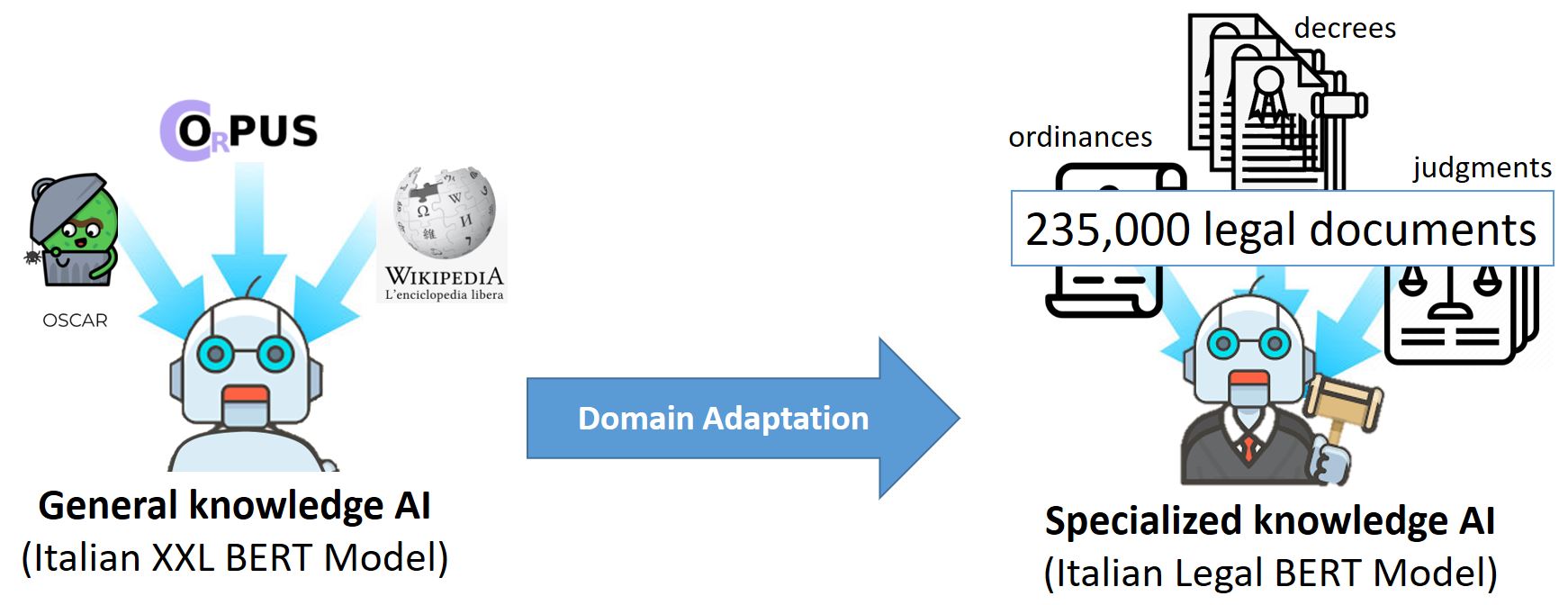

ITALIAN-LEGAL-BERT: A Pre-trained Transformer Language Model for Italian Law

ITALIAN-LEGAL-BERT is based on bert-base-italian-xxl-cased: it takes that general-purpose Italian BERT checkpoint and continues pre-training it on Italian civil law corpora. On domain-specific tasks it achieves better results than the general-purpose Italian BERT it starts from.
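As a rough illustration of how the masked-language-model widget above could be reproduced locally, here is a minimal sketch using the Hugging Face `transformers` fill-mask pipeline. The Hub identifier in `MODEL_ID` is an assumption (this card does not state it), and `check_masked` and `fill_mask` are hypothetical helper names introduced only for this example.

```python
from typing import List, Tuple

# Assumption: the Hub id below is not stated in this card; replace it with
# the identifier of the checkpoint you actually want to load.
MODEL_ID = "dlicari/Italian-Legal-BERT"
MASK_TOKEN = "[MASK]"  # mask token used in the widget example


def check_masked(text: str, mask_token: str = MASK_TOKEN) -> str:
    """Return the text unchanged if it contains exactly one mask token."""
    count = text.count(mask_token)
    if count != 1:
        raise ValueError(f"expected exactly one {mask_token}, found {count}")
    return text


def fill_mask(text: str, model_id: str = MODEL_ID, top_k: int = 5) -> List[Tuple[str, float]]:
    """Return the top_k (token, score) candidates for the masked position."""
    # Imported lazily so the helper above can be used without transformers.
    from transformers import pipeline

    fill = pipeline("fill-mask", model=model_id)
    predictions = fill(check_masked(text), top_k=top_k)
    return [(p["token_str"], p["score"]) for p in predictions]
```

Calling `fill_mask("Il [MASK] ha chiesto revocarsi l'obbligo di pagamento")` downloads the checkpoint on first use and returns candidate tokens with their scores. The actual predictions depend on the checkpoint, so none are shown here.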