---

language: it

license: apache-2.0

widget:

- text: "Il [MASK] ha chiesto revocarsi l'obbligo di pagamento"

---

ITALIAN-LEGAL-BERT: A pre-trained Transformer Language Model for Italian Law

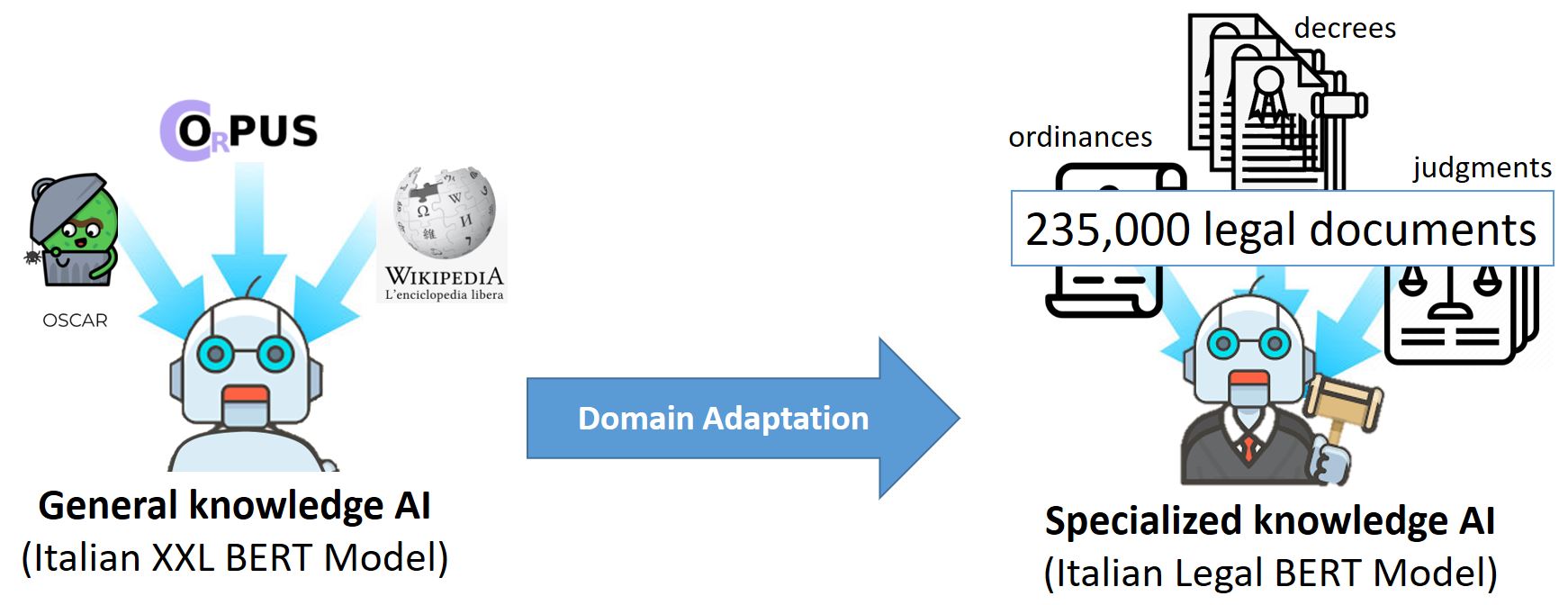

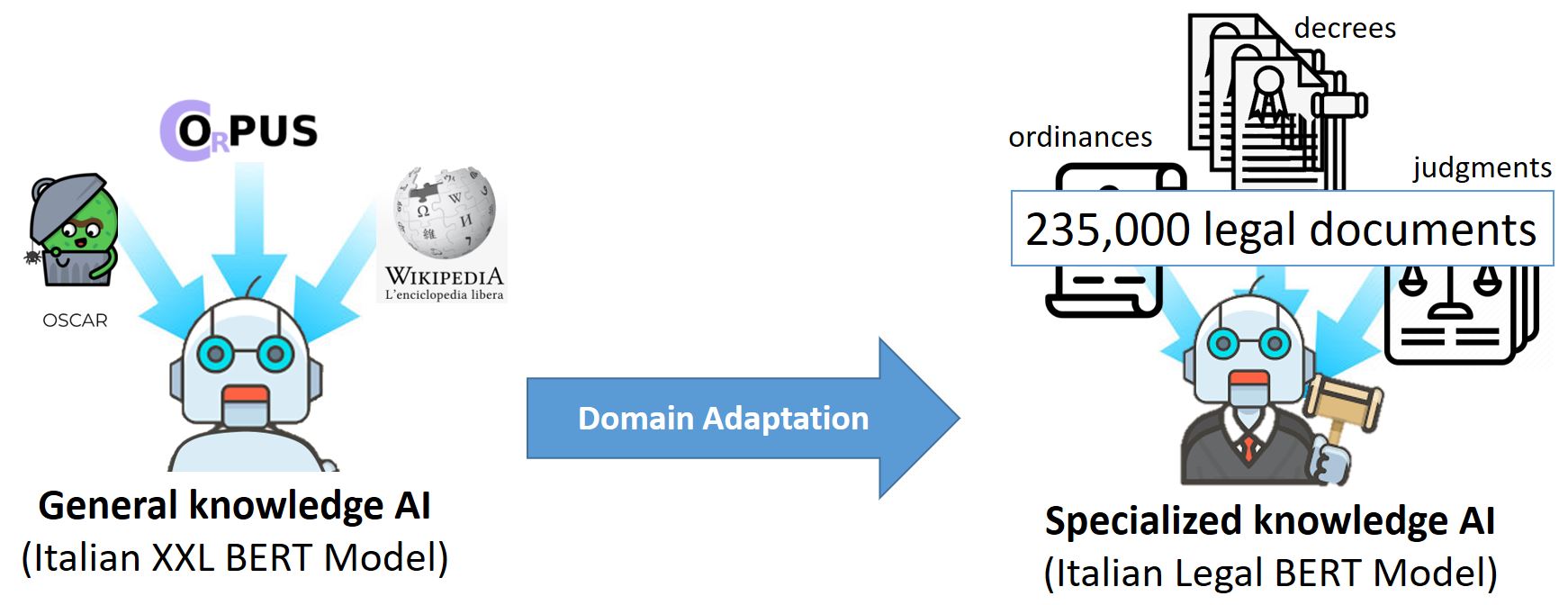

ITALIAN-LEGAL-BERT is based on bert-base-italian-xxl-cased, the Italian BERT model, with additional pre-training on Italian civil law corpora.

It achieves better results than the ‘general-purpose’ Italian BERT on several domain-specific tasks.

Training procedure

We initialized ITALIAN-LEGAL-BERT with ITALIAN XXL BERT and pre-trained it for an additional 4 epochs on 3.7 GB of text from the National Jurisprudential Archive using the Hugging Face PyTorch-Transformers library. We used the BERT architecture with a language modeling head on top, the AdamW optimizer, an initial learning rate of 5e-5 (with linear learning rate decay, ending at 2.525e-9), a sequence length of 512, a batch size of 10 (imposed by GPU capacity), and 8.4 million training steps, on a single V100 16 GB GPU.
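To make the reported schedule concrete, the following is a minimal sketch of a linear learning rate decay using the numbers above. It assumes no warmup, decay to exactly zero at the final step, and no gradient accumulation (so one training step equals one batch of 10 sequences); none of these details are stated in the description, and the function names are hypothetical. The sketch does not try to reproduce the reported end value of 2.525e-9, which is small but non-zero, possibly because the last logged step fell shortly before the end of the schedule.

```python
# Hyperparameters taken from the training description above.
INITIAL_LR = 5e-5
TOTAL_STEPS = 8_400_000
BATCH_SIZE = 10
EPOCHS = 4


def linear_decay_lr(step: int,
                    initial_lr: float = INITIAL_LR,
                    total_steps: int = TOTAL_STEPS) -> float:
    """Learning rate at `step` under linear decay to zero.

    Assumption: no warmup phase and a final rate of exactly zero.
    """
    if not 0 <= step <= total_steps:
        raise ValueError("step must lie in [0, total_steps]")
    return initial_lr * (total_steps - step) / total_steps


def sequences_seen(steps: int = TOTAL_STEPS,
                   batch_size: int = BATCH_SIZE) -> int:
    """Total sequences processed, assuming no gradient accumulation."""
    return steps * batch_size


if __name__ == "__main__":
    # Learning rate at the start, midpoint, and end of training.
    for step in (0, TOTAL_STEPS // 2, TOTAL_STEPS):
        print(f"step {step:>9,}: lr = {linear_decay_lr(step):.3e}")

    # Rough data-volume arithmetic implied by the reported numbers.
    total = sequences_seen()
    print(f"sequences processed in total: {total:,}")
    print(f"sequences per epoch (over {EPOCHS} epochs): {total // EPOCHS:,}")
```

Under these assumptions, the reported step count and batch size imply 84 million sequences of up to 512 tokens processed in total, or about 21 million per epoch.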