ColQwen2.5-3b-multilingual-v1.0: Multilingual Visual Retriever based on Qwen2.5-VL-3B-Instruct with ColBERT strategy

This is the base version, trained on 8xH100 80GB with per_device_batch_size=128 for 8 epochs.

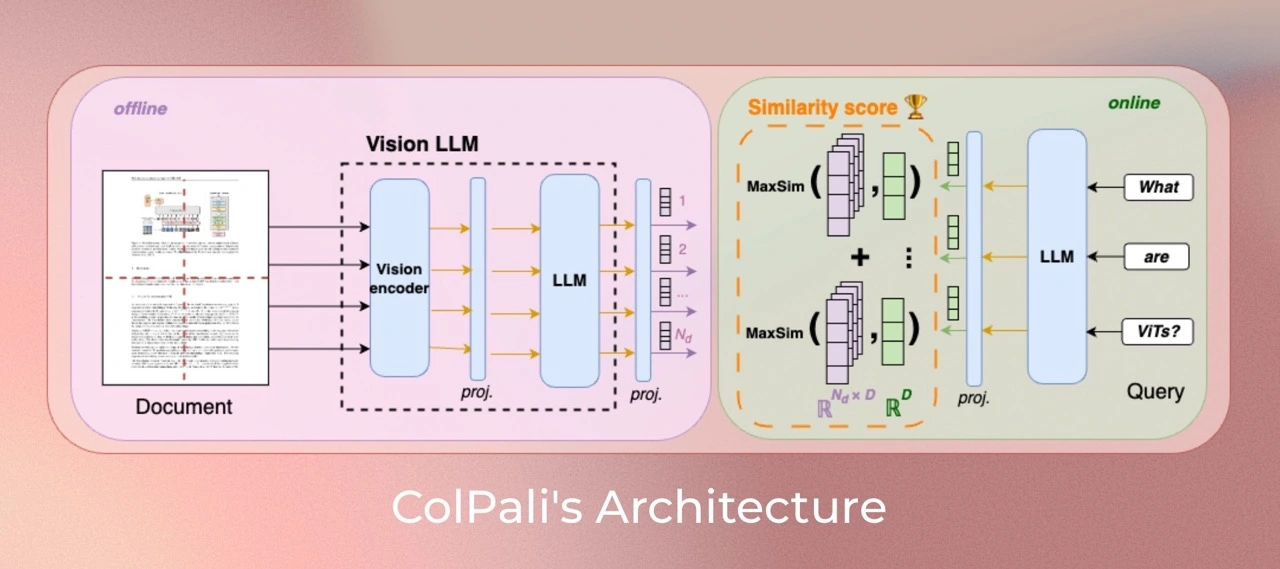

ColQwen is a model built on a novel architecture and training strategy that uses Vision Language Models (VLMs) to efficiently index documents from their visual features. It extends Qwen2.5-VL-3B to generate ColBERT-style multi-vector representations of text and images. It was introduced in the paper ColPali: Efficient Document Retrieval with Vision Language Models and first released in this repository.

Version specificity

This model accepts images at dynamic resolutions and does not resize them in a way that changes their aspect ratio, as ColPali does. The maximum resolution is set so that at most 768 image patches are created. Experiments show clear improvements with larger numbers of image patches, at the cost of higher memory requirements.
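To make the 768-patch cap concrete, here is a minimal sketch of how an image size could be fitted to a patch budget while keeping its aspect ratio. It assumes each patch covers 28x28 pixels, a value this card does not state, and the helper name `fit_to_patch_budget` is hypothetical. It is a simplified illustration, not the processor's actual resizing code.

```python
import math

PATCH_SIDE = 28    # ASSUMPTION: pixels per patch side; not stated in this card
MAX_PATCHES = 768  # upper bound on image patches stated above


def fit_to_patch_budget(width, height, patch_side=PATCH_SIDE, max_patches=MAX_PATCHES):
    """Return (new_width, new_height, n_patches) snapped to the patch grid."""
    # Snap each side to the nearest multiple of patch_side (at least one patch).
    w = max(patch_side, round(width / patch_side) * patch_side)
    h = max(patch_side, round(height / patch_side) * patch_side)
    if (w // patch_side) * (h // patch_side) > max_patches:
        # Shrink both sides by the same factor so the aspect ratio is kept,
        # then round down to the patch grid to stay within the budget.
        scale = math.sqrt(width * height / (max_patches * patch_side ** 2))
        w = max(patch_side, math.floor(width / scale / patch_side) * patch_side)
        h = max(patch_side, math.floor(height / scale / patch_side) * patch_side)
    return w, h, (w // patch_side) * (h // patch_side)


# A page scanned at 1240x1754 pixels is scaled down; a tiny image is left alone.
page_w, page_h, page_patches = fit_to_patch_budget(1240, 1754)
small_w, small_h, small_patches = fit_to_patch_budget(56, 56)
```

Under these assumptions the pixel budget is 768 * 28 * 28 = 602,112 pixels, so larger pages are downscaled while smaller ones keep their native size.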

This version is trained with colpali-engine==0.3.9.

Data

- German & English: Taken from the tsystems/vqa_de_en_batch1 dataset.

- Multilingual dataset: Taken from llamaindex/vdr-multilingual-train.

- Synthetic data: Taken from the openbmb/VisRAG-Ret-Train-Synthetic-data dataset.

- In-domain VQA dataset: Taken from the openbmb/VisRAG-Ret-Train-In-domain-data dataset.

- Colpali dataset: Taken from vidore/colpali_train_set.

Model Training

Parameters

We train the model using low-rank adapters (LoRA) with alpha=128 and r=128 on the transformer layers of the language model, as well as on the final randomly initialized projection layer, and use a paged_adamw_8bit optimizer.

We train on an 8xH100 GPU setup with distributed data parallelism (via accelerate), a learning rate of 2e-4 with linear decay and 1% warmup steps, and a per-device batch size of 128, in bfloat16 format.
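The learning-rate schedule described above can be sketched as a small function. This is an illustration only: it assumes the rate decays linearly to zero at the final step, and `total_steps` is a hypothetical value, since the card does not give the number of optimizer steps.

```python
def linear_warmup_decay_lr(step, total_steps, peak_lr=2e-4, warmup_fraction=0.01):
    """Learning rate at `step`: linear warmup to peak_lr, then linear decay.

    ASSUMPTION: the decay ends at zero on the last step.
    """
    warmup_steps = max(1, int(total_steps * warmup_fraction))
    if step < warmup_steps:
        # Ramp up from 0 to peak_lr over the first 1% of steps.
        return peak_lr * step / warmup_steps
    # Decay linearly from peak_lr (end of warmup) to 0 (end of training).
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


total_steps = 1000  # hypothetical; not taken from the actual training run
lr_start = linear_warmup_decay_lr(0, total_steps)
lr_peak = linear_warmup_decay_lr(10, total_steps)
lr_end = linear_warmup_decay_lr(total_steps, total_steps)
```

With 8 GPUs and a per-device batch size of 128, each optimizer step would see 8 * 128 = 1024 examples, assuming no gradient accumulation (the card does not mention any).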

Installation

pip install git+https://github.com/illuin-tech/colpali

pip install transformers==4.49.0

pip install flash-attn --no-build-isolation

Usage

import torch
from PIL import Image

from colpali_engine.models import ColQwen2_5, ColQwen2_5_Processor

model = ColQwen2_5.from_pretrained(
    "tsystems/colqwen2.5-3b-multilingual-v1.0",
    torch_dtype=torch.bfloat16,
    device_map="cuda:0",  # or "mps" if on Apple Silicon
).eval()
processor = ColQwen2_5_Processor.from_pretrained("tsystems/colqwen2.5-3b-multilingual-v1.0")

# Your inputs
images = [
    Image.new("RGB", (32, 32), color="white"),
    Image.new("RGB", (16, 16), color="black"),
]
queries = [
    "Is attention really all you need?",
    "What is the amount of bananas farmed in Salvador?",
]

# Process the inputs
batch_images = processor.process_images(images).to(model.device)
batch_queries = processor.process_queries(queries).to(model.device)

# Forward pass
with torch.no_grad():
    image_embeddings = model(**batch_images)
    query_embeddings = model(**batch_queries)

scores = processor.score_multi_vector(query_embeddings, image_embeddings)
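A possible next step is to turn the score matrix into a ranking. The sketch below assumes `scores` has one row per query and one column per image, so that `scores.tolist()` yields a nested list. The helper `top_k_per_query` is hypothetical and the example values are made up.

```python
def top_k_per_query(score_rows, k=1):
    """For each query row, return the indices of the k highest-scoring images."""
    ranked = []
    for row in score_rows:
        # Sort image indices by descending score and keep the first k.
        order = sorted(range(len(row)), key=lambda i: row[i], reverse=True)
        ranked.append(order[:k])
    return ranked


# Made-up scores for 2 queries x 2 images; in practice, pass scores.tolist().
example_scores = [[12.5, 3.1], [4.0, 9.7]]
best_matches = top_k_per_query(example_scores, k=1)
print(best_matches)  # [[0], [1]]
```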

Limitations

- Focus: The model primarily focuses on PDF-type documents and high-resource languages, potentially limiting its generalization to other document types or less-represented languages.

- Support: The model relies on multi-vector retrieval derived from the ColBERT late interaction mechanism, which may require engineering effort to adapt to widely used vector retrieval frameworks that lack native multi-vector support.
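To illustrate what late interaction asks of a retrieval framework, here is a minimal sketch of ColBERT-style MaxSim scoring in plain Python. It assumes the score is the sum, over query token vectors, of the maximum dot product with any page vector. The function name and toy vectors are hypothetical, and a real system would use batched tensor operations instead.

```python
def maxsim_score(query_vectors, page_vectors):
    """Late-interaction score: for each query vector, take its best dot
    product against all page vectors, then sum those maxima."""
    total = 0.0
    for q in query_vectors:
        best = max(sum(qi * pi for qi, pi in zip(q, p)) for p in page_vectors)
        total += best
    return total


# Toy 2-dimensional embeddings (made up for illustration).
query = [[1.0, 0.0], [0.0, 1.0]]
page_a = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # matches both query tokens
page_b = [[0.5, 0.5]]                          # partial match only

score_a = maxsim_score(query, page_a)
score_b = maxsim_score(query, page_b)
```

Because each page is a set of vectors and not a single one, a store that indexes only one vector per item would need an extra step, such as keeping all page vectors alongside the item and applying a function like this when reranking candidates.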

License

ColQwen2.5's vision language backbone model (Qwen2.5-VL) is released under the Apache 2.0 license. The adapters attached to the model are released under the MIT license.

Citation

If you use models from this organization in your research, please cite the original paper as follows:

@misc{faysse2024colpaliefficientdocumentretrieval,
  title={ColPali: Efficient Document Retrieval with Vision Language Models},
  author={Manuel Faysse and Hugues Sibille and Tony Wu and Bilel Omrani and Gautier Viaud and Céline Hudelot and Pierre Colombo},
  year={2024},
  eprint={2407.01449},
  archivePrefix={arXiv},
  primaryClass={cs.IR},
  url={https://arxiv.org/abs/2407.01449},
}

- Developed by: T-Systems International
