title: PUMP

emoji: 📚

colorFrom: yellow

colorTo: red

sdk: gradio

app_file: app.py

pinned: false

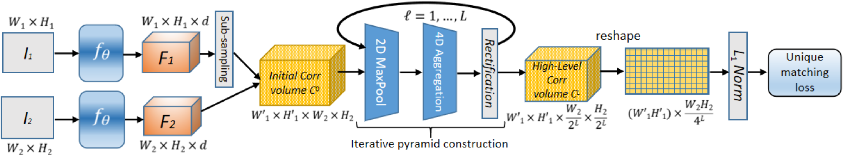

PUMP: pyramidal and uniqueness matching priors for unsupervised learning of local features

Official repository for the following paper:

@inproceedings{cvpr22_pump,

author = {Jerome Revaud and Vincent Leroy and Philippe Weinzaepfel and Boris Chidlovskii},

title = {PUMP: pyramidal and uniqueness matching priors for unsupervised learning of local features},

booktitle = {CVPR},

year = {2022},

}

License

Our code is released under the CC BY-NC-SA 4.0 License (see LICENSE for more details), available only for non-commercial use.

Requirements

- Python 3.8+ equipped with standard scientific packages and PyTorch / TorchVision:

tqdm >= 4
PIL >= 8.1.1
numpy >= 1.19
scipy >= 1.6
torch >= 1.10.0
torchvision >= 0.9.0
matplotlib >= 3.3.4

- the CUDA toolkit, to compile custom CUDA kernels:

cd core/cuda_deepm/
python setup.py install

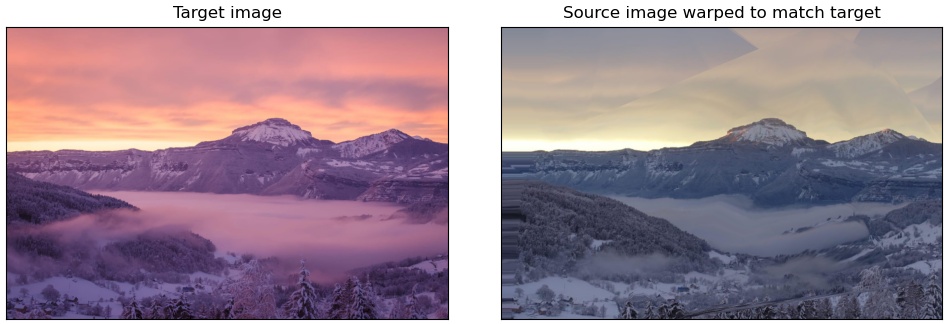

Warping Demo

python demo_warping.py

You should see the following result:

Test usage

We provide 4 variations of the pairwise matching code, named test_xxxscale_yyy.py:

- xxx: single-scale or multi-scale. Single-scale can cope with a scale difference of 0.75~1.33x at most. The multi-scale version can also be rotation-invariant if asked.

- yyy: recursive or not. Recursive is slower but provides denser/better outputs.

For most cases, you want to use test_multiscale.py:

python test_multiscale.py \
    --img1 path/to/img1 \
    --img2 path/to/img2 \
    --resize 600 \
    --post-filter \
    --output path/to/correspondences.npy

The --resize value is important, see below.

It outputs a numpy binary file with the field file_data['corres'] containing a list of correspondences.

The row format is [x1, y1, x2, y2, score, scale_rot_code].

Use core.functional.decode_scale_rot(code) --> (scale, angle_in_degrees) to decode the scale_rot_code.
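As an illustration of the row format above, here is a minimal sketch of reading the output file and keeping only confident matches. It assumes the file opens with `np.load` as an archive exposing a `corres` field, which is how the text above describes it. The helper name `load_correspondences`, the `min_score` threshold and the synthetic rows are hypothetical and only serve the example. Decoding the last column requires `core.functional.decode_scale_rot` from this repository, so it is left out here.

```python
import os
import tempfile

import numpy as np


def load_correspondences(path, min_score=0.0):
    """Return the rows [x1, y1, x2, y2, score, scale_rot_code] with score >= min_score."""
    # Assumption: the file is an archive-style numpy file with a 'corres' field.
    with np.load(path) as file_data:
        corres = np.asarray(file_data["corres"], dtype=np.float64)
    return corres[corres[:, 4] >= min_score]


# Synthetic stand-in for a real output file (made-up values, not actual results).
demo = np.array([
    [10.0, 20.0, 12.0, 21.0, 0.9, 0.0],
    [30.0, 40.0, 33.0, 44.0, 0.2, 0.0],
    [50.0, 60.0, 48.0, 61.0, 0.7, 0.0],
])
demo_path = os.path.join(tempfile.mkdtemp(), "correspondences.npy")
with open(demo_path, "wb") as f:
    np.savez(f, corres=demo)

kept = load_correspondences(demo_path, min_score=0.5)
pts1, pts2 = kept[:, 0:2], kept[:, 2:4]   # pixel positions in img1 and img2
scores = kept[:, 4]                       # matching confidence
codes = kept[:, 5].astype(int)            # input for decode_scale_rot
print(kept.shape)
```

With a real output file, replace `demo_path` with the path given to `--output`.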

Optional parameters:

- Prior image resize: --resize SIZE

This is a very important parameter. In general, the bigger, the better (and slower). Be wary that the memory footprint explodes with the image size. Here is the table of maximum --resize values depending on the image aspect ratio:

| Aspect ratio | Example image sizes | GPU memory | resize |
|---|---|---|---|
| 4/3 | 800x600, 1024x768 | 16 GB | 600 |
| 4/3 | 800x600, 1024x768 | 22 GB | 680 |
| 4/3 | 800x600, 1024x768 | 32 GB | 760 |
| 1/1 | 1024x1024 | 16 GB | 540 |
| 1/1 | 1024x1024 | 22 GB | 600 |
| 1/1 | 1024x1024 | 32 GB | 660 |

(Formula: memory_in_bytes = (W1*H1*W2*H2)*1.333*2/16)

- Base descriptor: --desc {PUMP, PUMP-stytrf}

We provide the PUMP descriptor from our paper, as well as PUMP-stytrf (with additional style-transfer training). Defaults to PUMP-stytrf.

- Scale: --max-scale SCALE

By default, this value is set to 4, meaning that PUMP is at least invariant to a 4x zoom-in or zoom-out. In practically all cases, this is more than enough. If you know that the scale change between your images is smaller, you can reduce this value to speed up computation.

- Rotation: --max-rot DEGREES

By default, PUMP is not rotation-invariant. To enforce rotation invariance, you need to specify the amount of rotation it can tolerate. The more, the slower. Maximum value is 180. If you know that images are not vertically oriented, you can just use 90 degrees.

- Post-filter: --post-filter "option1=val1,option2=val2,..."

When activated, post-filtering removes spurious correspondences based on their local consistency. See python post_filter.py --help for details about the possible options. It is geometry-agnostic and naturally supports dynamic scenes. If you want to output pixel-dense correspondences (a.k.a. optical flow), you need to post-process the correspondences with --post-filter densify=True. See demo_warping.py for an example.
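To get a feel for how quickly memory grows with --resize, the formula given for the resize table can be evaluated directly. The sketch below is only an estimate under one assumption that is not stated explicitly in this README: that --resize scales the longer image side to the given value while preserving the aspect ratio. The function names are hypothetical.

```python
def resized_shape(width, height, resize):
    """Scale (width, height) so that the longer side equals `resize`.

    Assumption: this mirrors what --resize does; it is not taken from the code.
    """
    scale = resize / max(width, height)
    return round(width * scale), round(height * scale)


def pump_memory_bytes(w1, h1, w2, h2):
    """Memory estimate from the README formula, in bytes."""
    return (w1 * h1 * w2 * h2) * 1.333 * 2 / 16


# Two 1024x768 images matched with --resize 600
w, h = resized_shape(1024, 768, 600)
mem = pump_memory_bytes(w, h, w, h)
print(f"{w}x{h}: {mem / 1e9:.1f} GB")  # 600x450: 12.1 GB
```

Because the estimate depends on the product of both image areas, raising the resize value by about 10% increases the footprint by roughly 50%.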

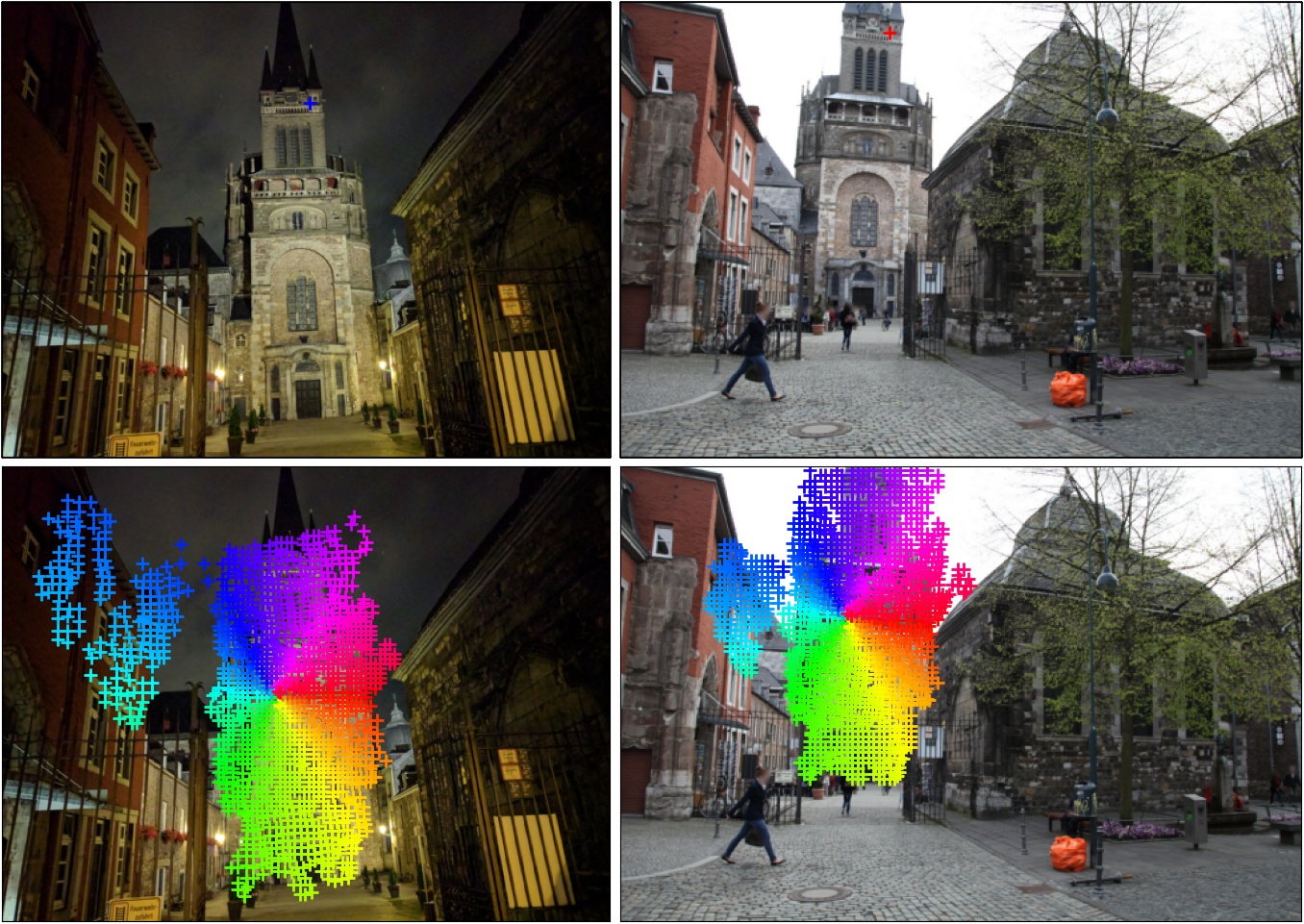

Visualization of results:

python -m tools.viz --img1 path/to/img1 --img2 path/to/img2 --corres path/to/correspondences.npy

Reproducing results on the ETH-3D dataset

Download the ETH-3D dataset from their website and extract it in datasets/eth3d/

Run the code

python run_ETH3D.py

You should get results slightly better than reported in the paper.

Training PUMP from scratch

Download the training data with

bash download_training_data.sh

This consists of web images from this paper for the self-supervised loss (as in R2D2) and image pairs from the SfM120k dataset with automatically extracted pixel correspondences. Note that correspondences are not used in the loss, since the loss is unsupervised. They are only necessary so that random cropping produces pairs of crops at least partially aligned. Therefore, correspondences do not need to be 100% correct or even pixel-precise.
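To illustrate why approximate correspondences are enough for cropping, here is a minimal sketch that picks one correspondence and places a random crop around each of its two endpoints, so that the crops are guaranteed to share at least that scene point. This is not the repository's cropping code. The function `aligned_crops`, its parameters and the sample values are hypothetical.

```python
import random


def aligned_crops(corres, size1, size2, crop=64, rng=random):
    """Return two crop boxes (left, top, right, bottom) sharing one matched point.

    corres: list of (x1, y1, x2, y2) tuples; size1, size2: (width, height) of
    each image, both assumed to be at least `crop` pixels in each dimension.
    """
    x1, y1, x2, y2 = rng.choice(corres)

    def box_around(x, y, size):
        width, height = size
        # Random offset so the matched point can land anywhere inside the crop.
        left = int(x) - rng.randrange(crop)
        top = int(y) - rng.randrange(crop)
        # Clamp so the crop stays inside the image; the point remains inside.
        left = min(max(left, 0), width - crop)
        top = min(max(top, 0), height - crop)
        return left, top, left + crop, top + crop

    return box_around(x1, y1, size1), box_around(x2, y2, size2), (x1, y1, x2, y2)


# Made-up correspondences between two 200x128 images
sample_corres = [(5.0, 7.0, 190.0, 120.0), (100.5, 50.2, 90.0, 60.0)]
box1, box2, match = aligned_crops(sample_corres, (200, 128), (200, 128),
                                  rng=random.Random(0))
print(box1, box2, match)
```

If the chosen correspondence is off by a few pixels, the two crops still overlap in content, which is all the unsupervised loss needs.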

Run

python train.py --save-path <output_dir>/

Note that the training code is quite rudimentary (it only supports nn.DataParallel, has no support for DistributedDataParallel at the moment, and has no validation phase either).

Move and rename your final checkpoint to checkpoints/NAME.pt and test it with

python test_multiscale.py ... --desc NAME
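The move-and-rename step can also be scripted. The sketch below is one possible way to do it, not part of the repository: the helper `install_checkpoint` and the file name `last_epoch.pt` are hypothetical, since the name of the checkpoint written by train.py is not given here. The demo runs in a temporary directory with an empty placeholder file.

```python
import shutil
import tempfile
from pathlib import Path


def install_checkpoint(trained_path, name, checkpoints_dir="checkpoints"):
    """Move a trained checkpoint to <checkpoints_dir>/<name>.pt and return the new path."""
    dest_dir = Path(checkpoints_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / f"{name}.pt"
    shutil.move(str(trained_path), str(dest))
    return dest


# Demo with an empty placeholder file standing in for a real checkpoint
work = Path(tempfile.mkdtemp())
placeholder = work / "output_dir" / "last_epoch.pt"  # hypothetical file name
placeholder.parent.mkdir()
placeholder.write_bytes(b"")

installed = install_checkpoint(placeholder, "NAME", checkpoints_dir=work / "checkpoints")
print(installed.name)  # NAME.pt
```

After this step, the value passed to --desc is the file name without the .pt extension.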