Model Card for Minerva-7B-instruct-v1.0

Minerva is the first family of LLMs pretrained from scratch on Italian, developed by Sapienza NLP in the context of the Future Artificial Intelligence Research (FAIR) project, in collaboration with CINECA and with additional contributions from Babelscape and the CREATIVE PRIN Project. Notably, the Minerva models are truly open (data and model) Italian-English LLMs, with approximately half of the pretraining data consisting of Italian text.

Description

This is the model card for Minerva-7B-instruct-v1.0, a 7 billion parameter model trained on almost 2.5 trillion tokens (1.14 trillion in Italian, 1.14 trillion in English and 200 billion in code).
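As a quick sanity check on these figures, the per-source token counts can be summed and turned into shares. This is a small sketch that uses only the numbers quoted in this card; the variable names are illustrative.

```python
# Token counts quoted in this card, in trillions of tokens.
tokens_trillions = {"italian": 1.14, "english": 1.14, "code": 0.20}

total = sum(tokens_trillions.values())
shares = {name: count / total for name, count in tokens_trillions.items()}

print(f"total: {total:.2f}T tokens")
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
```

Italian and English each account for a little under half of the mix, with code making up the remainder.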

This model is part of the Minerva LLM family:

- Minerva-350M-base-v1.0

- Minerva-1B-base-v1.0

- Minerva-3B-base-v1.0

- Minerva-7B-base-v1.0

- Minerva-7B-instruct-v1.0

🚨⚠️🚨 Bias, Risks, and Limitations 🚨⚠️🚨

This section identifies foreseeable harms and misunderstandings.

This is a chat foundation model, subject to model alignment and safety risk mitigation strategies. However, the model may still:

- Overrepresent some viewpoints and underrepresent others

- Contain stereotypes

- Contain personal information

- Generate:

  - Racist and sexist content

  - Hateful, abusive, or violent language

  - Discriminatory or prejudicial language

  - Content that may not be appropriate for all settings, including sexual content

- Make errors, including producing incorrect information or historical facts as if they were factual

- Generate irrelevant or repetitive outputs

We are aware of the biases and potentially problematic or toxic content that current pretrained large language models exhibit: more specifically, as probabilistic models of (Italian and English) languages, they reflect and amplify the biases of their training data. For more information about this issue, please refer to our survey:

How to use Minerva with Hugging Face transformers

```python
import transformers
import torch

model_id = "sapienzanlp/Minerva-7B-instruct-v1.0"

# Initialize the pipeline.
pipeline = transformers.pipeline(
    model=model_id,
    model_kwargs={"torch_dtype": torch.bfloat16},
    device_map="auto",
)

# Input text for the model.
input_conv = [{"role": "user", "content": "Qual è la capitale dell'Italia?"}]

# Compute the outputs.
output = pipeline(
    input_conv,
    max_new_tokens=128,
)
output
```

```
[{'generated_text': [{'role': 'user', 'content': "Qual è la capitale dell'Italia?"}, {'role': 'assistant', 'content': "La capitale dell'Italia è Roma."}]}]
```
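The pipeline returns the whole conversation, with the model's reply appended as the last message. Below is a minimal sketch of pulling out just the reply text. It uses the output shown above as a literal so that it runs without loading the model; `last_assistant_reply` is a hypothetical helper, not part of transformers.

```python
def last_assistant_reply(pipeline_output):
    """Return the content of the final assistant message in a chat pipeline output."""
    conversation = pipeline_output[0]["generated_text"]
    for message in reversed(conversation):
        if message["role"] == "assistant":
            return message["content"]
    raise ValueError("no assistant message found in the conversation")


# The output printed above, copied as a literal for illustration.
example_output = [{"generated_text": [
    {"role": "user", "content": "Qual è la capitale dell'Italia?"},
    {"role": "assistant", "content": "La capitale dell'Italia è Roma."},
]}]

reply = last_assistant_reply(example_output)
print(reply)
```

To continue the conversation, the returned message list can be extended with a new user turn and passed back to the pipeline.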

Model Architecture

Minerva-7B-base-v1.0 is a Transformer model based on the Mistral architecture. Please look at the configuration file for a detailed breakdown of the hyperparameters we chose for this model.

The Minerva LLM family is composed of:

| Model Name | Tokens | Layers | Hidden Size | Attention Heads | KV Heads | Sliding Window | Max Context Length |
|---|---|---|---|---|---|---|---|
| Minerva-350M-base-v1.0 | 70B (35B it + 35B en) | 16 | 1152 | 16 | 4 | 2048 | 16384 |
| Minerva-1B-base-v1.0 | 200B (100B it + 100B en) | 16 | 2048 | 16 | 4 | 2048 | 16384 |
| Minerva-3B-base-v1.0 | 660B (330B it + 330B en) | 32 | 2560 | 32 | 8 | 2048 | 16384 |
| Minerva-7B-base-v1.0 | 2.48T (1.14T it + 1.14T en + 200B code) | 32 | 4096 | 32 | 8 | None | 4096 |
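The attention columns in the table determine how much key/value cache each token costs at inference time. The sketch below assumes the usual Mistral-style layout, where the head dimension equals the hidden size divided by the number of attention heads, and a bfloat16 cache (2 bytes per value). Both are assumptions, so check the configuration file for the actual values.

```python
from dataclasses import dataclass


@dataclass
class ArchRow:
    name: str
    layers: int
    hidden_size: int
    attention_heads: int
    kv_heads: int

    @property
    def head_dim(self) -> int:
        # Assumption: head_dim = hidden_size / attention_heads.
        return self.hidden_size // self.attention_heads

    def kv_cache_bytes_per_token(self, bytes_per_value: int = 2) -> int:
        # Keys and values (factor 2) for every layer and every KV head.
        return 2 * self.layers * self.kv_heads * self.head_dim * bytes_per_value


# Rows copied from the table above.
rows = [
    ArchRow("Minerva-350M-base-v1.0", 16, 1152, 16, 4),
    ArchRow("Minerva-1B-base-v1.0", 16, 2048, 16, 4),
    ArchRow("Minerva-3B-base-v1.0", 32, 2560, 32, 8),
    ArchRow("Minerva-7B-base-v1.0", 32, 4096, 32, 8),
]

for row in rows:
    kib = row.kv_cache_bytes_per_token() / 1024
    print(f"{row.name}: head_dim={row.head_dim}, KV cache ~ {kib:.0f} KiB per token")
```

Because every model uses fewer KV heads than attention heads (grouped-query attention), the cache is a quarter of what full multi-head attention would need under the same assumptions.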

Model Training

Minerva-7B-base-v1.0 was trained using llm-foundry 0.8.0 from MosaicML. The hyperparameters used are the following:

| Model Name | Optimizer | lr | betas | eps | weight decay | Scheduler | Warmup Steps | Batch Size (Tokens) | Total Steps |
|---|---|---|---|---|---|---|---|---|---|
| Minerva-350M-base-v1.0 | Decoupled AdamW | 2e-4 | (0.9, 0.95) | 1e-8 | 0.0 | Cosine | 2% | 4M | 16,690 |
| Minerva-1B-base-v1.0 | Decoupled AdamW | 2e-4 | (0.9, 0.95) | 1e-8 | 0.0 | Cosine | 2% | 4M | 47,684 |
| Minerva-3B-base-v1.0 | Decoupled AdamW | 2e-4 | (0.9, 0.95) | 1e-8 | 0.0 | Cosine | 2% | 4M | 157,357 |
| Minerva-7B-base-v1.0 | AdamW | 3e-4 | (0.9, 0.95) | 1e-5 | 0.1 | Cosine | 2000 | 4M | 591,558 |
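The total step counts line up with the token budgets in the architecture table if "4M" is read as 4 × 2^20 = 4,194,304 tokens per batch. That reading is an assumption rather than something stated in the training configuration; the sketch below simply checks that steps times batch size reproduces the quoted budgets.

```python
BATCH_TOKENS = 4 * 2**20  # assumption: "4M" means 4,194,304 tokens per step

# (total steps, token budget quoted in this card)
runs = {
    "Minerva-350M-base-v1.0": (16_690, 70e9),
    "Minerva-1B-base-v1.0": (47_684, 200e9),
    "Minerva-3B-base-v1.0": (157_357, 660e9),
    "Minerva-7B-base-v1.0": (591_558, 2.48e12),
}

implied_tokens = {name: steps * BATCH_TOKENS for name, (steps, _) in runs.items()}

for name, (steps, quoted) in runs.items():
    implied = implied_tokens[name]
    print(f"{name}: {implied / 1e9:,.1f}B implied vs {quoted / 1e9:,.0f}B quoted")
```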

SFT Training

The SFT model was trained using Llama-Factory. The data mix was the following:

| Dataset | Source | Code | English | Italian |
|---|---|---|---|---|
| Glaive-code-assistant | Link | 100,000 | 0 | 0 |
| Alpaca-python | Link | 20,000 | 0 | 0 |
| Alpaca-cleaned | Link | 0 | 50,000 | 0 |
| Databricks-dolly-15k | Link | 0 | 15,011 | 0 |
| No-robots | Link | 0 | 9,499 | 0 |
| OASST2 | Link | 0 | 29,000 | 528 |
| WizardLM | Link | 0 | 29,810 | 0 |
| LIMA | Link | 0 | 1,000 | 0 |
| OPENORCA | Link | 0 | 30,000 | 0 |
| Ultrachat | Link | 0 | 50,000 | 0 |
| MagpieMT | Link | 0 | 30,000 | 0 |
| Tulu-V2-Science | Link | 0 | 7,000 | 0 |
| Aya_datasets | Link | 0 | 3,944 | 738 |
| Tower-blocks_it | Link | 0 | 0 | 7,276 |
| Bactrian-X | Link | 0 | 0 | 67,000 |
| Magpie (Translated by us) | Link | 0 | 0 | 59,070 |
| Everyday-conversations (Translated by us) | Link | 0 | 0 | 2,260 |
| alpaca-gpt4-it | Link | 0 | 0 | 15,000 |
| capybara-claude-15k-ita | Link | 0 | 0 | 15,000 |
| Wildchat | Link | 0 | 0 | 5,000 |
| GPT4_INST | Link | 0 | 0 | 10,000 |
| Italian Safety Instructions | - | 0 | 0 | 21,426 |
| Italian Conversations | - | 0 | 0 | 4,843 |
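To see the overall balance of the mix, the columns can be totalled. The sketch below copies the rows from the table and assumes the entries are example counts, which the table does not state explicitly.

```python
# (dataset, code, english, italian) rows copied from the table above.
sft_mix = [
    ("Glaive-code-assistant", 100_000, 0, 0),
    ("Alpaca-python", 20_000, 0, 0),
    ("Alpaca-cleaned", 0, 50_000, 0),
    ("Databricks-dolly-15k", 0, 15_011, 0),
    ("No-robots", 0, 9_499, 0),
    ("OASST2", 0, 29_000, 528),
    ("WizardLM", 0, 29_810, 0),
    ("LIMA", 0, 1_000, 0),
    ("OPENORCA", 0, 30_000, 0),
    ("Ultrachat", 0, 50_000, 0),
    ("MagpieMT", 0, 30_000, 0),
    ("Tulu-V2-Science", 0, 7_000, 0),
    ("Aya_datasets", 0, 3_944, 738),
    ("Tower-blocks_it", 0, 0, 7_276),
    ("Bactrian-X", 0, 0, 67_000),
    ("Magpie (Translated by us)", 0, 0, 59_070),
    ("Everyday-conversations (Translated by us)", 0, 0, 2_260),
    ("alpaca-gpt4-it", 0, 0, 15_000),
    ("capybara-claude-15k-ita", 0, 0, 15_000),
    ("Wildchat", 0, 0, 5_000),
    ("GPT4_INST", 0, 0, 10_000),
    ("Italian Safety Instructions", 0, 0, 21_426),
    ("Italian Conversations", 0, 0, 4_843),
]

totals = {
    "code": sum(row[1] for row in sft_mix),
    "english": sum(row[2] for row in sft_mix),
    "italian": sum(row[3] for row in sft_mix),
}
grand_total = sum(totals.values())

for name, count in totals.items():
    print(f"{name}: {count:,} ({count / grand_total:.1%})")
```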

For more details, please check our tech page.

Online DPO Training

This model card is for our DPO model. Direct Preference Optimization (DPO) is a method that refines models on preference feedback, similar to Reinforcement Learning from Human Feedback (RLHF), but without the complexity of reinforcement learning. Online DPO extends this by generating new completions during training and ranking them with a judge, so the model is continuously refined on fresh feedback. To train this model, we used the Hugging Face TRL library and Online DPO, with the Skywork/Skywork-Reward-Llama-3.1-8B-v0.2 model as the judge to evaluate and guide optimization. For this stage we used only the prompts from HuggingFaceH4/ultrafeedback_binarized (English), efederici/evol-dpo-ita (Italian) and Babelscape/ALERT translated to Italian, with additional manually curated data for safety.
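To make the idea concrete, here is a minimal sketch of one Online DPO step in plain Python. Two sampled completions are scored by a judge, the higher-scoring one becomes the chosen response, and the standard DPO loss is computed from sequence log-probabilities under the policy and a frozen reference model. The scores, log-probabilities, and `beta` value are made-up illustration values, and the helper names are hypothetical; this is not the TRL implementation or the configuration used for Minerva.

```python
import math


def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Standard DPO loss for one preference pair, given sequence log-probabilities."""
    # Implicit rewards: scaled log-ratio of the policy to the reference model.
    chosen_reward = beta * (policy_chosen - ref_chosen)
    rejected_reward = beta * (policy_rejected - ref_rejected)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)) written as log(1 + exp(-margin)).
    return math.log1p(math.exp(-margin))


def pick_pair(completions, judge_scores):
    """Online step: rank two sampled completions by judge score."""
    ranked = sorted(zip(judge_scores, completions), key=lambda pair: pair[0], reverse=True)
    return ranked[0][1], ranked[1][1]  # (chosen, rejected)


# Illustration values only: two completions for one prompt and their judge scores.
chosen, rejected = pick_pair(["Roma.", "Milano."], [4.2, -1.3])

# Hypothetical log-probabilities of each completion under the policy and the reference.
loss = dpo_loss(policy_chosen=-12.0, policy_rejected=-15.0,
                ref_chosen=-13.0, ref_rejected=-14.0)
print(chosen, rejected, round(loss, 4))
```

When the policy and the reference agree exactly, the margin is zero and the loss equals log 2; the loss falls as the policy assigns relatively more probability to the chosen response.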

For more details, please check our tech page.

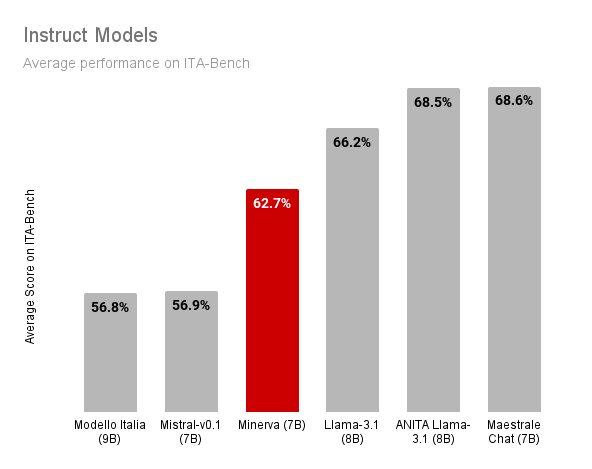

Model Evaluation

For Minerva's evaluation process, we utilized ITA-Bench, a new evaluation suite to test the capabilities of Italian-speaking models. ITA-Bench is a collection of 18 benchmarks that assess the performance of language models on various tasks, including scientific knowledge, commonsense reasoning, and mathematical problem-solving.


Tokenizer Fertility

Tokenizer fertility measures the average number of tokens produced per tokenized word. A tokenizer with high fertility in a particular language typically segments words in that language extensively. Fertility is closely tied to the model's inference speed in that language: higher values mean longer token sequences to generate for the same text, and thus slower inference.
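The definition above can be sketched in a few lines. The example assumes words are delimited by whitespace, which this card does not specify, and uses a hypothetical toy tokenizer so that it runs without downloading anything.

```python
def fertility(texts, tokenize):
    """Average number of tokens per whitespace-separated word."""
    n_words = sum(len(text.split()) for text in texts)
    n_tokens = sum(len(tokenize(text)) for text in texts)
    return n_tokens / n_words


def toy_tokenize(text, max_len=4):
    """Hypothetical tokenizer: splits each word into chunks of at most max_len characters."""
    return [
        word[i:i + max_len]
        for word in text.split()
        for i in range(0, len(word), max_len)
    ]


sample = ["la capitale dell'Italia"]
print(fertility(sample, toy_tokenize))
```

To measure a real tokenizer, a callable such as the `tokenize` method of a Hugging Face tokenizer could be passed in place of `toy_tokenize`.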

Fertility computed over a sample of Cultura X (CX) data and Wikipedia (Wp):

| Model | Voc. Size | Fertility IT (CX) | Fertility EN (CX) | Fertility IT (Wp) | Fertility EN (Wp) |
|---|---|---|---|---|---|
| Mistral-7B-v0.1 | 32000 | 1.87 | 1.32 | 2.05 | 1.57 |
| gemma-7b | 256000 | 1.42 | 1.18 | 1.56 | 1.34 |
| Minerva-3B-base-v1.0 | 32768 | 1.39 | 1.32 | 1.66 | 1.59 |
| Minerva-7B-base-v1.0 | 51200 | 1.32 | 1.26 | 1.56 | 1.51 |
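Since fertility scales the number of tokens needed for a given text, the ratio of two fertility values gives a rough estimate of relative sequence length. The sketch below uses the Italian CulturaX column from the table; treating the ratio as a proxy for generation cost is a simplification that ignores differences in model size and implementation.

```python
# Fertility on Italian CulturaX text, copied from the table above.
fertility_it_cx = {
    "Mistral-7B-v0.1": 1.87,
    "gemma-7b": 1.42,
    "Minerva-3B-base-v1.0": 1.39,
    "Minerva-7B-base-v1.0": 1.32,
}

baseline = fertility_it_cx["Minerva-7B-base-v1.0"]
relative_length = {name: value / baseline for name, value in fertility_it_cx.items()}

for name, ratio in relative_length.items():
    print(f"{name}: {ratio:.2f}x the tokens of Minerva-7B for the same Italian text")
```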

The Sapienza NLP Team

🧭 Project Lead and Coordination

- Roberto Navigli: project lead and coordination; model analysis, evaluation and selection, safety and guardrailing, conversations.

🤖 Model Development

- Edoardo Barba: pre-training, post-training, data analysis, prompt engineering.

- Simone Conia: pre-training, post-training, evaluation, model and data analysis.

- Pere-Lluís Huguet Cabot: data processing, filtering, and deduplication, preference modeling.

- Luca Moroni: data analysis, evaluation, post-training.

- Riccardo Orlando: pre-training process and data processing.

👮 Safety and Guardrailing

- Stefan Bejgu: safety and guardrailing.

- Federico Martelli: synthetic prompt generation, model and safety analysis.

- Ciro Porcaro: additional safety prompts.

- Alessandro Scirè: safety and guardrailing.

- Simone Stirpe: additional safety prompts.

- Simone Tedeschi: English dataset for safety evaluation.

Special thanks for their support

- Giuseppe Fiameni, Nvidia

- Sergio Orlandini, CINECA

Acknowledgments

This work was funded by the PNRR MUR project PE0000013-FAIR and the CREATIVE PRIN project, which is funded by the MUR Progetti di Rilevante Interesse Nazionale programme (PRIN 2020). We acknowledge the CINECA award "IscB_medit" under the ISCRA initiative for the availability of high-performance computing resources and support.
