MedFalcon v2.1a 40b LoRA - Step 4500

Model Description

This is a model checkpoint release at 4500 steps. For evaluation use only! Limitations:

- LoRA output will be more concise than that of the base model

- Due to the size of the LoRA, some of falcon-40b's base knowledge may be overwritten

- Due to the size of the LoRA, loading falcon-40b with it applied may require more hardware

Architecture

`nmitchko/medfalconv2-1a-40b-lora` is a large language model LoRA specifically fine-tuned for medical domain tasks.

It is based on Falcon-40b, which has 40 billion parameters.
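As a rough illustration of why the bit width matters for a 40-billion-parameter base model, the sketch below estimates the memory needed just to hold the weights at 16, 8, and 4 bits per parameter. It is back-of-the-envelope arithmetic under loudly stated assumptions: the parameter count is rounded to 40 billion, and activations, the KV cache, quantization constants, the LoRA weights, and framework overhead are all ignored, so real requirements are higher.

```python
# Rough weight-only memory estimate for a 40B-parameter model.
# Assumptions: every parameter is stored at the same bit width; activations,
# KV cache, quantization constants and LoRA weights are NOT included.

NUM_PARAMS = 40_000_000_000  # "40 billion parameters", rounded


def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Return the storage needed for the weights, in gigabytes (10**9 bytes)."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit: ~{weight_memory_gb(NUM_PARAMS, bits):.0f} GB")
```

Under these assumptions the weights alone come to roughly 80 GB at 16 bits, 40 GB at 8 bits, and 20 GB at 4 bits.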

The primary goal of this model is to improve question-answering and medical dialogue tasks. It was trained using LoRA, specifically QLoRA, to reduce its memory footprint.

See Training Parameters for more info. This LoRA supports 4-bit and 8-bit modes.
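To give an intuition for what a 4-bit mode does, here is a simplified blockwise absmax quantizer: each block of weights is divided by its largest absolute value and rounded to a signed 4-bit integer in [-7, 7], keeping one float scale per block. This is an illustrative stand-in, not the NF4 data type or double quantization that bitsandbytes and QLoRA actually use; the block size of 4 and the sample weights are arbitrary choices for the example.

```python
from typing import List, Tuple

BLOCK_SIZE = 4  # illustrative only; real implementations use much larger blocks
QMAX = 7        # symmetric signed 4-bit range: -7..7


def quantize_blockwise(weights: List[float], block_size: int = BLOCK_SIZE) -> Tuple[List[int], List[float]]:
    """Quantize weights to signed 4-bit integer codes with one absmax scale per block."""
    codes: List[int] = []
    scales: List[float] = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        absmax = max(abs(w) for w in block)
        # Guard against all-zero blocks to avoid division by zero
        scale = absmax / QMAX if absmax > 0 else 1.0
        scales.append(scale)
        codes.extend(round(w / scale) for w in block)
    return codes, scales


def dequantize_blockwise(codes: List[int], scales: List[float], block_size: int = BLOCK_SIZE) -> List[float]:
    """Map the 4-bit codes back to approximate float weights."""
    return [code * scales[i // block_size] for i, code in enumerate(codes)]


weights = [0.12, -0.50, 0.33, 0.07, 1.40, -0.02, 0.90, -1.10]
codes, scales = quantize_blockwise(weights)
restored = dequantize_blockwise(codes, scales)
print(codes)
print([round(w, 3) for w in restored])
```

Each weight is stored as a 4-bit code plus a share of one float scale per block, which is where the roughly 4x saving over 16-bit storage comes from; the rounding error per weight is at most half of its block's scale.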

Requirements

- bitsandbytes>=0.39.0

- peft

- transformers

- accelerate (needed for `device_map="auto"`)

Steps to load this model:

- Load base model using transformers

- Apply LoRA using peft
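Conceptually, applying a LoRA leaves the base weight matrix W frozen and adds a low-rank update: for an input x the adapted layer computes W x + (alpha / r) B A x, where A is r x d_in and B is d_out x r. The pure-Python sketch below uses tiny hypothetical matrices to show that this gives the same result as using the merged weight W + (alpha / r) B A. It illustrates the math only; the helper names are hypothetical, and peft performs the module wrapping for you.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, x: Vector) -> Vector:
    """Matrix-vector product."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Matrix-matrix product."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector, alpha: float, r: int) -> Vector:
    """Frozen base output plus the scaled low-rank update (alpha / r) * B(Ax)."""
    scale = alpha / r
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    return [y + scale * u for y, u in zip(base, update)]


def merge_weights(w: Matrix, a: Matrix, b: Matrix, alpha: float, r: int) -> Matrix:
    """Fold the adapter into the base weights: W + (alpha / r) * B @ A."""
    scale = alpha / r
    delta = matmul(b, a)
    return [[wij + scale * dij for wij, dij in zip(wrow, drow)] for wrow, drow in zip(w, delta)]


# Hypothetical 3x3 layer with a rank-1 adapter (d_in = d_out = 3, r = 1)
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
A = [[0.5, -0.5, 1.0]]      # r x d_in
B = [[1.0], [0.0], [2.0]]   # d_out x r
x = [1.0, 2.0, 3.0]

adapted = lora_forward(W, A, B, x, alpha=2.0, r=1)
merged = matvec(merge_weights(W, A, B, alpha=2.0, r=1), x)
print(adapted, merged)  # both [6.0, 2.0, 13.0]
```

Keeping the adapter separate (as `PeftModel.from_pretrained` does) lets the same frozen base model be reused with different LoRAs, at the cost of the extra B(Ax) computation per layer.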

```python
from transformers import AutoTokenizer, AutoModelForCausalLM
import transformers
import torch
from peft import PeftModel

base_model = "tiiuae/falcon-40b"
LoRA = "nmitchko/medfalconv2-1a-40b-lora"

# If you want 8 or 4 bit, set the appropriate flag
# (load_in_8bit=True or load_in_4bit=True; both require bitsandbytes)
load_8bit = True

tokenizer = AutoTokenizer.from_pretrained(base_model)

# Load the frozen base model; device_map="auto" lets accelerate place
# the (quantized) weights across the available GPUs
model = AutoModelForCausalLM.from_pretrained(
    base_model,
    load_in_8bit=load_8bit,
    torch_dtype=torch.float16,
    trust_remote_code=True,
    device_map="auto",
)

# Apply the LoRA adapter on top of the base model
model = PeftModel.from_pretrained(model, LoRA)

# The model is already loaded and placed, so no dtype/device arguments here
pipeline = transformers.pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
)
```

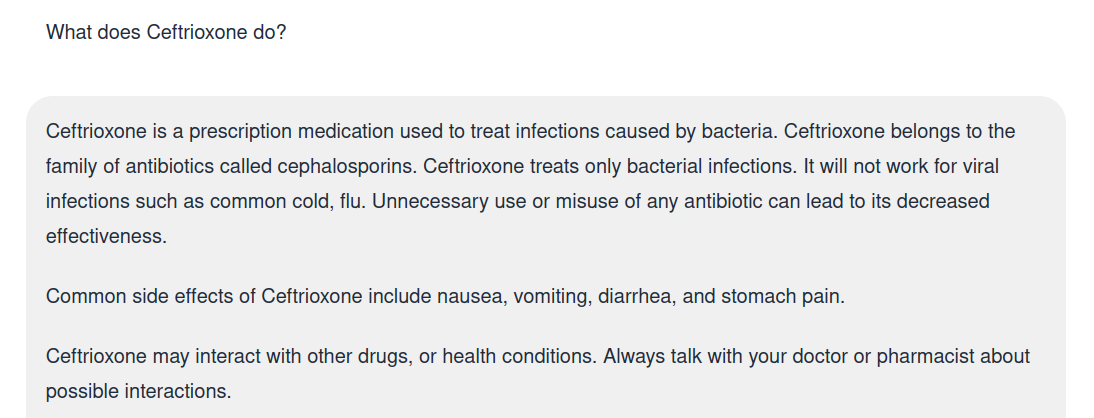

```python
sequences = pipeline(
    "What does the drug ceftriaxone do?\nDoctor:",
    max_length=200,
    do_sample=True,
    top_k=40,
    num_return_sequences=1,
    eos_token_id=tokenizer.eos_token_id,
)

for seq in sequences:
    print(f"Result: {seq['generated_text']}")
```
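The generation call samples (`do_sample=True`) with `top_k=40`: at each step only the 40 most likely next tokens are kept, their probabilities are renormalized, and one is drawn at random. Note that `max_length=200` counts the prompt tokens as well as the generated ones. The pure-Python sketch below illustrates top-k sampling over a toy distribution; the function name, vocabulary, and logits are hypothetical, and the transformers implementation works on logit tensors rather than Python dictionaries.

```python
import math
import random
from typing import Dict


def top_k_sample(logits: Dict[str, float], k: int, rng: random.Random) -> str:
    """Sample one token from the k highest-scoring candidates after a softmax."""
    top = sorted(logits.items(), key=lambda kv: kv[1], reverse=True)[:k]
    max_logit = top[0][1]  # subtract the max before exp() for numerical stability
    weights = [math.exp(score - max_logit) for _, score in top]
    tokens = [tok for tok, _ in top]
    # random.choices normalizes the weights itself, so they need not sum to 1
    return rng.choices(tokens, weights=weights, k=1)[0]


# Toy next-token scores (hypothetical values)
logits = {"antibiotic": 3.1, "cephalosporin": 2.7, "drug": 1.9, "banana": -4.0}
rng = random.Random(0)
samples = [top_k_sample(logits, k=2, rng=rng) for _ in range(10)]
print(samples)
```

With `k=1` this reduces to greedy decoding; larger `k` values allow more varied but less predictable answers.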

Training Parameters

The model was trained for 4500 steps (1 epoch) on a custom, unreleased dataset named medconcat.

medconcat contains only human-generated content and weighs in at over 100 MiB of raw text.

The bash script below initiated training in 4-bit mode for a rather large LoRA. Its main LoRA hyperparameters are:

| Item | Amount | Units |
|---|---|---|
| LoRA Rank | 128 | n/a |
| LoRA Alpha | 256 | n/a |
| Learning Rate | 1e-3 | n/a |
| Dropout | 5 | % |
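Two quick consequences of the rank and alpha in the table: the adapter's update is scaled by alpha / r = 256 / 128 = 2, and each adapted linear layer of shape d_out x d_in gains r * (d_in + d_out) trainable parameters. The sketch below computes both; the 8192 x 8192 layer shape is a hypothetical example chosen for illustration, not a statement about Falcon-40b's exact module shapes.

```python
def lora_scaling(alpha: int, rank: int) -> float:
    """Multiplier applied to the low-rank update B @ A."""
    return alpha / rank


def lora_params_per_layer(rank: int, d_in: int, d_out: int) -> int:
    """Trainable parameters added to one linear layer: A is rank x d_in, B is d_out x rank."""
    return rank * d_in + d_out * rank


RANK, ALPHA = 128, 256  # values from the table

print(lora_scaling(ALPHA, RANK))                # 2.0
print(lora_params_per_layer(RANK, 8192, 8192))  # 2097152 for a hypothetical 8192 x 8192 layer
print(lora_params_per_layer(RANK, 8192, 8192) / (8192 * 8192))  # 0.03125 of that layer's weights
```

At rank 128 the adapter adds about 3% of a square layer's size, which is large for a LoRA and consistent with the note that it may overwrite some base knowledge.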

```bash
# Date stamp used in the log file name
CURRENTDATEONLY=`date +"%b %d %Y"`

# Cap the power limit of GPU 1 at 250 W
sudo nvidia-smi -i 1 -pl 250

# Make only GPU 0 visible to the training process
export CUDA_VISIBLE_DEVICES=0

nohup python qlora.py \
    --model_name_or_path models/tiiuae_falcon-40b \
    --output_dir ./loras/medfalcon2.1a-40b \
    --logging_steps 100 \
    --save_strategy steps \
    --data_seed 42 \
    --save_steps 200 \
    --save_total_limit 40 \
    --evaluation_strategy steps \
    --eval_dataset_size 1024 \
    --max_eval_samples 1000 \
    --per_device_eval_batch_size 1 \
    --max_new_tokens 32 \
    --dataloader_num_workers 3 \
    --group_by_length \
    --logging_strategy steps \
    --remove_unused_columns False \
    --do_train \
    --lora_r 128 \
    --lora_alpha 256 \
    --lora_modules all \
    --double_quant \
    --quant_type nf4 \
    --bf16 \
    --bits 4 \
    --warmup_ratio 0.03 \
    --lr_scheduler_type constant \
    --gradient_checkpointing \
    --dataset="training/datasets/medconcat/" \
    --dataset_format alpaca \
    --trust_remote_code=True \
    --source_max_len 16 \
    --target_max_len 512 \
    --per_device_train_batch_size 1 \
    --gradient_accumulation_steps 16 \
    --max_steps 4500 \
    --eval_steps 1000 \
    --learning_rate 0.0001 \
    --adam_beta2 0.999 \
    --max_grad_norm 0.3 \
    --lora_dropout 0.05 \
    --weight_decay 0.0 \
    --seed 0 > "${CURRENTDATEONLY}-finetune-medfalcon2.1a.log" &
```
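The script's batch and schedule flags can be combined into a few derived quantities. Assuming a single GPU (as `CUDA_VISIBLE_DEVICES=0` suggests), each optimizer step sees `per_device_train_batch_size` x `gradient_accumulation_steps` = 16 examples, so 4500 steps correspond to roughly 72,000 training examples. The token figure is an upper bound that assumes every example hits both `--source_max_len` and `--target_max_len`. The variable names below are illustrative, not part of qlora.py.

```python
# Values copied from the training command
per_device_train_batch_size = 1
gradient_accumulation_steps = 16
num_gpus = 1              # assumption: CUDA_VISIBLE_DEVICES=0 exposes a single GPU
max_steps = 4500
eval_steps = 1000
source_max_len = 16
target_max_len = 512

# Examples contributing to each optimizer update
effective_batch = per_device_train_batch_size * gradient_accumulation_steps * num_gpus
# Total examples processed over the run
examples_seen = effective_batch * max_steps
# Evaluations run at steps 1000, 2000, 3000 and 4000
num_evals = max_steps // eval_steps
# Upper bound on tokens per optimizer step if every example is maximally long
max_tokens_per_step = effective_batch * (source_max_len + target_max_len)

print(effective_batch, examples_seen, num_evals, max_tokens_per_step)
```

Because `--source_max_len 16` is short, long instructions are truncated heavily; most of the per-example token budget goes to the response.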
