Finetuning Overview:

Model Used: gpt2

Dataset: WizardLM/WizardLM_evol_instruct_70k

Dataset Insights:

The WizardLM/WizardLM_evol_instruct_70k dataset, designed to improve a model's instruction-following and interactive capabilities, was built using the Evol-Instruct method. This method expands a smaller seed dataset by evolving its instructions into harder ones for the LLM to answer.

Finetuning Details:

Using MonsterAPI's LLM finetuner, this finetuning run:

- Was highly cost-effective.

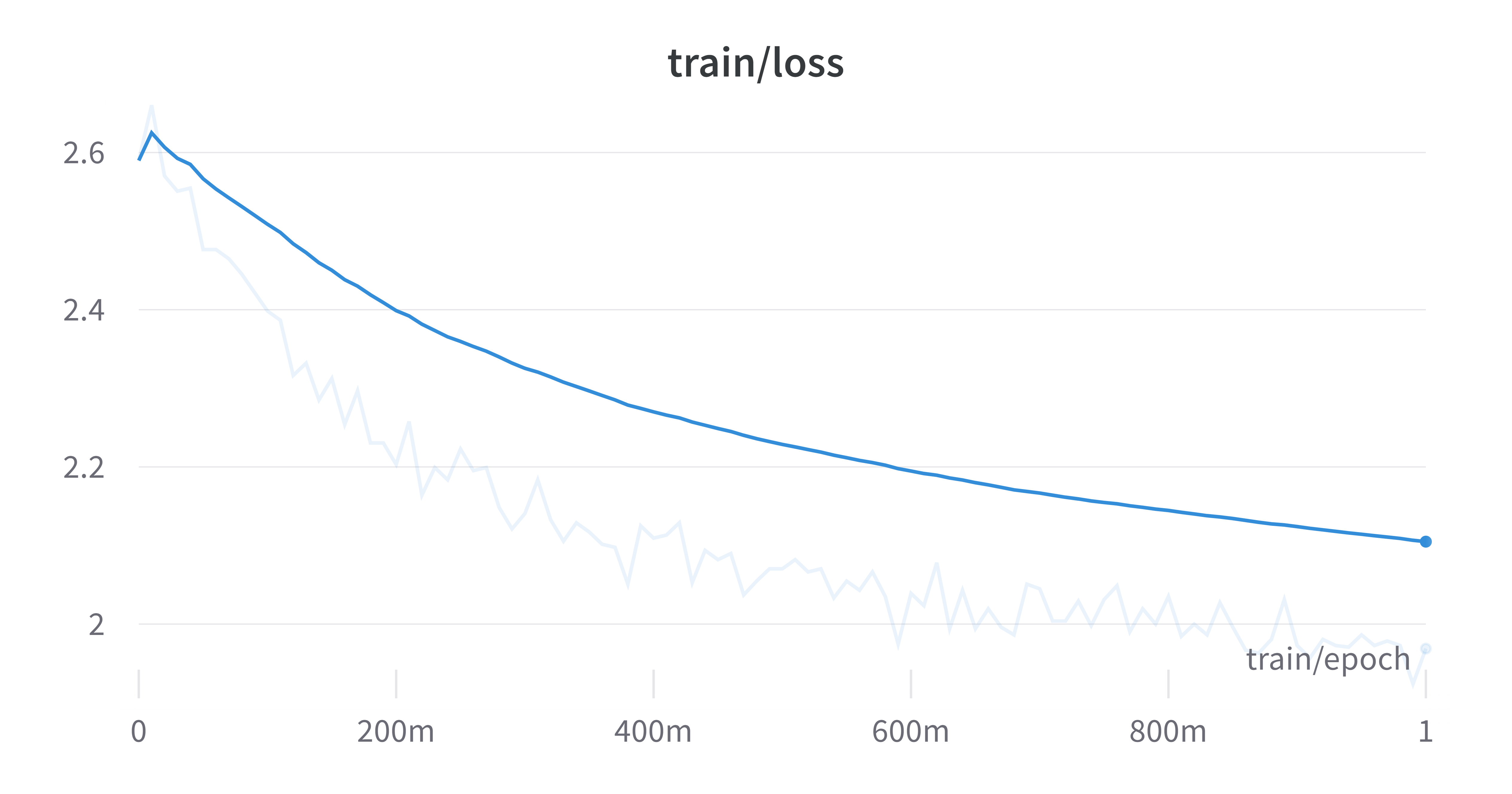

- Completed in 14 minutes for 1 epoch.

- Cost $0.525 for the entire epoch.

Hyperparameters & Additional Details:

- Epochs: 1

- Cost Per Epoch: $0.525

- Total Finetuning Cost: $0.525

- Model Path: gpt2

- Learning Rate: 0.0002

- Data Split: 90% train, 10% validation

- Gradient Accumulation Steps: 4
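The values above can be collected into a small configuration object to make the arithmetic behind them explicit. This is a minimal sketch, not MonsterAPI's actual configuration format: the `FinetuneConfig` class, its field names, and the `num_examples` and `per_device_batch_size` arguments are hypothetical and introduced only for illustration (the per-device batch size is not stated in this card).

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class FinetuneConfig:
    """Hypothetical container for the hyperparameters listed in this card."""
    model_path: str = "gpt2"
    epochs: int = 1
    cost_per_epoch_usd: float = 0.525
    learning_rate: float = 2e-4          # 0.0002
    train_fraction: float = 0.9          # 90% train, 10% validation
    gradient_accumulation_steps: int = 4

    def total_cost_usd(self) -> float:
        # Total cost = cost per epoch x number of epochs.
        return self.epochs * self.cost_per_epoch_usd

    def split_sizes(self, num_examples: int) -> Tuple[int, int]:
        # Returns (train, validation) counts for a dataset of num_examples rows.
        n_train = round(num_examples * self.train_fraction)
        return n_train, num_examples - n_train

    def effective_batch_size(self, per_device_batch_size: int) -> int:
        # Gradients are accumulated over several steps before each optimizer
        # update, so the effective batch is the per-device batch multiplied
        # by the number of accumulation steps.
        return per_device_batch_size * self.gradient_accumulation_steps


config = FinetuneConfig()
```

With one epoch, `config.total_cost_usd()` equals the per-epoch cost, which matches the Total Finetuning Cost listed above.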

### INSTRUCTION:

[instruction]

### RESPONSE:

[output]
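The template above can be filled in programmatically. The following is a minimal sketch: the `build_prompt` helper is hypothetical, and the exact whitespace between the sections (single newline after each header, blank line before `### RESPONSE:`) is an assumption, since the card only shows the section markers and placeholders.

```python
# Assumed layout of the prompt template; the whitespace is a guess based on
# the structure shown in this card.
PROMPT_TEMPLATE = "### INSTRUCTION:\n{instruction}\n\n### RESPONSE:\n{output}"


def build_prompt(instruction: str, output: str = "") -> str:
    """Fill the template. Leave output empty at inference time so the model
    generates the response after the '### RESPONSE:' marker."""
    return PROMPT_TEMPLATE.format(
        instruction=instruction.strip(),
        output=output.strip(),
    )


inference_prompt = build_prompt("Explain gradient accumulation in one sentence.")
```

For training-style examples, pass both arguments, for instance `build_prompt(instruction, output)`; for generation, pass only the instruction and let the model continue the text.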

license: apache-2.0


Model tree for monsterapi/gpt2_124m_WizardLMEvolInstruct70k

Base model

openai-community/gpt2