Quantizations of https://huggingface.co/SicariusSicariiStuff/Impish_LLAMA_3B

Inference Clients/UIs

From original readme

Model Details

Intended use: Role-Play, General tasks.

Censorship level: Medium - Low

5.5 / 10 (10 completely uncensored)

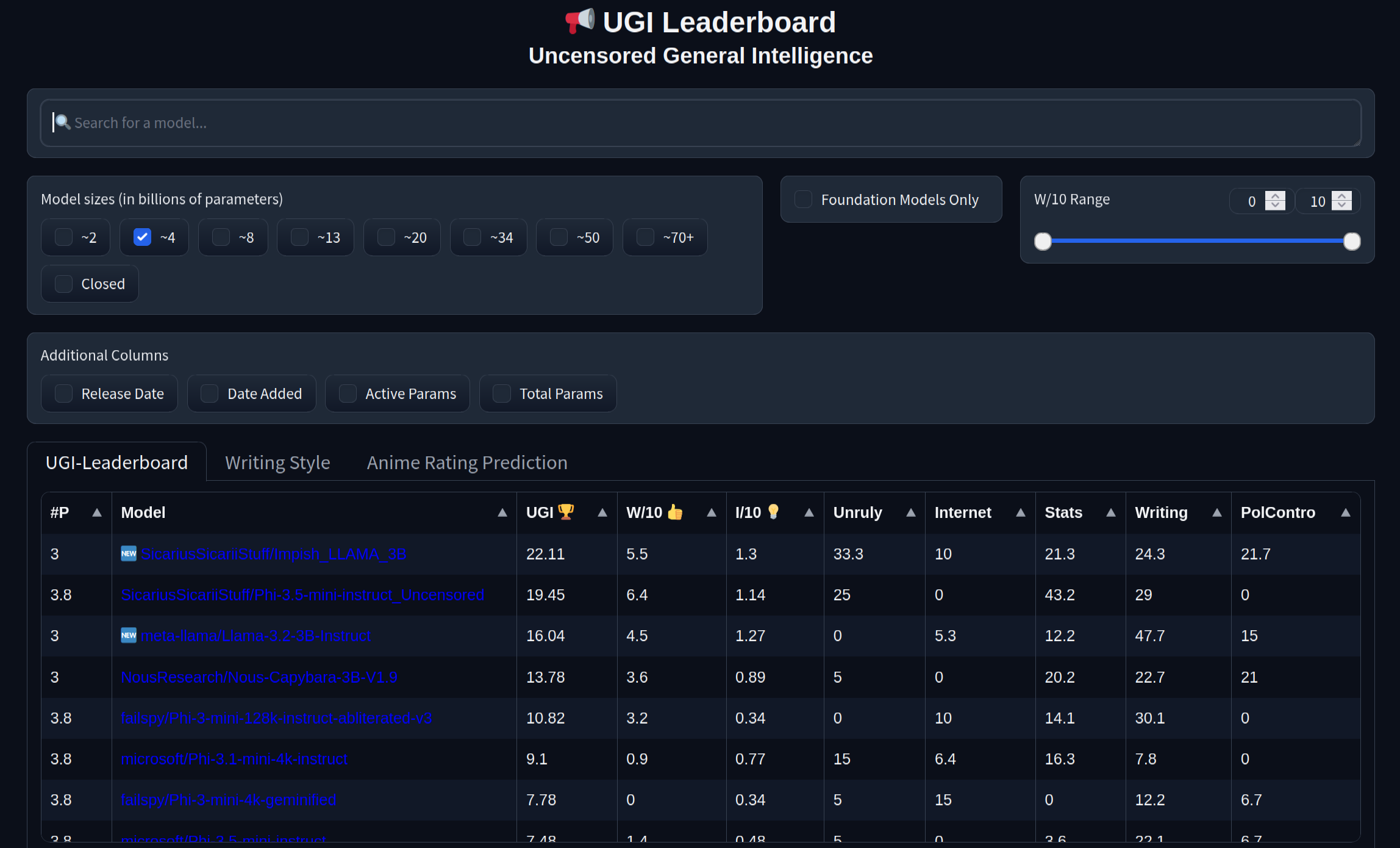

UGI score:

"I want some legit RP models of LLAMA 3.2 3B, we got phones!"

"So make one."

"K."

This model was trained on ~25M tokens, in 3 phases, the first and longest phase was an FFT to teach the model new stuff, and to confuse the shit out of it too, so it would be a little bit less inclined to use GPTisms.

It worked pretty well. In fact, the model was so damn thoroughly confused, that the little devil didn't even make any sense at all, but the knowledge was there.

In the next phase, a DEEP QLORA of R = 512 was used on a new dataset, to... unconfuse it. A completely different dataset was used to avoid overfitting.

Finally, another somewhat deep QLORA of R = 128 was used to tie it all together in a coherent way, and connect all the dots, and this was also with a different dataset as well.

The results are sometimes surprisingly good, it even managed to fool some people into thinking it's a MUCH larger model, and sometimes... sometimes it behaves just like you would expect a 3B model to...

Fun fact: the model was uploaded while there were 200 ICBMs headed my way, just flying there in the sky.

I lived, so expect more models in the future!

Model instruction template: Llama-3-Instruct

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{system_prompt}<|eot_id|><|start_header_id|>user<|end_header_id|>

{input}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{output}<|eot_id|>

Recommended generation Presets:

Midnight Enigma

max_new_tokens: 512temperature: 0.98

top_p: 0.37

top_k: 100

typical_p: 1

min_p: 0

repetition_penalty: 1.18

do_sample: True

min_p

max_new_tokens: 512temperature: 1

top_p: 1

top_k: 0

typical_p: 1

min_p: 0.05

repetition_penalty: 1

do_sample: True

Divine Intellect

max_new_tokens: 512temperature: 1.31

top_p: 0.14

top_k: 49

typical_p: 1

min_p: 0

repetition_penalty: 1.17

do_sample: True

simple-1

max_new_tokens: 512temperature: 0.7

top_p: 0.9

top_k: 20

typical_p: 1

min_p: 0

repetition_penalty: 1.15

do_sample: True

- Downloads last month

- 767