---
license: mit
datasets:
- Slim205/Barka_data_2B
language:
- ar
base_model:
- google/gemma-2-2b-it
---

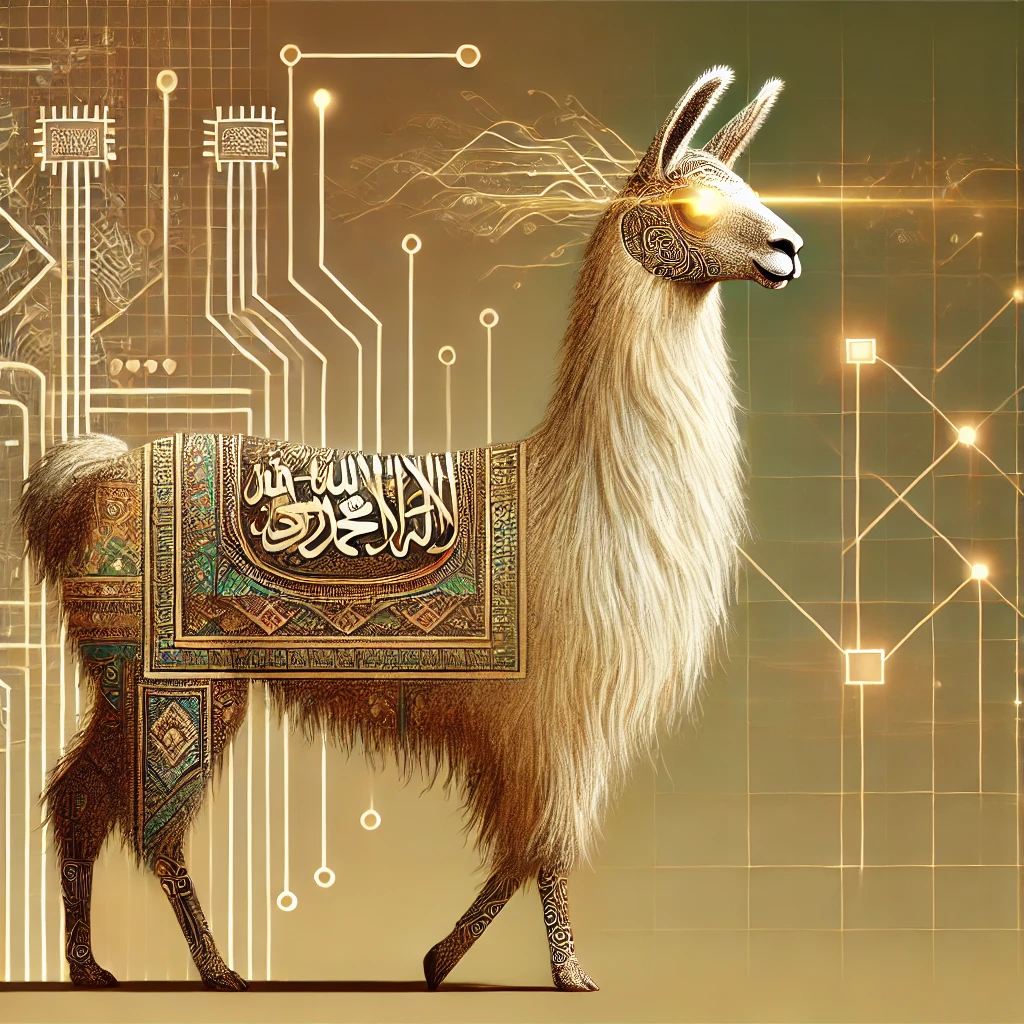

Welcome to Barka-2b-it: the best 2B Arabic LLM

Motivation

The goal of the project was to adapt large language models to Arabic and create a new state-of-the-art Arabic LLM. Because Arabic instruction fine-tuning data is scarce, few LLMs have been trained specifically for Arabic. This is surprising given how many people speak the language.

Our final model was trained on a high-quality, synthetically generated instruction fine-tuning (IFT) dataset. It was then evaluated on the Hugging Face Arabic leaderboard.

Training

This model is the 2B version. It was trained for 2 days on a single A100 GPU using LoRA with rank 128, a learning rate of 1e-4, and a cosine learning-rate schedule.
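The pure-Python sketch below makes two of these hyperparameters concrete. It is not the training code. It assumes the cosine schedule uses no warmup and decays from the peak rate of 1e-4 to 0. That matches the shape of `transformers`' `get_cosine_schedule_with_warmup` with zero warmup steps, but the actual warmup and step count are not stated here. The sketch also counts the trainable parameters that a rank-128 LoRA adapter adds to one weight matrix. It uses the standard LoRA factorization, where the update to W (d_out × d_in) is B·A with A of shape r × d_in and B of shape d_out × r. The `total` step count and the 2304 × 2304 matrix shape are hypothetical examples. They do not describe which modules Barka actually adapted.

```python
import math

PEAK_LR = 1e-4   # learning rate stated in the model card
LORA_RANK = 128  # LoRA rank stated in the model card


def cosine_lr(step: int, total_steps: int, peak_lr: float = PEAK_LR) -> float:
    """Cosine decay from peak_lr down to 0 over total_steps (assumes no warmup)."""
    progress = min(step, total_steps) / total_steps
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


def lora_trainable_params(d_in: int, d_out: int, rank: int = LORA_RANK) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    The frozen weight W is left untouched; only A (rank x d_in) and
    B (d_out x rank) are trained, and their product B @ A is the update.
    """
    return rank * d_in + d_out * rank


if __name__ == "__main__":
    total = 1000  # hypothetical number of optimizer steps
    for step in (0, 250, 500, 750, 1000):
        print(f"step {step:4d}: lr = {cosine_lr(step, total):.2e}")

    d = 2304  # hypothetical square projection matrix, for illustration only
    full = d * d
    lora = lora_trainable_params(d, d)
    print(f"full matrix: {full:,} params; LoRA r={LORA_RANK}: {lora:,} params "
          f"({100 * lora / full:.1f}% of the full matrix)")
```

For a square d × d matrix, the trainable fraction is 2r/d. A rank of 128 therefore stays small relative to the full weights while being large enough to carry a substantial language adaptation.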

Evaluation

The model is listed on the Hugging Face Arabic leaderboard with the following scores:

| Benchmark | Slim205/Barka-2b-it |
|---|---|
| Average | 46.98 |
| ACVA | 39.5 |
| AlGhafa | 46.5 |
| MMLU | 37.06 |
| EXAMS | 38.73 |
| ARC Challenge | 35.78 |
| ARC Easy | 36.97 |
| BoolQ | 73.77 |
| COPA | 50 |
| HellaSwag | 28.98 |
| OpenBookQA | 43.84 |
| PIQA | 56.36 |
| RACE | 36.19 |
| SciQ | 55.78 |
| ToxiGen | 78.29 |
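You can check the Average row by hand. The snippet below assumes it is the unweighted mean of the 14 task scores in the table above; the leaderboard's exact averaging rule is not stated here. Under that assumption it reproduces the reported 46.98:

```python
# Per-task scores for Slim205/Barka-2b-it, copied from the table above.
scores = {
    "ACVA": 39.5,
    "AlGhafa": 46.5,
    "MMLU": 37.06,
    "EXAMS": 38.73,
    "ARC Challenge": 35.78,
    "ARC Easy": 36.97,
    "BoolQ": 73.77,
    "COPA": 50.0,
    "HellaSwag": 28.98,
    "OpenBookQA": 43.84,
    "PIQA": 56.36,
    "RACE": 36.19,
    "SciQ": 55.78,
    "ToxiGen": 78.29,
}

# Assumption: "Average" is the plain, unweighted mean over all tasks.
average = sum(scores.values()) / len(scores)
print(f"{len(scores)} tasks, average = {average:.2f}")  # prints "14 tasks, average = 46.98"
```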

Please refer to https://github.com/Slim205/Arabicllm/ for more details.

Using the Model

Barka-2b-it is a LoRA adapter on top of google/gemma-2-2b-it, so you load it with `transformers` and `peft`. The following example generates a response:

```python
from transformers import AutoTokenizer, AutoModelForCausalLM
from peft import PeftModel
import torch

model_id = "google/gemma-2-2b-it"      # base model
peft_model_id = "Slim205/Barka-2b-it"  # LoRA adapter trained on top of it

# Load the base model on the GPU (bfloat16 to save memory), then attach the adapter.
base_model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=torch.bfloat16).to("cuda")
tokenizer = AutoTokenizer.from_pretrained(peft_model_id)
model = PeftModel.from_pretrained(base_model, peft_model_id)

input_text = "ما هي عاصمة تونس؟"  # "What is the capital of Tunisia?"
chat = [
    {"role": "user", "content": input_text},
]

# Render the Gemma chat format and open a "model" turn for the reply.
prompt = tokenizer.apply_chat_template(chat, tokenize=False, add_generation_prompt=True)
# The rendered prompt already starts with <bos>, so don't add special tokens again.
inputs = tokenizer.encode(prompt, add_special_tokens=False, return_tensors="pt")

# Generate with the adapter-equipped model using nucleus sampling.
outputs = model.generate(
    input_ids=inputs.to(model.device),
    max_new_tokens=32,
    top_p=0.9,
    do_sample=True,
)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```

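Example output (sampling is enabled, so the text varies between runs):

```text
<bos><start_of_turn>user
ما هي عاصمة تونس؟<end_of_turn>
<start_of_turn>model
عاصمة تونس هي تونس. يشار إليها عادة باسم مدينة تونس. المدينة لديها حوالي 2،500،000 نسمة
```

English translation of the response: "The capital of Tunisia is Tunis. It is usually referred to as the city of Tunis. The city has about 2,500,000 inhabitants."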
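The start of the output above shows the prompt string that `apply_chat_template` builds for this model. Below is a minimal pure-Python sketch of that turn format. It was written to match the example output, not copied from the tokenizer's actual Jinja template. The function name `build_gemma_prompt` is hypothetical. The sketch assumes only `user` and `assistant` roles, with `assistant` rendered as `model`, and rejects anything else, such as `system`.

```python
def build_gemma_prompt(messages, add_generation_prompt=True, bos="<bos>"):
    """Render chat messages in the Gemma turn format (hypothetical helper).

    Each turn becomes "<start_of_turn>{role}\\n{content}<end_of_turn>\\n",
    with the assistant role written as "model".
    """
    role_names = {"user": "user", "assistant": "model"}
    parts = [bos]
    for message in messages:
        role = message["role"]
        if role not in role_names:
            # Assumption: only user/assistant turns are supported in this sketch.
            raise ValueError(f"unsupported role: {role!r}")
        parts.append(
            f"<start_of_turn>{role_names[role]}\n"
            f"{message['content'].strip()}<end_of_turn>\n"
        )
    if add_generation_prompt:
        # Open a model turn so that generation continues as the assistant.
        parts.append("<start_of_turn>model\n")
    return "".join(parts)


if __name__ == "__main__":
    chat = [{"role": "user", "content": "ما هي عاصمة تونس؟"}]
    print(build_gemma_prompt(chat))
```

Because this string already begins with `<bos>`, the main example encodes it with `add_special_tokens=False` so that the tokenizer does not prepend a second BOS token.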