Upload folder using huggingface_hub

#1 opened by sharpenb

- README.md +81 -0

- config.json +43 -0

- generation_config.json +9 -0

- model-00001-of-00002.safetensors +3 -0

- model-00002-of-00002.safetensors +3 -0

- model.safetensors.index.json +522 -0

- plots.png +0 -0

- smash_config.json +29 -0

README.md

ADDED

|

@@ -0,0 +1,81 @@

| 1 |

+

---

|

| 2 |

+

library_name: pruna-engine

|

| 3 |

+

thumbnail: "https://assets-global.website-files.com/646b351987a8d8ce158d1940/64ec9e96b4334c0e1ac41504_Logo%20with%20white%20text.svg"

|

| 4 |

+

metrics:

|

| 5 |

+

- memory_disk

|

| 6 |

+

- memory_inference

|

| 7 |

+

- inference_latency

|

| 8 |

+

- inference_throughput

|

| 9 |

+

- inference_CO2_emissions

|

| 10 |

+

- inference_energy_consumption

|

| 11 |

+

---

|

| 12 |

+

<!-- header start -->

|

| 13 |

+

<!-- 200823 -->

|

| 14 |

+

<div style="width: auto; margin-left: auto; margin-right: auto">

|

| 15 |

+

<a href="https://www.pruna.ai/" target="_blank" rel="noopener noreferrer">

|

| 16 |

+

<img src="https://i.imgur.com/eDAlcgk.png" alt="PrunaAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 17 |

+

</a>

|

| 18 |

+

</div>

|

| 19 |

+

<!-- header end -->

|

| 20 |

+

|

| 21 |

+

[Twitter](https://twitter.com/PrunaAI)

|

| 22 |

+

[GitHub](https://github.com/PrunaAI)

|

| 23 |

+

[LinkedIn](https://www.linkedin.com/company/93832878/admin/feed/posts/?feedType=following)

|

| 24 |

+

[Discord](https://discord.gg/CP4VSgck)

|

| 25 |

+

|

| 26 |

+

# Simply make AI models cheaper, smaller, faster, and greener!

|

| 27 |

+

|

| 28 |

+

- Give a thumbs up if you like this model!

|

| 29 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 30 |

+

- Request access to easily compress your *own* AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 31 |

+

- Read the documentation to learn more [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/).

|

| 32 |

+

- Join the Pruna AI community on Discord [here](https://discord.gg/CP4VSgck) to share feedback/suggestions or get help.

|

| 33 |

+

|

| 34 |

+

## Results

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

**Frequently Asked Questions**

|

| 39 |

+

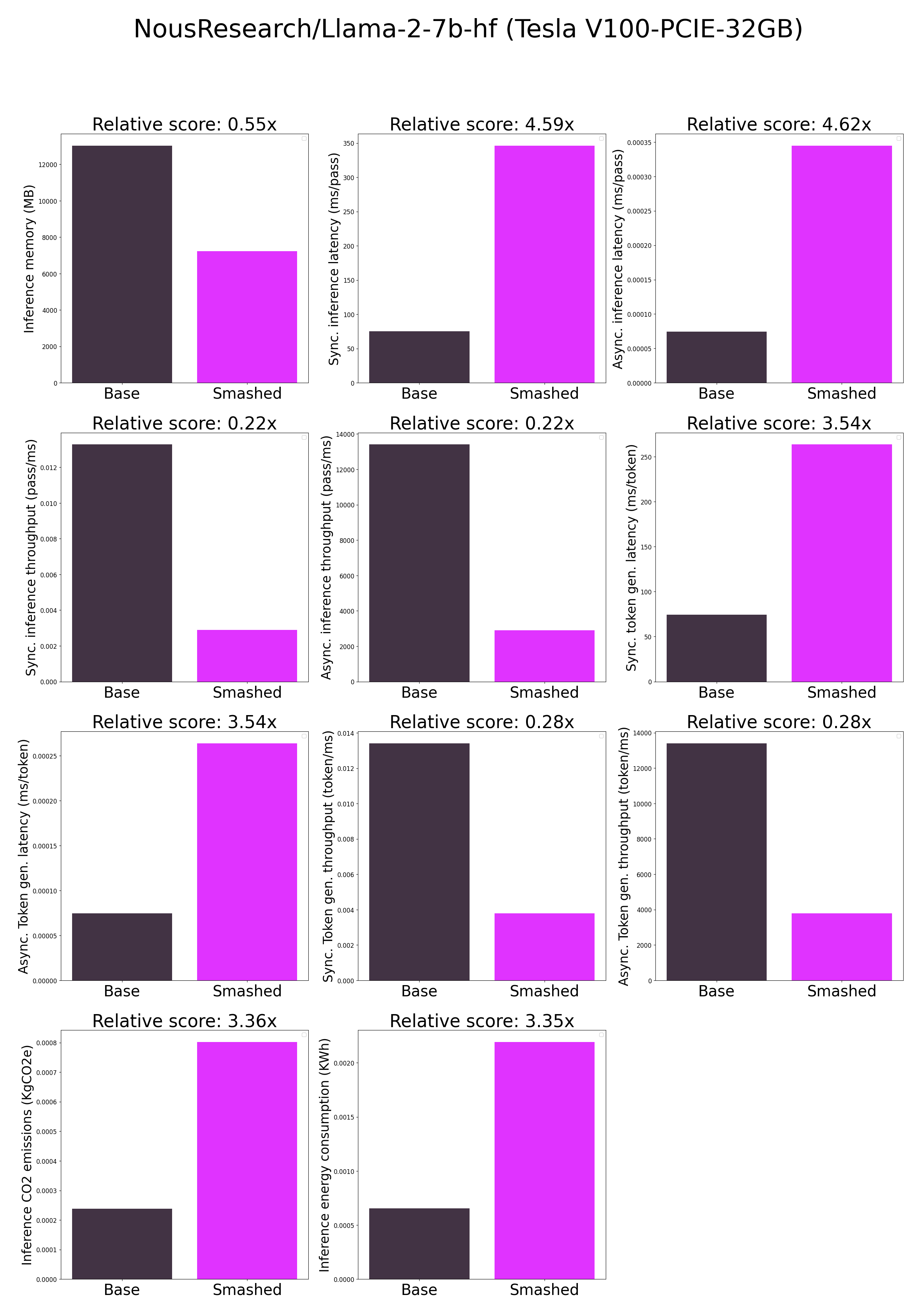

- ***How does the compression work?*** The model is compressed with llm-int8.

|

| 40 |

+

- ***How does the model quality change?*** The quality of the model output might vary compared to the base model.

|

| 41 |

+

- ***How is the model efficiency evaluated?*** These results were obtained on a Tesla V100-PCIE-32GB with the configuration described in `model/smash_config.json`, after a hardware warmup. The smashed model is compared directly to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend running the model directly under your use-case conditions to find out whether the smashed model benefits you.

|

| 42 |

+

- ***What is the model format?*** We use safetensors.

|

| 43 |

+

- ***What is the naming convention for Pruna Hugging Face models?*** We take the original model name and append "turbo", "tiny", or "green" if the smashed model's measured inference speed, inference memory, or inference energy consumption, respectively, is less than 90% of the original base model's.

|

| 44 |

+

- ***How to compress my own models?*** You can request premium access to more compression methods and tech support for your specific use-cases [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

| 45 |

+

- ***What are "first" metrics?*** Results mentioning "first" are obtained after the first run of the model. The first run might take more memory or be slower than the subsequent runs due to CUDA overheads.

|

| 46 |

+

- ***What are "Sync" and "Async" metrics?*** "Sync" metrics are obtained by syncing all GPU processes and stopping the measurement when all of them have finished executing. "Async" metrics are obtained without syncing all GPU processes; the measurement stops when the model output can be used by the CPU. We provide both metrics since both could be relevant depending on the use-case. We recommend testing the efficiency gains directly in your use-cases.
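The "Sync" and "Async" distinction in the FAQ above can be sketched as follows. This is one possible reading of the description, not the benchmarking code actually used for these results; `measure_latency`, `run`, and `sync` are hypothetical names introduced for illustration, and the stand-in functions only simulate pending device work so that the sketch runs without a GPU.

```python
import time


def measure_latency(run, sync=None):
    """Time one call of `run` and return (output, async_seconds, sync_seconds).

    `run` and `sync` are hypothetical callables supplied by the caller.
    """
    start = time.perf_counter()
    output = run()
    # "Async": stop as soon as the call has handed its output back to the CPU.
    async_seconds = time.perf_counter() - start
    if sync is not None:
        # "Sync": additionally wait until all queued device work has finished.
        sync()
    sync_seconds = time.perf_counter() - start
    return output, async_seconds, sync_seconds


# Stand-ins so the sketch runs anywhere: the sleep in `fake_sync` plays the
# role of device work that is still pending after `fake_run` returns.
def fake_run():
    return "output"


def fake_sync():
    time.sleep(0.01)


output, async_s, sync_s = measure_latency(fake_run, fake_sync)
print(f"async: {async_s:.4f}s, sync: {sync_s:.4f}s")
```

With PyTorch on a CUDA device, one would typically pass a model call as `run` and `torch.cuda.synchronize` as `sync`; discarding the first call's timing would correspond to the warmup mentioned above.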

|

| 47 |

+

|
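The naming convention from the FAQ (append "turbo", "tiny", or "green" when a metric drops below 90% of the base model's value) can be written as a small check. This is a minimal sketch under stated assumptions: the metric keys are borrowed from the `metrics` list in the front matter, "inference speed" is assumed to be measured as latency, and the numbers are made up purely for illustration.

```python
# Assumed mapping from metric key to suffix; the FAQ names the quantities
# but not these identifiers.
SUFFIX_BY_METRIC = {
    "inference_latency": "turbo",
    "memory_inference": "tiny",
    "inference_energy_consumption": "green",
}


def naming_suffixes(base_metrics, smashed_metrics, threshold=0.9):
    """Return the suffixes earned by the smashed model (hypothetical helper)."""
    suffixes = []
    for metric, suffix in SUFFIX_BY_METRIC.items():
        base = base_metrics[metric]
        smashed = smashed_metrics[metric]
        # A suffix is earned when the smashed value is below 90% of the base value.
        if base > 0 and smashed < threshold * base:
            suffixes.append(suffix)
    return suffixes


# Illustrative values only, not measurements from this repository.
base = {
    "inference_latency": 100.0,
    "memory_inference": 14000.0,
    "inference_energy_consumption": 5.0,
}
smashed = {
    "inference_latency": 95.0,
    "memory_inference": 8000.0,
    "inference_energy_consumption": 4.8,
}
print(naming_suffixes(base, smashed))  # ['tiny']
```

In this example only the memory figure falls below the 90% mark, so only "tiny" would be appended.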

| 48 |

+

## Setup

|

| 49 |

+

|

| 50 |

+

You can run the smashed model with these steps:

|

| 51 |

+

|

| 52 |

+

0. Check that the requirements from the original repo NousResearch/Llama-2-7b-hf are installed. In particular, check the python, cuda, and transformers versions.

|

| 53 |

+

1. Make sure that you have installed the quantization-related packages.

|

| 54 |

+

```bash

|

| 55 |

+

pip install transformers accelerate "bitsandbytes>0.37.0"

|

| 56 |

+

```

|

| 57 |

+

2. Load & run the model.

|

| 58 |

+

```python

|

| 59 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 60 |

+

|

| 61 |

+

model = AutoModelForCausalLM.from_pretrained("PrunaAI/NousResearch-Llama-2-7b-hf-bnb-8bit-smashed",

|

| 62 |

+

trust_remote_code=True)

|

| 63 |

+

tokenizer = AutoTokenizer.from_pretrained("NousResearch/Llama-2-7b-hf")

|

| 64 |

+

|

| 65 |

+

input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]

|

| 66 |

+

|

| 67 |

+

outputs = model.generate(input_ids, max_new_tokens=216)

|

| 68 |

+

```

|

| 69 |

+

|

| 70 |

+

## Configurations

|

| 71 |

+

|

| 72 |

+

The configuration info is in `smash_config.json`.

|

| 73 |

+

|

| 74 |

+

## Credits & License

|

| 75 |

+

|

| 76 |

+

The license of the smashed model follows the license of the original model. Please check the license of the original model, NousResearch/Llama-2-7b-hf, which provided the base model, before using this model. The license of the `pruna-engine` is [here](https://pypi.org/project/pruna-engine/) on PyPI.

|

| 77 |

+

|

| 78 |

+

## Want to compress other models?

|

| 79 |

+

|

| 80 |

+

- Contact us and tell us which model to compress next [here](https://www.pruna.ai/contact).

|

| 81 |

+

- Request access to easily compress your own AI models [here](https://z0halsaff74.typeform.com/pruna-access?typeform-source=www.pruna.ai).

|

config.json

ADDED

|

@@ -0,0 +1,43 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "/tmp/tmpfhm9gkgl",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"LlamaForCausalLM"

|

| 5 |

+

],

|

| 6 |

+

"attention_bias": false,

|

| 7 |

+

"attention_dropout": 0.0,

|

| 8 |

+

"bos_token_id": 1,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "silu",

|

| 11 |

+

"hidden_size": 4096,

|

| 12 |

+

"initializer_range": 0.02,

|

| 13 |

+

"intermediate_size": 11008,

|

| 14 |

+

"max_position_embeddings": 4096,

|

| 15 |

+

"model_type": "llama",

|

| 16 |

+

"num_attention_heads": 32,

|

| 17 |

+

"num_hidden_layers": 32,

|

| 18 |

+

"num_key_value_heads": 32,

|

| 19 |

+

"pad_token_id": 0,

|

| 20 |

+

"pretraining_tp": 1,

|

| 21 |

+

"quantization_config": {

|

| 22 |

+

"bnb_4bit_compute_dtype": "bfloat16",

|

| 23 |

+

"bnb_4bit_quant_type": "fp4",

|

| 24 |

+

"bnb_4bit_use_double_quant": true,

|

| 25 |

+

"llm_int8_enable_fp32_cpu_offload": false,

|

| 26 |

+

"llm_int8_has_fp16_weight": false,

|

| 27 |

+

"llm_int8_skip_modules": [

|

| 28 |

+

"lm_head"

|

| 29 |

+

],

|

| 30 |

+

"llm_int8_threshold": 6.0,

|

| 31 |

+

"load_in_4bit": false,

|

| 32 |

+

"load_in_8bit": true,

|

| 33 |

+

"quant_method": "bitsandbytes"

|

| 34 |

+

},

|

| 35 |

+

"rms_norm_eps": 1e-05,

|

| 36 |

+

"rope_scaling": null,

|

| 37 |

+

"rope_theta": 10000.0,

|

| 38 |

+

"tie_word_embeddings": false,

|

| 39 |

+

"torch_dtype": "float16",

|

| 40 |

+

"transformers_version": "4.37.1",

|

| 41 |

+

"use_cache": true,

|

| 42 |

+

"vocab_size": 32000

|

| 43 |

+

}
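The `quantization_config` object above carries both `bnb_4bit_*` and `llm_int8_*` keys, so it may help to spell out which mode the flags select. The sketch below parses a copy of that object with the standard library only; `summarize_quantization` is a hypothetical helper, and the reading that the `bnb_4bit_*` keys are inactive when `load_in_4bit` is false is an assumption about how bitsandbytes configs are interpreted.

```python
import json

# The `quantization_config` object from config.json, copied verbatim.
QUANTIZATION_CONFIG = json.loads("""
{
  "bnb_4bit_compute_dtype": "bfloat16",
  "bnb_4bit_quant_type": "fp4",
  "bnb_4bit_use_double_quant": true,
  "llm_int8_enable_fp32_cpu_offload": false,
  "llm_int8_has_fp16_weight": false,
  "llm_int8_skip_modules": ["lm_head"],
  "llm_int8_threshold": 6.0,
  "load_in_4bit": false,
  "load_in_8bit": true,
  "quant_method": "bitsandbytes"
}
""")


def summarize_quantization(cfg):
    """Hypothetical helper: report which quantization mode the flags select."""
    if cfg.get("load_in_8bit") and cfg.get("load_in_4bit"):
        raise ValueError("load_in_8bit and load_in_4bit cannot both be true")
    if cfg.get("load_in_8bit"):
        mode = "8-bit"
    elif cfg.get("load_in_4bit"):
        mode = "4-bit"
    else:
        mode = "unquantized"
    return {
        "method": cfg.get("quant_method"),
        "mode": mode,
        "skipped_modules": cfg.get("llm_int8_skip_modules") or [],
    }


summary = summarize_quantization(QUANTIZATION_CONFIG)
print(summary)
```

For this file the summary reports the 8-bit bitsandbytes path with `lm_head` skipped, which is consistent with the weight map listing `lm_head.weight` without an accompanying `SCB` entry.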

|

generation_config.json

ADDED

|

@@ -0,0 +1,9 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 1,

|

| 4 |

+

"eos_token_id": 2,

|

| 5 |

+

"pad_token_id": 32000,

|

| 6 |

+

"temperature": 0.9,

|

| 7 |

+

"top_p": 0.6,

|

| 8 |

+

"transformers_version": "4.37.1"

|

| 9 |

+

}

|

model-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b5d81b1cf2c0d075c79bdd84e84e0755b49c017a4494abfcb442b3e28d7afd19

|

| 3 |

+

size 4988274536

|

model-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:50f4d42aed4fa5d058d537a09489c9ea96bdbdc594fd5e62ab9c501ee0d7e90c

|

| 3 |

+

size 2018046512
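The two `.safetensors` entries above are Git LFS pointer files (a `version`, `oid`, and `size` line each), not the weights themselves. As a rough illustration, the sketch below parses both pointers and sums their sizes; `parse_lfs_pointer` is a hypothetical helper written for this example, not part of any Git LFS tooling.

```python
def parse_lfs_pointer(text):
    """Parse the key/value lines of a Git LFS pointer file (hypothetical helper)."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer contents copied from the two shard entries in this diff.
POINTERS = {
    "model-00001-of-00002.safetensors": """
version https://git-lfs.github.com/spec/v1
oid sha256:b5d81b1cf2c0d075c79bdd84e84e0755b49c017a4494abfcb442b3e28d7afd19
size 4988274536
""",
    "model-00002-of-00002.safetensors": """
version https://git-lfs.github.com/spec/v1
oid sha256:50f4d42aed4fa5d058d537a09489c9ea96bdbdc594fd5e62ab9c501ee0d7e90c
size 2018046512
""",
}

parsed = {name: parse_lfs_pointer(text) for name, text in POINTERS.items()}
total_bytes = sum(p["size"] for p in parsed.values())
print(total_bytes)  # 7006321048
```

The sum is slightly larger than the `total_size` of 7006265344 recorded in `model.safetensors.index.json`, presumably because each shard file also stores a header in addition to the tensor data.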

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,522 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 7006265344

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00002-of-00002.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00002.safetensors",

|

| 8 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 9 |

+

"model.layers.0.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 10 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 11 |

+

"model.layers.0.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 12 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 13 |

+

"model.layers.0.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 14 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 15 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 17 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 18 |

+

"model.layers.0.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 19 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 20 |

+

"model.layers.0.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 21 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 22 |

+

"model.layers.0.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 23 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 24 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 25 |

+

"model.layers.1.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 26 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 27 |

+

"model.layers.1.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 28 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 29 |

+

"model.layers.1.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 30 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 31 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 32 |

+

"model.layers.1.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 33 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 34 |

+

"model.layers.1.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 35 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 36 |

+

"model.layers.1.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 37 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 38 |

+

"model.layers.1.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 39 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 40 |

+

"model.layers.10.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 41 |

+

"model.layers.10.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 42 |

+

"model.layers.10.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 43 |

+

"model.layers.10.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 44 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 45 |

+

"model.layers.10.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 46 |

+

"model.layers.10.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 47 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 48 |

+

"model.layers.10.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 49 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 50 |

+

"model.layers.10.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 51 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 52 |

+

"model.layers.10.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 53 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 54 |

+

"model.layers.10.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 55 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 56 |

+

"model.layers.11.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 57 |

+

"model.layers.11.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 58 |

+

"model.layers.11.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 59 |

+

"model.layers.11.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 60 |

+

"model.layers.11.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 61 |

+

"model.layers.11.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 62 |

+

"model.layers.11.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 63 |

+

"model.layers.11.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 64 |

+

"model.layers.11.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 65 |

+

"model.layers.11.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 66 |

+

"model.layers.11.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 67 |

+

"model.layers.11.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 68 |

+

"model.layers.11.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 69 |

+

"model.layers.11.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 70 |

+

"model.layers.11.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 71 |

+

"model.layers.11.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 72 |

+

"model.layers.12.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 73 |

+

"model.layers.12.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 74 |

+

"model.layers.12.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 75 |

+

"model.layers.12.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 76 |

+

"model.layers.12.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 77 |

+

"model.layers.12.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 78 |

+

"model.layers.12.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 79 |

+

"model.layers.12.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 80 |

+

"model.layers.12.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 81 |

+

"model.layers.12.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 82 |

+

"model.layers.12.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 83 |

+

"model.layers.12.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 84 |

+

"model.layers.12.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 85 |

+

"model.layers.12.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 86 |

+

"model.layers.12.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 87 |

+

"model.layers.12.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 88 |

+

"model.layers.13.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 89 |

+

"model.layers.13.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 90 |

+

"model.layers.13.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 91 |

+

"model.layers.13.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 92 |

+

"model.layers.13.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 93 |

+

"model.layers.13.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 94 |

+

"model.layers.13.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 95 |

+

"model.layers.13.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 96 |

+

"model.layers.13.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 97 |

+

"model.layers.13.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 98 |

+

"model.layers.13.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 99 |

+

"model.layers.13.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 100 |

+

"model.layers.13.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 101 |

+

"model.layers.13.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 102 |

+

"model.layers.13.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 103 |

+

"model.layers.13.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 104 |

+

"model.layers.14.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 105 |

+

"model.layers.14.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 106 |

+

"model.layers.14.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 107 |

+

"model.layers.14.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 108 |

+

"model.layers.14.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 109 |

+

"model.layers.14.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 110 |

+

"model.layers.14.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 111 |

+

"model.layers.14.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 112 |

+

"model.layers.14.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 113 |

+

"model.layers.14.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 114 |

+

"model.layers.14.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 115 |

+

"model.layers.14.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 116 |

+

"model.layers.14.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 117 |

+

"model.layers.14.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 118 |

+

"model.layers.14.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 119 |

+

"model.layers.14.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 120 |

+

"model.layers.15.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 121 |

+

"model.layers.15.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 122 |

+

"model.layers.15.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 123 |

+

"model.layers.15.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 124 |

+

"model.layers.15.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 125 |

+

"model.layers.15.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 126 |

+

"model.layers.15.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 127 |

+

"model.layers.15.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 128 |

+

"model.layers.15.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 129 |

+

"model.layers.15.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 130 |

+

"model.layers.15.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 131 |

+

"model.layers.15.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 132 |

+

"model.layers.15.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 133 |

+

"model.layers.15.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 134 |

+

"model.layers.15.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 135 |

+

"model.layers.15.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 136 |

+

"model.layers.16.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 137 |

+

"model.layers.16.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 138 |

+

"model.layers.16.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 139 |

+

"model.layers.16.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 140 |

+

"model.layers.16.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 141 |

+

"model.layers.16.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 142 |

+

"model.layers.16.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 143 |

+

"model.layers.16.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 144 |

+

"model.layers.16.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 145 |

+

"model.layers.16.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 146 |

+

"model.layers.16.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 147 |

+

"model.layers.16.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 148 |

+

"model.layers.16.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 149 |

+

"model.layers.16.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 150 |

+

"model.layers.16.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 151 |

+

"model.layers.16.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 152 |

+

"model.layers.17.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 153 |

+

"model.layers.17.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 154 |

+

"model.layers.17.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 155 |

+

"model.layers.17.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 156 |

+

"model.layers.17.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 157 |

+

"model.layers.17.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 158 |

+

"model.layers.17.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 159 |

+

"model.layers.17.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 160 |

+

"model.layers.17.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 161 |

+

"model.layers.17.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 162 |

+

"model.layers.17.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 163 |

+

"model.layers.17.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 164 |

+

"model.layers.17.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 165 |

+

"model.layers.17.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 166 |

+

"model.layers.17.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 167 |

+

"model.layers.17.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 168 |

+

"model.layers.18.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 169 |

+

"model.layers.18.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 170 |

+

"model.layers.18.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 171 |

+

"model.layers.18.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 172 |

+

"model.layers.18.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 173 |

+

"model.layers.18.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 174 |

+

"model.layers.18.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 175 |

+

"model.layers.18.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 176 |

+

"model.layers.18.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 177 |

+

"model.layers.18.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 178 |

+

"model.layers.18.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 179 |

+

"model.layers.18.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 180 |

+

"model.layers.18.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 181 |

+

"model.layers.18.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 182 |

+

"model.layers.18.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 183 |

+

"model.layers.18.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 184 |

+

"model.layers.19.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 185 |

+

"model.layers.19.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 186 |

+

"model.layers.19.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 187 |

+

"model.layers.19.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 188 |

+

"model.layers.19.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 189 |

+

"model.layers.19.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 190 |

+

"model.layers.19.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 191 |

+

"model.layers.19.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 192 |

+

"model.layers.19.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 193 |

+

"model.layers.19.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 194 |

+

"model.layers.19.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 195 |

+

"model.layers.19.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 196 |

+

"model.layers.19.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 197 |

+

"model.layers.19.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 198 |

+

"model.layers.19.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 199 |

+

"model.layers.19.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 200 |

+

"model.layers.2.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 201 |

+

"model.layers.2.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 202 |

+

"model.layers.2.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 203 |

+

"model.layers.2.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 204 |

+

"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 205 |

+

"model.layers.2.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 206 |

+

"model.layers.2.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 207 |

+

"model.layers.2.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 208 |

+

"model.layers.2.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 209 |

+

"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 210 |

+

"model.layers.2.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 211 |

+

"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 212 |

+

"model.layers.2.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 213 |

+

"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 214 |

+

"model.layers.2.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 215 |

+

"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 216 |

+

"model.layers.20.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 217 |

+

"model.layers.20.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 218 |

+

"model.layers.20.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 219 |

+

"model.layers.20.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 220 |

+

"model.layers.20.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 221 |

+

"model.layers.20.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 222 |

+

"model.layers.20.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 223 |

+

"model.layers.20.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 224 |

+

"model.layers.20.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 225 |

+

"model.layers.20.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 226 |

+

"model.layers.20.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 227 |

+

"model.layers.20.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 228 |

+

"model.layers.20.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 229 |

+

"model.layers.20.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 230 |

+

"model.layers.20.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 231 |

+

"model.layers.20.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 232 |

+

"model.layers.21.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 233 |

+

"model.layers.21.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 234 |

+

"model.layers.21.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 235 |

+

"model.layers.21.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 236 |

+

"model.layers.21.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 237 |

+

"model.layers.21.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 238 |

+

"model.layers.21.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 239 |

+

"model.layers.21.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 240 |

+

"model.layers.21.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 241 |

+

"model.layers.21.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 242 |

+

"model.layers.21.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 243 |

+

"model.layers.21.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 244 |

+

"model.layers.21.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 245 |

+

"model.layers.21.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 246 |

+

"model.layers.21.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 247 |

+

"model.layers.21.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 248 |

+

"model.layers.22.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 249 |

+

"model.layers.22.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 250 |

+

"model.layers.22.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 251 |

+

"model.layers.22.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 252 |

+

"model.layers.22.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 253 |

+

"model.layers.22.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 254 |

+

"model.layers.22.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 255 |

+

"model.layers.22.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 256 |

+

"model.layers.22.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 257 |

+

"model.layers.22.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 258 |

+

"model.layers.22.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 259 |

+

"model.layers.22.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 260 |

+

"model.layers.22.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 261 |

+

"model.layers.22.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 262 |

+

"model.layers.22.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 263 |

+

"model.layers.22.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 264 |

+

"model.layers.23.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 265 |

+

"model.layers.23.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 266 |

+

"model.layers.23.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 267 |

+

"model.layers.23.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 268 |

+

"model.layers.23.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 269 |

+

"model.layers.23.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 270 |

+

"model.layers.23.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 271 |

+

"model.layers.23.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 272 |

+

"model.layers.23.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 273 |

+

"model.layers.23.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 274 |

+

"model.layers.23.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 275 |

+

"model.layers.23.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 276 |

+

"model.layers.23.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 277 |

+

"model.layers.23.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 278 |

+

"model.layers.23.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 279 |

+

"model.layers.23.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 280 |

+

"model.layers.24.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 281 |

+

"model.layers.24.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 282 |

+

"model.layers.24.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 283 |

+

"model.layers.24.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 284 |

+

"model.layers.24.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 285 |

+

"model.layers.24.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 286 |

+

"model.layers.24.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 287 |

+

"model.layers.24.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 288 |

+

"model.layers.24.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 289 |

+

"model.layers.24.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 290 |

+

"model.layers.24.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 291 |

+

"model.layers.24.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 292 |

+

"model.layers.24.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 293 |

+

"model.layers.24.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 294 |

+

"model.layers.24.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 295 |

+

"model.layers.24.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 296 |

+

"model.layers.25.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 297 |

+

"model.layers.25.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 298 |

+

"model.layers.25.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 299 |

+

"model.layers.25.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 300 |

+

"model.layers.25.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 301 |

+

"model.layers.25.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 302 |

+

"model.layers.25.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 303 |

+

"model.layers.25.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 304 |

+

"model.layers.25.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 305 |

+

"model.layers.25.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 306 |

+

"model.layers.25.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 307 |

+

"model.layers.25.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 308 |

+

"model.layers.25.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 309 |

+

"model.layers.25.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 310 |

+

"model.layers.25.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 311 |

+

"model.layers.25.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 312 |

+

"model.layers.26.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 313 |

+

"model.layers.26.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 314 |

+

"model.layers.26.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 315 |

+

"model.layers.26.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 316 |

+

"model.layers.26.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 317 |

+

"model.layers.26.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 318 |

+

"model.layers.26.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 319 |

+

"model.layers.26.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 320 |

+

"model.layers.26.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 321 |

+

"model.layers.26.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 322 |

+

"model.layers.26.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 323 |

+

"model.layers.26.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 324 |

+

"model.layers.26.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 325 |

+

"model.layers.26.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 326 |

+

"model.layers.26.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 327 |

+

"model.layers.26.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 328 |

+

"model.layers.27.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 329 |

+

"model.layers.27.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 330 |

+

"model.layers.27.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 331 |

+

"model.layers.27.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 332 |

+

"model.layers.27.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 333 |

+

"model.layers.27.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 334 |

+

"model.layers.27.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 335 |

+

"model.layers.27.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 336 |

+

"model.layers.27.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 337 |

+

"model.layers.27.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 338 |

+

"model.layers.27.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 339 |

+

"model.layers.27.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 340 |

+

"model.layers.27.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 341 |

+

"model.layers.27.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 342 |

+

"model.layers.27.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 343 |

+

"model.layers.27.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 344 |

+

"model.layers.28.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 345 |

+

"model.layers.28.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 346 |

+

"model.layers.28.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 347 |

+

"model.layers.28.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 348 |

+

"model.layers.28.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 349 |

+

"model.layers.28.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 350 |

+

"model.layers.28.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 351 |

+

"model.layers.28.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 352 |

+

"model.layers.28.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 353 |

+

"model.layers.28.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 354 |

+

"model.layers.28.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 355 |

+

"model.layers.28.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 356 |

+

"model.layers.28.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 357 |

+

"model.layers.28.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 358 |

+

"model.layers.28.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 359 |

+

"model.layers.28.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 360 |

+

"model.layers.29.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 361 |

+

"model.layers.29.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 362 |

+

"model.layers.29.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 363 |

+

"model.layers.29.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 364 |

+

"model.layers.29.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 365 |

+

"model.layers.29.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 366 |

+

"model.layers.29.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 367 |

+

"model.layers.29.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 368 |

+

"model.layers.29.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 369 |

+

"model.layers.29.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 370 |

+

"model.layers.29.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 371 |

+

"model.layers.29.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 372 |

+

"model.layers.29.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 373 |

+

"model.layers.29.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 374 |

+

"model.layers.29.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 375 |

+

"model.layers.29.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 376 |

+

"model.layers.3.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 377 |

+

"model.layers.3.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 378 |

+

"model.layers.3.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 379 |

+

"model.layers.3.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 380 |

+

"model.layers.3.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 381 |

+

"model.layers.3.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 382 |

+

"model.layers.3.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 383 |

+

"model.layers.3.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 384 |

+

"model.layers.3.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 385 |

+

"model.layers.3.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 386 |

+

"model.layers.3.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 387 |

+

"model.layers.3.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 388 |

+

"model.layers.3.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 389 |

+

"model.layers.3.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 390 |

+

"model.layers.3.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 391 |

+

"model.layers.3.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 392 |

+

"model.layers.30.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 393 |

+

"model.layers.30.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 394 |

+

"model.layers.30.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 395 |

+

"model.layers.30.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 396 |

+

"model.layers.30.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 397 |

+

"model.layers.30.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 398 |

+

"model.layers.30.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 399 |

+

"model.layers.30.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 400 |

+

"model.layers.30.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 401 |

+

"model.layers.30.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 402 |

+

"model.layers.30.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 403 |

+

"model.layers.30.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 404 |

+

"model.layers.30.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 405 |

+

"model.layers.30.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 406 |

+

"model.layers.30.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 407 |

+

"model.layers.30.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 408 |

+

"model.layers.31.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 409 |

+

"model.layers.31.mlp.down_proj.SCB": "model-00002-of-00002.safetensors",

|

| 410 |

+

"model.layers.31.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 411 |

+

"model.layers.31.mlp.gate_proj.SCB": "model-00002-of-00002.safetensors",

|

| 412 |

+

"model.layers.31.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 413 |

+

"model.layers.31.mlp.up_proj.SCB": "model-00002-of-00002.safetensors",

|

| 414 |

+

"model.layers.31.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 415 |

+

"model.layers.31.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 416 |

+

"model.layers.31.self_attn.k_proj.SCB": "model-00002-of-00002.safetensors",

|

| 417 |

+

"model.layers.31.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 418 |

+

"model.layers.31.self_attn.o_proj.SCB": "model-00002-of-00002.safetensors",

|

| 419 |

+

"model.layers.31.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 420 |

+

"model.layers.31.self_attn.q_proj.SCB": "model-00002-of-00002.safetensors",

|

| 421 |

+

"model.layers.31.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 422 |

+

"model.layers.31.self_attn.v_proj.SCB": "model-00002-of-00002.safetensors",

|

| 423 |

+

"model.layers.31.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 424 |

+

"model.layers.4.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 425 |

+

"model.layers.4.mlp.down_proj.SCB": "model-00001-of-00002.safetensors",

|

| 426 |

+

"model.layers.4.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 427 |

+

"model.layers.4.mlp.gate_proj.SCB": "model-00001-of-00002.safetensors",

|

| 428 |

+

"model.layers.4.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 429 |

+

"model.layers.4.mlp.up_proj.SCB": "model-00001-of-00002.safetensors",

|

| 430 |

+

"model.layers.4.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 431 |

+

"model.layers.4.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 432 |

+

"model.layers.4.self_attn.k_proj.SCB": "model-00001-of-00002.safetensors",

|

| 433 |

+

"model.layers.4.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 434 |

+

"model.layers.4.self_attn.o_proj.SCB": "model-00001-of-00002.safetensors",

|

| 435 |

+

"model.layers.4.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 436 |

+

"model.layers.4.self_attn.q_proj.SCB": "model-00001-of-00002.safetensors",

|

| 437 |

+

"model.layers.4.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 438 |

+

"model.layers.4.self_attn.v_proj.SCB": "model-00001-of-00002.safetensors",

|

| 439 |

+

"model.layers.4.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 440 |

+