library_name: diffusers

pipeline_tag: image-to-image

LDM3D-VR model

The LDM3D-VR model suite was proposed in "LDM3D-VR: Latent Diffusion Model for 3D VR" by Gabriela Ben Melech Stan, Diana Wofk, Estelle Aflalo, Shao-Yen Tseng, Zhipeng Cai, Michael Paulitsch, and Vasudev Lal.

LDM3D-VR was accepted to the [NeurIPS 2023 Workshop on Diffusion Models](https://neurips.cc/virtual/2023/workshop/66539).

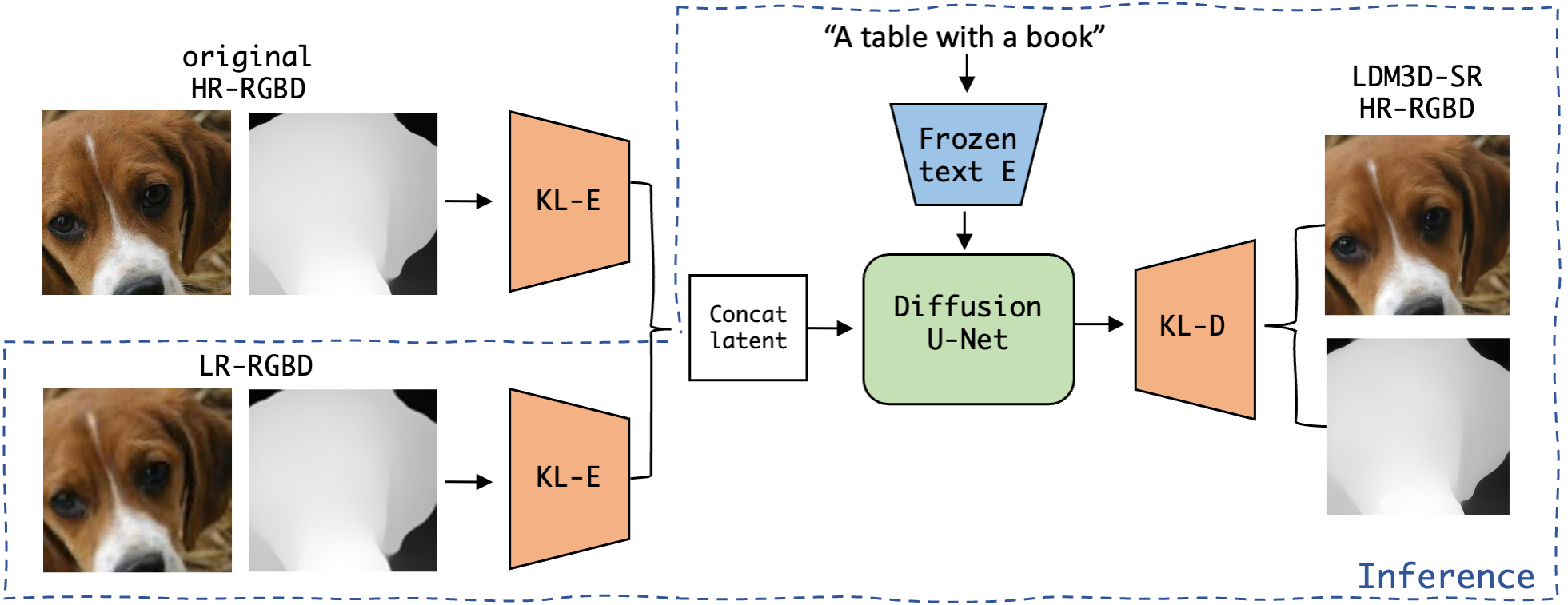

This checkpoint is the upscaler of the suite, LDM3D-SR (`Intel/ldm3d-sr`).

Model description

The abstract from the paper is the following: Latent diffusion models have proven to be state-of-the-art in the creation and manipulation of visual outputs. However, as far as we know, the generation of depth maps jointly with RGB is still limited. We introduce LDM3D-VR, a suite of diffusion models targeting virtual reality development that includes LDM3D-pano and LDM3D-SR. These models enable the generation of panoramic RGBD based on textual prompts and the upscaling of low-resolution inputs to high-resolution RGBD, respectively. Our models are fine-tuned from existing pretrained models on datasets containing panoramic/high-resolution RGB images, depth maps and captions. Both models are evaluated in comparison to existing related methods.

Examples

The following example uses the 🤗 Diffusers library to generate an RGB image and depth map with LDM3D, then upscale both with LDM3D-SR.

from PIL import Image

import os

import torch

from diffusers import StableDiffusionLDM3DPipeline, DiffusionPipeline

# Generate an RGB/depth output from LDM3D

pipe_ldm3d = StableDiffusionLDM3DPipeline.from_pretrained("Intel/ldm3d-4c")

pipe_ldm3d.to("cuda")

prompt = "A picture of some lemons on a table"

output = pipe_ldm3d(prompt)

rgb_image, depth_image = output.rgb, output.depth

rgb_image[0].save("lemons_ldm3d_rgb.jpg")

depth_image[0].save("lemons_ldm3d_depth.png")

# Upscale the previous output to a resolution of (1024, 1024)

pipe_ldm3d_upscale = DiffusionPipeline.from_pretrained("Intel/ldm3d-sr", custom_pipeline="pipeline_stable_diffusion_upscale_ldm3d")

pipe_ldm3d_upscale.to("cuda")

low_res_img = Image.open("lemons_ldm3d_rgb.jpg").convert("RGB")

low_res_depth = Image.open("lemons_ldm3d_depth.png").convert("L")

outputs = pipe_ldm3d_upscale(prompt="high quality high resolution uhd 4k image", rgb=low_res_img, depth=low_res_depth, num_inference_steps=50, target_res=[1024, 1024])

upscaled_rgb, upscaled_depth = outputs.rgb[0], outputs.depth[0]

upscaled_rgb.save("upscaled_lemons_rgb.png")

upscaled_depth.save("upscaled_lemons_depth.png")
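
Before calling the upscaler, it can help to confirm that the RGB and depth inputs have matching dimensions and to see how large the jump to `target_res` is. The sketch below is a minimal pre-flight check under stated assumptions: `check_rgbd_pair` and `upscale_factors` are hypothetical helper names, not part of Diffusers, and `target_res` is assumed to use the same axis order as the size tuple passed in (for a square target such as `[1024, 1024]` the order does not matter).

```python
from typing import Sequence, Tuple


def check_rgbd_pair(rgb_size: Sequence[int], depth_size: Sequence[int]) -> Tuple[int, int]:
    """Return the shared size of an RGB/depth pair, or raise if they differ.

    Hypothetical helper: sizes are 2-tuples such as PIL's `Image.size`.
    """
    rgb = tuple(rgb_size)
    depth = tuple(depth_size)
    if len(rgb) != 2 or len(depth) != 2:
        raise ValueError("sizes must have exactly two entries")
    if rgb != depth:
        raise ValueError(f"RGB size {rgb} does not match depth size {depth}")
    return rgb[0], rgb[1]


def upscale_factors(input_size: Sequence[int], target_res: Sequence[int]) -> Tuple[float, float]:
    """Return the per-axis scale factor from input_size to target_res.

    Assumes both arguments use the same axis order (an assumption of this sketch).
    """
    if len(input_size) != 2 or len(target_res) != 2:
        raise ValueError("sizes must have exactly two entries")
    if min(*input_size, *target_res) <= 0:
        raise ValueError("sizes must be positive")
    return target_res[0] / input_size[0], target_res[1] / input_size[1]


if __name__ == "__main__":
    # Illustrative values only; with the example above you would pass
    # low_res_img.size and low_res_depth.size instead.
    size = check_rgbd_pair((512, 512), (512, 512))
    print(upscale_factors(size, [1024, 1024]))
```

With the images loaded in the example, `check_rgbd_pair(low_res_img.size, low_res_depth.size)` raises a `ValueError` early if the two files were saved at different resolutions, instead of failing inside the pipeline.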

Results

BibTeX entry and citation info

@misc{stan2023ldm3dvr, title={LDM3D-VR: Latent Diffusion Model for 3D VR}, author={Gabriela Ben Melech Stan and Diana Wofk and Estelle Aflalo and Shao-Yen Tseng and Zhipeng Cai and Michael Paulitsch and Vasudev Lal}, year={2023}, eprint={2311.03226}, archivePrefix={arXiv}, primaryClass={cs.CV} }