---
license: cc-by-nc-4.0
language:
- pl
tags:
- llama
- qlora
---

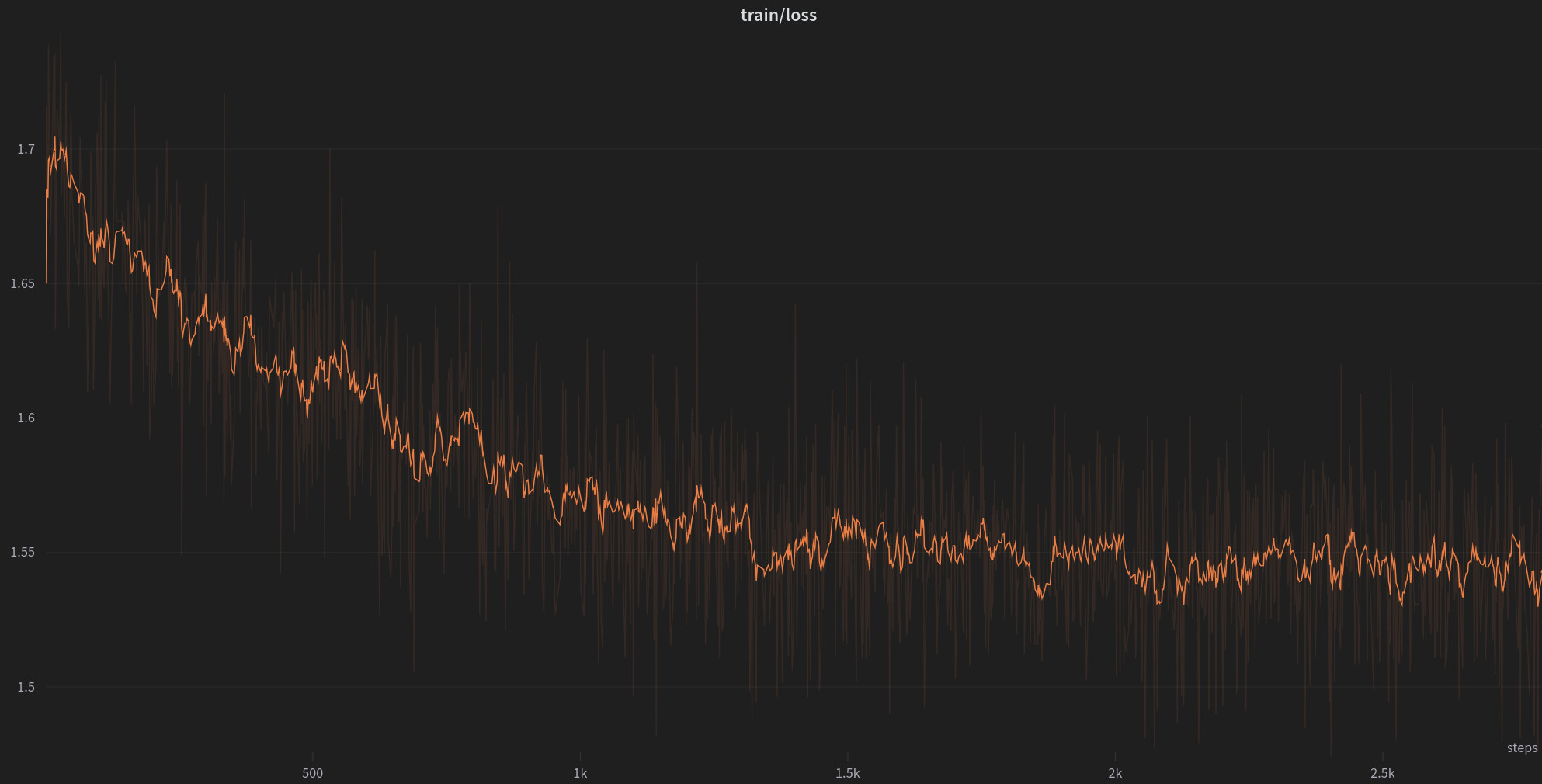

This repo contains a QLoRA adapter for Llama-2-7b, trained on 1B tokens of Polish-only text.

The training took 20 days on a single RTX 4090 with the following hyperparameters:

- context length: 4096
- batch_size: 128
- learning_rate: 0.0002, cosine schedule with warmup
- lora_r: 64
- lora_alpha: 16
- lora_modules: all
- lora_dropout: 0.0
- weight_decay: 0.1
- max_grad_norm: 0.3
- quantization: 4-bit nf4 with double_quant
- optimizer: paged_adamw_32bit
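
The arithmetic implied by these settings can be sketched in a few lines of Python. This is a minimal sketch, not the training script used for this adapter. It assumes that every sequence is packed to the full 4096-token context and that `batch_size` counts sequences per optimizer step. The warmup length (`WARMUP_STEPS`) is a hypothetical value because this card does not state it. The schedule function is a generic linear-warmup, cosine-decay curve and is not taken from the original training code.

```python
import math

# Values taken from the hyperparameter list above.
CONTEXT_LENGTH = 4096
BATCH_SIZE = 128
PEAK_LR = 2e-4
LORA_R = 64
LORA_ALPHA = 16
TOTAL_TOKENS = 1_000_000_000
TRAINING_DAYS = 20

# Assumption: every sequence is packed to the full context length,
# and BATCH_SIZE counts sequences per optimizer step.
TOKENS_PER_STEP = BATCH_SIZE * CONTEXT_LENGTH
TOTAL_STEPS = math.ceil(TOTAL_TOKENS / TOKENS_PER_STEP)

# Hypothetical: the warmup length is not stated in this card.
WARMUP_STEPS = 100


def lora_scaling(r: int, alpha: int) -> float:
    """LoRA scaling factor alpha / r applied to the low-rank update."""
    return alpha / r


def cosine_with_warmup(step: int, total_steps: int, warmup_steps: int, peak_lr: float) -> float:
    """Linear warmup from 0 to peak_lr, then cosine decay down to 0."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    seconds = TRAINING_DAYS * 24 * 3600
    print(f"tokens per optimizer step: {TOKENS_PER_STEP:,}")
    print(f"approx. optimizer steps:   {TOTAL_STEPS:,}")
    print(f"approx. throughput:        {TOTAL_TOKENS / seconds:,.0f} tokens/s")
    print(f"LoRA scaling alpha/r:      {lora_scaling(LORA_R, LORA_ALPHA)}")
    # Sample the schedule at a few points: start, mid-warmup, peak, midway, end.
    for s in (0, WARMUP_STEPS // 2, WARMUP_STEPS, TOTAL_STEPS // 2, TOTAL_STEPS):
        lr = cosine_with_warmup(s, TOTAL_STEPS, WARMUP_STEPS, PEAK_LR)
        print(f"lr at step {s:>5}: {lr:.2e}")
```

Under these assumptions, each optimizer step covers 524,288 tokens, so 1B tokens corresponds to roughly 1,908 steps, and 20 days of training works out to about 579 tokens/s. With lora_r = 64 and lora_alpha = 16, the LoRA update is scaled by alpha/r = 0.25.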

With this adapter, the model writes Polish more accurately than the vanilla Llama-2-7b.