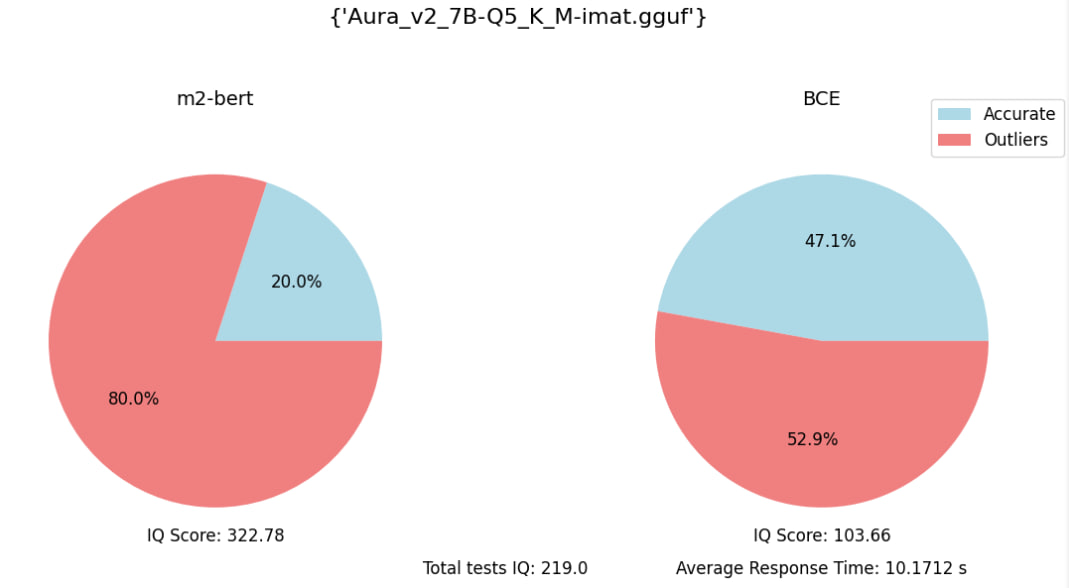

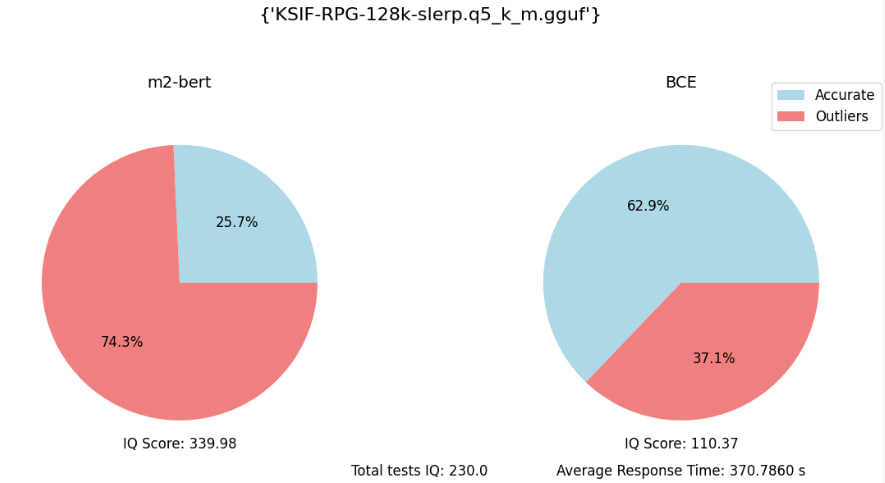

Russian speaking 7B models

Collection

These are some of my 7B models that speak and understand Russian well. Verified on some datasets and my own tests. A link to the GitHub repo will be added soon...🪬

7 items
