---
license: mit
license_link: https://huggingface.co/microsoft/Florence-2-base-ft/resolve/main/LICENSE
pipeline_tag: image-text-to-text
tags:
- vision
- ocr
- segmentation
datasets:
- yifeihu/TF-ID-arxiv-papers
---

# TF-ID: Table/Figure IDentifier for academic papers

## Model Summary

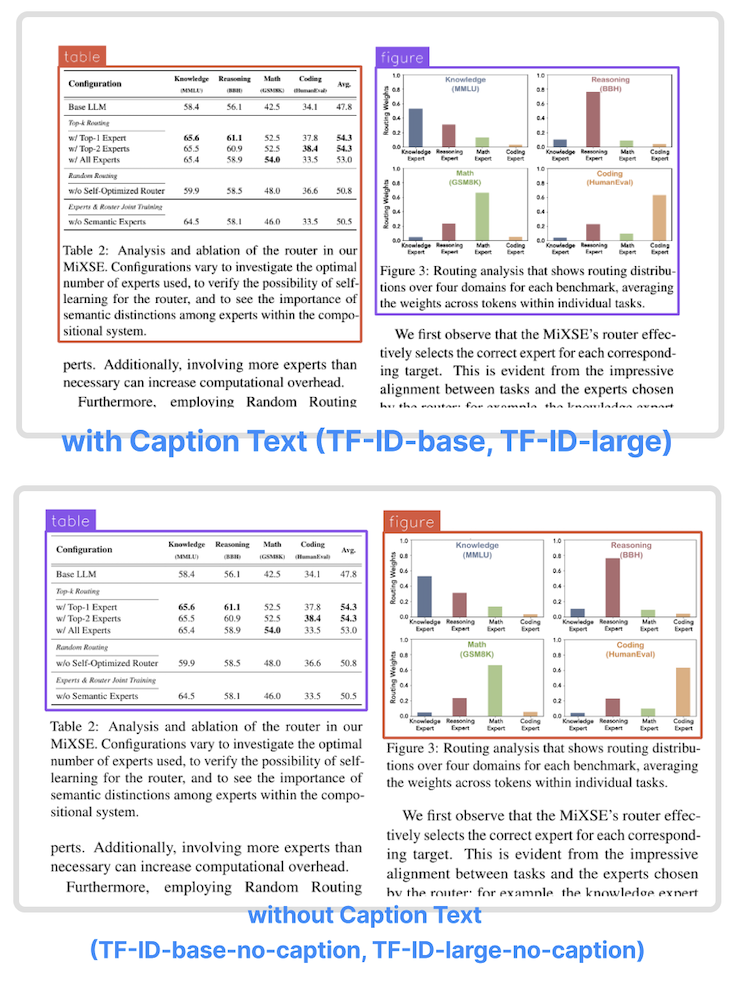

TF-ID (Table/Figure IDentifier) is a family of object detection models, created by [Yifei Hu](https://x.com/hu_yifei), that are finetuned to extract tables and figures from academic papers. They come in four versions:

| Model | Model size | Model Description |
| ------- | ------------- | ------------- |
| TF-ID-base[[HF]](https://huggingface.co/yifeihu/TF-ID-base) | 0.23B | Extract tables/figures and their caption text |
| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) (Recommended) | 0.77B | Extract tables/figures and their caption text |
| TF-ID-base-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-base-no-caption) | 0.23B | Extract tables/figures without caption text |
| TF-ID-large-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-large-no-caption) (Recommended) | 0.77B | Extract tables/figures without caption text |

All TF-ID models are finetuned from [microsoft/Florence-2](https://huggingface.co/microsoft/Florence-2-large-ft) checkpoints.

- The models were finetuned with papers from Hugging Face Daily Papers. All bounding boxes are manually annotated and checked by humans.

- TF-ID models take an image of a single paper page as input and return bounding boxes for all tables and figures on that page.

- TF-ID-base and TF-ID-large draw bounding boxes around tables/figures and their caption text.

- TF-ID-base-no-caption and TF-ID-large-no-caption draw bounding boxes around tables/figures without their caption text.

**Large models are always recommended!**

Object detection results format:

`{'<OD>': {'bboxes': [[x1, y1, x2, y2], ...], 'labels': ['label1', 'label2', ...]}}`
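As a minimal sketch of how this structure can be consumed, the snippet below pairs each label with its box. The `sample` dictionary holds made-up placeholder values in the documented format, not real model output, and `iter_detections` is a hypothetical helper name.

```python
def iter_detections(parsed_answer, task="<OD>"):
    """Yield (label, (x1, y1, x2, y2)) pairs from a parsed detection result."""
    result = parsed_answer[task]
    # 'bboxes' and 'labels' are parallel lists: entry i of one describes entry i of the other.
    for label, bbox in zip(result["labels"], result["bboxes"]):
        yield label, tuple(bbox)


# Made-up sample in the documented format (placeholder values, not real model output).
sample = {
    "<OD>": {
        "bboxes": [[10.0, 20.0, 110.0, 220.0], [30.5, 240.0, 400.0, 380.5]],
        "labels": ["label1", "label2"],
    }
}

detections = list(iter_detections(sample))
for label, (x1, y1, x2, y2) in detections:
    print(f"{label}: ({x1}, {y1}) -> ({x2}, {y2})")
```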

## Training Code and Dataset

- Dataset: [yifeihu/TF-ID-arxiv-papers](https://huggingface.co/datasets/yifeihu/TF-ID-arxiv-papers)

- Code: [github.com/ai8hyf/TF-ID](https://github.com/ai8hyf/TF-ID)

## Benchmarks

We tested the models on paper pages outside the training dataset. The test papers are a subset of Hugging Face Daily Papers.

An output counts as correct when the model draws correct bounding boxes for every table/figure on the given page.

| Model | Total Images | Correct Output | Success Rate |
|---------------------------------------------------------------|--------------|----------------|--------------|
| TF-ID-base[[HF]](https://huggingface.co/yifeihu/TF-ID-base) | 258 | 251 | 97.29% |
| TF-ID-large[[HF]](https://huggingface.co/yifeihu/TF-ID-large) | 258 | 253 | 98.06% |

| Model | Total Images | Correct Output | Success Rate |
|---------------------------------------------------------------|--------------|----------------|--------------|
| TF-ID-base-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-base-no-caption) | 261 | 253 | 96.93% |
| TF-ID-large-no-caption[[HF]](https://huggingface.co/yifeihu/TF-ID-large-no-caption) | 261 | 254 | 97.32% |
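The success rate in both tables is the number of correct outputs divided by the number of test images. A quick check of that arithmetic, using only the counts reported in the tables (`success_rate` is a hypothetical helper name):

```python
# Counts copied from the benchmark tables: (correct outputs, total images).
results = {
    "TF-ID-base": (251, 258),
    "TF-ID-large": (253, 258),
    "TF-ID-base-no-caption": (253, 261),
    "TF-ID-large-no-caption": (254, 261),
}


def success_rate(correct, total):
    """Return the success rate as a percentage rounded to two decimals."""
    return round(100 * correct / total, 2)


rates = {name: success_rate(correct, total) for name, (correct, total) in results.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.2f}%")
```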

Depending on the use case, some "incorrect" outputs may still be perfectly usable. For example, the model may draw two bounding boxes for one figure that has two child components.
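If split boxes like these are a problem for a given pipeline, one possible post-processing step is to merge same-label boxes that overlap or sit close together. The sketch below is an assumption on our part, not something TF-ID or Florence-2 provides: the helper names, the `gap` threshold, and the sample boxes are all hypothetical, and a sensible threshold depends on page resolution.

```python
def union_box(a, b):
    """Smallest box [x1, y1, x2, y2] that contains both a and b."""
    return [min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3])]


def boxes_close(a, b, gap=0.0):
    """True if boxes a and b overlap or lie within `gap` pixels of each other."""
    return (a[0] - gap <= b[2] and b[0] - gap <= a[2]
            and a[1] - gap <= b[3] and b[1] - gap <= a[3])


def merge_close_boxes(bboxes, labels, gap=10.0):
    """Greedily merge same-label boxes that overlap or nearly touch."""
    merged = []  # list of (label, box) pairs
    for label, box in zip(labels, bboxes):
        box = list(box)
        changed = True
        # Keep absorbing neighbours until the growing box touches nothing else.
        while changed:
            changed = False
            for i, (other_label, other_box) in enumerate(merged):
                if other_label == label and boxes_close(box, other_box, gap):
                    box = union_box(box, other_box)
                    del merged[i]
                    changed = True
                    break
        merged.append((label, box))
    return merged


# Made-up example: two halves of one figure 5 px apart, plus an unrelated box.
sample_boxes = [[0, 0, 100, 100], [105, 0, 200, 100], [0, 300, 200, 400]]
sample_labels = ["label1", "label1", "label2"]
merged = merge_close_boxes(sample_boxes, sample_labels, gap=10.0)
print(merged)
```

Merging is lossy: two genuinely separate figures placed side by side would also be joined, so a small `gap` is the safer default.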

## How to Get Started with the Model

Use the code below to get started with the model.

```python
import requests
from PIL import Image
from transformers import AutoProcessor, AutoModelForCausalLM

# trust_remote_code=True lets transformers load the custom Florence-2 code from the Hub.
model = AutoModelForCausalLM.from_pretrained("yifeihu/TF-ID-base", trust_remote_code=True)
processor = AutoProcessor.from_pretrained("yifeihu/TF-ID-base", trust_remote_code=True)

# "<OD>" is the object detection task prompt.
prompt = "<OD>"

# Sample paper page hosted in the model repository.
url = "https://huggingface.co/yifeihu/TF-ID-base/resolve/main/arxiv_2305_10853_5.png?download=true"
image = Image.open(requests.get(url, stream=True).raw)

inputs = processor(text=prompt, images=image, return_tensors="pt")

generated_ids = model.generate(
    input_ids=inputs["input_ids"],
    pixel_values=inputs["pixel_values"],
    max_new_tokens=1024,
    do_sample=False,
    num_beams=3
)

generated_text = processor.batch_decode(generated_ids, skip_special_tokens=False)[0]

# Parse the generated text into bounding boxes and labels for the given image size.
parsed_answer = processor.post_process_generation(generated_text, task="<OD>", image_size=(image.width, image.height))

print(parsed_answer)
```
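A common next step is cropping each detected table or figure out of the page. The sketch below only computes the crop rectangles, so it runs without a model or an image library. `to_crop_boxes`, its `padding` parameter, and the sample values are hypothetical and not part of TF-ID.

```python
import math


def to_crop_boxes(parsed_answer, image_size, padding=0, task="<OD>"):
    """Convert detected boxes to integer (left, upper, right, lower) tuples.

    Each box is rounded outward, expanded by `padding` pixels,
    and clamped to the image bounds.
    """
    width, height = image_size
    result = parsed_answer[task]
    crops = []
    for label, (x1, y1, x2, y2) in zip(result["labels"], result["bboxes"]):
        left = max(0, math.floor(x1) - padding)
        upper = max(0, math.floor(y1) - padding)
        right = min(width, math.ceil(x2) + padding)
        lower = min(height, math.ceil(y2) + padding)
        crops.append((label, (left, upper, right, lower)))
    return crops


# Made-up detection result for an 800x1000 page (placeholder values).
sample = {
    "<OD>": {
        "bboxes": [[10.4, 20.6, 110.2, 220.9], [700.0, 900.0, 805.0, 1003.0]],
        "labels": ["label1", "label2"],
    }
}

crops = to_crop_boxes(sample, image_size=(800, 1000), padding=5)
print(crops)
```

With the variables from the quick-start code, the same helper could be called as `to_crop_boxes(parsed_answer, image.size)`, and each resulting tuple passed to Pillow's `image.crop(box)`.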

To visualize the results, see [this tutorial notebook](https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/how-to-finetune-florence-2-on-detection-dataset.ipynb) for more details.

## BibTeX and citation info

```
@misc{TF-ID,
  author = {Yifei Hu},
  title = {TF-ID: Table/Figure IDentifier for academic papers},
  year = {2024},
  publisher = {GitHub},
  journal = {GitHub repository},
  howpublished = {\url{https://github.com/ai8hyf/TF-ID}},
}
```