Mohamed Rashad committed · Commit 92871c6 · 1 Parent(s): 0a285ea

chore: Update app.py with GPU support for text extraction and image processing functionality

- app.py +134 -67

- book_page.jpeg → book_page1.jpeg +0 -0

- book_page2.jpeg +0 -0

- book_page3.jpeg +0 -0

- book_page4.jpeg +0 -0

- book_page5.jpeg +0 -0

app.py

CHANGED

@@ -1,98 +1,165 @@

Old version:

    -from transformers import
     import gradio as gr
     import torch
    -from PIL import Image
     from pathlib import Path
     from pdf2image import convert_from_path
     import spaces

    -# Load the model and processor
    -processor = NougatProcessor.from_pretrained("MohamedRashad/arabic-small-nougat")
    -model = VisionEncoderDecoderModel.from_pretrained("MohamedRashad/arabic-small-nougat")
    -device = "cuda" if torch.cuda.is_available() else "cpu"
    -model.to(device)
    -
    -print(f"Using {device} device")
    -context_length = 2048

     @spaces.GPU
    -def extract_text_from_image(image):
    -    ""
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -    # prepare PDF image for the model
    -    pixel_values = processor(image, return_tensors="pt").pixel_values
    -
    -    # generate transcription
    -    outputs = model.generate(
    -        pixel_values.to(device),
    -        min_length=1,
    -        max_new_tokens=context_length,
    -        bad_words_ids=[[processor.tokenizer.unk_token_id]],
         )
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
    -
         images = convert_from_path(pdf_path)
    -    texts = []
    -    for image in progress.tqdm(images):
    -        extracted_text = extract_text_from_image(image)
    -        texts.append(extracted_text)

    -

    -
    -

    -
    -

    -
     """

    -example_images =

    -with gr.Blocks(title="Arabic
    -    gr.HTML(
         gr.Markdown(model_description)

         with gr.Tab("Extract Text from Image"):
             with gr.Row():
                 with gr.Column():
                     input_image = gr.Image(label="Input Image", type="pil")
                     image_submit_button = gr.Button(value="Submit", variant="primary")
    -            output = gr.Markdown(label="Output Markdown", rtl=True)
    -        image_submit_button.click(
    -
    -
         with gr.Tab("Extract Text from PDF"):
             with gr.Row():
                 with gr.Column():
                     pdf = gr.File(label="Input PDF", type="filepath")
                     pdf_submit_button = gr.Button(value="Submit", variant="primary")
    -            output = gr.Markdown(label="Output Markdown", rtl=True)
    -        pdf_submit_button.click(

     demo.queue().launch(share=False)

New version:

    +from transformers import (
    +    NougatProcessor,
    +    VisionEncoderDecoderModel,
    +    TextIteratorStreamer,
    +)
     import gradio as gr
     import torch
     from pathlib import Path
     from pdf2image import convert_from_path
     import spaces
    +from threading import Thread
    +
    +models_supported = {
    +    "arabic-small-nougat": [
    +        NougatProcessor.from_pretrained("MohamedRashad/arabic-small-nougat"),
    +        VisionEncoderDecoderModel.from_pretrained("MohamedRashad/arabic-small-nougat"),
    +    ],
    +    "arabic-base-nougat": [
    +        NougatProcessor.from_pretrained("MohamedRashad/arabic-base-nougat"),
    +        VisionEncoderDecoderModel.from_pretrained(
    +            "MohamedRashad/arabic-base-nougat",
    +            torch_dtype=torch.bfloat16,
    +            attn_implementation={"decoder": "flash_attention_2", "encoder": "eager"},
    +        ),
    +    ],
    +    "arabic-large-nougat": [
    +        NougatProcessor.from_pretrained("MohamedRashad/arabic-large-nougat"),
    +        VisionEncoderDecoderModel.from_pretrained(
    +            "MohamedRashad/arabic-large-nougat",
    +            torch_dtype=torch.bfloat16,
    +            attn_implementation={"decoder": "flash_attention_2", "encoder": "eager"},
    +        ),
    +    ],
    +}

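The `models_supported` registry in this commit instantiates all three processor/model pairs at import time. A minimal sketch of the same name-to-pair lookup with lazy, cached loading follows. It is an illustration under stated assumptions, not the Space's code: `MODEL_REPOS`, `load_pair`, and `get_pair` are hypothetical names, and the loader returns placeholder strings so the sketch runs without downloading a checkpoint. In a real app it would call `NougatProcessor.from_pretrained` and `VisionEncoderDecoderModel.from_pretrained`.

```python
from functools import lru_cache

# Repository ids as they appear in the commit.
MODEL_REPOS = {
    "arabic-small-nougat": "MohamedRashad/arabic-small-nougat",
    "arabic-base-nougat": "MohamedRashad/arabic-base-nougat",
    "arabic-large-nougat": "MohamedRashad/arabic-large-nougat",
}

load_calls = []  # records which repos were actually loaded (illustration only)


def load_pair(repo_id):
    """Hypothetical loader standing in for the two from_pretrained calls."""
    load_calls.append(repo_id)
    return (f"processor:{repo_id}", f"model:{repo_id}")


@lru_cache(maxsize=None)
def get_pair(model_name):
    """Return the (processor, model) pair for a name, loading it on first use."""
    if model_name not in MODEL_REPOS:
        raise KeyError(f"Unknown model: {model_name!r}")
    return load_pair(MODEL_REPOS[model_name])


processor, model = get_pair("arabic-small-nougat")
get_pair("arabic-small-nougat")  # the second call is served from the cache
```

Only the requested checkpoint is loaded, and repeated requests reuse it. Whether that trade-off suits a given Space depends on its startup and memory constraints.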

     @spaces.GPU
    +def extract_text_from_image(image, model_name):
    +    print(f"Extracting text from image using model: {model_name}")
    +    processor, model = models_supported[model_name]
    +    context_length = model.decoder.config.max_position_embeddings
    +    torch_dtype = model.dtype
    +    device = "cuda" if torch.cuda.is_available() else "cpu"
    +    model.to(device)
    +
    +    pixel_values = (
    +        processor(image, return_tensors="pt").pixel_values.to(torch_dtype).to(device)
         )
    +    streamer = TextIteratorStreamer(processor.tokenizer, skip_special_tokens=True)
    +
    +    # Start generation in a separate thread
    +    generation_kwargs = {
    +        "pixel_values": pixel_values,
    +        "min_length": 1,
    +        "max_new_tokens": context_length,
    +        "streamer": streamer,
    +    }
    +
    +    thread = Thread(target=model.generate, kwargs=generation_kwargs)
    +    thread.start()
    +
    +    # Yield tokens as they become available
    +    output = ""
    +    for token in streamer:
    +        output += token
    +        yield output
    +
    +    thread.join()
    +
    +
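The new `extract_text_from_image` runs `model.generate` in a background thread and iterates over a `TextIteratorStreamer` in the foreground, yielding the accumulated text after each chunk. The same producer/consumer shape can be sketched with the standard library alone. In this sketch `fake_generate` and `stream_text` are hypothetical stand-ins for `model.generate` and the streamer loop, and a `queue.Queue` with a sentinel plays the streamer's role.

```python
import queue
from threading import Thread

_DONE = object()  # sentinel marking the end of generation


def fake_generate(chunks, out_queue):
    """Stand-in for model.generate: pushes text chunks, then the sentinel."""
    for chunk in chunks:
        out_queue.put(chunk)
    out_queue.put(_DONE)


def stream_text(chunks):
    """Yield the accumulated text each time the producer emits a chunk."""
    out_queue = queue.Queue()
    thread = Thread(target=fake_generate, args=(chunks, out_queue))
    thread.start()

    output = ""
    while True:
        item = out_queue.get()  # blocks until the producer has something
        if item is _DONE:
            break
        output += item
        yield output

    thread.join()


partial_outputs = list(stream_text(["# ", "Title", "\n", "Body"]))
```

Each yielded value is the whole text so far, not just the newest chunk, which is why an output component bound to such a generator shows a growing transcription.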

    +@spaces.GPU
    +def extract_text_from_pdf(pdf_path, model_name):
    +    processor, model = models_supported[model_name]
    +    context_length = model.decoder.config.max_position_embeddings
    +    torch_dtype = model.dtype
    +    device = "cuda" if torch.cuda.is_available() else "cpu"
    +    model.to(device)
    +
    +    streamer = TextIteratorStreamer(processor.tokenizer, skip_special_tokens=True)
    +    print(f"Extracting text from PDF: {pdf_path}")
         images = convert_from_path(pdf_path)

    +    pdf_output = ""
    +    for image in images:
    +        pixel_values = (
    +            processor(image, return_tensors="pt")
    +            .pixel_values.to(torch_dtype)
    +            .to(device)
    +        )

    +        # Start generation in a separate thread
    +        generation_kwargs = {
    +            "pixel_values": pixel_values,
    +            "min_length": 1,
    +            "max_new_tokens": context_length,
    +            "streamer": streamer,
    +        }

    +        thread = Thread(target=model.generate, kwargs=generation_kwargs)
    +        thread.start()

    +        # Yield tokens as they become available
    +        for token in streamer:
    +            pdf_output += token
    +            yield pdf_output
    +
    +        thread.join()
    +        pdf_output += "\n\n"
    +        yield pdf_output
    +
    +
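In the PDF path, one running string collects the text of every page, and a blank line is appended once a page finishes. A minimal sketch of that accumulation follows, with the model replaced by pre-chunked pages. `stream_pages` and its input format are hypothetical, chosen only to make the loop runnable on its own.

```python
def stream_pages(pages):
    """Yield the cumulative document text while pages are processed in order.

    `pages` is a list of pages, each a list of text chunks; it stands in for
    the per-page token stream produced by the model.
    """
    document = ""
    for page_chunks in pages:
        for chunk in page_chunks:
            document += chunk
            yield document
        # Separate consecutive pages with a blank line, as the commit does.
        document += "\n\n"
        yield document


snapshots = list(stream_pages([["Page ", "one"], ["Page ", "two"]]))
final_text = snapshots[-1]
```

The final snapshot holds every page in order, each followed by a blank line, so earlier pages stay visible while later ones are still being transcribed.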

    +model_description = """This is the official demo for the Arabic Nougat models. It is an end-to-end Markdown Extraction model that extracts text from images or PDFs and write them in Markdown.
    +
    +There are three models available:
    +- [arabic-small-nougat](https://huggingface.co/MohamedRashad/arabic-small-nougat): A small model that is faster but less accurate (a finetune from [facebook/nougat-small](https://huggingface.co/facebook/nougat-small)).
    +- [arabic-base-nougat](https://huggingface.co/MohamedRashad/arabic-base-nougat): A base model that is more accurate but slower (a finetune from [facebook/nougat-base](https://huggingface.co/facebook/nougat-base)).
    +- [arabic-large-nougat](https://huggingface.co/MohamedRashad/arabic-large-nougat): The largest of the three (Made from scratch using [riotu-lab/Aranizer-PBE-86k](https://huggingface.co/riotu-lab/Aranizer-PBE-86k) tokenizer and a larger transformer decoder model).
    +
    +**Disclaimer**: These models hallucinate text and are not perfect. They are trained on a mix of synthetic and real data and may not work well on all types of images.
     """

    +example_images = list(Path(__file__).parent.glob("*.jpeg"))

    +with gr.Blocks(title="Arabic Nougat") as demo:
    +    gr.HTML(
    +        "<h1 style='text-align: center'>Arabic End-to-End Structured OCR for textbooks</h1>"
    +    )
         gr.Markdown(model_description)

         with gr.Tab("Extract Text from Image"):
             with gr.Row():
                 with gr.Column():
                     input_image = gr.Image(label="Input Image", type="pil")
    +                model_dropdown = gr.Dropdown(
    +                    label="Model", choices=list(models_supported.keys()), value=None
    +                )
                     image_submit_button = gr.Button(value="Submit", variant="primary")
    +            output = gr.Markdown(label="Output Markdown", rtl=True)
    +        image_submit_button.click(
    +            extract_text_from_image,
    +            inputs=[input_image, model_dropdown],
    +            outputs=output,
    +        )
    +        gr.Examples(
    +            example_images,
    +            [input_image],
    +            output,
    +            extract_text_from_image,
    +            cache_examples=False,
    +        )
    +
         with gr.Tab("Extract Text from PDF"):
             with gr.Row():
                 with gr.Column():
                     pdf = gr.File(label="Input PDF", type="filepath")
    +                model_dropdown = gr.Dropdown(
    +                    label="Model", choices=list(models_supported.keys()), value=None
    +                )
                     pdf_submit_button = gr.Button(value="Submit", variant="primary")
    +            output = gr.Markdown(label="Output Markdown", rtl=True)
    +        pdf_submit_button.click(
    +            extract_text_from_pdf, inputs=[pdf, model_dropdown], outputs=output
    +        )

     demo.queue().launch(share=False)
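Both dropdowns in the new version start with `value=None`. Pressing Submit before choosing a model would therefore be expected to pass `None` as `model_name`, and the lookup `models_supported[model_name]` would then raise a `KeyError`. One possible guard is sketched below. `resolve_model_name` and the fallback to a default are assumptions for illustration and are not part of the commit.

```python
def resolve_model_name(model_name, supported, default=None):
    """Return a usable model name or raise a readable error.

    `supported` is any mapping keyed by model name (hypothetical helper).
    """
    if model_name in supported:
        return model_name
    if default is not None and default in supported:
        return default
    choices = ", ".join(sorted(supported))
    raise ValueError(f"Please select a model first. Available: {choices}")


# Placeholder registry: only the keys matter for this sketch.
supported = {"arabic-small-nougat": None, "arabic-base-nougat": None}
chosen = resolve_model_name(None, supported, default="arabic-small-nougat")
```

In a Gradio handler the same condition could raise `gr.Error` instead of `ValueError`, so that the message is shown in the interface.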

book_page.jpeg → book_page1.jpeg

RENAMED

|

File without changes

|

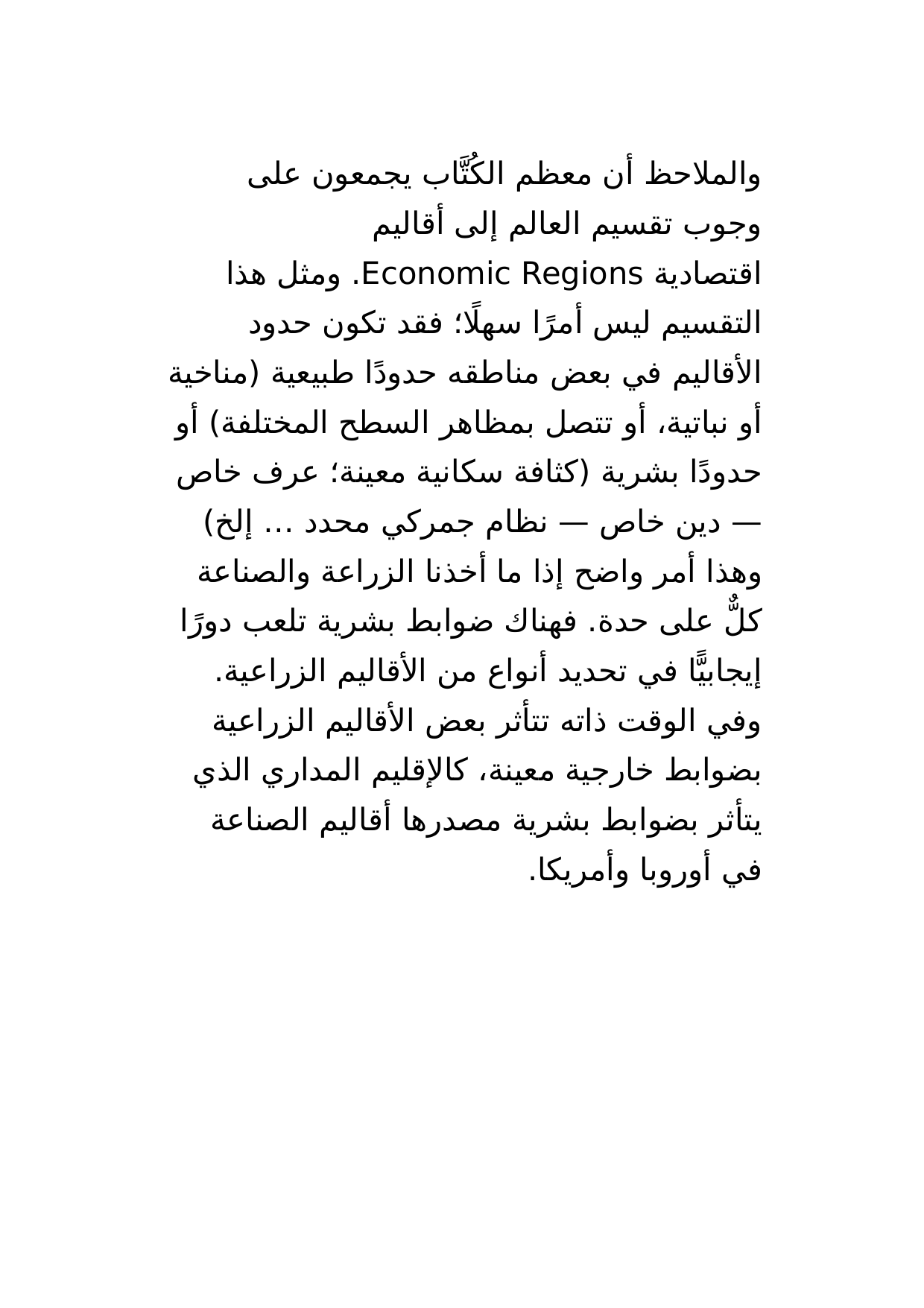

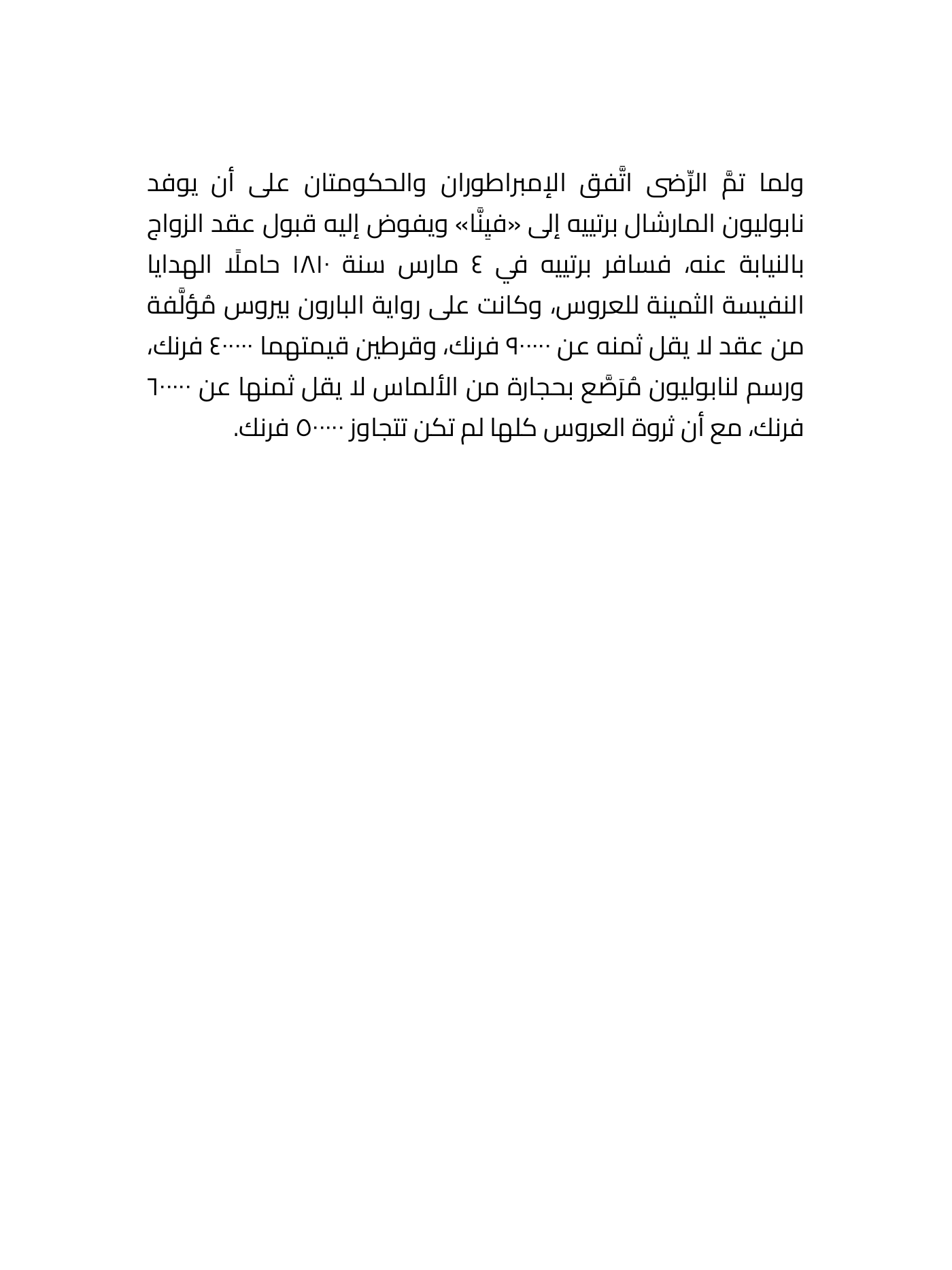

book_page2.jpeg

ADDED

|

book_page3.jpeg

ADDED

|

book_page4.jpeg

ADDED

|

book_page5.jpeg

ADDED

|