Spaces:

Running

Running

Upload 26 files

Browse files- .gitattributes +1 -0

- README.md +136 -293

- WizardCoder/CODE_LICENSE +201 -0

- WizardCoder/DATA_LICENSE +407 -0

- WizardCoder/MODEL_WEIGHTS_LICENSE +111 -0

- WizardCoder/README.md +364 -0

- WizardCoder/data/humaneval.59.8.gen.zip +3 -0

- WizardCoder/data/mbpp.test.zip +3 -0

- WizardCoder/imgs/compare_sota.png +0 -0

- WizardCoder/imgs/pass1.png +0 -0

- WizardCoder/src/humaneval_gen.py +161 -0

- WizardCoder/src/humaneval_gen_vllm.py +114 -0

- WizardCoder/src/inference_wizardcoder.py +121 -0

- WizardCoder/src/mbpp_gen.py +197 -0

- WizardCoder/src/process_humaneval.py +69 -0

- WizardCoder/src/process_mbpp.py +73 -0

- WizardCoder/src/train_wizardcoder.py +248 -0

- training/data/alpaca_data.json +3 -0

- training/requirements.txt +10 -0

- training/src/configs/deepspeed_config.json +46 -0

- training/src/configs/hostfile +2 -0

- training/src/conversation.py +478 -0

- training/src/environment.yml +203 -0

- training/src/generate.py +145 -0

- training/src/train.py +246 -0

- training/src/train_freeform_multiturn.py +301 -0

- training/src/utils.py +173 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

training/data/alpaca_data.json filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,359 +1,201 @@

|

|

| 1 |

-

|

| 2 |

-

license: apache-2.0

|

| 3 |

-

title: WizardLM

|

| 4 |

-

sdk: streamlit

|

| 5 |

-

emoji: 🏃

|

| 6 |

-

colorFrom: red

|

| 7 |

-

colorTo: purple

|

| 8 |

-

sdk_version: 1.41.1

|

| 9 |

-

---

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

# WizardCoder: Empowering Code Large Language Models with Evol-Instruct

|

| 13 |

-

|

| 14 |

-

[](CODE_LICENSE)

|

| 15 |

-

[](DATA_LICENSE)

|

| 16 |

-

<!-- [](MODEL_WEIGHTS_LICENSE) -->

|

| 17 |

-

[](https://www.python.org/downloads/release/python-390/)

|

| 18 |

-

|

| 19 |

-

To develop our WizardCoder model, we begin by adapting the Evol-Instruct method specifically for coding tasks. This involves tailoring the prompt to the domain of code-related instructions. Subsequently, we fine-tune the Code LLMs, StarCoder or Code LLama, utilizing the newly created instruction-following training set.

|

| 20 |

-

|

| 21 |

-

## News

|

| 22 |

-

|

| 23 |

-

- 🔥🔥🔥[2023/08/26] We released **WizardCoder-Python-34B-V1.0** , which achieves the **73.2 pass@1** and surpasses **GPT4 (2023/03/15)**, **ChatGPT-3.5**, and **Claude2** on the [HumanEval Benchmarks](https://github.com/openai/human-eval).

|

| 24 |

-

- [2023/06/16] We released **WizardCoder-15B-V1.0** , which achieves the **57.3 pass@1** and surpasses **Claude-Plus (+6.8)**, **Bard (+15.3)** and **InstructCodeT5+ (+22.3)** on the [HumanEval Benchmarks](https://github.com/openai/human-eval).

|

| 25 |

-

|

| 26 |

-

❗Note: There are two HumanEval results of GPT4 and ChatGPT-3.5. The 67.0 and 48.1 are reported by the official GPT4 Report (2023/03/15) of [OpenAI](https://arxiv.org/abs/2303.08774). The 82.0 and 72.5 are tested by ourselves with the latest API (2023/08/26).

|

| 27 |

-

|

| 28 |

-

|

| 29 |

-

| Model | Checkpoint | Paper | HumanEval | MBPP | Demo | License |

|

| 30 |

-

| ----- |------| ---- |------|-------| ----- | ----- |

|

| 31 |

-

| WizardCoder-Python-34B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-Python-34B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 73.2 | 61.2 | [Demo](http://47.103.63.15:50085/) | <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama2</a> |

|

| 32 |

-

| WizardCoder-15B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-15B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 59.8 |50.6 | -- | <a href="https://huggingface.co/spaces/bigcode/bigcode-model-license-agreement" target="_blank">OpenRAIL-M</a> |

|

| 33 |

|

| 34 |

-

- 📣 Please refer to our Twitter account https://twitter.com/WizardLM_AI and HuggingFace Repo https://huggingface.co/WizardLM . We will use them to announce any new release at the 1st time.

|

| 35 |

|

| 36 |

-

|

| 37 |

-

|

| 38 |

-

|

| 39 |

-

|

| 40 |

-

<

|

| 41 |

-

<a ><img src="imgs/compare_sota.png" alt="WizardCoder" style="width: 96%; min-width: 300px; display: block; margin: auto;"></a>

|

| 42 |

</p>

|

| 43 |

-

|

| 44 |

-

❗❗❗**Note: This performance is 100% reproducible! If you cannot reproduce it, please follow the steps in [Evaluation](#evaluation).**

|

| 45 |

-

|

| 46 |

-

❗Note: There are two HumanEval results of GPT4 and ChatGPT-3.5. The 67.0 and 48.1 are reported by the official GPT4 Report (2023/03/15) of [OpenAI](https://arxiv.org/abs/2303.08774). The 82.0 and 72.5 are tested by ourselves with the latest API (2023/08/26).

|

| 47 |

-

|

| 48 |

-

## Comparing WizardCoder-15B-V1.0 with the Closed-Source Models.

|

| 49 |

-

|

| 50 |

-

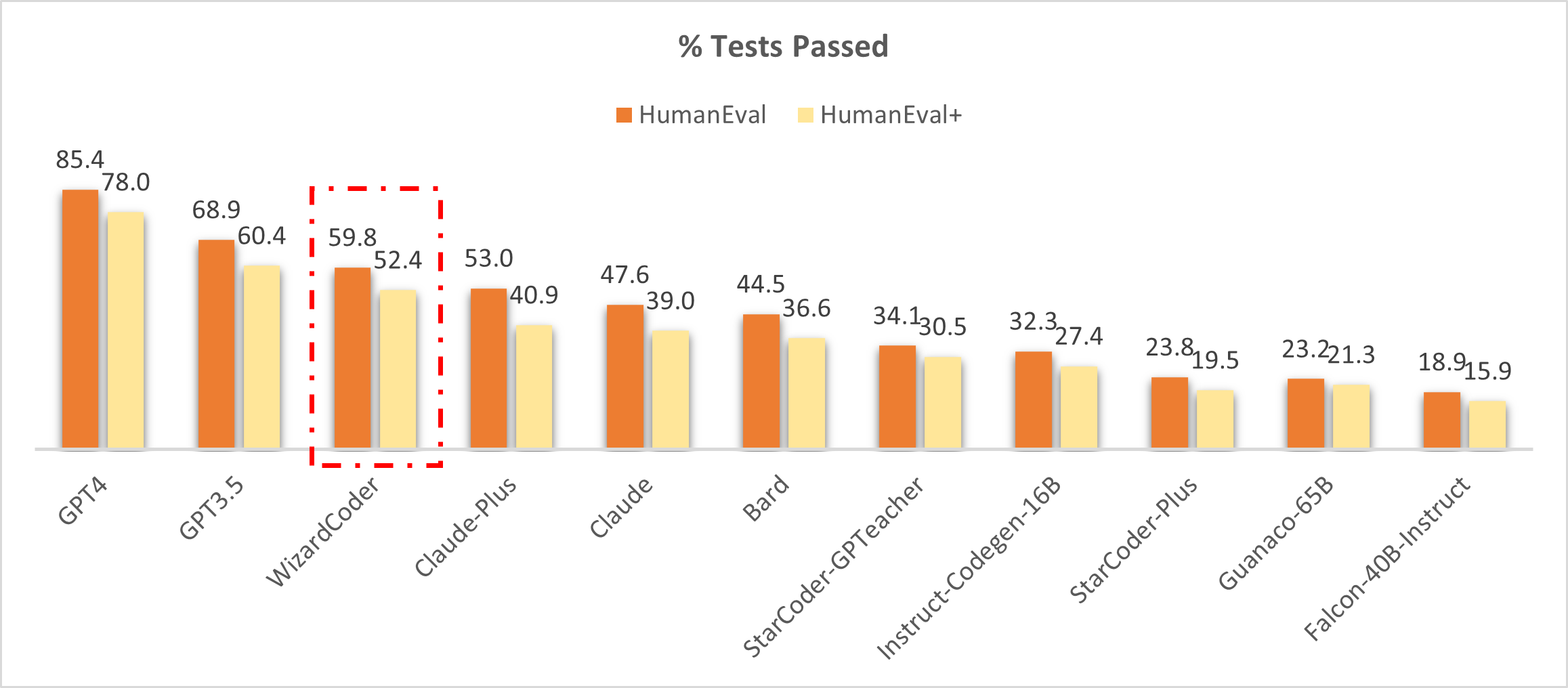

🔥 The following figure shows that our **WizardCoder attains the third position in this benchmark**, surpassing Claude-Plus (59.8 vs. 53.0) and Bard (59.8 vs. 44.5). Notably, our model exhibits a substantially smaller size compared to these models.

|

| 51 |

|

| 52 |

<p align="center" width="100%">

|

| 53 |

-

<a ><img src="imgs/

|

| 54 |

</p>

|

| 55 |

|

| 56 |

-

|

|

|

|

|

|

|

| 57 |

|

| 58 |

-

|

| 59 |

|

| 60 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 61 |

|

| 62 |

-

|

| 63 |

|

|

|

|

|

|

|

| 64 |

|

| 65 |

-

| Model | HumanEval Pass@1 | MBPP Pass@1 |

|

| 66 |

-

|------------------|------------------|-------------|

|

| 67 |

-

| CodeGen-16B-Multi| 18.3 |20.9 |

|

| 68 |

-

| CodeGeeX | 22.9 |24.4 |

|

| 69 |

-

| LLaMA-33B | 21.7 |30.2 |

|

| 70 |

-

| LLaMA-65B | 23.7 |37.7 |

|

| 71 |

-

| PaLM-540B | 26.2 |36.8 |

|

| 72 |

-

| PaLM-Coder-540B | 36.0 |47.0 |

|

| 73 |

-

| PaLM 2-S | 37.6 |50.0 |

|

| 74 |

-

| CodeGen-16B-Mono | 29.3 |35.3 |

|

| 75 |

-

| Code-Cushman-001 | 33.5 |45.9 |

|

| 76 |

-

| StarCoder-15B | 33.6 |43.6* |

|

| 77 |

-

| InstructCodeT5+ | 35.0 |-- |

|

| 78 |

-

| WizardLM-30B 1.0| 37.8 |-- |

|

| 79 |

-

| WizardCoder-15B 1.0 | **57.3** |**51.8** |

|

| 80 |

|

| 81 |

-

|

|

|

|

|

|

|

|

|

|

| 82 |

|

| 83 |

-

❗**Note: The above table conducts a comprehensive comparison of our **WizardCoder** with other models on the HumanEval and MBPP benchmarks. We adhere to the approach outlined in previous studies by generating **20 samples** for each problem to estimate the pass@1 score and evaluate with the same [code](https://github.com/openai/human-eval/tree/master). The scores of GPT4 and GPT3.5 reported by [OpenAI](https://openai.com/research/gpt-4) are 67.0 and 48.1 (maybe these are the early version GPT4&3.5).**

|

| 84 |

|

| 85 |

-

## Call for Feedbacks

|

| 86 |

-

We welcome everyone to use your professional and difficult instructions to evaluate WizardCoder, and show us examples of poor performance and your suggestions in the [issue discussion](https://github.com/nlpxucan/WizardLM/issues) area. We are focusing on improving the Evol-Instruct now and hope to relieve existing weaknesses and issues in the the next version of WizardCoder. After that, we will open the code and pipeline of up-to-date Evol-Instruct algorithm and work with you together to improve it.

|

| 87 |

|

| 88 |

-

|

| 89 |

-

|

| 90 |

-

1. [WizardCoder AI Is The NEW ChatGPT's Coding TWIN!](https://www.youtube.com/watch?v=XjsyHrmd3Xo)

|

| 91 |

|

| 92 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 93 |

|

| 94 |

-

1. [Online Demo](#online-demo)

|

| 95 |

|

| 96 |

-

|

| 97 |

|

| 98 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 99 |

|

| 100 |

-

4. [Evaluation](#evaluation)

|

| 101 |

|

| 102 |

-

5. [Citation](#citation)

|

| 103 |

|

| 104 |

-

6. [Disclaimer](#disclaimer)

|

| 105 |

|

| 106 |

-

|

| 107 |

|

| 108 |

-

|

|

|

|

|

|

|

|

|

|

| 109 |

|

| 110 |

-

|

| 111 |

|

| 112 |

-

|

| 113 |

|

| 114 |

-

|

| 115 |

-

We fine-tune StarCoder-15B with the following hyperparameters:

|

| 116 |

|

| 117 |

-

| Hyperparameter | StarCoder-15B |

|

| 118 |

-

|----------------|---------------|

|

| 119 |

-

| Batch size | 512 |

|

| 120 |

-

| Learning rate | 2e-5 |

|

| 121 |

-

| Epochs | 3 |

|

| 122 |

-

| Max length | 2048 |

|

| 123 |

-

| Warmup step | 30 |

|

| 124 |

-

| LR scheduler | cosine |

|

| 125 |

|

| 126 |

-

|

| 127 |

-

1. According to the instructions of [Llama-X](https://github.com/AetherCortex/Llama-X), install the environment, download the training code, and deploy. (Note: `deepspeed==0.9.2` and `transformers==4.29.2`)

|

| 128 |

-

2. Replace the `train.py` with the `train_wizardcoder.py` in our repo (`src/train_wizardcoder.py`)

|

| 129 |

-

3. Login Huggingface:

|

| 130 |

-

```bash

|

| 131 |

-

huggingface-cli login

|

| 132 |

-

```

|

| 133 |

-

4. Execute the following training command:

|

| 134 |

-

```bash

|

| 135 |

-

deepspeed train_wizardcoder.py \

|

| 136 |

-

--model_name_or_path "bigcode/starcoder" \

|

| 137 |

-

--data_path "/your/path/to/code_instruction_data.json" \

|

| 138 |

-

--output_dir "/your/path/to/ckpt" \

|

| 139 |

-

--num_train_epochs 3 \

|

| 140 |

-

--model_max_length 2048 \

|

| 141 |

-

--per_device_train_batch_size 16 \

|

| 142 |

-

--per_device_eval_batch_size 1 \

|

| 143 |

-

--gradient_accumulation_steps 4 \

|

| 144 |

-

--evaluation_strategy "no" \

|

| 145 |

-

--save_strategy "steps" \

|

| 146 |

-

--save_steps 50 \

|

| 147 |

-

--save_total_limit 2 \

|

| 148 |

-

--learning_rate 2e-5 \

|

| 149 |

-

--warmup_steps 30 \

|

| 150 |

-

--logging_steps 2 \

|

| 151 |

-

--lr_scheduler_type "cosine" \

|

| 152 |

-

--report_to "tensorboard" \

|

| 153 |

-

--gradient_checkpointing True \

|

| 154 |

-

--deepspeed configs/deepspeed_config.json \

|

| 155 |

-

--fp16 True

|

| 156 |

-

```

|

| 157 |

|

| 158 |

-

|

| 159 |

|

| 160 |

-

|

| 161 |

|

| 162 |

-

You can specify `base_model`, `input_data_path` and `output_data_path` in `src\inference_wizardcoder.py` to set the decoding model, path of input file and path of output file.

|

| 163 |

-

|

| 164 |

-

```bash

|

| 165 |

-

pip install jsonlines

|

| 166 |

-

```

|

| 167 |

-

|

| 168 |

-

The decoding command is:

|

| 169 |

```

|

| 170 |

-

|

| 171 |

-

--base_model "/your/path/to/ckpt" \

|

| 172 |

-

--input_data_path "/your/path/to/input/data.jsonl" \

|

| 173 |

-

--output_data_path "/your/path/to/output/result.jsonl"

|

| 174 |

```

|

| 175 |

|

| 176 |

-

|

|

|

|

| 177 |

```

|

| 178 |

-

|

| 179 |

-

{"idx": 12, "Instruction": "Write a Java code to sum 1 to 10."}

|

| 180 |

```

|

| 181 |

|

| 182 |

-

|

| 183 |

-

```

|

| 184 |

-

Below is an instruction that describes a task. Write a response that appropriately completes the request.

|

| 185 |

|

| 186 |

-

|

| 187 |

-

{instruction}

|

| 188 |

|

| 189 |

-

### Response:

|

| 190 |

```

|

| 191 |

-

|

| 192 |

-

## Evaluation

|

| 193 |

-

|

| 194 |

-

### HumanEval

|

| 195 |

-

|

| 196 |

-

1. According to the instructions of [HumanEval](https://github.com/openai/human-eval), install the environment.

|

| 197 |

-

2. Run the following scripts to generate the answer.

|

| 198 |

-

|

| 199 |

-

- (1) For WizardCoder-15B-V1.0 (base on StarCoder)

|

| 200 |

-

```bash

|

| 201 |

-

model="/path/to/your/model"

|

| 202 |

-

temp=0.2

|

| 203 |

-

max_len=2048

|

| 204 |

-

pred_num=200

|

| 205 |

-

num_seqs_per_iter=2

|

| 206 |

-

|

| 207 |

-

output_path=preds/T${temp}_N${pred_num}

|

| 208 |

-

|

| 209 |

-

mkdir -p ${output_path}

|

| 210 |

-

echo 'Output path: '$output_path

|

| 211 |

-

echo 'Model to eval: '$model

|

| 212 |

-

|

| 213 |

-

# 164 problems, 21 per GPU if GPU=8

|

| 214 |

-

index=0

|

| 215 |

-

gpu_num=8

|

| 216 |

-

for ((i = 0; i < $gpu_num; i++)); do

|

| 217 |

-

start_index=$((i * 21))

|

| 218 |

-

end_index=$(((i + 1) * 21))

|

| 219 |

-

|

| 220 |

-

gpu=$((i))

|

| 221 |

-

echo 'Running process #' ${i} 'from' $start_index 'to' $end_index 'on GPU' ${gpu}

|

| 222 |

-

((index++))

|

| 223 |

-

(

|

| 224 |

-

CUDA_VISIBLE_DEVICES=$gpu python humaneval_gen.py --model ${model} \

|

| 225 |

-

--start_index ${start_index} --end_index ${end_index} --temperature ${temp} \

|

| 226 |

-

--num_seqs_per_iter ${num_seqs_per_iter} --N ${pred_num} --max_len ${max_len} --output_path ${output_path}

|

| 227 |

-

) &

|

| 228 |

-

if (($index % $gpu_num == 0)); then wait; fi

|

| 229 |

-

done

|

| 230 |

```

|

| 231 |

|

| 232 |

-

- (2) For WizardCoder-Python-34B-V1.0 (base on CodeLLama)

|

| 233 |

-

|

| 234 |

-

```bash

|

| 235 |

-

pip install vllm # This can acclerate the inference process a lot.

|

| 236 |

-

pip install transformers==4.31.0

|

| 237 |

-

|

| 238 |

-

model="/path/to/your/model"

|

| 239 |

-

temp=0.2

|

| 240 |

-

max_len=2048

|

| 241 |

-

pred_num=200

|

| 242 |

-

num_seqs_per_iter=2

|

| 243 |

|

| 244 |

-

|

| 245 |

|

| 246 |

-

mkdir -p ${output_path}

|

| 247 |

-

echo 'Output path: '$output_path

|

| 248 |

-

echo 'Model to eval: '$model

|

| 249 |

|

| 250 |

-

CUDA_VISIBLE_DEVICES=0,1,2,3 python humaneval_gen_vllm.py --model ${model} \

|

| 251 |

-

--start_index 0 --end_index 164 --temperature ${temp} \

|

| 252 |

-

--num_seqs_per_iter ${num_seqs_per_iter} --N ${pred_num} --max_len ${max_len} --output_path ${output_path} --num_gpus 4

|

| 253 |

```

|

| 254 |

-

|

| 255 |

-

3. Run the post processing code `src/process_humaneval.py` to collect the code completions from all answer files.

|

| 256 |

-

```bash

|

| 257 |

-

output_path=preds/T${temp}_N${pred_num}

|

| 258 |

-

|

| 259 |

-

echo 'Output path: '$output_path

|

| 260 |

-

python process_humaneval.py --path ${output_path} --out_path ${output_path}.jsonl --add_prompt

|

| 261 |

-

|

| 262 |

-

evaluate_functional_correctness ${output_path}.jsonl

|

| 263 |

```

|

| 264 |

|

| 265 |

-

###

|

| 266 |

|

| 267 |

-

|

|

|

|

|

|

|

|

|

|

| 268 |

|

| 269 |

-

|

| 270 |

|

| 271 |

-

|

| 272 |

|

| 273 |

-

|

| 274 |

-

|

| 275 |

-

|

| 276 |

-

max_len=2048

|

| 277 |

-

pred_num=1

|

| 278 |

-

num_seqs_per_iter=1

|

| 279 |

|

| 280 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 281 |

|

| 282 |

-

|

| 283 |

-

|

| 284 |

-

echo 'Model to eval: '$model

|

| 285 |

|

| 286 |

-

# 164 problems, 21 per GPU if GPU=8

|

| 287 |

-

index=0

|

| 288 |

-

gpu_num=8

|

| 289 |

-

for ((i = 0; i < $gpu_num; i++)); do

|

| 290 |

-

start_index=$((i * 21))

|

| 291 |

-

end_index=$(((i + 1) * 21))

|

| 292 |

|

| 293 |

-

gpu=$((i))

|

| 294 |

-

echo 'Running process #' ${i} 'from' $start_index 'to' $end_index 'on GPU' ${gpu}

|

| 295 |

-

((index++))

|

| 296 |

-

(

|

| 297 |

-

CUDA_VISIBLE_DEVICES=$gpu python humaneval_gen.py --model ${model} \

|

| 298 |

-

--start_index ${start_index} --end_index ${end_index} --temperature ${temp} \

|

| 299 |

-

--num_seqs_per_iter ${num_seqs_per_iter} --N ${pred_num} --max_len ${max_len} --output_path ${output_path} --greedy_decode

|

| 300 |

-

) &

|

| 301 |

-

if (($index % $gpu_num == 0)); then wait; fi

|

| 302 |

-

done

|

| 303 |

-

```

|

| 304 |

|

| 305 |

-

|

| 306 |

-

|

| 307 |

-

1. Run the following script to generate the answer.

|

| 308 |

-

```bash

|

| 309 |

-

model="/path/to/your/model"

|

| 310 |

-

temp=0.2

|

| 311 |

-

max_len=2048

|

| 312 |

-

pred_num=200

|

| 313 |

-

num_seqs_per_iter=2

|

| 314 |

-

|

| 315 |

-

output_path=preds/MBPP_T${temp}_N${pred_num}

|

| 316 |

-

mbpp_path=data/mbpp.test.jsonl # we provide this file in data/mbpp.test.zip

|

| 317 |

-

|

| 318 |

-

mkdir -p ${output_path}

|

| 319 |

-

echo 'Output path: '$output_path

|

| 320 |

-

echo 'Model to eval: '$model

|

| 321 |

-

|

| 322 |

-

# 500 problems, 63 per GPU if GPU=8

|

| 323 |

-

index=0

|

| 324 |

-

gpu_num=8

|

| 325 |

-

for ((i = 0; i < $gpu_num; i++)); do

|

| 326 |

-

start_index=$((i * 50))

|

| 327 |

-

end_index=$(((i + 1) * 50))

|

| 328 |

-

|

| 329 |

-

gpu=$((i))

|

| 330 |

-

echo 'Running process #' ${i} 'from' $start_index 'to' $end_index 'on GPU' ${gpu}

|

| 331 |

-

((index++))

|

| 332 |

-

(

|

| 333 |

-

CUDA_VISIBLE_DEVICES=$gpu python mbpp_gen.py --model ${model} \

|

| 334 |

-

--start_index ${start_index} --end_index ${end_index} --temperature ${temp} \

|

| 335 |

-

--num_seqs_per_iter ${num_seqs_per_iter} --N ${pred_num} --max_len ${max_len} --output_path ${output_path} --mbpp_path ${mbpp_path}

|

| 336 |

-

) &

|

| 337 |

-

if (($index % $gpu_num == 0)); then wait; fi

|

| 338 |

-

done

|

| 339 |

-

```

|

| 340 |

|

| 341 |

-

|

| 342 |

-

```bash

|

| 343 |

-

output_path=preds/MBPP_T${temp}_N${pred_num}

|

| 344 |

-

mbpp_path=data/mbpp.test.jsonl # we provide this file in data/mbpp.test.zip

|

| 345 |

|

| 346 |

-

|

| 347 |

-

|

| 348 |

-

|

| 349 |

|

| 350 |

-

|

|

|

|

|

|

|

| 351 |

|

| 352 |

-

|

| 353 |

|

| 354 |

-

|

| 355 |

|

| 356 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 357 |

|

| 358 |

```

|

| 359 |

@misc{luo2023wizardcoder,

|

|

@@ -367,8 +209,9 @@ Please cite the repo if you use the data or code in this repo.

|

|

| 367 |

```

|

| 368 |

## Disclaimer

|

| 369 |

|

| 370 |

-

|

| 371 |

|

| 372 |

## Star History

|

| 373 |

|

| 374 |

-

[](https://star-history.com/#nlpxucan/WizardLM&Timeline)

|

|

|

|

|

|

| 1 |

+

## WizardLM: Empowering Large Pre-Trained Language Models to Follow Complex Instructions

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 2 |

|

|

|

|

| 3 |

|

| 4 |

+

<p align="center">

|

| 5 |

+

🤗 <a href="https://huggingface.co/WizardLM" target="_blank">HF Repo</a> • 🐦 <a href="https://twitter.com/WizardLM_AI" target="_blank">Twitter</a> • 📃 <a href="https://arxiv.org/abs/2304.12244" target="_blank">[WizardLM]</a> • 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> • 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a> <br>

|

| 6 |

+

</p>

|

| 7 |

+

<p align="center">

|

| 8 |

+

👋 Join our <a href="https://discord.gg/VZjjHtWrKs" target="_blank">Discord</a>

|

|

|

|

| 9 |

</p>

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 10 |

|

| 11 |

<p align="center" width="100%">

|

| 12 |

+

<a ><img src="imgs/WizardLM.png" alt="WizardLM" style="width: 20%; min-width: 300px; display: block; margin: auto;"></a>

|

| 13 |

</p>

|

| 14 |

|

| 15 |

+

[](https://github.com/tatsu-lab/stanford_alpaca/blob/main/LICENSE)

|

| 16 |

+

[](https://github.com/tatsu-lab/stanford_alpaca/blob/main/DATA_LICENSE)

|

| 17 |

+

[](https://www.python.org/downloads/release/python-390/)

|

| 18 |

|

| 19 |

+

**Unofficial Video Introductions**

|

| 20 |

|

| 21 |

+

Thanks to the enthusiastic friends, their video introductions are more lively and interesting.

|

| 22 |

+

1. [NEW WizardLM 70b 🔥 Giant Model...Insane Performance](https://www.youtube.com/watch?v=WdpiIXrO4_o)

|

| 23 |

+

2. [GET WizardLM NOW! 7B LLM KING That Can Beat ChatGPT! I'm IMPRESSED!](https://www.youtube.com/watch?v=SaJ8wyKMBds)

|

| 24 |

+

3. [WizardLM: Enhancing Large Language Models to Follow Complex Instructions](https://www.youtube.com/watch?v=I6sER-qivYk)

|

| 25 |

+

4. [WizardCoder AI Is The NEW ChatGPT's Coding TWIN!](https://www.youtube.com/watch?v=XjsyHrmd3Xo)

|

| 26 |

|

| 27 |

+

## News

|

| 28 |

|

| 29 |

+

- 🔥🔥🔥[2023/08/26] We released **WizardCoder-Python-34B-V1.0** , which achieves the **73.2 pass@1** and surpasses **GPT4 (2023/03/15)**, **ChatGPT-3.5**, and **Claude2** on the [HumanEval Benchmarks](https://github.com/openai/human-eval). For more details, please refer to [WizardCoder](https://github.com/nlpxucan/WizardLM/tree/main/WizardCoder).

|

| 30 |

+

- [2023/06/16] We released **WizardCoder-15B-V1.0** , which surpasses **Claude-Plus (+6.8)**, **Bard (+15.3)** and **InstructCodeT5+ (+22.3)** on the [HumanEval Benchmarks](https://github.com/openai/human-eval). For more details, please refer to [WizardCoder](https://github.com/nlpxucan/WizardLM/tree/main/WizardCoder).

|

| 31 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 32 |

|

| 33 |

+

| Model | Checkpoint | Paper | HumanEval | MBPP | Demo | License |

|

| 34 |

+

| ----- |------| ---- |------|-------| ----- | ----- |

|

| 35 |

+

| WizardCoder-Python-34B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-Python-34B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 73.2 | 61.2 | [Demo](http://47.103.63.15:50085/) | <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama2</a> |

|

| 36 |

+

| WizardCoder-15B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-15B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 59.8 |50.6 | -- | <a href="https://huggingface.co/spaces/bigcode/bigcode-model-license-agreement" target="_blank">OpenRAIL-M</a> |

|

| 37 |

|

|

|

|

| 38 |

|

|

|

|

|

|

|

| 39 |

|

| 40 |

+

- Our **WizardMath-70B-V1.0** model slightly outperforms some closed-source LLMs on the GSM8K, including **ChatGPT 3.5**, **Claude Instant 1** and **PaLM 2 540B**.

|

| 41 |

+

- Our **WizardMath-70B-V1.0** model achieves **81.6 pass@1** on the [GSM8k Benchmarks](https://github.com/openai/grade-school-math), which is **24.8** points higher than the SOTA open-source LLM, and achieves **22.7 pass@1** on the [MATH Benchmarks](https://github.com/hendrycks/math), which is **9.2** points higher than the SOTA open-source LLM.

|

|

|

|

| 42 |

|

| 43 |

+

<font size=0.5>

|

| 44 |

+

|

| 45 |

+

| Model | Checkpoint | Paper | GSM8k | MATH |Online Demo| License|

|

| 46 |

+

| ----- |------| ---- |------|-------| ----- | ----- |

|

| 47 |

+

| WizardMath-70B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-70B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **81.6** | **22.7** |[Demo](http://47.103.63.15:50083/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a> |

|

| 48 |

+

| WizardMath-13B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-13B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **63.9** | **14.0** |[Demo](http://47.103.63.15:50082/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a> |

|

| 49 |

+

| WizardMath-7B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-7B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **54.9** | **10.7** | [Demo ](http://47.103.63.15:50080/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a>|

|

| 50 |

+

</font>

|

| 51 |

|

|

|

|

| 52 |

|

| 53 |

+

- [08/09/2023] We released **WizardLM-70B-V1.0** model. Here is [Full Model Weight](https://huggingface.co/WizardLM/WizardLM-70B-V1.0).

|

| 54 |

|

| 55 |

+

<font size=0.5>

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

| <sup>Model</sup> | <sup>Checkpoint</sup> | <sup>Paper</sup> |<sup>MT-Bench</sup> | <sup>AlpacaEval</sup> | <sup>GSM8k</sup> | <sup>HumanEval</sup> | <sup>License</sup>|

|

| 59 |

+

| ----- |------| ---- |------|-------| ----- | ----- | ----- |

|

| 60 |

+

| <sup>**WizardLM-70B-V1.0**</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-70B-V1.0" target="_blank">HF Link</a> </sup>|<sup>📃**Coming Soon**</sup>| <sup>**7.78**</sup> | <sup>**92.91%**</sup> |<sup>**77.6%**</sup> | <sup> **50.6**</sup>|<sup> <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 License </a></sup> |

|

| 61 |

+

| <sup>WizardLM-13B-V1.2</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.2" target="_blank">HF Link</a> </sup>| | <sup>7.06</sup> | <sup>89.17%</sup> |<sup>55.3%</sup> | <sup>36.6 </sup>|<sup> <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 License </a></sup> |

|

| 62 |

+

| <sup>WizardLM-13B-V1.1</sup> |<sup> 🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.1" target="_blank">HF Link</a> </sup> | | <sup>6.76</sup> |<sup>86.32%</sup> | | <sup>25.0 </sup>| <sup>Non-commercial</sup>|

|

| 63 |

+

| <sup>WizardLM-30B-V1.0</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-30B-V1.0" target="_blank">HF Link</a></sup> | | <sup>7.01</sup> | | | <sup>37.8 </sup>| <sup>Non-commercial</sup> |

|

| 64 |

+

| <sup>WizardLM-13B-V1.0</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.0" target="_blank">HF Link</a> </sup> | | <sup>6.35</sup> | <sup>75.31%</sup> | | <sup> 24.0 </sup> | <sup>Non-commercial</sup>|

|

| 65 |

+

| <sup>WizardLM-7B-V1.0 </sup>| <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-7B-V1.0" target="_blank">HF Link</a> </sup> |<sup> 📃 <a href="https://arxiv.org/abs/2304.12244" target="_blank">[WizardLM]</a> </sup>| | | |<sup>19.1 </sup>|<sup> Non-commercial</sup>|

|

| 66 |

+

</font>

|

| 67 |

|

|

|

|

| 68 |

|

|

|

|

| 69 |

|

|

|

|

| 70 |

|

| 71 |

+

❗To commen concern about dataset:

|

| 72 |

|

| 73 |

+

Recently, there have been clear changes in the open-source policy and regulations of our overall organization's code, data, and models.

|

| 74 |

+

Despite this, we have still worked hard to obtain opening the weights of the model first, but the data involves stricter auditing and is in review with our legal team .

|

| 75 |

+

Our researchers have no authority to publicly release them without authorization.

|

| 76 |

+

Thank you for your understanding.

|

| 77 |

|

| 78 |

+

## Hiring

|

| 79 |

|

| 80 |

+

- 📣 We are looking for highly motivated students to join us as interns to create more intelligent AI together. Please contact [email protected]

|

| 81 |

|

| 82 |

+

<!-- Although on our **complexity-balanced test set**, **WizardLM-7B has more cases that are preferred by human labelers than ChatGPT** in the high-complexity instructions (difficulty level >= 8), it still lags behind ChatGPT on the entire test set, and we also consider WizardLM to still be in a **baby state**. This repository will **continue to improve WizardLM**, train on larger scales, add more training data, and innovate more advanced large-model training methods. -->

|

|

|

|

| 83 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 84 |

|

| 85 |

+

<b>Note for model system prompts usage:</b>

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 86 |

|

| 87 |

+

To obtain results **identical to our demo**, please strictly follow the prompts and invocation methods provided in the **"src/infer_wizardlm13b.py"** to use our model for inference. Our model adopts the prompt format from <b>Vicuna</b> and supports **multi-turn** conversation.

|

| 88 |

|

| 89 |

+

<b>For WizardLM</b>, the Prompt should be as following:

|

| 90 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 91 |

```

|

| 92 |

+

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: Hi ASSISTANT: Hello.</s>USER: Who are you? ASSISTANT: I am WizardLM.</s>......

|

|

|

|

|

|

|

|

|

|

| 93 |

```

|

| 94 |

|

| 95 |

+

<b>For WizardCoder </b>, the Prompt should be as following:

|

| 96 |

+

|

| 97 |

```

|

| 98 |

+

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

|

|

|

|

| 99 |

```

|

| 100 |

|

| 101 |

+

<b>For WizardMath</b>, the Prompts should be as following:

|

|

|

|

|

|

|

| 102 |

|

| 103 |

+

**Default version:**

|

|

|

|

| 104 |

|

|

|

|

| 105 |

```

|

| 106 |

+

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 107 |

```

|

| 108 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 109 |

|

| 110 |

+

**CoT Version:** (❗For the **simple** math questions, we do NOT recommend to use the CoT prompt.)

|

| 111 |

|

|

|

|

|

|

|

|

|

|

| 112 |

|

|

|

|

|

|

|

|

|

|

| 113 |

```

|

| 114 |

+

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response: Let's think step by step."

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 115 |

```

|

| 116 |

|

| 117 |

+

### GPT-4 automatic evaluation

|

| 118 |

|

| 119 |

+

We adopt the automatic evaluation framework based on GPT-4 proposed by FastChat to assess the performance of chatbot models. As shown in the following figure, WizardLM-30B achieved better results than Guanaco-65B.

|

| 120 |

+

<p align="center" width="100%">

|

| 121 |

+

<a ><img src="imgs/WizarLM30b-GPT4.png" alt="WizardLM" style="width: 100%; min-width: 300px; display: block; margin: auto;"></a>

|

| 122 |

+

</p>

|

| 123 |

|

| 124 |

+

### WizardLM-30B performance on different skills.

|

| 125 |

|

| 126 |

+

The following figure compares WizardLM-30B and ChatGPT’s skill on Evol-Instruct testset. The result indicates that WizardLM-30B achieves 97.8% of ChatGPT’s performance on average, with almost 100% (or more than) capacity on 18 skills, and more than 90% capacity on 24 skills.

|

| 127 |

|

| 128 |

+

<p align="center" width="100%">

|

| 129 |

+

<a ><img src="imgs/evol-testset_skills-30b.png" alt="WizardLM" style="width: 100%; min-width: 300px; display: block; margin: auto;"></a>

|

| 130 |

+

</p>

|

|

|

|

|

|

|

|

|

|

| 131 |

|

| 132 |

+

### WizardLM performance on NLP foundation tasks.

|

| 133 |

+

|

| 134 |

+

The following table provides a comparison of WizardLMs and other LLMs on NLP foundation tasks. The results indicate that WizardLMs consistently exhibit superior performance in comparison to the LLaMa models of the same size. Furthermore, our WizardLM-30B model showcases comparable performance to OpenAI's Text-davinci-003 on the MMLU and HellaSwag benchmarks.

|

| 135 |

+

|

| 136 |

+

| Model | MMLU 5-shot | ARC 25-shot | TruthfulQA 0-shot | HellaSwag 10-shot | Average |

|

| 137 |

+

|------------------|-------------|-------------|-------------------|-------------------|------------|

|

| 138 |

+

| Text-davinci-003 | <u>56.9<u/> | **85.2** | **59.3** | <u>82.2<u/> | **70.9** |

|

| 139 |

+

|Vicuna-13b 1.1 | 51.3 | 53.0 | 51.8 | 80.1 | 59.1 |

|

| 140 |

+

|Guanaco 30B | 57.6 | 63.7 | 50.7 | **85.1** | 64.3 |

|

| 141 |

+

| WizardLM-7B 1.0 | 42.7 | 51.6 | 44.7 | 77.7 | 54.2 |

|

| 142 |

+

| WizardLM-13B 1.0 | 52.3 | 57.2 | 50.5 | 81.0 | 60.2 |

|

| 143 |

+

| WizardLM-30B 1.0 | **58.8** | <u>62.5<u/> | <u>52.4<u/> | 83.3 | <u>64.2<u/>|

|

| 144 |

+

|

| 145 |

+

### WizardLM performance on code generation.

|

| 146 |

+

|

| 147 |

+

The following table provides a comprehensive comparison of WizardLMs and several other LLMs on the code generation task, namely HumanEval. The evaluation metric is pass@1. The results indicate that WizardLMs consistently exhibit superior performance in comparison to the LLaMa models of the same size. Furthermore, our WizardLM-30B model surpasses StarCoder and OpenAI's code-cushman-001. Moreover, our Code LLM, WizardCoder, demonstrates exceptional performance, achieving a pass@1 score of 57.3, surpassing the open-source SOTA by approximately 20 points.

|

| 148 |

+

|

| 149 |

+

|

| 150 |

+

| Model | HumanEval Pass@1 |

|

| 151 |

+

|------------------|------------------|

|

| 152 |

+

| LLaMA-7B | 10.5 |

|

| 153 |

+

| LLaMA-13B | 15.8 |

|

| 154 |

+

| CodeGen-16B-Multi| 18.3 |

|

| 155 |

+

| CodeGeeX | 22.9 |

|

| 156 |

+

| LLaMA-33B | 21.7 |

|

| 157 |

+

| LLaMA-65B | 23.7 |

|

| 158 |

+

| PaLM-540B | 26.2 |

|

| 159 |

+

| CodeGen-16B-Mono | 29.3 |

|

| 160 |

+

| code-cushman-001 | 33.5 |

|

| 161 |

+

| StarCoder | <u>33.6<u/> |

|

| 162 |

+

| WizardLM-7B 1.0 | 19.1 |

|

| 163 |

+

| WizardLM-13B 1.0 | 24.0 |

|

| 164 |

+

| WizardLM-30B 1.0 | **37.8** |

|

| 165 |

+

| WizardCoder-15B 1.0 | **57.3** |

|

| 166 |

|

| 167 |

+

## Call for Feedbacks

|

| 168 |

+

We welcome everyone to use your professional and difficult instructions to evaluate WizardLM, and show us examples of poor performance and your suggestions in the [issue discussion](https://github.com/nlpxucan/WizardLM/issues) area. We are focusing on improving the Evol-Instruct now and hope to relieve existing weaknesses and issues in the the next version of WizardLM. After that, we will open the code and pipeline of up-to-date Evol-Instruct algorithm and work with you together to improve it.

|

|

|

|

| 169 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 170 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 171 |

|

| 172 |

+

## Overview of Evol-Instruct

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 173 |

|

| 174 |

+

[Evol-Instruct](https://github.com/nlpxucan/evol-instruct) is a novel method using LLMs instead of humans to automatically mass-produce open-domain instructions of various difficulty levels and skills range, to improve the performance of LLMs.

|

|

|

|

|

|

|

|

|

|

| 175 |

|

| 176 |

+

<p align="center" width="100%">

|

| 177 |

+

<a ><img src="imgs/git_overall.png" alt="WizardLM" style="width: 86%; min-width: 300px; display: block; margin: auto;"></a>

|

| 178 |

+

</p>

|

| 179 |

|

| 180 |

+

<p align="center" width="100%">

|

| 181 |

+

<a ><img src="imgs/git_running.png" alt="WizardLM" style="width: 86%; min-width: 300px; display: block; margin: auto;"></a>

|

| 182 |

+

</p>

|

| 183 |

|

| 184 |

+

### Citation

|

| 185 |

|

| 186 |

+

Please cite the paper if you use the data or code from WizardLM.

|

| 187 |

|

| 188 |

+

```

|

| 189 |

+

@misc{xu2023wizardlm,

|

| 190 |

+

title={WizardLM: Empowering Large Language Models to Follow Complex Instructions},

|

| 191 |

+

author={Can Xu and Qingfeng Sun and Kai Zheng and Xiubo Geng and Pu Zhao and Jiazhan Feng and Chongyang Tao and Daxin Jiang},

|

| 192 |

+

year={2023},

|

| 193 |

+

eprint={2304.12244},

|

| 194 |

+

archivePrefix={arXiv},

|

| 195 |

+

primaryClass={cs.CL}

|

| 196 |

+

}

|

| 197 |

+

```

|

| 198 |

+

Please cite the paper if you use the data or code from WizardCoder.

|

| 199 |

|

| 200 |

```

|

| 201 |

@misc{luo2023wizardcoder,

|

|

|

|

| 209 |

```

|

| 210 |

## Disclaimer

|

| 211 |

|

| 212 |

+

The resources, including code, data, and model weights, associated with this project are restricted for academic research purposes only and cannot be used for commercial purposes. The content produced by any version of WizardLM is influenced by uncontrollable variables such as randomness, and therefore, the accuracy of the output cannot be guaranteed by this project. This project does not accept any legal liability for the content of the model output, nor does it assume responsibility for any losses incurred due to the use of associated resources and output results.

|

| 213 |

|

| 214 |

## Star History

|

| 215 |

|

| 216 |

+

[](https://star-history.com/#nlpxucan/WizardLM&Timeline)

|

| 217 |

+

|

WizardCoder/CODE_LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright 2023 Rohan Taori, Ishaan Gulrajani, Tianyi Zhang, Yann Dubois, Xuechen Li

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

WizardCoder/DATA_LICENSE

ADDED

|

@@ -0,0 +1,407 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|