Commit ed0f56d

Parent(s): d13d103

Upload 33 files

- README.md +49 -3

- added_tokens.json +40 -0

- assets/demo-1.jpg +0 -0

- assets/demo-2.jpg +0 -0

- assets/demo-3.jpg +0 -0

- assets/demo-4.jpg +0 -0

- assets/demo-5.jpg +0 -0

- config.json +15 -0

- configuration_moondream.py +74 -0

- generation_config.json +4 -0

- merges.txt +0 -0

- model-00001-of-00002.safetensors +3 -0

- model-00002-of-00002.safetensors +3 -0

- model.safetensors +3 -0

- model.safetensors.index.json +591 -0

- modeling_phi.py +720 -0

- moondream.py +107 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +23 -0

- text_model.pt +3 -0

- text_model.py +19 -0

- text_model_cfg.json +31 -0

- tokenizer.json +0 -0

- tokenizer/added_tokens.json +40 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +5 -0

- tokenizer/tokenizer.json +0 -0

- tokenizer/tokenizer_config.json +323 -0

- tokenizer/vocab.json +0 -0

- tokenizer_config.json +323 -0

- vision.pt +3 -0

- vision_encoder.py +136 -0

- vocab.json +0 -0

README.md

CHANGED

|

@@ -1,3 +1,49 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

|

| 1 |

+

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

---

|

| 5 |

+

|

| 6 |

+

# 🌔 moondream1

|

| 7 |

+

|

| 8 |

+

A 1.6B parameter model built by [@vikhyatk](https://x.com/vikhyatk) using SigLIP, Phi-1.5 and the LLaVA training dataset.

|

| 9 |

+

The model is released for research purposes only; commercial use is not allowed.

|

| 10 |

+

|

| 11 |

+

Try it out on [Hugging Face Spaces](https://huggingface.co/spaces/vikhyatk/moondream1)!

|

| 12 |

+

|

| 13 |

+

**Usage**

|

| 14 |

+

|

| 15 |

+

```

|

| 16 |

+

pip install transformers timm einops

|

| 17 |

+

```

|

| 18 |

+

|

| 19 |

+

```python

|

| 20 |

+

from transformers import AutoModelForCausalLM, CodeGenTokenizerFast as Tokenizer

|

| 21 |

+

from PIL import Image

|

| 22 |

+

|

| 23 |

+

model_id = "vikhyatk/moondream1"

|

| 24 |

+

model = AutoModelForCausalLM.from_pretrained(model_id, trust_remote_code=True)

|

| 25 |

+

tokenizer = Tokenizer.from_pretrained(model_id)

|

| 26 |

+

|

| 27 |

+

image = Image.open('<IMAGE_PATH>')

|

| 28 |

+

enc_image = model.encode_image(image)

|

| 29 |

+

print(model.answer_question(enc_image, "<QUESTION>", tokenizer))

|

| 30 |

+

```

|

| 31 |

+

|

| 32 |

+

## Benchmarks

|

| 33 |

+

|

| 34 |

+

| Model | Parameters | VQAv2 | GQA | TextVQA |

|

| 35 |

+

| --- | --- | --- | --- | --- |

|

| 36 |

+

| LLaVA-1.5 | 13.3B | 80.0 | 63.3 | 61.3 |

|

| 37 |

+

| LLaVA-1.5 | 7.3B | 78.5 | 62.0 | 58.2 |

|

| 38 |

+

| **moondream1** | 1.6B | 74.7 | 57.9 | 35.6 |

|
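To put the benchmark table in relative terms, the minimal sketch below computes moondream1's score as a fraction of each LLaVA-1.5 row. The scores are copied from the table above; the dictionary layout and the `relative_scores` helper are illustrative assumptions, not part of the repository.

```python
# Scores copied from the benchmark table in the README diff.
SCORES = {
    "LLaVA-1.5 (13.3B)": {"VQAv2": 80.0, "GQA": 63.3, "TextVQA": 61.3},
    "LLaVA-1.5 (7.3B)": {"VQAv2": 78.5, "GQA": 62.0, "TextVQA": 58.2},
    "moondream1 (1.6B)": {"VQAv2": 74.7, "GQA": 57.9, "TextVQA": 35.6},
}


def relative_scores(model: str, baseline: str) -> dict:
    """Return model score divided by baseline score for every benchmark."""
    return {
        bench: SCORES[model][bench] / SCORES[baseline][bench]
        for bench in SCORES[model]
    }


if __name__ == "__main__":
    for baseline in ("LLaVA-1.5 (13.3B)", "LLaVA-1.5 (7.3B)"):
        ratios = relative_scores("moondream1 (1.6B)", baseline)
        # Print each ratio as a percentage of the baseline score.
        print(baseline, {b: f"{r:.1%}" for b, r in ratios.items()})
```

Against both baselines the gap is smallest on VQAv2 and largest on TextVQA.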

| 39 |

+

|

| 40 |

+

|

| 41 |

+

## Examples

|

| 42 |

+

|

| 43 |

+

| Image | Examples |

|

| 44 |

+

| --- | --- |

|

| 45 |

+

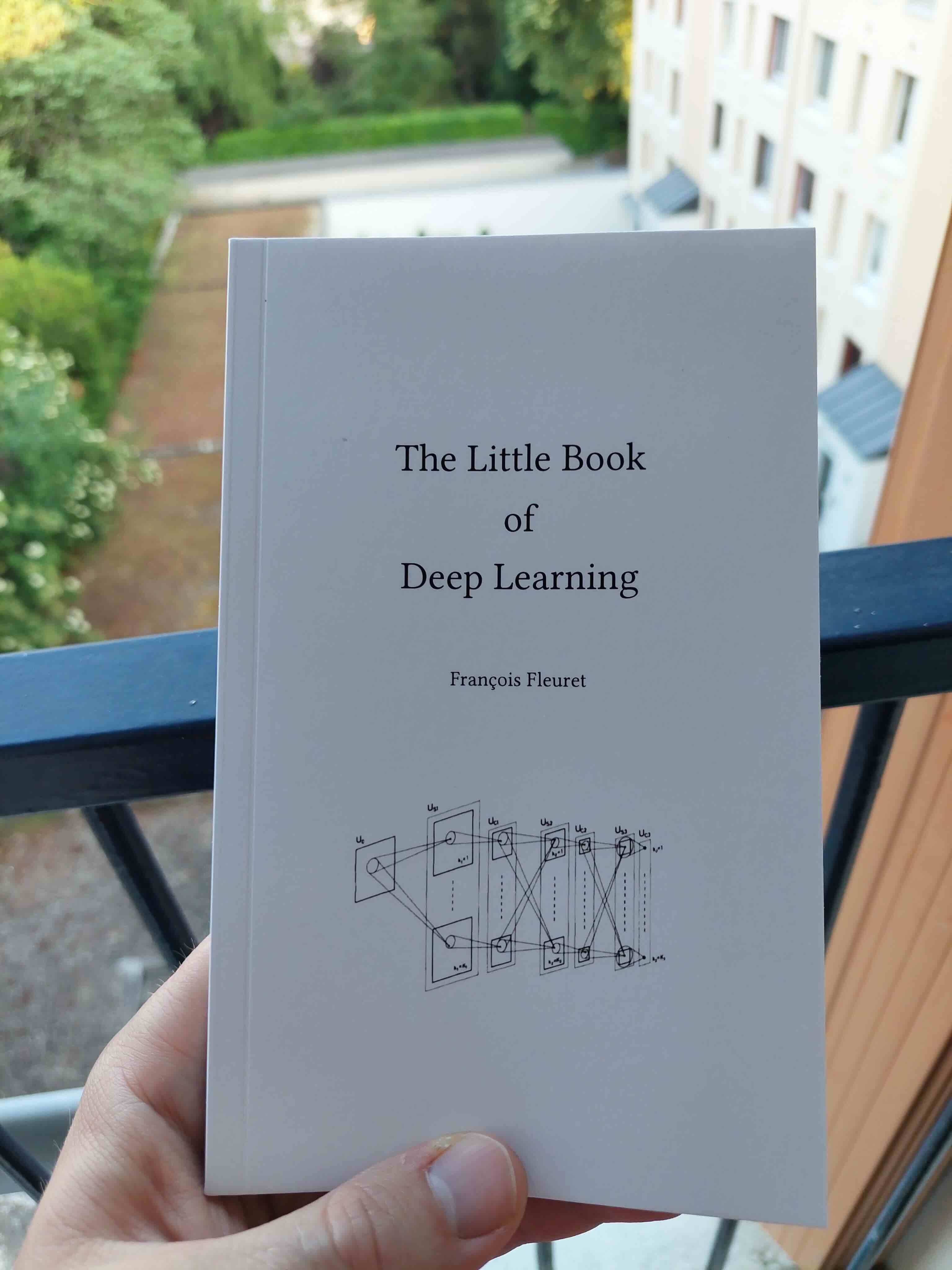

|  | **What is the title of this book?**<br>The Little Book of Deep Learning<br><br>**What can you tell me about this book?**<br>The book in the image is titled "The Little Book of Deep Learning." It appears to be a guide or manual that provides information and instructions on the subject of deep learning. The book is described as being small and thin, making it easy to carry and read. It is set in a room with a view outside, suggesting that the person holding the book might be in a living or working space with a view. The content of the book is likely to be complex, as the title implies a focus on advanced concepts in deep learning.<br><br>**Where is the person standing?**<br> The person is standing on a balcony or deck, which is outside. |

|

| 46 |

+

|  | **What type of food is the girl holding?**<br>The girl is holding a hamburger.<br><br>**What color is the woman's hair?**<br>The woman's hair is white.<br><br>**What is the girl doing in the image?**<br>The girl in the image is eating a hamburger. |

|

| 47 |

+

|  | **What color is the train?**<br>The train is red.<br><br>**What is the bus doing in the image?**<br> The bus in the image is driving down a city street, passing through an intersection.<br><br>**What kind of public transportation is in the image?**<br>The image features a red train or trolley as the public transportation option. |

|

| 48 |

+

|  | **What is the girl looking at?**<br>The girl is looking at her reflection in the mirror.<br><br>**What color is the woman's hair?**<br>The woman's hair is brown.<br><br>**What is the woman doing in the mirror?**<br>The woman is adjusting her hair in the mirror. |

|

| 49 |

+

|  | **What might be the reason for the dog's aggressive behavior?**<br>The dog's aggressive behavior, including its growling, baring its teeth, and showing its sharp teeth, could be due to various reasons. It is possible that the dog is feeling threatened, scared, or defensive about something in its environment, such as another animal, a loud noise, or a person nearby. Alternatively, the dog might be reacting to a command it received from its owner, or it could be experiencing discomfort or pain, which is causing it to display aggressive behavior. It is essential to observe the dog's body language and context to determine the underlying cause of its aggressive behavior and respond accordingly to address its needs or concerns.<br><br>**What kind of dog is in the picture?**<br>The dog in the picture is a small, black and white chihuahua. |

|

added_tokens.json

ADDED

|

@@ -0,0 +1,40 @@

| 1 |

+

{

|

| 2 |

+

"\t\t": 50294,

|

| 3 |

+

"\t\t\t": 50293,

|

| 4 |

+

"\t\t\t\t": 50292,

|

| 5 |

+

"\t\t\t\t\t": 50291,

|

| 6 |

+

"\t\t\t\t\t\t": 50290,

|

| 7 |

+

"\t\t\t\t\t\t\t": 50289,

|

| 8 |

+

"\t\t\t\t\t\t\t\t": 50288,

|

| 9 |

+

"\t\t\t\t\t\t\t\t\t": 50287,

|

| 10 |

+

" ": 50286,

|

| 11 |

+

" ": 50285,

|

| 12 |

+

" ": 50284,

|

| 13 |

+

" ": 50283,

|

| 14 |

+

" ": 50282,

|

| 15 |

+

" ": 50281,

|

| 16 |

+

" ": 50280,

|

| 17 |

+

" ": 50279,

|

| 18 |

+

" ": 50278,

|

| 19 |

+

" ": 50277,

|

| 20 |

+

" ": 50276,

|

| 21 |

+

" ": 50275,

|

| 22 |

+

" ": 50274,

|

| 23 |

+

" ": 50273,

|

| 24 |

+

" ": 50272,

|

| 25 |

+

" ": 50271,

|

| 26 |

+

" ": 50270,

|

| 27 |

+

" ": 50269,

|

| 28 |

+

" ": 50268,

|

| 29 |

+

" ": 50267,

|

| 30 |

+

" ": 50266,

|

| 31 |

+

" ": 50265,

|

| 32 |

+

" ": 50264,

|

| 33 |

+

" ": 50263,

|

| 34 |

+

" ": 50262,

|

| 35 |

+

" ": 50261,

|

| 36 |

+

" ": 50260,

|

| 37 |

+

" ": 50259,

|

| 38 |

+

" ": 50258,

|

| 39 |

+

" ": 50257

|

| 40 |

+

}

|
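The tab entries in `added_tokens.json` follow a simple pattern: a run of `n` tabs (for `n` from 2 to 9) maps to id `50296 - n`, and the 38 listed ids cover the contiguous range 50257 to 50294. The sketch below checks that arithmetic. `tab_token_id` is a hypothetical helper written for illustration and is not part of the tokenizer; the whitespace-run keys for ids 50257 to 50286 are left out because their exact lengths are not recoverable from the flattened diff.

```python
FIRST_ADDED_ID = 50257  # lowest id listed in added_tokens.json
LAST_ADDED_ID = 50294   # highest id listed (the two-tab token)


def tab_token_id(n_tabs: int) -> int:
    """Id of the added token made of n_tabs tab characters (2..9)."""
    if not 2 <= n_tabs <= 9:
        raise ValueError("added_tokens.json only lists runs of 2 to 9 tabs")
    return 50296 - n_tabs


# Values taken from the diff: two tabs -> 50294, nine tabs -> 50287.
assert tab_token_id(2) == 50294
assert tab_token_id(9) == 50287

# The listed entries occupy one contiguous id range.
added_count = LAST_ADDED_ID - FIRST_ADDED_ID + 1

if __name__ == "__main__":
    print(added_count)
```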

assets/demo-1.jpg

ADDED

|

assets/demo-2.jpg

ADDED

|

assets/demo-3.jpg

ADDED

|

assets/demo-4.jpg

ADDED

|

assets/demo-5.jpg

ADDED

|

config.json

ADDED

|

@@ -0,0 +1,15 @@

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Moondream"

|

| 4 |

+

],

|

| 5 |

+

"auto_map": {

|

| 6 |

+

"AutoConfig": "configuration_moondream.MoondreamConfig",

|

| 7 |

+

"AutoModelForCausalLM": "moondream.Moondream"

|

| 8 |

+

},

|

| 9 |

+

"model_type": "moondream1",

|

| 10 |

+

"phi_config": {

|

| 11 |

+

"model_type": "phi-msft"

|

| 12 |

+

},

|

| 13 |

+

"torch_dtype": "float16",

|

| 14 |

+

"transformers_version": "4.36.2"

|

| 15 |

+

}

|
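The `auto_map` entries in `config.json` pair an Auto class name with a `module.ClassName` reference to code shipped in the repository, which is why the README loads the model with `trust_remote_code=True`. As a rough illustration, the sketch below splits each reference into the file it points at and the class name. `resolve_auto_map` is a hypothetical helper, and the `.py` filename mapping is an assumption based on the files listed in this commit, not a description of how `transformers` implements loading.

```python
import json

# config.json contents as shown in the diff.
CONFIG_JSON = """
{
  "architectures": ["Moondream"],
  "auto_map": {
    "AutoConfig": "configuration_moondream.MoondreamConfig",
    "AutoModelForCausalLM": "moondream.Moondream"
  },
  "model_type": "moondream1",
  "phi_config": {"model_type": "phi-msft"},
  "torch_dtype": "float16",
  "transformers_version": "4.36.2"
}
"""


def resolve_auto_map(config: dict) -> dict:
    """Map each Auto class to a (source file, class name) pair."""
    resolved = {}
    for auto_class, reference in config.get("auto_map", {}).items():
        # Split on the last dot: module path on the left, class on the right.
        module, _, class_name = reference.rpartition(".")
        resolved[auto_class] = (f"{module}.py", class_name)
    return resolved


if __name__ == "__main__":
    config = json.loads(CONFIG_JSON)
    for auto_class, (filename, class_name) in resolve_auto_map(config).items():
        print(f"{auto_class}: {class_name} from {filename}")
```

Both referenced files, `configuration_moondream.py` and `moondream.py`, appear in this commit's file list.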

configuration_moondream.py

ADDED

|

@@ -0,0 +1,74 @@

| 1 |

+

from transformers import PretrainedConfig

|

| 2 |

+

|

| 3 |

+

from typing import Optional

|

| 4 |

+

import math

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

class PhiConfig(PretrainedConfig):

|

| 8 |

+

model_type = "phi-msft"

|

| 9 |

+

|

| 10 |

+

def __init__(

|

| 11 |

+

self,

|

| 12 |

+

vocab_size: int = 51200,

|

| 13 |

+

n_positions: int = 2048,

|

| 14 |

+

n_embd: int = 2048,

|

| 15 |

+

n_layer: int = 24,

|

| 16 |

+

n_inner: Optional[int] = None,

|

| 17 |

+

n_head: int = 32,

|

| 18 |

+

n_head_kv: Optional[int] = None,

|

| 19 |

+

rotary_dim: Optional[int] = 32,

|

| 20 |

+

activation_function: Optional[str] = "gelu_new",

|

| 21 |

+

flash_attn: bool = False,

|

| 22 |

+

flash_rotary: bool = False,

|

| 23 |

+

fused_dense: bool = False,

|

| 24 |

+

attn_pdrop: float = 0.0,

|

| 25 |

+

embd_pdrop: float = 0.0,

|

| 26 |

+

resid_pdrop: float = 0.0,

|

| 27 |

+

layer_norm_epsilon: float = 1e-5,

|

| 28 |

+

initializer_range: float = 0.02,

|

| 29 |

+

tie_word_embeddings: bool = False,

|

| 30 |

+

pad_vocab_size_multiple: int = 64,

|

| 31 |

+

gradient_checkpointing: bool = False,

|

| 32 |

+

**kwargs

|

| 33 |

+

):

|

| 34 |

+

pad_vocab_size = (

|

| 35 |

+

math.ceil(vocab_size / pad_vocab_size_multiple) * pad_vocab_size_multiple

|

| 36 |

+

)

|

| 37 |

+

super().__init__(

|

| 38 |

+

vocab_size=pad_vocab_size,

|

| 39 |

+

n_positions=n_positions,

|

| 40 |

+

n_embd=n_embd,

|

| 41 |

+

n_layer=n_layer,

|

| 42 |

+

n_inner=n_inner,

|

| 43 |

+

n_head=n_head,

|

| 44 |

+

n_head_kv=n_head_kv,

|

| 45 |

+

activation_function=activation_function,

|

| 46 |

+

attn_pdrop=attn_pdrop,

|

| 47 |

+

embd_pdrop=embd_pdrop,

|

| 48 |

+

resid_pdrop=resid_pdrop,

|

| 49 |

+

layer_norm_epsilon=layer_norm_epsilon,

|

| 50 |

+

initializer_range=initializer_range,

|

| 51 |

+

pad_vocab_size_multiple=pad_vocab_size_multiple,

|

| 52 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 53 |

+

gradient_checkpointing=gradient_checkpointing,

|

| 54 |

+

**kwargs

|

| 55 |

+

)

|

| 56 |

+

self.rotary_dim = min(rotary_dim, n_embd // n_head)

|

| 57 |

+

self.flash_attn = flash_attn

|

| 58 |

+

self.flash_rotary = flash_rotary

|

| 59 |

+

self.fused_dense = fused_dense

|

| 60 |

+

|

| 61 |

+

attribute_map = {

|

| 62 |

+

"max_position_embeddings": "n_positions",

|

| 63 |

+

"hidden_size": "n_embd",

|

| 64 |

+

"num_attention_heads": "n_head",

|

| 65 |

+

"num_hidden_layers": "n_layer",

|

| 66 |

+

}

|

| 67 |

+

|

| 68 |

+

|

| 69 |

+

class MoondreamConfig(PretrainedConfig):

|

| 70 |

+

model_type = "moondream1"

|

| 71 |

+

|

| 72 |

+

def __init__(self, **kwargs):

|

| 73 |

+

self.phi_config = PhiConfig(**kwargs)

|

| 74 |

+

super().__init__(**kwargs)

|
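Two values in `PhiConfig.__init__` are derived rather than passed through unchanged: the vocabulary size is rounded up to a multiple of `pad_vocab_size_multiple`, and `rotary_dim` is capped at the per-head dimension `n_embd // n_head`. The standalone sketch below reproduces just that arithmetic with the defaults from the diff. The function names are illustrative, and the code deliberately does not import `transformers`.

```python
import math


def padded_vocab_size(vocab_size: int = 51200, multiple: int = 64) -> int:
    """Round vocab_size up to the next multiple, mirroring PhiConfig."""
    return math.ceil(vocab_size / multiple) * multiple


def effective_rotary_dim(
    rotary_dim: int = 32, n_embd: int = 2048, n_head: int = 32
) -> int:
    """Cap rotary_dim at the per-head dimension n_embd // n_head."""
    return min(rotary_dim, n_embd // n_head)


if __name__ == "__main__":
    # Default vocab size, already a multiple of 64.
    print(padded_vocab_size())
    # 50295 is the highest id in added_tokens.json plus one.
    print(padded_vocab_size(50295))
    # With the defaults the per-head dimension is 2048 // 32.
    print(effective_rotary_dim())
```

One detail worth noting: the signature types `rotary_dim` as `Optional[int]`, but passing `None` would make the `min(rotary_dim, n_embd // n_head)` call raise a `TypeError` in Python 3.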

generation_config.json

ADDED

|

@@ -0,0 +1,4 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"transformers_version": "4.36.2"

|

| 4 |

+

}

|

merges.txt

ADDED

|

The diff for this file is too large to render.

|

|

|

model-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:44ea739f35b3eae160979d3bc03e4a091816a61acad2a58aff3518812c891b1c

|

| 3 |

+

size 135

|

model-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e0520d63ad66cc7dfe1f8cc6a8230735ce8791152917b45fe9e7eec751f86526

|

| 3 |

+

size 135

|

model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3746971ff772573912a5bb83d1a3dce1bde96eb49d2ac5dc504e31a9aa60105e

|

| 3 |

+

size 135

|
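The three `.safetensors` entries above are Git LFS pointer files rather than the weights themselves: each records a spec version, a SHA-256 object id, and the size in bytes of the stored object. A minimal parser for that three-line format might look like the sketch below. `parse_lfs_pointer` is a hypothetical helper, not part of Git LFS or `huggingface_hub`.

```python
# Pointer text for model.safetensors, copied from the diff.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:3746971ff772573912a5bb83d1a3dce1bde96eb49d2ac5dc504e31a9aa60105e
size 135
"""


def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form "<algorithm>:<hex digest>".
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


if __name__ == "__main__":
    pointer = parse_lfs_pointer(POINTER_TEXT)
    print(pointer["hash_algorithm"], pointer["size_bytes"])
```

All three pointers in this commit report `size 135`, far smaller than the 7,564,205,504-byte `total_size` declared in `model.safetensors.index.json`.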

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,591 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 7564205504

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"text_model.model.lm_head.linear.bias": "model-00002-of-00002.safetensors",

|

| 7 |

+

"text_model.model.lm_head.linear.weight": "model-00002-of-00002.safetensors",

|

| 8 |

+

"text_model.model.lm_head.ln.bias": "model-00002-of-00002.safetensors",

|

| 9 |

+

"text_model.model.lm_head.ln.weight": "model-00002-of-00002.safetensors",

|

| 10 |

+

"text_model.model.transformer.embd.wte.weight": "model-00001-of-00002.safetensors",

|

| 11 |

+

"text_model.model.transformer.h.0.ln.bias": "model-00001-of-00002.safetensors",

|

| 12 |

+

"text_model.model.transformer.h.0.ln.weight": "model-00001-of-00002.safetensors",

|

| 13 |

+

"text_model.model.transformer.h.0.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 14 |

+

"text_model.model.transformer.h.0.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 15 |

+

"text_model.model.transformer.h.0.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 16 |

+

"text_model.model.transformer.h.0.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 17 |

+

"text_model.model.transformer.h.0.mlp.fc1.bias": "model-00001-of-00002.safetensors",

|

| 18 |

+

"text_model.model.transformer.h.0.mlp.fc1.weight": "model-00001-of-00002.safetensors",

|

| 19 |

+

"text_model.model.transformer.h.0.mlp.fc2.bias": "model-00001-of-00002.safetensors",

|

| 20 |

+

"text_model.model.transformer.h.0.mlp.fc2.weight": "model-00001-of-00002.safetensors",

|

| 21 |

+

"text_model.model.transformer.h.1.ln.bias": "model-00001-of-00002.safetensors",

|

| 22 |

+

"text_model.model.transformer.h.1.ln.weight": "model-00001-of-00002.safetensors",

|

| 23 |

+

"text_model.model.transformer.h.1.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 24 |

+

"text_model.model.transformer.h.1.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 25 |

+

"text_model.model.transformer.h.1.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 26 |

+

"text_model.model.transformer.h.1.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 27 |

+

"text_model.model.transformer.h.1.mlp.fc1.bias": "model-00001-of-00002.safetensors",

|

| 28 |

+

"text_model.model.transformer.h.1.mlp.fc1.weight": "model-00001-of-00002.safetensors",

|

| 29 |

+

"text_model.model.transformer.h.1.mlp.fc2.bias": "model-00001-of-00002.safetensors",

|

| 30 |

+

"text_model.model.transformer.h.1.mlp.fc2.weight": "model-00001-of-00002.safetensors",

|

| 31 |

+

"text_model.model.transformer.h.10.ln.bias": "model-00001-of-00002.safetensors",

|

| 32 |

+

"text_model.model.transformer.h.10.ln.weight": "model-00001-of-00002.safetensors",

|

| 33 |

+

"text_model.model.transformer.h.10.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 34 |

+

"text_model.model.transformer.h.10.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 35 |

+

"text_model.model.transformer.h.10.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 36 |

+

"text_model.model.transformer.h.10.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 37 |

+

"text_model.model.transformer.h.10.mlp.fc1.bias": "model-00001-of-00002.safetensors",

|

| 38 |

+

"text_model.model.transformer.h.10.mlp.fc1.weight": "model-00001-of-00002.safetensors",

|

| 39 |

+

"text_model.model.transformer.h.10.mlp.fc2.bias": "model-00001-of-00002.safetensors",

|

| 40 |

+

"text_model.model.transformer.h.10.mlp.fc2.weight": "model-00001-of-00002.safetensors",

|

| 41 |

+

"text_model.model.transformer.h.11.ln.bias": "model-00001-of-00002.safetensors",

|

| 42 |

+

"text_model.model.transformer.h.11.ln.weight": "model-00001-of-00002.safetensors",

|

| 43 |

+

"text_model.model.transformer.h.11.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 44 |

+

"text_model.model.transformer.h.11.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 45 |

+

"text_model.model.transformer.h.11.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 46 |

+

"text_model.model.transformer.h.11.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 47 |

+

"text_model.model.transformer.h.11.mlp.fc1.bias": "model-00001-of-00002.safetensors",

|

| 48 |

+

"text_model.model.transformer.h.11.mlp.fc1.weight": "model-00001-of-00002.safetensors",

|

| 49 |

+

"text_model.model.transformer.h.11.mlp.fc2.bias": "model-00001-of-00002.safetensors",

|

| 50 |

+

"text_model.model.transformer.h.11.mlp.fc2.weight": "model-00001-of-00002.safetensors",

|

| 51 |

+

"text_model.model.transformer.h.12.ln.bias": "model-00001-of-00002.safetensors",

|

| 52 |

+

"text_model.model.transformer.h.12.ln.weight": "model-00001-of-00002.safetensors",

|

| 53 |

+

"text_model.model.transformer.h.12.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 54 |

+

"text_model.model.transformer.h.12.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 55 |

+

"text_model.model.transformer.h.12.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 56 |

+

"text_model.model.transformer.h.12.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 57 |

+

"text_model.model.transformer.h.12.mlp.fc1.bias": "model-00001-of-00002.safetensors",

|

| 58 |

+

"text_model.model.transformer.h.12.mlp.fc1.weight": "model-00001-of-00002.safetensors",

|

| 59 |

+

"text_model.model.transformer.h.12.mlp.fc2.bias": "model-00001-of-00002.safetensors",

|

| 60 |

+

"text_model.model.transformer.h.12.mlp.fc2.weight": "model-00001-of-00002.safetensors",

|

| 61 |

+

"text_model.model.transformer.h.13.ln.bias": "model-00001-of-00002.safetensors",

|

| 62 |

+

"text_model.model.transformer.h.13.ln.weight": "model-00001-of-00002.safetensors",

|

| 63 |

+

"text_model.model.transformer.h.13.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",

|

| 64 |

+

"text_model.model.transformer.h.13.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",

|

| 65 |

+

"text_model.model.transformer.h.13.mixer.out_proj.bias": "model-00001-of-00002.safetensors",

|

| 66 |

+

"text_model.model.transformer.h.13.mixer.out_proj.weight": "model-00001-of-00002.safetensors",

|

| 67 |

+

"text_model.model.transformer.h.13.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 68 |

+

"text_model.model.transformer.h.13.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

| 69 |

+

"text_model.model.transformer.h.13.mlp.fc2.bias": "model-00002-of-00002.safetensors",

|

| 70 |

+

"text_model.model.transformer.h.13.mlp.fc2.weight": "model-00002-of-00002.safetensors",

|

| 71 |

+

"text_model.model.transformer.h.14.ln.bias": "model-00002-of-00002.safetensors",

|

| 72 |

+

"text_model.model.transformer.h.14.ln.weight": "model-00002-of-00002.safetensors",

|

| 73 |

+

"text_model.model.transformer.h.14.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",

|

| 74 |

+

"text_model.model.transformer.h.14.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",

|

| 75 |

+

"text_model.model.transformer.h.14.mixer.out_proj.bias": "model-00002-of-00002.safetensors",

|

| 76 |

+

"text_model.model.transformer.h.14.mixer.out_proj.weight": "model-00002-of-00002.safetensors",

|

| 77 |

+

"text_model.model.transformer.h.14.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 78 |

+

"text_model.model.transformer.h.14.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

| 79 |

+

"text_model.model.transformer.h.14.mlp.fc2.bias": "model-00002-of-00002.safetensors",

|

| 80 |

+

"text_model.model.transformer.h.14.mlp.fc2.weight": "model-00002-of-00002.safetensors",

|

| 81 |

+

"text_model.model.transformer.h.15.ln.bias": "model-00002-of-00002.safetensors",

|

| 82 |

+

"text_model.model.transformer.h.15.ln.weight": "model-00002-of-00002.safetensors",

|

| 83 |

+

"text_model.model.transformer.h.15.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",

|

| 84 |

+

"text_model.model.transformer.h.15.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",

|

| 85 |

+

"text_model.model.transformer.h.15.mixer.out_proj.bias": "model-00002-of-00002.safetensors",

|

| 86 |

+

"text_model.model.transformer.h.15.mixer.out_proj.weight": "model-00002-of-00002.safetensors",

|

| 87 |

+

"text_model.model.transformer.h.15.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 88 |

+

"text_model.model.transformer.h.15.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

| 89 |

+

"text_model.model.transformer.h.15.mlp.fc2.bias": "model-00002-of-00002.safetensors",

|

| 90 |

+

"text_model.model.transformer.h.15.mlp.fc2.weight": "model-00002-of-00002.safetensors",

|

| 91 |

+

"text_model.model.transformer.h.16.ln.bias": "model-00002-of-00002.safetensors",

|

| 92 |

+

"text_model.model.transformer.h.16.ln.weight": "model-00002-of-00002.safetensors",

|

| 93 |

+

"text_model.model.transformer.h.16.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",

|

| 94 |

+

"text_model.model.transformer.h.16.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",

|

| 95 |

+

"text_model.model.transformer.h.16.mixer.out_proj.bias": "model-00002-of-00002.safetensors",

|

| 96 |

+

"text_model.model.transformer.h.16.mixer.out_proj.weight": "model-00002-of-00002.safetensors",

|

| 97 |

+

"text_model.model.transformer.h.16.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 98 |

+

"text_model.model.transformer.h.16.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

| 99 |

+

"text_model.model.transformer.h.16.mlp.fc2.bias": "model-00002-of-00002.safetensors",

|

| 100 |

+

"text_model.model.transformer.h.16.mlp.fc2.weight": "model-00002-of-00002.safetensors",

|

| 101 |

+

"text_model.model.transformer.h.17.ln.bias": "model-00002-of-00002.safetensors",

|

| 102 |

+

"text_model.model.transformer.h.17.ln.weight": "model-00002-of-00002.safetensors",

|

| 103 |

+

"text_model.model.transformer.h.17.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",

|

| 104 |

+

"text_model.model.transformer.h.17.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",

|

| 105 |

+

"text_model.model.transformer.h.17.mixer.out_proj.bias": "model-00002-of-00002.safetensors",

|

| 106 |

+

"text_model.model.transformer.h.17.mixer.out_proj.weight": "model-00002-of-00002.safetensors",

|

| 107 |

+

"text_model.model.transformer.h.17.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 108 |

+

"text_model.model.transformer.h.17.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

| 109 |

+

"text_model.model.transformer.h.17.mlp.fc2.bias": "model-00002-of-00002.safetensors",

|

| 110 |

+

"text_model.model.transformer.h.17.mlp.fc2.weight": "model-00002-of-00002.safetensors",

|

| 111 |

+

"text_model.model.transformer.h.18.ln.bias": "model-00002-of-00002.safetensors",

|

| 112 |

+

"text_model.model.transformer.h.18.ln.weight": "model-00002-of-00002.safetensors",

|

| 113 |

+

"text_model.model.transformer.h.18.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",

|

| 114 |

+

"text_model.model.transformer.h.18.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",

|

| 115 |

+

"text_model.model.transformer.h.18.mixer.out_proj.bias": "model-00002-of-00002.safetensors",

|

| 116 |

+

"text_model.model.transformer.h.18.mixer.out_proj.weight": "model-00002-of-00002.safetensors",

|

| 117 |

+

"text_model.model.transformer.h.18.mlp.fc1.bias": "model-00002-of-00002.safetensors",

|

| 118 |

+

"text_model.model.transformer.h.18.mlp.fc1.weight": "model-00002-of-00002.safetensors",

|

+ "text_model.model.transformer.h.18.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.18.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.ln.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.ln.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mixer.out_proj.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mixer.out_proj.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mlp.fc1.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mlp.fc1.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.19.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.2.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.2.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.20.ln.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.ln.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mixer.out_proj.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mixer.out_proj.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mlp.fc1.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mlp.fc1.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.20.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.ln.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.ln.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mixer.out_proj.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mixer.out_proj.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mlp.fc1.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mlp.fc1.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.21.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.ln.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.ln.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mixer.out_proj.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mixer.out_proj.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mlp.fc1.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mlp.fc1.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.22.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.ln.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.ln.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mixer.Wqkv.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mixer.Wqkv.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mixer.out_proj.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mixer.out_proj.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mlp.fc1.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mlp.fc1.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mlp.fc2.bias": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.23.mlp.fc2.weight": "model-00002-of-00002.safetensors",
+ "text_model.model.transformer.h.3.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.3.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.4.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.5.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.6.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.7.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.8.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.ln.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.ln.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mixer.Wqkv.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mixer.Wqkv.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mixer.out_proj.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mixer.out_proj.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "text_model.model.transformer.h.9.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.0.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.1.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.10.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.11.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.12.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.norm2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.13.norm2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.attn.proj.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.attn.proj.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.attn.qkv.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.attn.qkv.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.mlp.fc1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.mlp.fc1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.mlp.fc2.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.mlp.fc2.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.norm1.bias": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.norm1.weight": "model-00001-of-00002.safetensors",
+ "vision_encoder.model.encoder.model.visual.blocks.14.norm2.bias": "model-00001-of-00002.safetensors",

|

| 334 |

+