Commit

•

deb1165

1

Parent(s):

ccb45a4

Create README.md

Browse files

README.md

ADDED

|

@@ -0,0 +1,344 @@


| 1 |

+

language:

|

| 2 |

+

- zh

|

| 3 |

+

- en

|

| 4 |

+

pipeline_tag: text-generation

|

| 5 |

+

base_model: yentinglin/Llama-3-Taiwan-8B-Instruct-DPO

|

| 6 |

+

tags:

|

| 7 |

+

- zhtw

|

| 8 |

+

license: llama3

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

## Description

|

| 12 |

+

|

| 13 |

+

This repo contains GGUF format model files for [yentinglin/Llama-3-Taiwan-8B-Instruct-DPO](https://huggingface.co/yentinglin/Llama-3-Taiwan-8B-Instruct-DPO).

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

## Provided files

|

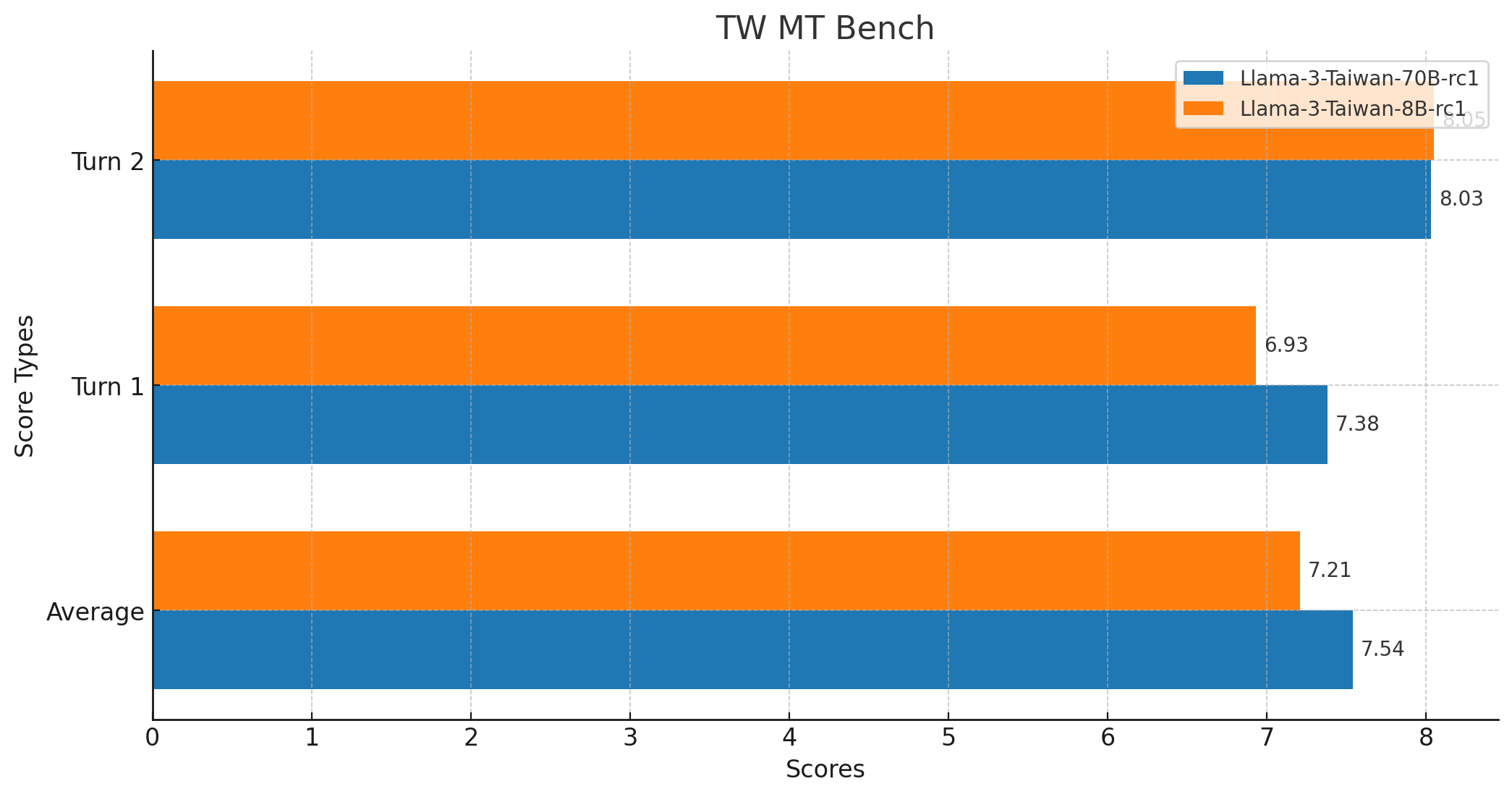

| 17 |

+

| Name | Quant method | Bits | Size | Use case |

|

| 18 |

+

| ---- | ---- | ---- | ---- | ---- |

|

| 19 |

+

| [llama-3-taiwan-8b-instruct-dpo-q5_0.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q5_0.gguf) | Q5_0 | 5 | 5.6 GB| legacy; medium, balanced quality |

|

| 20 |

+

| [llama-3-taiwan-8b-instruct-dpo-q5_1.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q5_1.gguf) | Q5_1 | 5 | 6.07 GB| large, low quality loss |

|

| 21 |

+

| [llama-3-taiwan-8b-instruct-dpo-q5_k_s.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q5_k_s.gguf) | Q5_K_S | 5 | 5.6 GB| large, very low quality loss |

|

| 22 |

+

| [llama-3-taiwan-8b-instruct-dpo-q5_k_m.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q5_k_m.gguf) | Q5_K_M | 5 | 5.73 GB| large, very low quality loss |

|

| 23 |

+

| [llama-3-taiwan-8b-instruct-dpo-q6_k.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q6_k.gguf) | Q6_K | 6 | 6.6 GB| very large, extremely low quality loss |

|

| 24 |

+

| [llama-3-taiwan-8b-instruct-dpo-q8_0.gguf](https://huggingface.co/chienweichang/Llama-3-Taiwan-8B-Instruct-GGUF/blob/main/llama-3-taiwan-8b-instruct-dpo-q8_0.gguf) | Q8_0 | 8 | 8.54 GB| very large, extremely low quality loss |
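One way to choose among the files above is to take the largest one that fits a memory budget. The following is a minimal sketch under stated assumptions: the sizes are copied from the table, while `pick_quant` and its `headroom_gb` allowance are hypothetical names introduced here, and the 1 GB default is a guess rather than a measured requirement.

```python
# File sizes in GB, copied from the "Provided files" table.
QUANT_SIZES_GB = {
    "Q5_0": 5.6,
    "Q5_1": 6.07,
    "Q5_K_S": 5.6,
    "Q5_K_M": 5.73,
    "Q6_K": 6.6,
    "Q8_0": 8.54,
}


def pick_quant(budget_gb, headroom_gb=1.0):
    """Return the largest quant whose file fits in budget_gb minus headroom_gb.

    headroom_gb is a hypothetical allowance for the KV cache and runtime
    buffers; it is an assumption, not a figure from the model card.
    Returns None when no file fits.
    """
    usable = budget_gb - headroom_gb
    fitting = {name: size for name, size in QUANT_SIZES_GB.items() if size <= usable}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)


print(pick_quant(8.0))   # Q6_K
print(pick_quant(16.0))  # Q8_0
```

Actual memory use depends on the runtime and the context length, so treat the result as a starting point only.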

|

| 25 |

+

|

| 26 |

+

## Original model card

|

| 27 |

+

|

| 28 |

+

<img src="https://cdn-uploads.huggingface.co/production/uploads/5df9c78eda6d0311fd3d541f/vlfv5sHbt4hBxb3YwULlU.png" alt="Taiwan LLM Logo" width="600" style="margin-left:auto; margin-right:auto; display:block"/>

|

| 29 |

+

|

| 30 |

+

# 🚀 [Demo Site](https://twllm.com/)

|

| 31 |

+

|

| 32 |

+

Try out Llama-3-Taiwan interactively at [twllm.com](https://twllm.com/)

|

| 33 |

+

|

| 34 |

+

# ⚔️ [Chatbot Arena](https://arena.twllm.com/)

|

| 35 |

+

|

| 36 |

+

Participate in the exciting [Chatbot Arena](https://arena.twllm.com/) and compete against other chatbots!

|

| 37 |

+

|

| 38 |

+

🚀 We're excited to introduce Llama-3-Taiwan-70B! Llama-3-Taiwan-70B is a 70B parameter model finetuned on a large corpus of Traditional Mandarin and English data using the Llama-3 architecture. It demonstrates state-of-the-art performance on various Traditional Mandarin NLP benchmarks.

|

| 39 |

+

|

| 40 |

+

The model was trained with [NVIDIA NeMo™ Framework](https://www.nvidia.com/en-us/ai-data-science/generative-ai/nemo-framework/) using the NVIDIA Taipei-1 built with [NVIDIA DGX H100](https://www.nvidia.com/en-us/data-center/dgx-h100/) systems.

|

| 41 |

+

|

| 42 |

+

The compute and data for training Llama-3-Taiwan-70B were generously sponsored by [Chang Gung Memorial Hospital](https://www.cgmh.org.tw/eng), [Chang Chun Group](https://www.ccp.com.tw/ccpweb.nsf/homepage?openagent), [Legalsign.ai](https://legalsign.ai/), [NVIDIA](https://www.nvidia.com/zh-tw/), [Pegatron](https://www.pegatroncorp.com/), [TechOrange](https://buzzorange.com/techorange/), and [Unimicron](https://www.unimicron.com/) (in alphabetical order).

|

| 43 |

+

|

| 44 |

+

We would like to acknowledge the [contributions](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc1#contributions) of our data providers, team members and advisors in the development of this model, including [shasha77](https://www.youtube.com/@shasha77) for high-quality YouTube scripts and study materials, [Taiwan AI Labs](https://ailabs.tw/) for providing local media content, [Ubitus K.K.](https://ubitus.net/zh/) for offering gaming content, Professor Yun-Nung (Vivian) Chen for her guidance and advisement, Wei-Lin Chen for leading our pretraining data pipeline, Tzu-Han Lin for synthetic data generation, Chang-Sheng Kao for enhancing our synthetic data quality, and Kang-Chieh Chen for cleaning instruction-following data.

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

# Model Summary

|

| 48 |

+

|

| 49 |

+

Llama-3-Taiwan-70B is a large language model finetuned for Traditional Mandarin and English users. It has strong capabilities in language understanding, generation, reasoning, and multi-turn dialogue. Key features include:

|

| 50 |

+

|

| 51 |

+

- 70B parameters

|

| 52 |

+

- Languages: Traditional Mandarin (zh-tw), English (en)

|

| 53 |

+

- Finetuned on a high-quality Traditional Mandarin and English corpus covering general knowledge as well as industrial knowledge in the legal, manufacturing, medical, and electronics domains

|

| 54 |

+

- 8K context length

|

| 55 |

+

- Open model released under the Llama-3 license

|

| 56 |

+

|

| 57 |

+

# Training Details

|

| 58 |

+

|

| 59 |

+

- Training Framework: [NVIDIA NeMo](https://www.nvidia.com/zh-tw/ai-data-science/products/nemo/), [NVIDIA NeMo Megatron](https://docs.nvidia.com/nemo-framework/user-guide/latest/nemotoolkit/nlp/megatron.html)

|

| 60 |

+

- Inference Framework: [NVIDIA TensorRT-LLM](https://github.com/NVIDIA/TensorRT-LLM)

|

| 61 |

+

- Base model: [Llama-3 70B](https://llama.meta.com/llama3/)

|

| 62 |

+

- Hardware: [NVIDIA DGX H100](https://www.nvidia.com/zh-tw/data-center/dgx-h100/) on Taipei-1

|

| 63 |

+

- Context length: 8K tokens ([128k version](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-128k))

|

| 64 |

+

- Batch size: 2M tokens per step
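As a rough sanity check, the context length and batch size above can be combined into a number of full-length sequences per optimizer step. This is only a sketch: it assumes "8K" means 8192 tokens and "2M" means 2**21 tokens, and that every sequence is packed to full length, none of which the model card states.

```python
CONTEXT_LENGTH = 8 * 1024          # "8K tokens", assumed to mean 8192
TOKENS_PER_STEP = 2 * 1024 * 1024  # "2M tokens per step", assumed to mean 2**21

# Number of full-length sequences that make up one step under these assumptions.
sequences_per_step = TOKENS_PER_STEP // CONTEXT_LENGTH
print(sequences_per_step)  # 256
```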

|

| 65 |

+

|

| 66 |

+

# Evaluation

|

| 67 |

+

|

| 68 |

+

Check out the [Open TW LLM Leaderboard](https://huggingface.co/spaces/yentinglin/open-tw-llm-leaderboard) for the full and updated list.

|

| 69 |

+

|

| 70 |

+

| Model | [TMLU](https://arxiv.org/pdf/2403.20180) | Taiwan Truthful QA | [Legal Eval](https://huggingface.co/datasets/lianghsun/tw-legal-benchmark-v1) | [TW MT-Bench](https://huggingface.co/datasets/MediaTek-Research/TCEval-v2) | Long context | Function Calling | [TMMLU+](https://github.com/iKala/ievals) |

|

| 71 |

+

|---------------------------------------------------------------------------------|--------------|---------------|--------------------|--------------|--------------|-----------------|-----------|

|

| 72 |

+

| | Subject knowledge | Taiwan localization test | Taiwan legal exam questions | Chinese multi-turn dialogue | Long-context support | Function calling | |

|

| 73 |

+

| [**yentinglin/Llama-3-Taiwan-70B-Instruct**](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct) | **74.76%** | 80.95% | 68.42% | 7.54 | [128k version](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-128k) | ✅ | 67.53% |

|

| 74 |

+

| [**yentinglin/Llama-3-Taiwan-70B-Instruct-DPO**](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-DPO) | 74.60% | **81.75%** | **70.33%** | - | - | ✅ | - |

|

| 75 |

+

| [**yentinglin/Llama-3-Taiwan-70B-Instruct-128k**](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-128k) | 73.01% | 80.16% | 63.64% | - | - | ✅ | - |

|

| 76 |

+

| [**yentinglin/Llama-3-Taiwan-8B-Instruct**](https://huggingface.co/yentinglin/Llama-3-Taiwan-8B-Instruct) | 59.50% | 61.11% | 53.11% | 7.21 | [128k version](https://huggingface.co/yentinglin/Llama-3-Taiwan-8B-Instruct-128k) | ✅ | 52.28% |

|

| 77 |

+

| [**yentinglin/Llama-3-Taiwan-8B-Instruct-DPO**](https://huggingface.co/yentinglin/Llama-3-Taiwan-8B-Instruct-DPO) | 59.88% | 59.52% | 52.63% | - | - | ✅ | - |

|

| 78 |

+

| [**yentinglin/Llama-3-Taiwan-8B-Instruct-128k**](https://huggingface.co/yentinglin/Llama-3-Taiwan-8B-Instruct-128k) | - | - | - | - | - | ✅ | - |

|

| 79 |

+

| [Claude-3-Opus](https://www.anthropic.com/api) | [73.59% (5-shot)](https://arxiv.org/pdf/2403.20180) | [69.84%](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc3/tree/main/opus-Taiwan-Truthful-QA) | [60.29%](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc3/tree/main/opus) | - | 200k | ✅ | - |

|

| 80 |

+

| [GPT4-o](https://platform.openai.com/docs/api-reference/chat/create) | [65.56% (0-shot), 69.88% (5-shot)](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc3/tree/main/4o-tmlu) | [76.98%](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc3/tree/main/4o-Taiwan-Truthful-QA) | [53.59%](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc3/tree/main/4o) | - | 128k | ✅ | - |

|

| 81 |

+

| [GPT4-turbo](https://platform.openai.com/docs/api-reference/chat/create) | [70.42% (5-shot)](https://arxiv.org/pdf/2403.20180) | - | - | - | 128k | ✅ | 60.34%^ |

|

| 82 |

+

| [Gemini-Pro](https://ai.google.dev/gemini-api/docs) | [61.40% (5-shot)](https://arxiv.org/pdf/2403.20180) | - | - | - | 1000k | ✅ | 49.92%^ |

|

| 83 |

+

| [GPT-3.5-turbo-1106](https://platform.openai.com/docs/api-reference/chat/create) | [49.37% (5-shot)](https://arxiv.org/pdf/2403.20180) | - | - | 7.1 | 128k | ✅ | 41.76%^ |

|

| 84 |

+

| [Qwen1.5-110B-Chat](https://huggingface.co/Qwen/Qwen1.5-110B-Chat) | **75.69%** | 66.67% | 49.28% | - | 32k | ✅ | 65.81% |

|

| 85 |

+

| [Yi-34B-Chat](https://huggingface.co/01-ai/Yi-34B-Chat) | 73.59% | 71.43% | 55.02% | 6.9 | 200k | ✅ | 64.10% |

|

| 86 |

+

| [Meta-Llama-3-70B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-70B-Instruct) | 70.95% | 65.08% | 52.63% | - | 8k | ✅ | 62.75% |

|

| 87 |

+

| [Mixtral-8x22B-Instruct-v0.1](https://huggingface.co/mistralai/Mixtral-8x22B-Instruct-v0.1) | 55.57% | 52.38% | 44.98% | - | 64k | ✅ | 52.16% |

|

| 88 |

+

| [Breexe-8x7B-Instruct-v0_1](https://huggingface.co/MediaTek-Research/Breexe-8x7B-Instruct-v0_1) | - | - | - | 7.2 | 8k | ❓ | 48.92% |

|

| 89 |

+

| [c4ai-command-r-plus](https://huggingface.co/CohereForAI/c4ai-command-r-plus) | 62.87% | 64.29% | 34.45% | - | 128k | ✅ | 49.75% |

|

| 90 |

+

| [Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct) | 55.81% | 46.83% | 35.89% | - | 8k | ✅ | 43.38% |

|

| 91 |

+

| [Breeze-7B-Instruct-v1_0](https://huggingface.co/MediaTek-Research/Breeze-7B-Instruct-v1_0) | 55.57% | 52.38% | 39.23% | 6.0 | 32k | ❓ | 41.77% |

|

| 92 |

+

| [Llama3-TAIDE-LX-8B-Chat-Alpha1](https://huggingface.co/taide/Llama3-TAIDE-LX-8B-Chat-Alpha1) | 47.30% | 50.79% | 37.80% | - | 8k | ❓ | 39.03% |

|

| 93 |

+

| [Phi-3-mini-4k-instruct](https://huggingface.co/microsoft/Phi-3-mini-4k-instruct) | 40.97% | 37.30% | 27.27% | - | 4k | ❓ | 33.02% |

|

| 94 |

+

|

| 95 |

+

Numbers are 0-shot by default.

|

| 96 |

+

|

| 97 |

+

[Eval implementation](https://github.com/adamlin120/lm-evaluation-harness)

|

| 98 |

+

|

| 99 |

+

^ Taken from the closest matching numbers in the original dataset.

|

| 100 |

+

|

| 101 |

+

## Needle in a Haystack Evaluation

|

| 102 |

+

|

| 103 |

+

The "Needle in a 出師表" evaluation tests the model's ability to locate and recall important information embedded within a large body of text, using the classic Chinese text 《出師表》 by 諸葛亮.

|

| 104 |

+

|

| 105 |

+

To run the evaluation, use the [script](https://github.com/adamlin120/needle-haystack/tree/main).
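The idea behind a needle-in-a-haystack test can be sketched in a few lines: hide a fact at a chosen depth in a long text, ask about it, and check whether the answer contains it. The sketch below is not the linked script. `build_haystack_prompt` and `recalled` are hypothetical helpers, and the strings are placeholders standing in for the text of 《出師表》.

```python
def build_haystack_prompt(haystack, needle, depth, question):
    """Insert needle into haystack at a relative depth in [0, 1], then append a question."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    position = int(len(haystack) * depth)
    context = haystack[:position] + needle + haystack[position:]
    return f"{context}\n\n{question}"


def recalled(answer, expected):
    """Crude scoring rule: does the model's answer contain the expected fact?"""
    return expected in answer


# Placeholder strings; a real run would use the long source text instead.
haystack = "filler sentence. " * 4
needle = "The secret number is 42. "
prompt = build_haystack_prompt(haystack, needle, 0.5, "What is the secret number?")
print(prompt)
```

Sweeping `depth` and the haystack length gives the usual grid of recall results; substring matching is a simplification, and a real harness may score answers differently.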

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

# TW MT-Bench Score

|

| 109 |

+

|

| 110 |

+

- Average Score: 7.5375

|

| 111 |

+

- Maximum Score: 10

|

| 112 |

+

- Minimum Score: 1

|

| 113 |

+

- Median Score: 9.0

|

| 114 |

+

- Standard Deviation: 3.0349783771882133

|

| 115 |

+

- Total Number of Scores: 160

|

| 116 |

+

- [Model response](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc1/blob/main/Llama-3-Taiwan.jsonl)

|

| 117 |

+

- [GPT-4 Eval](https://huggingface.co/yentinglin/Llama-3-Taiwan-70B-Instruct-rc1/blob/main/gpt-4_single.jsonl)

|

| 118 |

+

- [Code fork from `mtkresearch/TCEval` with bug fixing](https://github.com/adamlin120/TCEval)
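Summary statistics like the ones listed above can be recomputed from a list of per-question scores with the standard library. This is a minimal sketch with made-up scores. The model card does not say whether its standard deviation is the population or the sample version, so the use of `statistics.pstdev` here is an assumption.

```python
import statistics


def summarize(scores):
    """Return the summary statistics reported for a list of judge scores."""
    return {
        "average": statistics.mean(scores),
        "maximum": max(scores),
        "minimum": min(scores),
        "median": statistics.median(scores),
        # Population standard deviation; swap in statistics.stdev for the sample version.
        "stdev": statistics.pstdev(scores),
        "count": len(scores),
    }


# Made-up scores for illustration; the real run has 160 of them.
scores = [10, 9, 9, 1, 8, 10]
summary = summarize(scores)
print(summary)
```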

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

# Use Cases

|

| 125 |

+

|

| 126 |

+

Llama-3-Taiwan-70B can be applied to a wide variety of NLP tasks in Traditional Chinese and English, including:

|

| 127 |

+

|

| 128 |

+

<details>

|

| 129 |

+

<summary>1. Multi-turn dialogue</summary>

|

| 130 |

+

|

| 131 |

+

<pre>

|

| 132 |

+

System: You are an AI assistant called Twllm, created by TAME (TAiwan Mixture of Expert) project.

|

| 133 |

+

|

| 134 |

+

User: 嗨,你好!

|

| 135 |

+

|

| 136 |

+

Assistant: 你好!我今天可以如何協助你?

|

| 137 |

+

|

| 138 |

+

User: 就想聊天而已....

|

| 139 |

+

|

| 140 |

+

Assistant: 當然,我很樂意和你聊天!有什麼特別想談論的嗎?

|

| 141 |

+

</pre>

|

| 142 |

+

|

| 143 |

+

</details>

|

| 144 |

+

|

| 145 |

+

<details>

|

| 146 |

+

<summary>2. RAG (retrieval-augmented generation)</summary>

|

| 147 |

+

|

| 148 |

+

Demo: turn on `Search Web` on [twllm.com](https://twllm.com/)

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

|

| 152 |

+

|

| 153 |

+

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

<pre>

|

| 157 |

+

System: You are an AI assistant called Twllm, created by TAME (TAiwan Mixture of Expert) project.

|

| 158 |

+

|

| 159 |

+

User: 明天台大天氣如何?

|

| 160 |

+

|

| 161 |

+

Assistant: 根據天氣預報,明天台大天氣為陣雨。低溫 24°C。10 到 15 公里/小時 的 東 風 。降雨機率為 40%。

|

| 162 |

+

</pre>

|

| 163 |

+

|

| 164 |

+

</details>

|

| 165 |

+

|

| 166 |

+

<details>

|

| 167 |

+

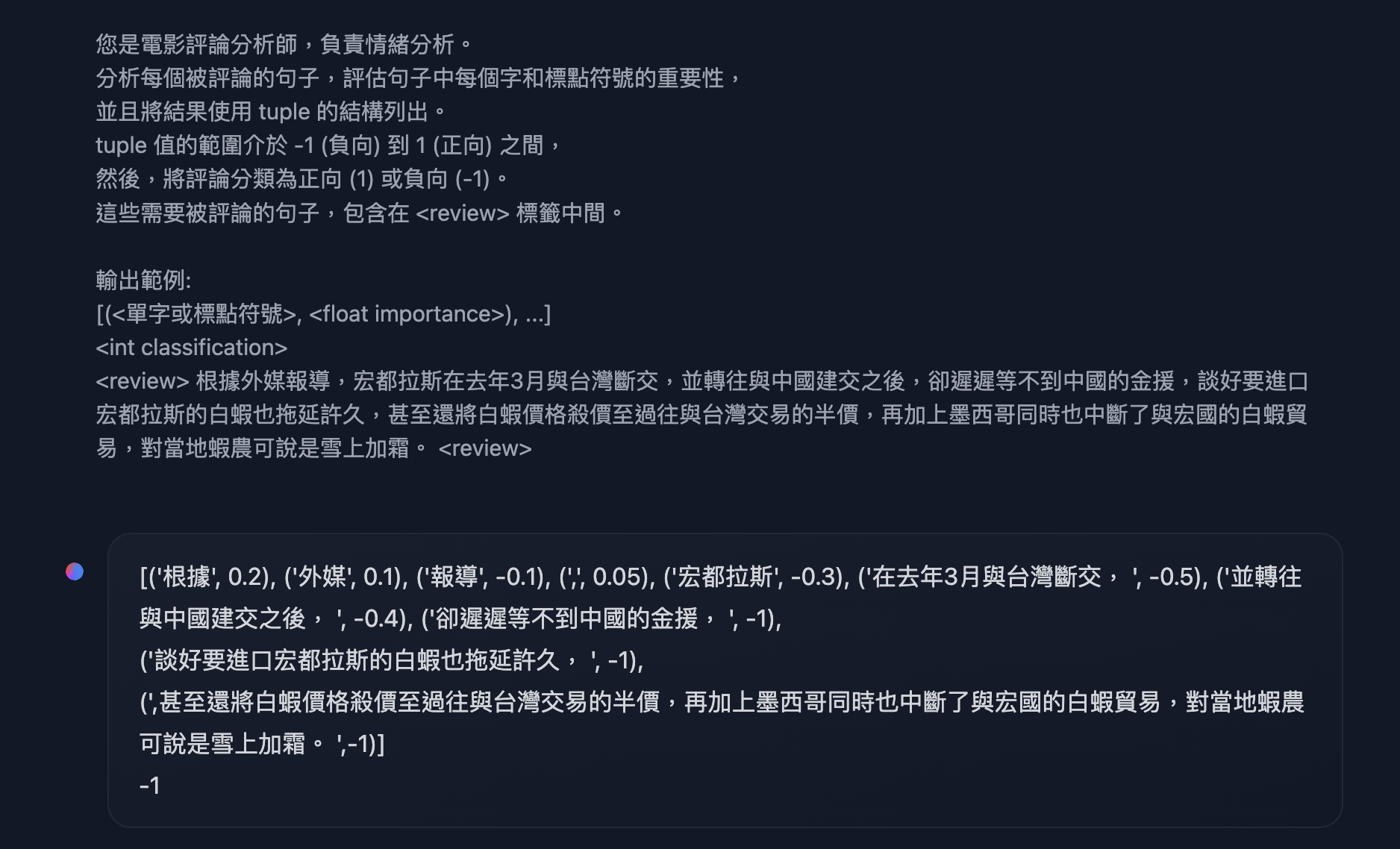

<summary>3. Formatted output, language understanding, entity recognition, function calling</summary>

|

| 168 |

+

|

| 169 |

+

If you are interested in function-calling, I strongly recommend [using constrained decoding](https://huggingface.co/docs/text-generation-inference/basic_tutorials/using_guidance) to turn on `json mode`.

|

| 170 |

+

|

| 171 |

+

Example from HW7 in `INTRODUCTION TO GENERATIVE AI 2024 SPRING` from `HUNG-YI LEE (李宏毅)`

|

| 172 |

+

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

|

| 176 |

+

<pre>

|

| 177 |

+

System: You are an AI assistant called Twllm, created by TAME (TAiwan Mixture of Expert) project.

|

| 178 |

+

|

| 179 |

+

User: 您是電影評論分析師,負責情緒分析。

|

| 180 |

+

分析每個被評論的句子,評估句子中每個字和標點符號的重要性,

|

| 181 |

+

並且將結果使用 tuple 的結構列出。

|

| 182 |

+

tuple 值的範圍介於 -1 (負向) 到 1 (正向) 之間,

|

| 183 |

+

然後,將評論分類為正向 (1) 或負向 (-1)。

|

| 184 |

+

這些需要被評論的句子,包含在 <review> 標籤中間。

|

| 185 |

+

|

| 186 |

+

輸出範例:

|

| 187 |

+

[(<單字或標點符號>, <float importance>), ...]

|

| 188 |

+

<int classification>

|

| 189 |

+

<review> 根據外媒報導,宏都拉斯在去年3月與台灣斷交,並轉往與中國建交之後,卻遲遲等不到中國的金援,談好要進口宏都拉斯的白蝦也拖延許久,甚至還將白蝦價格殺價至過往與台灣交易的半價,再加上墨西哥同時也中斷了與宏國的白蝦貿易,對當地蝦農可說是雪上加霜。 <review>

|

| 190 |

+

|

| 191 |

+

|

| 192 |

+

Assistant: [('根據', 0.2), ('外媒', 0.1), ('報導', -0.1), (',', 0.05), ('宏都拉斯', -0.3), ('在去年3月與台灣斷交, ', -0.5), ('並轉往與中國建交之後, ', -0.4), ('卻遲遲等不到中國的金援, ', -1),

|

| 193 |

+

('談好要進口宏都拉斯的白蝦也拖延許久, ', -1),

|

| 194 |

+

(',甚至還將白蝦價格殺價至過往與台灣交易的半價,再加上墨西哥同時也中斷了與宏國的白蝦貿易,對當地蝦農可說是雪上加霜。 ',-1)]

|

| 195 |

+

-1

|

| 196 |

+

|

| 197 |

+

</pre>

|

| 198 |

+

</details>
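Output in the format of the third example (a list of `(token, importance)` tuples followed by a class label on its own line) can be checked on the client side before use. The following is a minimal sketch, assuming the model follows the requested format exactly. `parse_sentiment_output` is a hypothetical helper and the sample string is made up.

```python
import ast


def parse_sentiment_output(text):
    """Split model output into the (token, importance) list and the final class label."""
    lines = [line.strip() for line in text.strip().splitlines() if line.strip()]
    label = int(lines[-1])  # last non-empty line: 1 (positive) or -1 (negative)
    # The list may span several lines, so join them before parsing the literal.
    pairs = ast.literal_eval(" ".join(lines[:-1]))
    if label not in (-1, 1):
        raise ValueError(f"unexpected class label: {label}")
    for token, importance in pairs:
        if not -1.0 <= importance <= 1.0:
            raise ValueError(f"importance out of range for {token!r}: {importance}")
    return pairs, label


# Made-up output in the requested format.
sample = "[('great', 0.9), ('movie', 0.1),\n('!', 0.3)]\n1"
pairs, label = parse_sentiment_output(sample)
print(pairs, label)
```

`ast.literal_eval` only accepts Python literals, so malformed or unexpected output raises an exception instead of being executed.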

|

| 199 |

+

|

| 200 |

+

|

| 201 |

+

|

| 202 |

+

|

| 203 |

+

|

| 204 |

+

# Get Started

|

| 205 |

+

|

| 206 |

+

*Caveat: System message should always be set.*
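A small guard can enforce this caveat before a request is sent. This is a sketch only: `with_system_message` is a hypothetical helper, and the default prompt is the system message used in the examples on this card.

```python
DEFAULT_SYSTEM_PROMPT = (
    "You are an AI assistant called Twllm, created by TAME "
    "(TAiwan Mixture of Expert) project."
)


def with_system_message(messages, system_prompt=DEFAULT_SYSTEM_PROMPT):
    """Return a copy of messages that is guaranteed to start with a system message."""
    if messages and messages[0].get("role") == "system":
        return list(messages)
    return [{"role": "system", "content": system_prompt}] + list(messages)


chat = with_system_message([{"role": "user", "content": "Tell me a joke."}])
print(chat[0]["role"])  # system
```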

|

| 207 |

+

|

| 208 |

+

## Hugging Face Transformers library

|

| 209 |

+

You can use Llama-3-Taiwan-70B with the Hugging Face Transformers library:

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

```python
import torch
from transformers import pipeline, StoppingCriteria


# Custom stopping criteria: stop once the most recent tokens match an EOS sequence
class EosListStoppingCriteria(StoppingCriteria):
    def __init__(self, eos_sequence=(128256,)):
        # Stored as a list so it can be compared with the output of .tolist()
        self.eos_sequence = list(eos_sequence)

    def __call__(self, input_ids: torch.LongTensor, scores: torch.FloatTensor, **kwargs) -> bool:
        # Take the trailing tokens of every sequence in the batch
        last_ids = input_ids[:, -len(self.eos_sequence):].tolist()
        return self.eos_sequence in last_ids


# Initialize the model with automatic device mapping
llm = pipeline("text-generation", model="yentinglin/Llama-3-Taiwan-70B-Instruct-rc1", device_map="auto")
tokenizer = llm.tokenizer

# Define a conversation example (the system message should always be set)
chat = [
    {"role": "system", "content": "You are an AI assistant called Twllm, created by TAME (TAiwan Mixture of Expert) project."},
    # "Hello, what tasks can you complete?"
    {"role": "user", "content": "你好,請問你可以完成什麼任務?"},
    # The assistant lists what it can help with (answering questions, advice, translation, ...)
    {"role": "assistant", "content": "你好,我可以幫助您解決各種問題、提供資訊並協助完成多種任務。例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。請告訴我如何能幫助您。"},
    # "Great!"
    {"role": "user", "content": "太棒了!"}
]
flatten_chat_for_generation = tokenizer.apply_chat_template(chat, tokenize=False, add_generation_prompt=True)
# The flattened prompt looks like this:
"""
<|im_start|>user
You are an AI assistant called Twllm, created by TAME (TAiwan Mixture of Expert) project.<|im_end|>
<|im_start|>user
你好,請問你可以完成什麼任務?<|im_end|>
<|im_start|>assistant
你好,我可以幫助您解決各種問題、提供資訊和協助您完成許多不同的任務。例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。請告訴我如何能幫助您。<|im_end|>
<|im_start|>user
太棒了!<|im_end|>
<|im_start|>assistant

"""

# Generate a response using the custom stopping criteria
output = llm(flatten_chat_for_generation, return_full_text=False, max_new_tokens=128, top_p=0.9, temperature=0.7, stopping_criteria=[EosListStoppingCriteria([tokenizer.eos_token_id])])
print(output[0]['generated_text'])
# Example output:
# "謝謝!很高興能夠為您服務。如果有任何其他需要協助的地方,請隨時與我聯繫。我會盡最大努力為您提供所需的支援。"
```
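The flattened prompt shown in the docstring above follows a ChatML-style layout, which can be approximated without loading the tokenizer. This is a rough sketch for inspection and testing only. `render_chatml` is a hypothetical helper that labels each turn with its own role name, and the chat template shipped with the tokenizer remains the authoritative source.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Approximate a ChatML-style prompt: one <|im_start|>role ... <|im_end|> block per turn."""
    parts = [
        f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        for message in messages
    ]
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


demo = render_chatml([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Tell me a joke."},
])
print(demo)
```

For real generation, prefer `tokenizer.apply_chat_template`, since any mismatch with the training format can degrade output quality.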

|

| 255 |

+

|

| 256 |

+

## vLLM

|

| 257 |

+

|

| 258 |

+

Start the server

|

| 259 |

+

```bash
# Number of GPUs for tensor parallelism and the host port to expose
export NUM_GPUS=4
export PORT=8000

docker run \
  -e HF_TOKEN="$HF_TOKEN" \
  --gpus '"device=0,1,2,3"' \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  -p "${PORT}:8000" \
  --ipc=host \
  vllm/vllm-openai:v0.4.0.post1 \
  --model "yentinglin/Llama-3-Taiwan-70B-Instruct-rc1" \
  -tp "${NUM_GPUS}"
```

|

| 273 |

+

|

| 274 |

+

Sample client code (any OpenAI-API-compatible client also works):

|

| 275 |

+

|

| 276 |

+

```python
# pip install "openai>=1.0.0"
from openai import OpenAI

# Set OpenAI's API key and API base to use vLLM's API server.
openai_api_key = "EMPTY"
openai_api_base = "http://localhost:8000/v1"

client = OpenAI(
    api_key=openai_api_key,
    base_url=openai_api_base,
)

chat_response = client.chat.completions.create(
    model="yentinglin/Llama-3-Taiwan-70B-Instruct-rc1",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Tell me a joke."},
    ]
)
print("Chat response:", chat_response)
```
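The client code above prints the whole response object. To get only the assistant's text, read the first choice. The sketch below assumes the `openai>=1.0.0` response layout (`choices[0].message.content`). `reply_text` is a hypothetical helper, and the stand-in object exists only so the snippet runs without a server.

```python
from types import SimpleNamespace


def reply_text(chat_response):
    """Return the assistant text of the first choice in a chat completion."""
    return chat_response.choices[0].message.content


# Stand-in with the same attribute layout as a chat completion response.
fake_response = SimpleNamespace(
    choices=[SimpleNamespace(message=SimpleNamespace(content="Hello!"))]
)
print(reply_text(fake_response))  # Hello!
```

With a running server, `reply_text(chat_response)` can be called on the object returned by `client.chat.completions.create`.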

|

| 297 |

+

|

| 298 |

+

|

| 299 |

+

Enjoy exploring the capabilities of Llama-3-Taiwan-70B! We look forward to seeing what you create with this powerful open-source model. If you have any questions or feedback, please let us know.

|

| 300 |

+

|

| 301 |

+

# Contributions

|

| 302 |

+

- [**Professor Yun-Nung (Vivian) Chen**](https://www.csie.ntu.edu.tw/~yvchen/), for her guidance and advisement throughout the project.

|

| 303 |

+

- [**Wei-Lin Chen**](mailto:[email protected]), for leading our pretraining data pipeline.

|

| 304 |

+

- [**Tzu-Han Lin**](mailto:[email protected]), for synthetic data generation.

|

| 305 |

+

- [**Chang-Sheng Kao**](mailto:[email protected]), for enhancing our synthetic data quality.

|

| 306 |

+

- [**Kang-Chieh Chen**](mailto:[email protected]), for cleaning instruction-following data.

|

| 307 |

+

- [**Min-Yi Chen**](mailto:[email protected]) and [**Shao-Heng Hsu**](mailto:[email protected]), for collecting chemical engineering data and benchmarks.

|

| 308 |

+

- Chung-Yao Ma, Jonathan Guo and Kai-Chun Chang, for collecting manufacturing and electrical engineering data and benchmarks, and project progress management

|

| 309 |

+

|

| 310 |

+

# Citation

|

| 311 |

+

```
@article{DBLP:journals/corr/abs-2311-17487,
  author       = {Yen{-}Ting Lin and
                  Yun{-}Nung Chen},
  title        = {Taiwan {LLM:} Bridging the Linguistic Divide with a Culturally Aligned
                  Language Model},
  journal      = {CoRR},
  volume       = {abs/2311.17487},
  year         = {2023},
  url          = {https://doi.org/10.48550/arXiv.2311.17487},
  doi          = {10.48550/ARXIV.2311.17487},
  eprinttype   = {arXiv},
  eprint       = {2311.17487},
  timestamp    = {Tue, 05 Dec 2023 14:40:42 +0100},
  biburl       = {https://dblp.org/rec/journals/corr/abs-2311-17487.bib},
  bibsource    = {dblp computer science bibliography, https://dblp.org}
}
@article{DBLP:journals/corr/abs-2403-20180,
  author       = {Po{-}Heng Chen and
                  Sijia Cheng and
                  Wei{-}Lin Chen and
                  Yen{-}Ting Lin and
                  Yun{-}Nung Chen},
  title        = {Measuring Taiwanese Mandarin Language Understanding},
  journal      = {CoRR},
  volume       = {abs/2403.20180},
  year         = {2024},
  url          = {https://doi.org/10.48550/arXiv.2403.20180},
  doi          = {10.48550/ARXIV.2403.20180},
  eprinttype   = {arXiv},
  eprint       = {2403.20180},
  timestamp    = {Wed, 10 Apr 2024 17:37:45 +0200},
  biburl       = {https://dblp.org/rec/journals/corr/abs-2403-20180.bib},
  bibsource    = {dblp computer science bibliography, https://dblp.org}
}
```