Upload 8 files

- TB3F_Frugal_AI_Text_Classifier.bin +3 -0

- config.json +162 -0

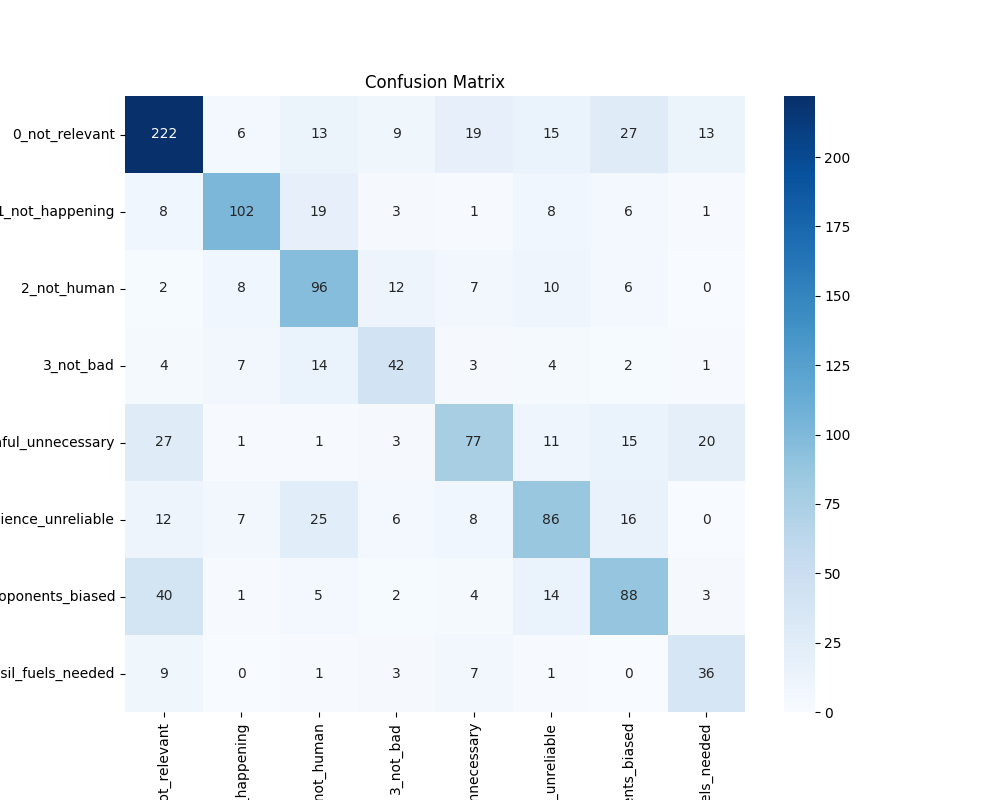

- confusion_matrix.png +0 -0

- model.safetensors +3 -0

- special_tokens_map.json +7 -0

- tokenizer.json +0 -0

- tokenizer_config.json +57 -0

- vocab.txt +0 -0

TB3F_Frugal_AI_Text_Classifier.bin

ADDED

    @@ -0,0 +1,3 @@
    +version https://git-lfs.github.com/spec/v1
    +oid sha256:652748176749593eae0cfbe63f80f29477205f3f60c97c79d7cbfc3e2afaed25
    +size 57628470
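The three added lines above are a Git LFS pointer, not the weights themselves: they record the spec version, a SHA-256 object ID, and the byte size of the real file. As a minimal sketch (the helper names `parse_lfs_pointer` and `verify_download` are hypothetical, not part of any library), a downloaded copy could be checked against the pointer like this:

```python
import hashlib
from pathlib import Path


def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer file into its fields (hypothetical helper)."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def verify_download(path: Path, pointer: dict) -> bool:
    """Return True if the file matches the pointer's size and SHA-256 digest."""
    if path.stat().st_size != pointer["size"]:
        return False
    sha = hashlib.sha256()
    with path.open("rb") as f:
        # Hash in 1 MiB chunks so large weight files are not loaded at once.
        for chunk in iter(lambda: f.read(1 << 20), b""):
            sha.update(chunk)
    return sha.hexdigest() == pointer["digest"]


# Pointer text copied from the diff above.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:652748176749593eae0cfbe63f80f29477205f3f60c97c79d7cbfc3e2afaed25
size 57628470
"""

pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["algo"], pointer["size"])
```

Checking the size first is a cheap way to reject truncated downloads before hashing roughly 57 MB of data.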

config.json

ADDED

    @@ -0,0 +1,162 @@
    +{
    +  "_name_or_path": "./models/TB3F_Frugal_AI_Text_Classifier",
    +  "architectures": [
    +    "BertModel"
    +  ],
    +  "attention_probs_dropout_prob": 0.1,
    +  "cell": {},
    +  "classifier_dropout": null,
    +  "custom_model": true,
    +  "emb_size": 312,
    +  "hidden_act": "gelu",
    +  "hidden_dropout_prob": 0.1,
    +  "hidden_size": 312,
    +  "id2label": {
    +    "0": "LABEL_0",
    +    "1": "LABEL_1",
    +    "2": "LABEL_2",
    +    "3": "LABEL_3",
    +    "4": "LABEL_4",
    +    "5": "LABEL_5",
    +    "6": "LABEL_6",
    +    "7": "LABEL_7",
    +    "8": "LABEL_8",
    +    "9": "LABEL_9",
    +    "10": "LABEL_10",
    +    "11": "LABEL_11",
    +    "12": "LABEL_12",
    +    "13": "LABEL_13",
    +    "14": "LABEL_14",
    +    "15": "LABEL_15",
    +    "16": "LABEL_16",
    +    "17": "LABEL_17",
    +    "18": "LABEL_18",
    +    "19": "LABEL_19",
    +    "20": "LABEL_20",
    +    "21": "LABEL_21",
    +    "22": "LABEL_22",
    +    "23": "LABEL_23",
    +    "24": "LABEL_24",
    +    "25": "LABEL_25",
    +    "26": "LABEL_26",
    +    "27": "LABEL_27",
    +    "28": "LABEL_28",
    +    "29": "LABEL_29",
    +    "30": "LABEL_30",
    +    "31": "LABEL_31",
    +    "32": "LABEL_32",
    +    "33": "LABEL_33",
    +    "34": "LABEL_34",
    +    "35": "LABEL_35",
    +    "36": "LABEL_36",
    +    "37": "LABEL_37",
    +    "38": "LABEL_38",
    +    "39": "LABEL_39",
    +    "40": "LABEL_40",
    +    "41": "LABEL_41",
    +    "42": "LABEL_42",
    +    "43": "LABEL_43",
    +    "44": "LABEL_44",
    +    "45": "LABEL_45",
    +    "46": "LABEL_46",
    +    "47": "LABEL_47",
    +    "48": "LABEL_48",
    +    "49": "LABEL_49",
    +    "50": "LABEL_50",
    +    "51": "LABEL_51",
    +    "52": "LABEL_52",
    +    "53": "LABEL_53",
    +    "54": "LABEL_54",
    +    "55": "LABEL_55",
    +    "56": "LABEL_56",
    +    "57": "LABEL_57",
    +    "58": "LABEL_58",
    +    "59": "LABEL_59",
    +    "60": "LABEL_60",
    +    "61": "LABEL_61",
    +    "62": "LABEL_62",
    +    "63": "LABEL_63"
    +  },
    +  "initializer_range": 0.02,
    +  "intermediate_size": 1200,
    +  "label2id": {
    +    "LABEL_0": 0,
    +    "LABEL_1": 1,
    +    "LABEL_10": 10,
    +    "LABEL_11": 11,
    +    "LABEL_12": 12,
    +    "LABEL_13": 13,
    +    "LABEL_14": 14,
    +    "LABEL_15": 15,
    +    "LABEL_16": 16,
    +    "LABEL_17": 17,
    +    "LABEL_18": 18,
    +    "LABEL_19": 19,
    +    "LABEL_2": 2,
    +    "LABEL_20": 20,
    +    "LABEL_21": 21,
    +    "LABEL_22": 22,
    +    "LABEL_23": 23,
    +    "LABEL_24": 24,
    +    "LABEL_25": 25,
    +    "LABEL_26": 26,
    +    "LABEL_27": 27,
    +    "LABEL_28": 28,
    +    "LABEL_29": 29,
    +    "LABEL_3": 3,
    +    "LABEL_30": 30,
    +    "LABEL_31": 31,
    +    "LABEL_32": 32,
    +    "LABEL_33": 33,
    +    "LABEL_34": 34,
    +    "LABEL_35": 35,
    +    "LABEL_36": 36,
    +    "LABEL_37": 37,
    +    "LABEL_38": 38,
    +    "LABEL_39": 39,
    +    "LABEL_4": 4,
    +    "LABEL_40": 40,
    +    "LABEL_41": 41,
    +    "LABEL_42": 42,
    +    "LABEL_43": 43,
    +    "LABEL_44": 44,
    +    "LABEL_45": 45,
    +    "LABEL_46": 46,
    +    "LABEL_47": 47,
    +    "LABEL_48": 48,
    +    "LABEL_49": 49,
    +    "LABEL_5": 5,
    +    "LABEL_50": 50,
    +    "LABEL_51": 51,
    +    "LABEL_52": 52,
    +    "LABEL_53": 53,
    +    "LABEL_54": 54,
    +    "LABEL_55": 55,
    +    "LABEL_56": 56,
    +    "LABEL_57": 57,
    +    "LABEL_58": 58,
    +    "LABEL_59": 59,
    +    "LABEL_6": 6,
    +    "LABEL_60": 60,
    +    "LABEL_61": 61,
    +    "LABEL_62": 62,
    +    "LABEL_63": 63,
    +    "LABEL_7": 7,
    +    "LABEL_8": 8,
    +    "LABEL_9": 9
    +  },
    +  "layer_norm_eps": 1e-12,
    +  "max_position_embeddings": 512,
    +  "model_type": "bert",
    +  "num_attention_heads": 12,
    +  "num_hidden_layers": 4,
    +  "pad_token_id": 0,
    +  "position_embedding_type": "absolute",
    +  "pre_trained": "",
    +  "structure": [],
    +  "torch_dtype": "float32",
    +  "transformers_version": "4.45.1",
    +  "type_vocab_size": 2,
    +  "use_cache": true,
    +  "vocab_size": 30522
    +}
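The config above describes a small BERT encoder: 4 layers, hidden size 312, 12 attention heads, and a feed-forward width of 1200. To get a feel for the model's size, the sketch below estimates the parameter count of a standard `BertModel` (embeddings, encoder layers, and pooler) from those values. It assumes the stock BERT layer layout with biases everywhere and a pooler; the `custom_model`, `cell`, and `structure` fields suggest the real checkpoint may differ, so treat the result as an estimate only.

```python
def bert_parameter_count(cfg: dict) -> int:
    """Estimate parameters of a stock BertModel from its config (assumed layout)."""
    h = cfg["hidden_size"]
    i = cfg["intermediate_size"]
    layer_norm = 2 * h  # weight + bias

    embeddings = (
        cfg["vocab_size"] * h                 # word embeddings
        + cfg["max_position_embeddings"] * h  # position embeddings
        + cfg["type_vocab_size"] * h          # token-type embeddings
        + layer_norm
    )
    # Query, key, value, and output projections, each h x h plus bias.
    attention = 4 * (h * h + h) + layer_norm
    # Two linear layers: h -> i and i -> h, each with bias.
    feed_forward = (h * i + i) + (i * h + h) + layer_norm
    pooler = h * h + h

    return embeddings + cfg["num_hidden_layers"] * (attention + feed_forward) + pooler


# Values copied from config.json above.
CONFIG = {
    "hidden_size": 312,
    "intermediate_size": 1200,
    "max_position_embeddings": 512,
    "num_attention_heads": 12,
    "num_hidden_layers": 4,
    "type_vocab_size": 2,
    "vocab_size": 30522,
}

params = bert_parameter_count(CONFIG)
bytes_fp32 = params * 4  # torch_dtype is float32, so 4 bytes per parameter
head_dim = CONFIG["hidden_size"] // CONFIG["num_attention_heads"]
print(params, bytes_fp32, head_dim)
```

Under these assumptions the estimate comes to about 14.35 million parameters, or roughly 57.4 MB in float32, which is close to the 57,408,776-byte size recorded for `model.safetensors` in this commit. The per-head dimension works out to 312 / 12 = 26. Note that the estimate does not include a classification head, even though the config lists 64 labels.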

confusion_matrix.png

ADDED


model.safetensors

ADDED

    @@ -0,0 +1,3 @@
    +version https://git-lfs.github.com/spec/v1
    +oid sha256:08e28ac0cfd04a0f69721bbe9a044100d22322e23df61525a8c52bf13537cccf
    +size 57408776
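The pointer above only records the hash and size of `model.safetensors`. Once the real file is downloaded, its tensor names and shapes can be listed without loading any weights, because a safetensors file begins with an 8-byte little-endian header length followed by a JSON header. The following is a rough sketch using only the standard library; the function names are hypothetical, and the demo runs on a tiny synthetic file rather than on the actual checkpoint.

```python
import json
import math
import struct
import tempfile
from pathlib import Path


def read_safetensors_header(path: Path) -> dict:
    """Read only the JSON header: 8-byte little-endian length, then the JSON."""
    with path.open("rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        return json.loads(f.read(header_len))


def count_parameters(header: dict) -> int:
    """Sum the element counts of all tensors described in the header."""
    return sum(
        math.prod(entry["shape"])
        for name, entry in header.items()
        if name != "__metadata__"  # optional free-form metadata, not a tensor
    )


# Build a toy file with one 2x3 float32 tensor (24 bytes of zeros) to
# exercise the reader; this is synthetic data, not the real model.
toy_header = {"w": {"dtype": "F32", "shape": [2, 3], "data_offsets": [0, 24]}}
header_bytes = json.dumps(toy_header).encode("utf-8")
with tempfile.TemporaryDirectory() as tmp:
    toy_path = Path(tmp) / "toy.safetensors"
    toy_path.write_bytes(struct.pack("<Q", len(header_bytes)) + header_bytes + bytes(24))
    header = read_safetensors_header(toy_path)

n_params = count_parameters(header)
print(n_params)
```

Pointing `read_safetensors_header` at the downloaded `model.safetensors` would show whether the checkpoint contains only encoder weights or also a classifier layer, which the diff alone does not reveal.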

special_tokens_map.json

ADDED

    @@ -0,0 +1,7 @@
    +{
    +  "cls_token": "[CLS]",
    +  "mask_token": "[MASK]",
    +  "pad_token": "[PAD]",
    +  "sep_token": "[SEP]",
    +  "unk_token": "[UNK]"
    +}
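These are the usual BERT special tokens. As an illustration of how they are conventionally used (an assumption about this model, since the diff does not show its preprocessing), the sketch below frames one or two token sequences as `[CLS] a [SEP]` or `[CLS] a [SEP] b [SEP]`, and pads with `[PAD]`. The `frame` helper is hypothetical and works on already-split tokens.

```python
# Token strings copied from special_tokens_map.json above.
SPECIAL = {
    "cls_token": "[CLS]",
    "mask_token": "[MASK]",
    "pad_token": "[PAD]",
    "sep_token": "[SEP]",
    "unk_token": "[UNK]",
}


def frame(tokens_a, tokens_b=None, pad_to=None):
    """Wrap tokens in BERT-style special tokens (conventional layout, assumed)."""
    tokens = [SPECIAL["cls_token"], *tokens_a, SPECIAL["sep_token"]]
    type_ids = [0] * len(tokens)  # segment A
    if tokens_b is not None:
        tokens += [*tokens_b, SPECIAL["sep_token"]]
        type_ids += [1] * (len(tokens_b) + 1)  # segment B, including its [SEP]
    attention = [1] * len(tokens)
    if pad_to is not None and pad_to > len(tokens):
        extra = pad_to - len(tokens)
        tokens += [SPECIAL["pad_token"]] * extra
        type_ids += [0] * extra
        attention += [0] * extra  # padding positions are masked out
    return tokens, type_ids, attention


single = frame(["hello", "world"])
pair = frame(["a"], ["b", "c"], pad_to=8)
print(single[0])
print(pair[0])
```

The two segment IDs (0 and 1) correspond to `type_vocab_size: 2` in `config.json`.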

tokenizer.json

ADDED

The diff for this file is too large to render.


tokenizer_config.json

ADDED

    @@ -0,0 +1,57 @@
    +{
    +  "added_tokens_decoder": {
    +    "0": {
    +      "content": "[PAD]",
    +      "lstrip": false,
    +      "normalized": false,
    +      "rstrip": false,
    +      "single_word": false,
    +      "special": true
    +    },
    +    "100": {
    +      "content": "[UNK]",
    +      "lstrip": false,
    +      "normalized": false,
    +      "rstrip": false,
    +      "single_word": false,
    +      "special": true
    +    },
    +    "101": {
    +      "content": "[CLS]",
    +      "lstrip": false,
    +      "normalized": false,
    +      "rstrip": false,
    +      "single_word": false,
    +      "special": true
    +    },
    +    "102": {
    +      "content": "[SEP]",
    +      "lstrip": false,
    +      "normalized": false,
    +      "rstrip": false,
    +      "single_word": false,
    +      "special": true
    +    },
    +    "103": {
    +      "content": "[MASK]",
    +      "lstrip": false,
    +      "normalized": false,
    +      "rstrip": false,
    +      "single_word": false,
    +      "special": true
    +    }
    +  },
    +  "clean_up_tokenization_spaces": true,
    +  "cls_token": "[CLS]",
    +  "do_basic_tokenize": true,
    +  "do_lower_case": true,
    +  "mask_token": "[MASK]",
    +  "model_max_length": 1000000000000000019884624838656,
    +  "never_split": null,
    +  "pad_token": "[PAD]",
    +  "sep_token": "[SEP]",
    +  "strip_accents": null,
    +  "tokenize_chinese_chars": true,
    +  "tokenizer_class": "BertTokenizer",
    +  "unk_token": "[UNK]"
    +}
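One detail in this file deserves care: `model_max_length` equals `int(1e30)`, which as far as I know is the placeholder Transformers writes when no real limit was set, while `config.json` caps positions at 512. A caller that relies on the tokenizer's default would therefore not truncate long inputs. The sketch below (helper names are hypothetical) derives a usable limit and extracts the special-token IDs from `added_tokens_decoder`.

```python
# Assumed sentinel: the value in tokenizer_config.json equals int(1e30).
VERY_LARGE = int(1e30)


def effective_max_length(tokenizer_cfg: dict, model_cfg: dict) -> int:
    """Clamp the tokenizer's declared length to the model's position limit."""
    declared = tokenizer_cfg.get("model_max_length", VERY_LARGE)
    return min(declared, model_cfg["max_position_embeddings"])


def special_token_ids(tokenizer_cfg: dict) -> dict:
    """Map each special token string to its integer vocabulary ID."""
    return {
        entry["content"]: int(idx)
        for idx, entry in tokenizer_cfg["added_tokens_decoder"].items()
        if entry["special"]
    }


# Relevant values copied from tokenizer_config.json and config.json.
TOKENIZER_CFG = {
    "model_max_length": 1000000000000000019884624838656,
    "added_tokens_decoder": {
        "0": {"content": "[PAD]", "special": True},
        "100": {"content": "[UNK]", "special": True},
        "101": {"content": "[CLS]", "special": True},
        "102": {"content": "[SEP]", "special": True},
        "103": {"content": "[MASK]", "special": True},
    },
}
MODEL_CFG = {"max_position_embeddings": 512, "pad_token_id": 0}

max_len = effective_max_length(TOKENIZER_CFG, MODEL_CFG)
ids = special_token_ids(TOKENIZER_CFG)
print(max_len, ids)
```

In practice this means passing an explicit maximum length of at most 512 (with truncation enabled) when tokenizing, rather than trusting the stored default. The `[PAD]` ID of 0 also agrees with `pad_token_id` in `config.json`.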

vocab.txt

ADDED

The diff for this file is too large to render.
