
git subrepo clone (merge) --branch=exbert-mods https://github.com/bhoov/transformers.git server/transformers

subrepo:
  subdir: "server/transformers"
  merged: "a0b899d1"
upstream:
  origin: "https://github.com/bhoov/transformers.git"
  branch: "exbert-mods"
  commit: "a0b899d1"
git-subrepo:
  version: "0.4.1"
  origin: "https://github.com/ingydotnet/git-subrepo"
  commit: "a04d8c2"

This view is limited to 50 files because it contains too many changes.


- server/transformers/.circleci/config.yml +143 -0

- server/transformers/.circleci/deploy.sh +28 -0

- server/transformers/.coveragerc +12 -0

- server/transformers/.github/ISSUE_TEMPLATE/---new-benchmark.md +22 -0

- server/transformers/.github/ISSUE_TEMPLATE/--new-model-addition.md +20 -0

- server/transformers/.github/ISSUE_TEMPLATE/bug-report.md +52 -0

- server/transformers/.github/ISSUE_TEMPLATE/feature-request.md +25 -0

- server/transformers/.github/ISSUE_TEMPLATE/migration.md +57 -0

- server/transformers/.github/ISSUE_TEMPLATE/question-help.md +29 -0

- server/transformers/.github/stale.yml +17 -0

- server/transformers/.gitignore +141 -0

- server/transformers/.gitrepo +12 -0

- server/transformers/CONTRIBUTING.md +258 -0

- server/transformers/LICENSE +202 -0

- server/transformers/MANIFEST.in +1 -0

- server/transformers/Makefile +24 -0

- server/transformers/README.md +684 -0

- server/transformers/deploy_multi_version_doc.sh +23 -0

- server/transformers/docker/Dockerfile +7 -0

- server/transformers/docs/Makefile +19 -0

- server/transformers/docs/README.md +67 -0

- server/transformers/docs/source/_static/css/Calibre-Light.ttf +0 -0

- server/transformers/docs/source/_static/css/Calibre-Medium.otf +0 -0

- server/transformers/docs/source/_static/css/Calibre-Regular.otf +0 -0

- server/transformers/docs/source/_static/css/Calibre-Thin.otf +0 -0

- server/transformers/docs/source/_static/css/code-snippets.css +12 -0

- server/transformers/docs/source/_static/css/huggingface.css +196 -0

- server/transformers/docs/source/_static/js/custom.js +79 -0

- server/transformers/docs/source/_static/js/huggingface_logo.svg +47 -0

- server/transformers/docs/source/benchmarks.md +54 -0

- server/transformers/docs/source/bertology.rst +18 -0

- server/transformers/docs/source/conf.py +188 -0

- server/transformers/docs/source/converting_tensorflow_models.rst +137 -0

- server/transformers/docs/source/examples.md +1 -0

- server/transformers/docs/source/glossary.rst +145 -0

- server/transformers/docs/source/imgs/transformers_logo_name.png +0 -0

- server/transformers/docs/source/imgs/warmup_constant_schedule.png +0 -0

- server/transformers/docs/source/imgs/warmup_cosine_hard_restarts_schedule.png +0 -0

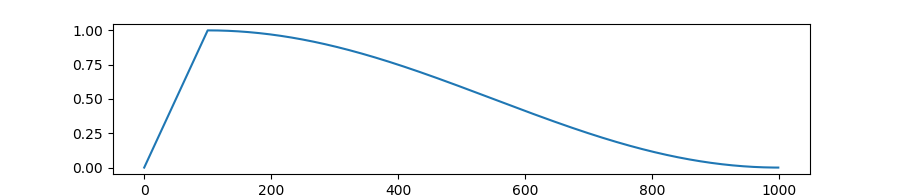

- server/transformers/docs/source/imgs/warmup_cosine_schedule.png +0 -0

- server/transformers/docs/source/imgs/warmup_cosine_warm_restarts_schedule.png +0 -0

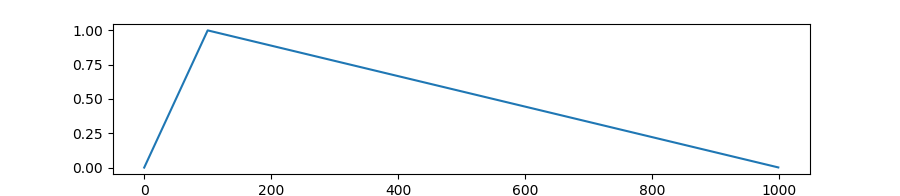

- server/transformers/docs/source/imgs/warmup_linear_schedule.png +0 -0

- server/transformers/docs/source/index.rst +102 -0

- server/transformers/docs/source/installation.md +51 -0

- server/transformers/docs/source/main_classes/configuration.rst +10 -0

- server/transformers/docs/source/main_classes/model.rst +21 -0

- server/transformers/docs/source/main_classes/optimizer_schedules.rst +72 -0

- server/transformers/docs/source/main_classes/processors.rst +153 -0

- server/transformers/docs/source/main_classes/tokenizer.rst +16 -0

- server/transformers/docs/source/migration.md +109 -0

- server/transformers/docs/source/model_doc/albert.rst +93 -0

server/transformers/.circleci/config.yml

ADDED

@@ -0,0 +1,143 @@

+version: 2
+jobs:
+    run_tests_torch_and_tf:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        environment:
+            OMP_NUM_THREADS: 1
+        resource_class: xlarge
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install .[sklearn,tf,torch,testing]
+            - run: sudo pip install codecov pytest-cov
+            - run: python -m pytest -n 8 --dist=loadfile -s -v ./tests/ --cov
+            - run: codecov
+    run_all_tests_torch_and_tf:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        environment:
+            OMP_NUM_THREADS: 1
+            RUN_SLOW: yes
+            RUN_CUSTOM_TOKENIZERS: yes
+        resource_class: xlarge
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install .[mecab,sklearn,tf,torch,testing]
+            - run: python -m pytest -n 8 --dist=loadfile -s -v ./tests/
+    run_tests_torch:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.7
+        environment:
+            OMP_NUM_THREADS: 1
+        resource_class: xlarge
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install .[sklearn,torch,testing]
+            - run: sudo pip install codecov pytest-cov
+            - run: python -m pytest -n 8 --dist=loadfile -s -v ./tests/ --cov
+            - run: codecov
+    run_tests_tf:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.7
+        environment:
+            OMP_NUM_THREADS: 1
+        resource_class: xlarge
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install .[sklearn,tf,testing]
+            - run: sudo pip install codecov pytest-cov
+            - run: python -m pytest -n 8 --dist=loadfile -s -v ./tests/ --cov
+            - run: codecov
+    run_tests_custom_tokenizers:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        environment:
+            RUN_CUSTOM_TOKENIZERS: yes
+        steps:
+            - checkout
+            - run: sudo pip install .[mecab,testing]
+            - run: python -m pytest -sv ./tests/test_tokenization_bert_japanese.py
+    run_examples_torch:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        environment:
+            OMP_NUM_THREADS: 1
+        resource_class: xlarge
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install .[sklearn,torch,testing]
+            - run: sudo pip install -r examples/requirements.txt
+            - run: python -m pytest -n 8 --dist=loadfile -s -v ./examples/
+    deploy_doc:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        steps:
+            - add_ssh_keys:
+                fingerprints:
+                    - "5b:7a:95:18:07:8c:aa:76:4c:60:35:88:ad:60:56:71"
+            - checkout
+            - run: sudo pip install .[tf,torch,docs]
+            - run: ./.circleci/deploy.sh
+    check_code_quality:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.6
+        resource_class: medium
+        parallelism: 1
+        steps:
+            - checkout
+            # we need a version of isort with https://github.com/timothycrosley/isort/pull/1000
+            - run: sudo pip install git+git://github.com/timothycrosley/isort.git@e63ae06ec7d70b06df9e528357650281a3d3ec22#egg=isort
+            - run: sudo pip install .[tf,torch,quality]
+            - run: black --check --line-length 119 --target-version py35 examples templates tests src utils
+            - run: isort --check-only --recursive examples templates tests src utils
+            - run: flake8 examples templates tests src utils
+    check_repository_consistency:
+        working_directory: ~/transformers
+        docker:
+            - image: circleci/python:3.5
+        resource_class: small
+        parallelism: 1
+        steps:
+            - checkout
+            - run: sudo pip install requests
+            - run: python ./utils/link_tester.py
+workflow_filters: &workflow_filters
+    filters:
+        branches:
+            only:
+                - master
+workflows:
+    version: 2
+    build_and_test:
+        jobs:
+            - check_code_quality
+            - check_repository_consistency
+            - run_examples_torch
+            - run_tests_custom_tokenizers
+            - run_tests_torch_and_tf
+            - run_tests_torch
+            - run_tests_tf
+            - deploy_doc: *workflow_filters
+    run_slow_tests:
+        triggers:
+            - schedule:
+                cron: "0 4 * * 1"
+                filters:
+                    branches:
+                        only:
+                            - master
+        jobs:
+            - run_all_tests_torch_and_tf

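In the `run_slow_tests` workflow, the cron expression `0 4 * * 1` reads as minute 0, hour 4, any day of the month, any month, day-of-week 1 (Monday), and the `filters` block restricts the trigger to `master`. The following is a minimal Python sketch of when that trigger would next fire. It assumes CircleCI evaluates scheduled workflows in UTC, and the helper name `next_slow_test_run` is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# "0 4 * * 1": minute 0, hour 4, every day-of-month, every month, Monday.
CRON_MINUTE, CRON_HOUR = 0, 4
CRON_WEEKDAY = 0  # datetime.weekday() numbering: Monday == 0 (cron numbers Monday as 1)


def next_slow_test_run(now):
    """Return the first Monday 04:00 UTC strictly after `now` (a timezone-aware datetime)."""
    now = now.astimezone(timezone.utc)
    # Start from today's 04:00, then move forward to the next Monday (possibly today).
    candidate = now.replace(hour=CRON_HOUR, minute=CRON_MINUTE, second=0, microsecond=0)
    candidate += timedelta(days=(CRON_WEEKDAY - now.weekday()) % 7)
    # If this week's slot has already passed (or is exactly now), use next week's.
    if candidate <= now:
        candidate += timedelta(days=7)
    return candidate
```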

server/transformers/.circleci/deploy.sh

ADDED

@@ -0,0 +1,28 @@

+cd docs
+
+function deploy_doc(){
+    echo "Creating doc at commit $1 and pushing to folder $2"
+    git checkout $1
+    if [ ! -z "$2" ]
+    then
+        if [ -d "$dir/$2" ]; then
+            echo "Directory" $2 "already exists"
+        else
+            echo "Pushing version" $2
+            make clean && make html && scp -r -oStrictHostKeyChecking=no _build/html $doc:$dir/$2
+        fi
+    else
+        echo "Pushing master"
+        make clean && make html && scp -r -oStrictHostKeyChecking=no _build/html/* $doc:$dir
+    fi
+}
+
+deploy_doc "master"
+deploy_doc "b33a385" v1.0.0
+deploy_doc "fe02e45" v1.1.0
+deploy_doc "89fd345" v1.2.0
+deploy_doc "fc9faa8" v2.0.0
+deploy_doc "3ddce1d" v2.1.1
+deploy_doc "3616209" v2.2.0
+deploy_doc "d0f8b9a" v2.3.0
+deploy_doc "6664ea9" v2.4.0

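`deploy_doc` takes a commit (`$1`) and an optional version folder (`$2`).

- **With a version:** it skips the push if `$dir/$2` already exists. Otherwise it copies `_build/html` into that folder.
- **Without a version (the `master` call):** it copies the contents of `_build/html` directly into `$dir`.

`$doc` and `$dir` are not set in the script itself, so they are presumably provided by the CI environment. Note also that `[ -d ... ]` inspects the filesystem of the machine running the script.

The Python sketch below models only that branching. The helper `deploy_destination` and the example path `/srv/docs` are hypothetical, and `existing_dirs` stands in for the local directory check.

```python
from typing import Iterable, Optional


def deploy_destination(dir_: str, version: Optional[str] = None,
                       existing_dirs: Iterable[str] = ()) -> Optional[str]:
    """Return where deploy_doc would copy the built HTML, or None when it skips the push."""
    if version:  # mirrors: if [ ! -z "$2" ]
        target = dir_ + "/" + version
        if target in set(existing_dirs):  # mirrors: if [ -d "$dir/$2" ] -> "already exists"
            return None
        return target  # mirrors: scp -r _build/html $doc:$dir/$2
    return dir_  # master: scp -r _build/html/* $doc:$dir (files land directly in $dir)


# The versioned calls at the end of deploy.sh, as (commit, folder) pairs.
RELEASES = [
    ("b33a385", "v1.0.0"), ("fe02e45", "v1.1.0"), ("89fd345", "v1.2.0"),
    ("fc9faa8", "v2.0.0"), ("3ddce1d", "v2.1.1"), ("3616209", "v2.2.0"),
    ("d0f8b9a", "v2.3.0"), ("6664ea9", "v2.4.0"),
]
```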

server/transformers/.coveragerc

ADDED

@@ -0,0 +1,12 @@

+[run]
+source=transformers
+omit =
+    # skip convertion scripts from testing for now
+    */convert_*
+    */__main__.py
+[report]
+exclude_lines =
+    pragma: no cover
+    raise
+    except
+    register_parameter

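In `.coveragerc`, `omit` lists file-path glob patterns, and `exclude_lines` lists regular expressions. A source line matching any of those regexes is left out of the missing-lines report. Because the regexes are unanchored, `raise` and `except` also match identifiers such as `except_count`.

Below is a rough Python approximation using `fnmatch` and `re`. The helper names are hypothetical. coverage.py's own matcher also normalizes paths and excludes the whole block introduced by a matching line, which this sketch does not do.

```python
import fnmatch
import re

# Values copied from the .coveragerc above.
OMIT_PATTERNS = ["*/convert_*", "*/__main__.py"]
EXCLUDE_LINE_REGEXES = ["pragma: no cover", "raise", "except", "register_parameter"]


def is_omitted(path):
    """Approximate [run] omit: True if the path matches any glob pattern."""
    return any(fnmatch.fnmatch(path, pattern) for pattern in OMIT_PATTERNS)


def is_excluded_line(source_line):
    """Approximate [report] exclude_lines: True if any regex matches anywhere in the line."""
    return any(re.search(regex, source_line) for regex in EXCLUDE_LINE_REGEXES)
```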

server/transformers/.github/ISSUE_TEMPLATE/---new-benchmark.md

ADDED

@@ -0,0 +1,22 @@

+---
+name: "\U0001F5A5 New benchmark"
+about: Benchmark a part of this library and share your results
+title: "[Benchmark]"
+labels: ''
+assignees: ''
+
+---
+
+# 🖥 Benchmarking `transformers`
+
+## Benchmark
+
+Which part of `transformers` did you benchmark?
+
+## Set-up
+
+What did you run your benchmarks on? Please include details, such as: CPU, GPU? If using multiple GPUs, which parallelization did you use?
+
+## Results
+
+Put your results here!

server/transformers/.github/ISSUE_TEMPLATE/--new-model-addition.md

ADDED

@@ -0,0 +1,20 @@

+---
+name: "\U0001F31F New model addition"
+about: Submit a proposal/request to implement a new Transformer-based model
+title: ''
+labels: ''
+assignees: ''
+
+---
+
+# 🌟 New model addition
+
+## Model description
+
+<!-- Important information -->
+
+## Open source status
+
+* [ ] the model implementation is available: (give details)
+* [ ] the model weights are available: (give details)
+* [ ] who are the authors: (mention them, if possible by @gh-username)

server/transformers/.github/ISSUE_TEMPLATE/bug-report.md

ADDED

@@ -0,0 +1,52 @@

+---
+name: "\U0001F41B Bug Report"
+about: Submit a bug report to help us improve transformers
+title: ''
+labels: ''
+assignees: ''
+
+---
+
+# 🐛 Bug
+
+## Information
+
+Model I am using (Bert, XLNet ...):
+
+Language I am using the model on (English, Chinese ...):
+
+The problem arises when using:
+* [ ] the official example scripts: (give details below)
+* [ ] my own modified scripts: (give details below)
+
+The tasks I am working on is:
+* [ ] an official GLUE/SQUaD task: (give the name)
+* [ ] my own task or dataset: (give details below)
+
+## To reproduce
+
+Steps to reproduce the behavior:
+
+1.
+2.
+3.
+
+<!-- If you have code snippets, error messages, stack traces please provide them here as well.
+Important! Use code tags to correctly format your code. See https://help.github.com/en/github/writing-on-github/creating-and-highlighting-code-blocks#syntax-highlighting
+Do not use screenshots, as they are hard to read and (more importantly) don't allow others to copy-and-paste your code.-->
+
+## Expected behavior
+
+<!-- A clear and concise description of what you would expect to happen. -->
+
+## Environment info
+<!-- You can run the command `python transformers-cli env` and copy-and-paste its output below.
+Don't forget to fill out the missing fields in that output! -->
+
+- `transformers` version:
+- Platform:
+- Python version:
+- PyTorch version (GPU?):
+- Tensorflow version (GPU?):
+- Using GPU in script?:
+- Using distributed or parallel set-up in script?:

server/transformers/.github/ISSUE_TEMPLATE/feature-request.md

ADDED

@@ -0,0 +1,25 @@

+---
+name: "\U0001F680 Feature request"
+about: Submit a proposal/request for a new transformers feature
+title: ''
+labels: ''
+assignees: ''
+
+---
+
+# 🚀 Feature request
+
+<!-- A clear and concise description of the feature proposal.
+Please provide a link to the paper and code in case they exist. -->
+
+## Motivation
+
+<!-- Please outline the motivation for the proposal. Is your feature request
+related to a problem? e.g., I'm always frustrated when [...]. If this is related
+to another GitHub issue, please link here too. -->
+
+## Your contribution
+
+<!-- Is there any way that you could help, e.g. by submitting a PR?
+Make sure to read the CONTRIBUTING.MD readme:
+https://github.com/huggingface/transformers/blob/master/CONTRIBUTING.md -->

server/transformers/.github/ISSUE_TEMPLATE/migration.md

ADDED

@@ -0,0 +1,57 @@

+---
+name: "\U0001F4DA Migration from pytorch-pretrained-bert or pytorch-transformers"
+about: Report a problem when migrating from pytorch-pretrained-bert or pytorch-transformers to transformers
+title: ''
+labels: ''
+assignees: ''
+
+---
+
+# 📚 Migration
+
+## Information
+
+<!-- Important information -->
+
+Model I am using (Bert, XLNet ...):
+
+Language I am using the model on (English, Chinese ...):
+
+The problem arises when using:
+* [ ] the official example scripts: (give details below)
+* [ ] my own modified scripts: (give details below)
+
+The tasks I am working on is:
+* [ ] an official GLUE/SQUaD task: (give the name)
+* [ ] my own task or dataset: (give details below)
+
+## Details
+
+<!-- A clear and concise description of the migration issue.
+If you have code snippets, please provide it here as well.
+Important! Use code tags to correctly format your code. See https://help.github.com/en/github/writing-on-github/creating-and-highlighting-code-blocks#syntax-highlighting
+Do not use screenshots, as they are hard to read and (more importantly) don't allow others to copy-and-paste your code.
+-->
+
+## Environment info
+<!-- You can run the command `python transformers-cli env` and copy-and-paste its output below.
+Don't forget to fill out the missing fields in that output! -->
+
+- `transformers` version:
+- Platform:
+- Python version:
+- PyTorch version (GPU?):
+- Tensorflow version (GPU?):
+- Using GPU in script?:
+- Using distributed or parallel set-up in script?:
+
+<!-- IMPORTANT: which version of the former library do you use? -->
+* `pytorch-transformers` or `pytorch-pretrained-bert` version (or branch):
+
+
+## Checklist
+
+- [ ] I have read the migration guide in the readme.
+([pytorch-transformers](https://github.com/huggingface/transformers#migrating-from-pytorch-transformers-to-transformers);
+[pytorch-pretrained-bert](https://github.com/huggingface/transformers#migrating-from-pytorch-pretrained-bert-to-transformers))
+- [ ] I checked if a related official extension example runs on my machine.

server/transformers/.github/ISSUE_TEMPLATE/question-help.md

ADDED

@@ -0,0 +1,29 @@

+---
+name: "❓ Questions & Help"
+about: Post your general questions on Stack Overflow tagged huggingface-transformers
+title: ''
+labels: ''
+assignees: ''
+
+---
+
+# ❓ Questions & Help
+
+<!-- The GitHub issue tracker is primarly intended for bugs, feature requests,
+new models and benchmarks, and migration questions. For all other questions,
+we direct you to Stack Overflow (SO) where a whole community of PyTorch and
+Tensorflow enthusiast can help you out. Make sure to tag your question with the
+right deep learning framework as well as the huggingface-transformers tag:
+https://stackoverflow.com/questions/tagged/huggingface-transformers
+
+If your question wasn't answered after a period of time on Stack Overflow, you
+can always open a question on GitHub. You should then link to the SO question
+that you posted.
+-->
+
+## Details
+<!-- Description of your issue -->
+
+<!-- You should first ask your question on SO, and only if
+you didn't get an answer ask it here on GitHub. -->
+**A link to original question on Stack Overflow**:

server/transformers/.github/stale.yml

ADDED

@@ -0,0 +1,17 @@

+# Number of days of inactivity before an issue becomes stale
+daysUntilStale: 60
+# Number of days of inactivity before a stale issue is closed
+daysUntilClose: 7
+# Issues with these labels will never be considered stale
+exemptLabels:
+  - pinned
+  - security
+# Label to use when marking an issue as stale
+staleLabel: wontfix
+# Comment to post when marking an issue as stale. Set to `false` to disable
+markComment: >
+  This issue has been automatically marked as stale because it has not had
+  recent activity. It will be closed if no further activity occurs. Thank you
+  for your contributions.
+# Comment to post when closing a stale issue. Set to `false` to disable
+closeComment: false

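This `stale.yml` uses the Probot Stale configuration format, which defines the following timeline:

- After 60 idle days, an issue receives the `wontfix` label and the `markComment` text.
- After 7 more idle days, it is closed without a closing comment (`closeComment: false`).
- Issues labelled `pinned` or `security` are exempt.

Below is a simplified Python sketch of that timeline, with a hypothetical helper name. It assumes no activity occurs after `last_activity`. The real bot also un-marks an issue when activity resumes, and its exact day boundaries may differ.

```python
from datetime import date

# Values copied from stale.yml above.
DAYS_UNTIL_STALE = 60
DAYS_UNTIL_CLOSE = 7
EXEMPT_LABELS = {"pinned", "security"}


def stale_state(last_activity, today, labels=()):
    """Return "open", "stale" (labelled wontfix) or "closed" for an issue idle since last_activity."""
    if EXEMPT_LABELS & set(labels):
        return "open"  # exempt issues are never marked stale
    idle_days = (today - last_activity).days
    if idle_days >= DAYS_UNTIL_STALE + DAYS_UNTIL_CLOSE:
        return "closed"
    if idle_days >= DAYS_UNTIL_STALE:
        return "stale"
    return "open"
```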

server/transformers/.gitignore

ADDED

@@ -0,0 +1,141 @@

+# Initially taken from Github's Python gitignore file
+
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+*.egg-info/
+.installed.cfg
+*.egg
+MANIFEST
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.nox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+.hypothesis/
+.pytest_cache/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+db.sqlite3
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# IPython
+profile_default/
+ipython_config.py
+
+# pyenv
+.python-version
+
+# celery beat schedule file
+celerybeat-schedule
+
+# SageMath parsed files
+*.sage.py
+
+# Environments
+.env
+.venv
+env/
+venv/
+ENV/
+env.bak/
+venv.bak/
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+.dmypy.json
+dmypy.json
+
+# Pyre type checker
+.pyre/
+
+# vscode
+.vscode
+
+# Pycharm
+.idea
+
+# TF code
+tensorflow_code
+
+# Models
+models
+proc_data
+
+# examples
+runs
+examples/runs
+
+# data
+/data
+serialization_dir
+
+# emacs
+*.*~
+debug.env

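Most entries in this `.gitignore` contain no slash, so git matches them against the file name at any directory depth. Below is a rough Python sketch for a few of those filename-only patterns, with a hypothetical helper name. It approximates git's wildmatch rules with `fnmatch.fnmatchcase` on the basename. It does not handle directory-only patterns ending in `/` or anchored ones such as `/data`.

```python
import fnmatch
import posixpath

# Slash-free patterns copied from the .gitignore above.
FILENAME_PATTERNS = ["*.py[cod]", "*$py.class", "*.so", "*.egg", "*.log", "*.mo", "*.pot", "*.*~"]


def ignored_by_filename(path):
    """Rough check: does the file's basename match one of the slash-free patterns?"""
    name = posixpath.basename(path)
    return any(fnmatch.fnmatchcase(name, pattern) for pattern in FILENAME_PATTERNS)
```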

server/transformers/.gitrepo

ADDED

@@ -0,0 +1,12 @@

+; DO NOT EDIT (unless you know what you are doing)
+;
+; This subdirectory is a git "subrepo", and this file is maintained by the
+; git-subrepo command. See https://github.com/git-commands/git-subrepo#readme
+;
+[subrepo]
+	remote = https://github.com/bhoov/transformers.git
+	branch = exbert-mods
+	commit = a0b899d114c1891dc685ce448077efab4a386348
+	parent = 8235ef04d0dca4d47c9106f70c0bd8681895fb8f
+	method = merge
+	cmdver = 0.4.1

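The `.gitrepo` file records the subrepo's remote, branch, upstream commit, parent commit, clone method and tool version. Its `commit` value starts with the `a0b899d1` short hash shown in the commit message. The layout appears to be git-config syntax: `;` comments, a `[subrepo]` section, and indented `key = value` lines. Python's `configparser` also accepts that syntax.

Below is a sketch that reads the file that way. `read_subrepo_state` is a hypothetical helper, and git-subrepo itself does not use Python for this.

```python
import configparser

# Contents of the .gitrepo above (indentation shown as spaces here).
GITREPO_TEXT = """\
; DO NOT EDIT (unless you know what you are doing)
;
; This subdirectory is a git "subrepo", and this file is maintained by the
; git-subrepo command. See https://github.com/git-commands/git-subrepo#readme
;
[subrepo]
    remote = https://github.com/bhoov/transformers.git
    branch = exbert-mods
    commit = a0b899d114c1891dc685ce448077efab4a386348
    parent = 8235ef04d0dca4d47c9106f70c0bd8681895fb8f
    method = merge
    cmdver = 0.4.1
"""


def read_subrepo_state(text):
    """Parse the [subrepo] section into a plain dict; ';' lines are treated as comments."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return dict(parser["subrepo"])
```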

server/transformers/CONTRIBUTING.md

ADDED

@@ -0,0 +1,258 @@

# How to contribute to transformers?

Everyone is welcome to contribute, and we value everybody's contribution. Code
is not the only way to help the community. Answering questions, helping
others, reaching out and improving the documentation are immensely valuable to
the community.

It also helps us if you spread the word: reference the library from blog posts
on the awesome projects it made possible, shout out on Twitter every time it has
helped you, or simply star the repo to say "thank you".

## You can contribute in so many ways!

There are 4 ways you can contribute to transformers:
* Fixing outstanding issues with the existing code;
* Implementing new models;
* Contributing to the examples or to the documentation;
* Submitting issues related to bugs or desired new features.

*All are equally valuable to the community.*

## Submitting a new issue or feature request

Do your best to follow these guidelines when submitting an issue or a feature
request. It will make it easier for us to come back to you quickly and with good
feedback.

### Did you find a bug?

`transformers` is robust and reliable thanks to the users who notify us of
the problems they encounter. So thank you for reporting an issue.

First, we would really appreciate it if you could **make sure the bug was not
already reported** (use the search bar on GitHub under Issues).

Did not find it? :( So we can act quickly on it, please follow these steps:

* Include your **OS type and version**, the versions of **Python**, **PyTorch** and
  **TensorFlow** when applicable;
* Include a short, self-contained code snippet that allows us to reproduce the bug in
  less than 30s;
* Provide the *full* traceback if an exception is raised.

To get the OS and software versions automatically, you can run the following command:

```bash
python transformers-cli env
```

### Do you want to implement a new model?

Awesome! Please provide the following information:

* Short description of the model and link to the paper;
* Link to the implementation if it is open-source;
* Link to the model weights if they are available.

If you are willing to contribute the model yourself, let us know so we can best
guide you.

We have added a **detailed guide and templates** to walk you through the process of adding a new model. You can find them in the [`templates`](./templates) folder.

### Do you want a new feature (that is not a model)?

A world-class feature request addresses the following points:

1. Motivation first:
   * Is it related to a problem/frustration with the library? If so, please explain
     why. Providing a code snippet that demonstrates the problem is best.
   * Is it related to something you would need for a project? We'd love to hear
     about it!
   * Is it something you worked on and think could benefit the community?
     Awesome! Tell us what problem it solved for you.
2. Write a *full paragraph* describing the feature;
3. Provide a **code snippet** that demonstrates its future use;
4. In case this is related to a paper, please attach a link;
5. Attach any additional information (drawings, screenshots, etc.) you think may help.

If your issue is well written, we're already 80% of the way there by the time you
post it.

We have added **templates** to walk you through the process of adding a new example script for training or testing the models in the library. You can find them in the [`templates`](./templates) folder.

## Start contributing! (Pull Requests)

Before writing code, we strongly advise you to search through the existing PRs or
issues to make sure that nobody is already working on the same thing. If you are
unsure, it is always a good idea to open an issue to get some feedback.

You will need basic `git` proficiency to be able to contribute to
`transformers`. `git` is not the easiest tool to use, but it has the greatest
manual. Type `git --help` in a shell and enjoy. If you prefer books, [Pro
Git](https://git-scm.com/book/en/v2) is a very good reference.

Follow these steps to start contributing:

1. Fork the [repository](https://github.com/huggingface/transformers) by
   clicking on the 'Fork' button on the repository's page. This creates a copy of the code
   under your GitHub user account.

2. Clone your fork to your local disk, and add the base repository as a remote:

   ```bash
   $ git clone git@github.com:<your GitHub handle>/transformers.git
   $ cd transformers
   $ git remote add upstream https://github.com/huggingface/transformers.git
   ```

3. Create a new branch to hold your development changes:

   ```bash
   $ git checkout -b a-descriptive-name-for-my-changes
   ```

   **Do not** work on the `master` branch.

4. Set up a development environment by running the following command in a virtual environment:

   ```bash
   $ pip install -e ".[dev]"
   ```

   (If transformers was already installed in the virtual environment, remove
   it with `pip uninstall transformers` before reinstalling it in editable
   mode with the `-e` flag.)

   Right now, we need an unreleased version of `isort` to avoid a
   [bug](https://github.com/timothycrosley/isort/pull/1000):

   ```bash
   $ pip install -U git+https://github.com/timothycrosley/isort.git@e63ae06ec7d70b06df9e528357650281a3d3ec22#egg=isort
   ```

5. Develop the features on your branch.

   As you work on the features, you should make sure that the test suite
   passes:

   ```bash
   $ make test
   ```

   `transformers` relies on `black` and `isort` to format its source code
   consistently. After you make changes, format them with:

   ```bash
   $ make style
   ```

   `transformers` also uses `flake8` to check for coding mistakes. Quality
   control runs in CI; however, you can also run the same checks with:

   ```bash
   $ make quality
   ```

   Once you're happy with your changes, add changed files using `git add` and
   make a commit with `git commit` to record your changes locally:

   ```bash
   $ git add modified_file.py
   $ git commit
   ```

   Please write [good commit
   messages](https://chris.beams.io/posts/git-commit/).

   It is a good idea to sync your copy of the code with the original
   repository regularly. This way you can quickly account for changes:

   ```bash
   $ git fetch upstream
   $ git rebase upstream/master
   ```

   Push the changes to your account using:

   ```bash
   $ git push -u origin a-descriptive-name-for-my-changes
   ```

6. Once you are satisfied (**and the checklist below is happy too**), go to the
   webpage of your fork on GitHub. Click on 'Pull request' to send your changes
   to the project maintainers for review.

7. It's OK if maintainers ask you for changes. It happens to core contributors
   too! So that everyone can see the changes in the pull request, work in your local
   branch and push the changes to your fork. They will automatically appear in
   the pull request.
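Before opening the pull request, the formatting, quality and test commands from step 5 can be chained so that the first failure stops the sequence. This is a minimal sketch: the function name `pre_pr_check` is hypothetical, and it assumes the `style`, `quality` and `test` targets described above exist in the repository's `Makefile`. `make style` runs first because it rewrites files in place, so the later checks see the formatted code.

```shell
# Hypothetical helper: run the step-5 checks in order and stop at the
# first failure. Call it from the repository root, where the Makefile lives.
pre_pr_check() {
  # Reformat first: black and isort rewrite files in place.
  make style || return 1
  # Run the same quality checks as CI (including flake8).
  make quality || return 1
  # Finally, run the test suite.
  make test
}
```

Because each step returns early on failure, a failing `make quality` run never reaches the test suite.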

### Checklist

1. The title of your pull request should be a summary of its contribution;
2. If your pull request addresses an issue, please mention the issue number in
   the pull request description to make sure they are linked (and people
   consulting the issue know you are working on it);
3. To indicate a work in progress, please prefix the title with `[WIP]`. These
   are useful to avoid duplicated work, and to differentiate them from PRs ready
   to be merged;
4. Make sure pre-existing tests still pass;
5. Add high-coverage tests. No quality test, no merge;
6. All public methods must have informative docstrings.

### Tests

You can run 🤗 Transformers tests with `unittest` or `pytest`.

We like `pytest` and `pytest-xdist` because `pytest-xdist` runs the tests in
parallel, which is faster. From the root of the repository, here's how to run
tests with `pytest` for the library:

```bash
$ python -m pytest -n auto --dist=loadfile -s -v ./tests/
```

and for the examples:

```bash
$ pip install -r examples/requirements.txt  # only needed the first time
$ python -m pytest -n auto --dist=loadfile -s -v ./examples/
```

In fact, that's how `make test` and `make test-examples` are implemented!

You can pass a smaller set of test files or directories to `pytest` in order to
test only the feature you're working on.
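For instance, the same `pytest` invocation can be pointed at a single file, or narrowed further with `pytest`'s `-k` keyword expression. This is a minimal sketch: `run_focused_tests` and the `PYTEST_K` variable are hypothetical names, and `./tests/test_modeling_bert.py` is only an illustrative path.

```shell
# Hypothetical helper: run the library's pytest invocation on selected
# test files/directories only. If PYTEST_K is set, it is passed to
# pytest's -k option to keep only tests matching that keyword expression.
run_focused_tests() {
  if [ -n "${PYTEST_K:-}" ]; then
    python -m pytest -n auto --dist=loadfile -s -v -k "$PYTEST_K" "$@"
  else
    python -m pytest -n auto --dist=loadfile -s -v "$@"
  fi
}

# Example calls (path and keyword are illustrative):
#   run_focused_tests ./tests/test_modeling_bert.py
#   PYTEST_K=tokenizer run_focused_tests ./tests/
```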

By default, slow tests are skipped. Set the `RUN_SLOW` environment variable to
`yes` to run them. This will download many gigabytes of models, so make sure you
have enough disk space and a good Internet connection, or a lot of patience!

```bash
$ RUN_SLOW=yes python -m pytest -n auto --dist=loadfile -s -v ./tests/
$ RUN_SLOW=yes python -m pytest -n auto --dist=loadfile -s -v ./examples/
```

Likewise, set the `RUN_CUSTOM_TOKENIZERS` environment variable to `yes` to run
tests for custom tokenizers, which don't run by default either.
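Both variables can be set on the same command line to include the slow tests and the custom-tokenizer tests in a single run. This is a minimal sketch: the function name `run_with_optional_tests` is hypothetical, and it reuses the `pytest` flags shown above.

```shell
# Hypothetical helper: run pytest on the given paths with both opt-in
# test groups enabled (slow tests and custom-tokenizer tests).
run_with_optional_tests() {
  RUN_SLOW=yes RUN_CUSTOM_TOKENIZERS=yes \
    python -m pytest -n auto --dist=loadfile -s -v "$@"
}

# Example: run_with_optional_tests ./tests/
```

The variables are set only for that one command, so later test runs in the same shell keep the default (skipped) behaviour.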

🤗 Transformers uses `pytest` as a test runner only. It doesn't use any
`pytest`-specific features in the test suite itself.

This means `unittest` is fully supported. Here's how to run tests with
`unittest`:

```bash
$ python -m unittest discover -s tests -t . -v
$ python -m unittest discover -s examples -t examples -v
```

### Style guide

For documentation strings, `transformers` follows the [Google
style](https://google.github.io/styleguide/pyguide.html).

#### This guide was heavily inspired by the awesome [scikit-learn guide to contributing](https://github.com/scikit-learn/scikit-learn/blob/master/CONTRIBUTING.md)

server/transformers/LICENSE

ADDED

@@ -0,0 +1,202 @@