Ii

committed on

Upload 18 files

- Dockerfile.nvidia +20 -0

- LICENSE +21 -0

- README.md +120 -8

- app.py +117 -0

- arcface_onnx.py +91 -0

- dockerfile +33 -0

- face_align.py +141 -0

- gitattributes +37 -0

- gitignore +173 -0

- gitkeep +0 -0

- main.py +57 -0

- scrfd.py +329 -0

- script.py +41 -0

Dockerfile.nvidia

ADDED

@@ -0,0 +1,20 @@
+FROM nvidia/cuda:11.8.0-cudnn8-runtime-ubuntu22.04
+
+# Always use UTC on a server
+RUN ln -snf /usr/share/zoneinfo/UTC /etc/localtime && echo UTC > /etc/timezone
+
+RUN DEBIAN_FRONTEND=noninteractive apt update && apt install -y python3 python3-pip python3-tk git ffmpeg nvidia-cuda-toolkit nvidia-container-runtime libnvidia-decode-525-server wget unzip
+RUN wget https://github.com/deepinsight/insightface/releases/download/v0.7/buffalo_l.zip -O /tmp/buffalo_l.zip && \
+    mkdir -p /root/.insightface/models/buffalo_l && \
+    cd /root/.insightface/models/buffalo_l && \
+    unzip /tmp/buffalo_l.zip && \
+    rm -f /tmp/buffalo_l.zip
+
+RUN pip install nvidia-tensorrt
+RUN git clone https://github.com/xaviviro/refacer && cd refacer && pip install -r requirements-GPU.txt
+
+WORKDIR /refacer
+
+# Test following commands in container to make sure GPU stuff works
+# nvidia-smi
+# python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

LICENSE

ADDED

@@ -0,0 +1,21 @@
+MIT License
+
+Copyright (c) 2023 xaviviro
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.

README.md

CHANGED

|

@@ -1,14 +1,126 @@

|

|

| 1 |

---

|

| 2 |

-

|

|

|

|

|

|

|

| 3 |

emoji: 🚀

|

| 4 |

colorFrom: blue

|

| 5 |

colorTo: yellow

|

| 6 |

-

sdk: gradio

|

| 7 |

-

sdk_version: 5.9.1

|

| 8 |

-

app_file: app.py

|

| 9 |

-

pinned: false

|

| 10 |

-

license: mit

|

| 11 |

-

short_description: 'swapface '

|

| 12 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 13 |

|

| 14 |

-

|

|

|

|

| 1 |

---

|

| 2 |

+

license: mit

|

| 3 |

+

title: Refacerr

|

| 4 |

+

sdk: gradio

|

| 5 |

emoji: 🚀

|

| 6 |

colorFrom: blue

|

| 7 |

colorTo: yellow

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 8 |

---

|

| 9 |

+

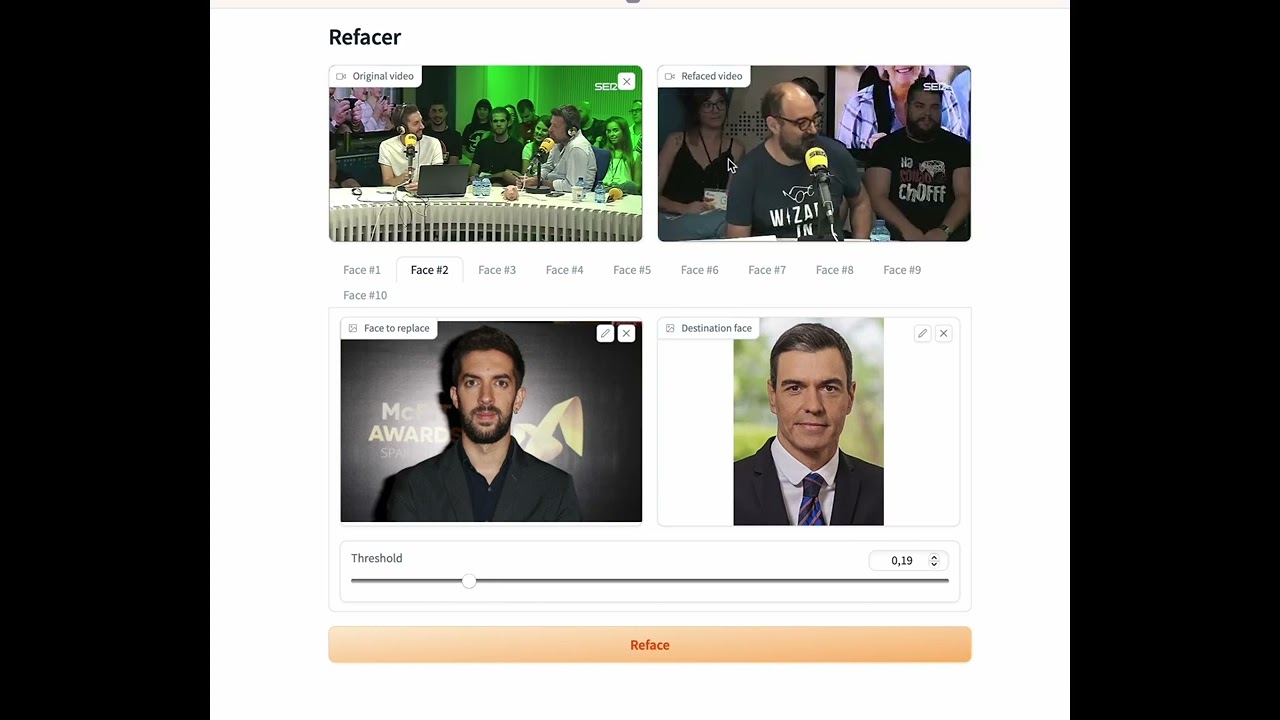

# Refacer: One-Click Deepfake Multi-Face Swap Tool

|

| 10 |

+

|

| 11 |

+

[](https://colab.research.google.com/github/xaviviro/refacer/blob/master/notebooks/Refacer_colab.ipynb)

|

| 12 |

+

|

| 13 |

+

👉 [Watch demo on Youtube](https://youtu.be/mXk1Ox7B244)

|

| 14 |

+

|

| 15 |

+

Refacer, a simple tool that allows you to create deepfakes with multiple faces with just one click! This project was inspired by [Roop](https://github.com/s0md3v/roop) and is powered by the excellent [Insightface](https://github.com/deepinsight/insightface). Refacer requires no training - just one photo and you're ready to go.

|

| 16 |

+

|

| 17 |

+

:warning: Please, before using the code from this repository, make sure to read the [disclaimer](https://github.com/xaviviro/refacer/tree/main#disclaimer).

|

| 18 |

+

|

| 19 |

+

## Demonstration

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

[](https://youtu.be/mXk1Ox7B244)

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

## System Compatibility

|

| 27 |

+

|

| 28 |

+

Refacer has been thoroughly tested on the following operating systems:

|

| 29 |

+

|

| 30 |

+

| Operating System | CPU Support | GPU Support |

|

| 31 |

+

| ---------------- | ----------- | ----------- |

|

| 32 |

+

| MacOSX | ✅ | :warning: |

|

| 33 |

+

| Windows | ✅ | ✅ |

|

| 34 |

+

| Linux | ✅ | ✅ |

|

| 35 |

+

|

| 36 |

+

The application is compatible with both CPU and GPU (Nvidia CUDA) environments, and MacOSX(CoreML)

|

| 37 |

+

|

| 38 |

+

:warning: Please note, we do not recommend using `onnxruntime-silicon` on MacOSX due to an apparent issue with memory management. If you manage to compile `onnxruntime` for Silicon, the program is prepared to use CoreML.

|

| 39 |

+

|

| 40 |

+

## Prerequisites

|

| 41 |

+

|

| 42 |

+

Ensure that you have `ffmpeg` installed and correctly configured. There are many guides available on the internet to help with this. Here are a few (note: I did not create these guides):

|

| 43 |

+

|

| 44 |

+

- [How to Install FFmpeg](https://www.hostinger.com/tutorials/how-to-install-ffmpeg)

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

## Installation

|

| 48 |

+

|

| 49 |

+

Refacer has been tested and is known to work with Python 3.10.9, but it is likely to work with other Python versions as well. It is recommended to use a virtual environment for setting up and running the project to avoid potential conflicts with other Python packages you may have installed.

|

| 50 |

+

|

| 51 |

+

Follow these steps to install Refacer:

|

| 52 |

+

|

| 53 |

+

1. Clone the repository:

|

| 54 |

+

```bash

|

| 55 |

+

git clone https://github.com/xaviviro/refacer.git

|

| 56 |

+

cd refacer

|

| 57 |

+

```

|

| 58 |

+

|

| 59 |

+

2. Download the Insightface model:

|

| 60 |

+

You can manually download the model created by Insightface from this [link](https://huggingface.co/deepinsight/inswapper/resolve/main/inswapper_128.onnx) and add it to the project folder. Alternatively, if you have `wget` installed, you can use the following command:

|

| 61 |

+

```bash

|

| 62 |

+

wget --content-disposition https://huggingface.co/deepinsight/inswapper/resolve/main/inswapper_128.onnx

|

| 63 |

+

```

|

| 64 |

+

|

| 65 |

+

3. Install dependencies:

|

| 66 |

+

|

| 67 |

+

* For CPU (compatible with Windows, MacOSX, and Linux):

|

| 68 |

+

```bash

|

| 69 |

+

pip install -r requirements.txt

|

| 70 |

+

```

|

| 71 |

+

|

| 72 |

+

* For GPU (compatible with Windows and Linux only, requires a NVIDIA GPU with CUDA and its libraries):

|

| 73 |

+

```bash

|

| 74 |

+

pip install -r requirements-GPU.txt

|

| 75 |

+

```

|

| 76 |

+

|

| 77 |

+

* For CoreML (compatible with MacOSX, requires Silicon architecture):

|

| 78 |

+

```bash

|

| 79 |

+

pip install -r requirements-COREML.txt

|

| 80 |

+

```

|

| 81 |

+

|

| 82 |

+

For more information on installing the CUDA necessary to use `onnxruntime-gpu`, please refer directly to the official [ONNX Runtime repository](https://github.com/microsoft/onnxruntime/).

|

| 83 |

+

|

| 84 |

+

For more details on using the Insightface model, you can refer to their [example](https://github.com/deepinsight/insightface/tree/master/examples/in_swapper).

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

## Usage

|

| 88 |

+

|

| 89 |

+

Once you have successfully installed Refacer and its dependencies, you can run the application using the following command:

|

| 90 |

+

|

| 91 |

+

```bash

|

| 92 |

+

python app.py

|

| 93 |

+

```

|

| 94 |

+

|

| 95 |

+

Then, open your web browser and navigate to the following address:

|

| 96 |

+

|

| 97 |

+

```

|

| 98 |

+

http://127.0.0.1:7680

|

| 99 |

+

```

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

## Questions?

|

| 103 |

+

|

| 104 |

+

If you have any questions or issues, feel free to [open an issue](https://github.com/xaviviro/refacer/issues/new) or submit a pull request.

|

| 105 |

+

|

| 106 |

+

|

| 107 |

+

## Recognition Module

|

| 108 |

+

|

| 109 |

+

The `recognition` folder in this repository is derived from Insightface's GitHub repository. You can find the original source code here: [Insightface Recognition Source Code](https://github.com/deepinsight/insightface/tree/master/web-demos/src_recognition)

|

| 110 |

+

|

| 111 |

+

This module is used for recognizing and handling face data within the Refacer application, enabling its powerful deepfake capabilities. We are grateful to Insightface for their work and for making their code available.

|

| 112 |

+

|

| 113 |

+

|

| 114 |

+

## Disclaimer

|

| 115 |

+

|

| 116 |

+

> :warning: This software is provided "as is", without warranty of any kind, express or implied, including but not limited to the warranties of merchantability, fitness for a particular purpose and noninfringement. In no event shall the authors or copyright holders be liable for any claim, damages or other liability, whether in an action of contract, tort or otherwise, arising from, out of or in connection with the software or the use or other dealings in the software.

|

| 117 |

+

|

| 118 |

+

> :warning: This software is intended for educational and research purposes only. It is not intended for use in any malicious activities. The author of this software does not condone or support the use of this software for any harmful actions, including but not limited to identity theft, invasion of privacy, or defamation. Any use of this software for such purposes is strictly prohibited.

|

| 119 |

+

|

| 120 |

+

> :warning: You may only use this software with images for which you have the right to use and the necessary permissions. Any use of images without the proper rights and permissions is strictly prohibited.

|

| 121 |

+

|

| 122 |

+

> :warning: The author of this software is not responsible for any misuse of the software or for any violation of rights and privacy resulting from such misuse.

|

| 123 |

+

|

| 124 |

+

> :warning: To prevent misuse, the software contains an integrated protective mechanism that prevents it from working with illegal or similar types of media.

|

| 125 |

|

| 126 |

+

> :warning: By using this software, you agree to abide by all applicable laws, to respect the rights and privacy of others, and to use the software responsibly and ethically.

|
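The README's prerequisites (an `ffmpeg` binary on the PATH and the `inswapper_128.onnx` file in the project folder) can be checked before launching `app.py`. The following is a minimal sketch under the assumption that the model sits in the project directory; `check_prerequisites` is a hypothetical helper and not part of Refacer.

```python
import shutil
from pathlib import Path


def check_prerequisites(project_dir=".", model_name="inswapper_128.onnx"):
    """Return a list of human-readable problems; an empty list means ready."""
    problems = []
    # shutil.which returns None when the executable is not on the PATH.
    if shutil.which("ffmpeg") is None:
        problems.append("ffmpeg not found on PATH")
    # The README asks for the model file to be placed in the project folder.
    if not (Path(project_dir) / model_name).is_file():
        problems.append(f"{model_name} not found in {project_dir}")
    return problems


if __name__ == "__main__":
    for problem in check_prerequisites():
        print("missing:", problem)
```

Note that `app.py` in this commit launches Gradio on port 7860, so the address printed at startup is probably the one to use rather than the 7680 shown in the README.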

app.py

ADDED

@@ -0,0 +1,117 @@
+import gradio as gr
+import cv2
+import multiprocessing
+import os
+import requests
+from refacer import Refacer
+
+# Hugging Face URL to download the model
+model_url = "https://huggingface.co/ofter/4x-UltraSharp/resolve/main/inswapper_128.onnx"
+model_path = "./inswapper_128.onnx"
+
+# Function to download the model
+def download_model():
+    if not os.path.exists(model_path):
+        print("Downloading inswapper_128.onnx...")
+        response = requests.get(model_url)
+        if response.status_code == 200:
+            with open(model_path, 'wb') as f:
+                f.write(response.content)
+            print("Model downloaded successfully!")
+        else:
+            raise Exception(f"Failed to download the model. Status code: {response.status_code}")
+    else:
+        print("Model already exists.")
+
+# Download the model when the script runs
+download_model()
+
+# Initialize Refacer class (force CPU mode)
+refacer = Refacer(force_cpu=True)
+
+# Dummy function to simulate frame-level processing
+def process_frame(frame, origin_face, destination_face, threshold):
+    # Simulate face swapping or any processing needed
+    result_frame = refacer.reface(frame, [{
+        'origin': origin_face,
+        'destination': destination_face,
+        'threshold': threshold
+    }])
+    return result_frame
+
+# Function to process the video in parallel using multiprocessing
+def process_video(video_path, origins, destinations, thresholds, max_processes=2):
+    cap = cv2.VideoCapture(video_path)
+    frames = []
+
+    # Read all frames from the video
+    while cap.isOpened():
+        ret, frame = cap.read()
+        if not ret:
+            break
+        frames.append(frame)
+
+    cap.release()
+
+    # Parallel processing of frames with limited processes (for CPU optimization)
+    with multiprocessing.Pool(processes=max_processes) as pool:
+        processed_frames = pool.starmap(process_frame, [
+            (frame, origins[min(i, len(origins) - 1)], destinations[min(i, len(destinations) - 1)], thresholds[min(i, len(thresholds) - 1)])
+            for i, frame in enumerate(frames)
+        ])
+
+    # Saving the processed frames back into a video
+    output_video_path = "processed_video.mp4"
+    fourcc = cv2.VideoWriter_fourcc(*'mp4v')  # Compression using mp4 codec
+    out = cv2.VideoWriter(output_video_path, fourcc, 30.0, (640, 360))  # Reduce resolution to speed up processing
+
+    for frame in processed_frames:
+        out.write(frame)
+
+    out.release()
+    return output_video_path
+
+# Gradio Interface function
+def run(video_path, *vars):
+    # Split the inputs into origins, destinations, and thresholds based on num_faces
+    num_faces = 5  # You can adjust this based on your UI
+    origins = vars[:num_faces]
+    destinations = vars[num_faces:2*num_faces]
+    thresholds = vars[2*num_faces:]
+
+    # Ensure there are no index errors by limiting the number of inputs
+    if len(origins) != num_faces or len(destinations) != num_faces or len(thresholds) != num_faces:
+        return "Please provide input for all faces."
+
+    refaced_video_path = process_video(video_path, origins, destinations, thresholds)
+    print(f"Refaced video can be found at {refaced_video_path}")
+
+    return refaced_video_path
+
+# Prepare Gradio components
+origin = []
+destination = []
+thresholds = []
+
+with gr.Blocks() as demo:
+    with gr.Row():
+        gr.Markdown("# Refacer")
+    with gr.Row():
+        video_input = gr.Video(label="Original video", format="mp4")
+        video_output = gr.Video(label="Refaced video", interactive=False, format="mp4")
+
+    for i in range(5):  # Set max faces to 5
+        with gr.Tab(f"Face #{i+1}"):
+            with gr.Row():
+                origin.append(gr.Image(label="Face to replace"))
+                destination.append(gr.Image(label="Destination face"))
+            with gr.Row():
+                thresholds.append(gr.Slider(label="Threshold", minimum=0.0, maximum=1.0, value=0.2))
+
+    with gr.Row():
+        button = gr.Button("Reface", variant="primary")
+
+    button.click(fn=run, inputs=[video_input] + origin + destination + thresholds, outputs=[video_output])
+
+# Launch the Gradio app
+demo.launch(show_error=True, server_name="0.0.0.0", server_port=7860)
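`run` in `app.py` receives the Gradio inputs as one flat tuple: five origin images, five destination images, then five thresholds. The sketch below regroups such a tuple into one dictionary per face and drops tabs that were left empty. `group_faces` is a hypothetical helper, and treating a `None` destination as "tab unused" is an assumption about how empty image inputs arrive, not something the commit states.

```python
def group_faces(values, num_faces=5):
    """Regroup a flat (origins..., destinations..., thresholds...) sequence."""
    if len(values) != 3 * num_faces:
        raise ValueError(f"expected {3 * num_faces} values, got {len(values)}")
    origins = values[:num_faces]
    destinations = values[num_faces:2 * num_faces]
    thresholds = values[2 * num_faces:]
    faces = []
    for origin, destination, threshold in zip(origins, destinations, thresholds):
        # Skip tabs where no destination face was supplied (assumed to be None).
        if destination is None:
            continue
        faces.append({
            "origin": origin,
            "destination": destination,
            "threshold": threshold,
        })
    return faces
```

Grouping by face rather than indexing the three lists by frame number keeps each origin paired with its own destination and threshold, which is the shape of the list that `process_frame` passes to `refacer.reface`.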

arcface_onnx.py

ADDED

@@ -0,0 +1,91 @@
+# -*- coding: utf-8 -*-
+# @Organization : insightface.ai
+# @Author : Jia Guo
+# @Time : 2021-05-04
+# @Function :
+
+import numpy as np
+import cv2
+import onnx
+import onnxruntime
+import face_align
+
+__all__ = [
+    'ArcFaceONNX',
+]
+
+
+class ArcFaceONNX:
+    def __init__(self, model_file=None, session=None):
+        assert model_file is not None
+        self.model_file = model_file
+        self.session = session
+        self.taskname = 'recognition'
+        find_sub = False
+        find_mul = False
+        model = onnx.load(self.model_file)
+        graph = model.graph
+        for nid, node in enumerate(graph.node[:8]):
+            #print(nid, node.name)
+            if node.name.startswith('Sub') or node.name.startswith('_minus'):
+                find_sub = True
+            if node.name.startswith('Mul') or node.name.startswith('_mul'):
+                find_mul = True
+        if find_sub and find_mul:
+            #mxnet arcface model
+            input_mean = 0.0
+            input_std = 1.0
+        else:
+            input_mean = 127.5
+            input_std = 127.5
+        self.input_mean = input_mean
+        self.input_std = input_std
+        #print('input mean and std:', self.input_mean, self.input_std)
+        if self.session is None:
+            self.session = onnxruntime.InferenceSession(self.model_file, providers=['CoreMLExecutionProvider','CUDAExecutionProvider'])
+        input_cfg = self.session.get_inputs()[0]
+        input_shape = input_cfg.shape
+        input_name = input_cfg.name
+        self.input_size = tuple(input_shape[2:4][::-1])
+        self.input_shape = input_shape
+        outputs = self.session.get_outputs()
+        output_names = []
+        for out in outputs:
+            output_names.append(out.name)
+        self.input_name = input_name
+        self.output_names = output_names
+        assert len(self.output_names)==1
+        self.output_shape = outputs[0].shape
+
+    def prepare(self, ctx_id, **kwargs):
+        if ctx_id<0:
+            self.session.set_providers(['CPUExecutionProvider'])
+
+    def get(self, img, kps):
+        aimg = face_align.norm_crop(img, landmark=kps, image_size=self.input_size[0])
+        embedding = self.get_feat(aimg).flatten()
+        return embedding
+
+    def compute_sim(self, feat1, feat2):
+        from numpy.linalg import norm
+        feat1 = feat1.ravel()
+        feat2 = feat2.ravel()
+        sim = np.dot(feat1, feat2) / (norm(feat1) * norm(feat2))
+        return sim
+
+    def get_feat(self, imgs):
+        if not isinstance(imgs, list):
+            imgs = [imgs]
+        input_size = self.input_size
+
+        blob = cv2.dnn.blobFromImages(imgs, 1.0 / self.input_std, input_size,
+                                      (self.input_mean, self.input_mean, self.input_mean), swapRB=True)
+        net_out = self.session.run(self.output_names, {self.input_name: blob})[0]
+        return net_out
+
+    def forward(self, batch_data):
+        blob = (batch_data - self.input_mean) / self.input_std
+        net_out = self.session.run(self.output_names, {self.input_name: blob})[0]
+        return net_out
+
+
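`compute_sim` in `arcface_onnx.py` is the cosine similarity of two embeddings. The following is a dependency-free sketch of the same formula on plain Python sequences, together with a threshold check of the kind the `Threshold` slider in `app.py` suggests. `is_same_face` and its default of 0.2 (the slider's initial value) are illustrative assumptions, not Refacer's actual matching logic.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def is_same_face(a, b, threshold=0.2):
    """Hypothetical decision rule: similarity at or above the threshold."""
    return cosine_similarity(a, b) >= threshold
```

Because the score depends only on the angle between the embeddings, scaling either vector leaves the result unchanged, which is why `compute_sim` can compare raw network outputs without normalising them first.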

dockerfile

ADDED

@@ -0,0 +1,33 @@
+FROM python:3.10
+
+# Install dependencies
+RUN apt-get update && apt-get install -y \
+    git \
+    git-lfs \
+    ffmpeg \
+    libsm6 \
+    libxext6 \
+    cmake \
+    rsync \
+    libgl1-mesa-glx \
+    curl \
+    nodejs \
+    && rm -rf /var/lib/apt/lists/* \
+    && git lfs install
+
+# Clone the insightface repository and install scrfd manually
+RUN git clone https://github.com/deepinsight/insightface.git /opt/insightface && \
+    cd /opt/insightface && \
+    pip install .
+
+# Set working directory
+WORKDIR /home/user/app
+
+# Copy the application files
+COPY --chown=1000:1000 . /home/user/app
+
+# Install Python dependencies
+RUN pip install --no-cache-dir -r requirements.txt
+
+# Command to run the application
+CMD ["python", "app.py"]

face_align.py

ADDED

|

@@ -0,0 +1,141 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import cv2

|

| 2 |

+

import numpy as np

|

| 3 |

+

from skimage import transform as trans

|

| 4 |

+

|

| 5 |

+

src1 = np.array([[51.642, 50.115], [57.617, 49.990], [35.740, 69.007],

|

| 6 |

+

[51.157, 89.050], [57.025, 89.702]],

|

| 7 |

+

dtype=np.float32)

|

| 8 |

+

#<--left

|

| 9 |

+

src2 = np.array([[45.031, 50.118], [65.568, 50.872], [39.677, 68.111],

|

| 10 |

+

[45.177, 86.190], [64.246, 86.758]],

|

| 11 |

+

dtype=np.float32)

|

| 12 |

+

|

| 13 |

+

#---frontal

|

| 14 |

+

src3 = np.array([[39.730, 51.138], [72.270, 51.138], [56.000, 68.493],

|

| 15 |

+

[42.463, 87.010], [69.537, 87.010]],

|

| 16 |

+

dtype=np.float32)

|

| 17 |

+

|

| 18 |

+

#-->right

|

| 19 |

+

src4 = np.array([[46.845, 50.872], [67.382, 50.118], [72.737, 68.111],

|

| 20 |

+

[48.167, 86.758], [67.236, 86.190]],

|

| 21 |

+

dtype=np.float32)

|

| 22 |

+

|

| 23 |

+

#-->right profile

|

| 24 |

+

src5 = np.array([[54.796, 49.990], [60.771, 50.115], [76.673, 69.007],

|

| 25 |

+

[55.388, 89.702], [61.257, 89.050]],

|

| 26 |

+

dtype=np.float32)

|

| 27 |

+

|

| 28 |

+

src = np.array([src1, src2, src3, src4, src5])

|

| 29 |

+

src_map = {112: src, 224: src * 2}

|

| 30 |

+

|

| 31 |

+

arcface_src = np.array(

|

| 32 |

+

[[38.2946, 51.6963], [73.5318, 51.5014], [56.0252, 71.7366],

|

| 33 |

+

[41.5493, 92.3655], [70.7299, 92.2041]],

|

| 34 |

+

dtype=np.float32)

|

| 35 |

+

|

| 36 |

+

arcface_src = np.expand_dims(arcface_src, axis=0)

|

| 37 |

+

|

| 38 |

+

# In[66]:

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

# lmk is prediction; src is template

|

| 42 |

+

def estimate_norm(lmk, image_size=112, mode='arcface'):

|

| 43 |

+

assert lmk.shape == (5, 2)

|

| 44 |

+

tform = trans.SimilarityTransform()

|

| 45 |

+

lmk_tran = np.insert(lmk, 2, values=np.ones(5), axis=1)

|

| 46 |

+

min_M = []

|

| 47 |

+

min_index = []

|

| 48 |

+

min_error = float('inf')

|

| 49 |

+

if mode == 'arcface':

|

| 50 |

+

if image_size == 112:

|

| 51 |

+

src = arcface_src

|

| 52 |

+

else:

|

| 53 |

+

src = float(image_size) / 112 * arcface_src

|

| 54 |

+

else:

|

| 55 |

+

src = src_map[image_size]

|

| 56 |

+

for i in np.arange(src.shape[0]):

|

| 57 |

+

tform.estimate(lmk, src[i])

|

| 58 |

+

M = tform.params[0:2, :]

|

| 59 |

+

results = np.dot(M, lmk_tran.T)

|

| 60 |

+

results = results.T

|

| 61 |

+

error = np.sum(np.sqrt(np.sum((results - src[i])**2, axis=1)))

|

| 62 |

+

# print(error)

|

| 63 |

+

if error < min_error:

|

| 64 |

+

min_error = error

|

| 65 |

+

min_M = M

|

| 66 |

+

min_index = i

|

| 67 |

+

return min_M, min_index

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

def norm_crop(img, landmark, image_size=112, mode='arcface'):

|

| 71 |

+

M, pose_index = estimate_norm(landmark, image_size, mode)

|

| 72 |

+

warped = cv2.warpAffine(img, M, (image_size, image_size), borderValue=0.0)

|

| 73 |

+

return warped

|

| 74 |

+

|

| 75 |

+

def square_crop(im, S):

|

| 76 |

+

if im.shape[0] > im.shape[1]:

|

| 77 |

+

height = S

|

| 78 |

+

width = int(float(im.shape[1]) / im.shape[0] * S)

|

| 79 |

+

scale = float(S) / im.shape[0]

|

| 80 |

+

else:

|

| 81 |

+

width = S

|

| 82 |

+

height = int(float(im.shape[0]) / im.shape[1] * S)

|

| 83 |

+        scale = float(S) / im.shape[1]
+    resized_im = cv2.resize(im, (width, height))
+    det_im = np.zeros((S, S, 3), dtype=np.uint8)
+    det_im[:resized_im.shape[0], :resized_im.shape[1], :] = resized_im
+    return det_im, scale
+
+
+def transform(data, center, output_size, scale, rotation):
+    scale_ratio = scale
+    rot = float(rotation) * np.pi / 180.0
+    #translation = (output_size/2-center[0]*scale_ratio, output_size/2-center[1]*scale_ratio)
+    t1 = trans.SimilarityTransform(scale=scale_ratio)
+    cx = center[0] * scale_ratio
+    cy = center[1] * scale_ratio
+    t2 = trans.SimilarityTransform(translation=(-1 * cx, -1 * cy))
+    t3 = trans.SimilarityTransform(rotation=rot)
+    t4 = trans.SimilarityTransform(translation=(output_size / 2,
+                                                output_size / 2))
+    t = t1 + t2 + t3 + t4
+    M = t.params[0:2]
+    cropped = cv2.warpAffine(data,
+                             M, (output_size, output_size),
+                             borderValue=0.0)
+    return cropped, M
+
+
+def trans_points2d(pts, M):
+    new_pts = np.zeros(shape=pts.shape, dtype=np.float32)
+    for i in range(pts.shape[0]):
+        pt = pts[i]
+        new_pt = np.array([pt[0], pt[1], 1.], dtype=np.float32)
+        new_pt = np.dot(M, new_pt)
+        #print('new_pt', new_pt.shape, new_pt)
+        new_pts[i] = new_pt[0:2]
+
+    return new_pts
+
+
+def trans_points3d(pts, M):
+    scale = np.sqrt(M[0][0] * M[0][0] + M[0][1] * M[0][1])
+    #print(scale)
+    new_pts = np.zeros(shape=pts.shape, dtype=np.float32)
+    for i in range(pts.shape[0]):
+        pt = pts[i]
+        new_pt = np.array([pt[0], pt[1], 1.], dtype=np.float32)
+        new_pt = np.dot(M, new_pt)
+        #print('new_pt', new_pt.shape, new_pt)
+        new_pts[i][0:2] = new_pt[0:2]
+        new_pts[i][2] = pts[i][2] * scale
+
+    return new_pts
+
+
+def trans_points(pts, M):
+    if pts.shape[1] == 2:
+        return trans_points2d(pts, M)
+    else:
+        return trans_points3d(pts, M)
+

|
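The `transform` function above chains four similarity transforms: scale, move the scaled center to the origin, rotate, then move the origin to the middle of the output crop. The following is a minimal sketch of that composition in plain Python, assuming that `t1 + t2` applies `t1` first and `t2` second, so the combined matrix is the product `t4 · t3 · t2 · t1`. The helper names `matmul3`, `compose_alignment`, and `apply_affine` are hypothetical and are not part of `face_align.py`.

```python
import math


def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def compose_alignment(center, output_size, scale, rotation_deg):
    """Build the 2x3 affine matrix: scale, center to origin, rotate, shift to crop center."""
    rot = math.radians(rotation_deg)
    cx, cy = center[0] * scale, center[1] * scale
    t1 = [[scale, 0.0, 0.0], [0.0, scale, 0.0], [0.0, 0.0, 1.0]]  # uniform scale
    t2 = [[1.0, 0.0, -cx], [0.0, 1.0, -cy], [0.0, 0.0, 1.0]]      # scaled center -> origin
    t3 = [[math.cos(rot), -math.sin(rot), 0.0],
          [math.sin(rot), math.cos(rot), 0.0],
          [0.0, 0.0, 1.0]]                                        # rotation about the origin
    half = output_size / 2
    t4 = [[1.0, 0.0, half], [0.0, 1.0, half], [0.0, 0.0, 1.0]]    # origin -> crop center
    m = matmul3(t4, matmul3(t3, matmul3(t2, t1)))
    return m[:2]  # top two rows, the shape cv2.warpAffine expects


def apply_affine(m, pt):
    """Apply a 2x3 affine matrix to a single (x, y) point."""
    x, y = pt
    return (m[0][0] * x + m[0][1] * y + m[0][2],
            m[1][0] * x + m[1][1] * y + m[1][2])
```

Under these assumptions the input `center` always lands on `(output_size / 2, output_size / 2)`, and the scale can be recovered as `sqrt(M[0][0]**2 + M[0][1]**2)`, which is the quantity `trans_points3d` uses to rescale the z coordinate.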

gitattributes

ADDED

|

@@ -0,0 +1,37 @@


+*.7z filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.bz2 filter=lfs diff=lfs merge=lfs -text
+*.ckpt filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.gz filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.mlmodel filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.npy filter=lfs diff=lfs merge=lfs -text
+*.npz filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.parquet filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pickle filter=lfs diff=lfs merge=lfs -text
+*.pkl filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.rar filter=lfs diff=lfs merge=lfs -text
+*.safetensors filter=lfs diff=lfs merge=lfs -text
+saved_model/**/* filter=lfs diff=lfs merge=lfs -text
+*.tar.* filter=lfs diff=lfs merge=lfs -text
+*.tar filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tgz filter=lfs diff=lfs merge=lfs -text
+*.wasm filter=lfs diff=lfs merge=lfs -text
+*.xz filter=lfs diff=lfs merge=lfs -text
+*.zip filter=lfs diff=lfs merge=lfs -text
+*.zst filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -text
+demo.gif filter=lfs diff=lfs merge=lfs -text
+out filter=lfs diff=lfs merge=lfs -text

gitignore

ADDED

|

@@ -0,0 +1,173 @@


+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+share/python-wheels/
+*.egg-info/
+.installed.cfg
+*.egg
+MANIFEST
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.nox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+*.py,cover
+.hypothesis/
+.pytest_cache/
+cover/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+db.sqlite3
+db.sqlite3-journal
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+.pybuilder/
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# IPython
+profile_default/
+ipython_config.py
+
+# pyenv
+# For a library or package, you might want to ignore these files since the code is
+# intended to run in multiple environments; otherwise, check them in:
+# .python-version
+
+# pipenv
+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
+# However, in case of collaboration, if having platform-specific dependencies or dependencies
+# having no cross-platform support, pipenv may install dependencies that don't work, or not
+# install all needed dependencies.
+#Pipfile.lock
+
+# poetry
+# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.
+# This is especially recommended for binary packages to ensure reproducibility, and is more
+# commonly ignored for libraries.
+# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control
+#poetry.lock
+
+# pdm
+# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.
+#pdm.lock
+# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it
+# in version control.
+# https://pdm.fming.dev/#use-with-ide
+.pdm.toml
+
+# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm
+__pypackages__/
+
+# Celery stuff
+celerybeat-schedule
+celerybeat.pid
+
+# SageMath parsed files
+*.sage.py
+
+# Environments
+.env
+.venv
+env/
+venv/
+ENV/
+env.bak/
+venv.bak/
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+.dmypy.json
+dmypy.json
+
+# Pyre type checker
+.pyre/
+
+# pytype static type analyzer
+.pytype/
+
+# Cython debug symbols
+cython_debug/
+
+# PyCharm
+# JetBrains specific template is maintained in a separate JetBrains.gitignore that can
+# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore
+# and can be added to the global gitignore or merged into this file. For a more nuclear
+# option (not recommended) you can uncomment the following to ignore the entire idea folder.
+#.idea/
+
+out/*
+!out/.gitkeep
+media
+tests
+*.onnx
+
+aaa.md
+
+*_test.py
+img.jpg
+test_data
+testsrc.mp4

gitkeep

ADDED

|

File without changes

|

main.py

ADDED

|

@@ -0,0 +1,57 @@


+#!/usr/bin/env python
+
+import os
+import os.path as osp
+import argparse
+import cv2
+import numpy as np
+import onnxruntime
+from scrfd import SCRFD
+from arcface_onnx import ArcFaceONNX
+
+onnxruntime.set_default_logger_severity(5)
+
+assets_dir = osp.expanduser('~/.insightface/models/buffalo_l')
+
+detector = SCRFD(os.path.join(assets_dir, 'det_10g.onnx'))
+detector.prepare(0)
+model_path = os.path.join(assets_dir, 'w600k_r50.onnx')
+rec = ArcFaceONNX(model_path)
+rec.prepare(0)
+
+def parse_args() -> argparse.Namespace:
+    parser = argparse.ArgumentParser()
+    parser.add_argument('img1', type=str)
+    parser.add_argument('img2', type=str)
+    return parser.parse_args()
+
+
+def func(args):
+    image1 = cv2.imread(args.img1)
+    image2 = cv2.imread(args.img2)
+    bboxes1, kpss1 = detector.autodetect(image1, max_num=1)
+    if bboxes1.shape[0]==0:
+        return -1.0, "Face not found in Image-1"
+    bboxes2, kpss2 = detector.autodetect(image2, max_num=1)
+    if bboxes2.shape[0]==0:
+        return -1.0, "Face not found in Image-2"
+    kps1 = kpss1[0]
+    kps2 = kpss2[0]
+    feat1 = rec.get(image1, kps1)
+    feat2 = rec.get(image2, kps2)
+    sim = rec.compute_sim(feat1, feat2)
+    if sim<0.2:
+        conclu = 'They are NOT the same person'
+    elif sim>=0.2 and sim<0.28:
+        conclu = 'They are LIKELY TO be the same person'
+    else:
+        conclu = 'They ARE the same person'
+    return sim, conclu
+
+
+
+if __name__ == '__main__':
+    args = parse_args()
+    output = func(args)
+    print('sim: %.4f, message: %s'%(output[0], output[1]))
+

|
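The decision step in `func` maps a similarity score onto three verdicts using the thresholds 0.2 and 0.28. The sketch below isolates that logic in plain Python. It assumes `rec.compute_sim` returns the cosine similarity of the two embeddings, which this diff does not show, so treat `cosine_similarity` as a stand-in. The names `cosine_similarity` and `verdict` are hypothetical and do not appear in `main.py`.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (assumed stand-in for compute_sim)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verdict(sim, low=0.2, high=0.28):
    """Map a similarity score to the three messages used in main.py."""
    if sim < low:
        return 'They are NOT the same person'
    if sim < high:
        return 'They are LIKELY TO be the same person'
    return 'They ARE the same person'
```

With this arrangement the middle branch needs no explicit `sim >= low` check, because the first `if` has already returned for smaller scores. Both bounds are inclusive on the upper verdict: a score of exactly 0.2 is "likely" and exactly 0.28 is "same person", matching the comparisons in `func`.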

scrfd.py

ADDED

|

@@ -0,0 +1,329 @@
