Spaces:

Runtime error

Saini committed

Commit · 0b9f920

1 Parent(s): a09d4b8

init

- .gitignore +106 -0

- DOCUMENTATION.md +146 -0

- LICENSE +50 -0

- MiDaS/MiDaS_utils.py +192 -0

- MiDaS/model.pt +3 -0

- MiDaS/monodepth_net.py +186 -0

- MiDaS/run.py +81 -0

- README.md +2 -2

- app.py +230 -0

- argument.yml +52 -0

- bilateral_filtering.py +215 -0

- boostmonodepth_utils.py +68 -0

- checkpoints/color-model.pth +3 -0

- checkpoints/depth-model.pth +3 -0

- checkpoints/edge-model.pth +3 -0

- dog.jpg +0 -0

- download.sh +25 -0

- gradio_queue.db +0 -0

- image/.empty +0 -0

- latest_net_G.pth +3 -0

- main.py +141 -0

- mesh.py +0 -0

- mesh_tools.py +1083 -0

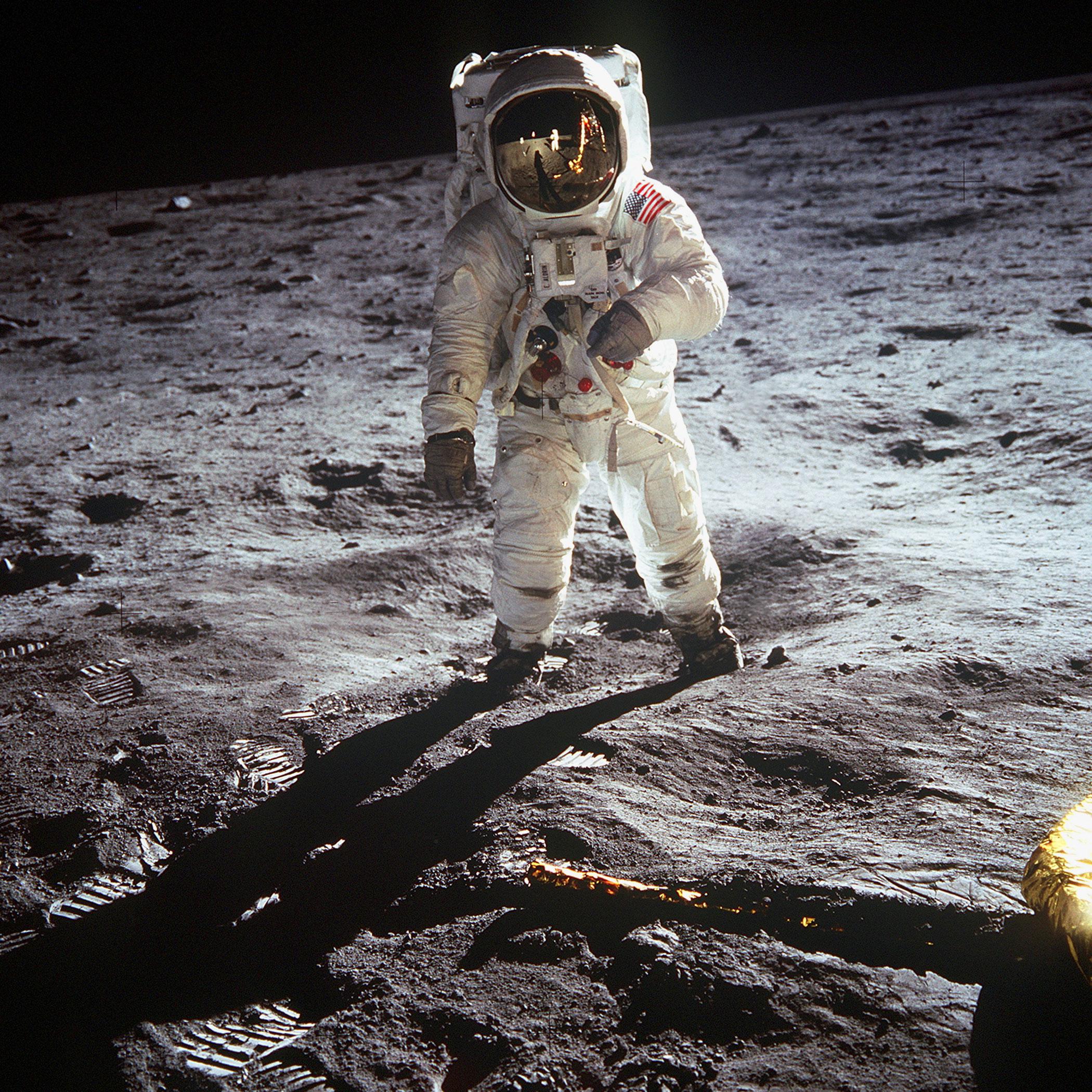

- moon.jpg +0 -0

- networks.py +501 -0

- packages.txt +8 -0

- pyproject.toml +3 -0

- requirements.txt +12 -0

- setup.py +8 -0

- utils.py +1416 -0

.gitignore

ADDED

@@ -0,0 +1,106 @@
+#================================================
+# User Specifics
+#================================================
+## Don't push data up
+image/*
+!image/.empty
+## Ignore vim swap files
+#*.swp
+## MAC why you do this?
+#.DS_Store
+## Our own output files
+#*.out
+
+#================================================
+# Python Specifics
+#================================================
+
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+env/
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+*.egg-info/
+.installed.cfg
+*.egg
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*,cover
+.hypothesis/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+target/
+
+# IPython Notebook
+.ipynb_checkpoints
+
+# pyenv
+.python-version
+
+# celery beat schedule file
+celerybeat-schedule
+
+# dotenv
+.env
+
+# virtualenv
+venv/
+ENV/
+
+# Spyder project settings
+.spyderproject
+
+# Rope project settings
+.ropeproject

DOCUMENTATION.md

ADDED

@@ -0,0 +1,146 @@
+# Documentation
+
+## Python scripts
+
+These files make up our 3D photo inpainting pipeline:
+
+`main.py` Execute 3D photo inpainting
+
+`mesh.py` Functions for context-aware depth inpainting
+
+`mesh_tools.py` Common functions used in `mesh.py`
+
+`utils.py` Common functions used in image preprocessing and data loading
+
+`networks.py` Network architectures of the inpainting models
+
+
+MiDaS/
+
+`run.py` Execute depth estimation
+
+`monodepth_net.py` Network architecture of the depth estimation model
+
+`MiDaS_utils.py` Common functions used in depth estimation
+
+
+## Configuration
+
+```bash
+argument.yml
+```
+
+- `depth_edge_model_ckpt: checkpoints/EdgeModel.pth`
+    - Pretrained model for depth-edge inpainting
+- `depth_feat_model_ckpt: checkpoints/DepthModel.pth`
+    - Pretrained model for depth inpainting
+- `rgb_feat_model_ckpt: checkpoints/ColorModel.pth`
+    - Pretrained model for color inpainting
+- `MiDaS_model_ckpt: MiDaS/model.pt`
+    - Pretrained model for depth estimation
+- `use_boostmonodepth: True`
+    - Use [BoostMonocularDepth](https://github.com/compphoto/BoostingMonocularDepth) to get sharper monocular depth estimation
+- `fps: 40`
+    - Frames per second of the rendered output video
+- `num_frames: 240`
+    - Total number of frames in the rendered output video
+- `x_shift_range: [-0.03, -0.03, -0.03]`
+    - The translations along the x-axis in the rendered output videos.
+    - This parameter is a list. Each element corresponds to a specific camera motion.
+- `y_shift_range: [-0.00, -0.00, -0.03]`
+    - The translations along the y-axis in the rendered output videos.
+    - This parameter is a list. Each element corresponds to a specific camera motion.
+- `z_shift_range: [-0.07, -0.07, -0.07]`
+    - The translations along the z-axis in the rendered output videos.
+    - This parameter is a list. Each element corresponds to a specific camera motion.
+- `traj_types: ['straight-line', 'circle', 'circle']`
+    - The type of camera trajectory.
+    - This parameter is a list.
+    - Currently, we only provide `straight-line` and `circle`.
+- `video_postfix: ['zoom-in', 'swing', 'circle']`
+    - The postfix of the video file name.
+    - This parameter is a list.
+- Note that the number of elements in `x_shift_range`, `y_shift_range`, `z_shift_range`, `traj_types` and `video_postfix` should be equal.
+- `specific: ''`
+    - The name of a specific image to process. By default, all images in the folder are processed.
+- `longer_side_len: 960`
+    - The length of the longer side of the output resolution.
+- `src_folder: image`
+    - Input image directory.
+- `depth_folder: depth`
+    - Estimated depth directory.
+- `mesh_folder: mesh`
+    - Output 3-D mesh directory.
+- `video_folder: video`
+    - Output rendered video directory.
+- `load_ply: False`
+    - Whether to load an existing mesh (.ply) file.
+- `save_ply: True`
+    - Whether to store the output mesh (.ply) file.
+    - Disable this option (`save_ply: False`) to reduce computation time.
+- `inference_video: True`
+    - Whether to render the output video.
+- `gpu_ids: 0`
+    - The ID of the GPU to use. Leave it blank or negative to use the CPU.
+- `offscreen_rendering: True`
+    - If you're executing the process on a remote server (via ssh), please switch on this flag.
+    - Off-screen rendering sometimes results in longer execution time.
+- `img_format: '.jpg'`
+    - Input image format.
+- `depth_format: '.npy'`
+    - Input depth (disparity) format. A NumPy array file is the default.
+    - If the user wants to edit the depth (disparity) map manually, we provide a `.png` format depth (disparity) map.
+    - Remember to switch this parameter from `.npy` to `.png` when using a depth (disparity) map in `.png` format.
+- `require_midas: True`
+    - Set it to `True` if the user wants to use the depth map estimated by `MiDaS`.
+    - Set it to `False` if the user wants to use a manually edited depth map.
+    - If the user wants to edit the depth (disparity) map manually, we provide a `.png` format depth (disparity) map.
+    - Remember to switch this parameter from `True` to `False` when using a manually edited depth map.
+- `depth_threshold: 0.04`
+    - A threshold in disparity: two adjacent pixels are discontinuity pixels
+      if the difference between them exceeds this number.
+- `ext_edge_threshold: 0.002`
+    - The threshold that defines an inpainted depth edge. A pixel in the inpainted edge
+      map belongs to the extended depth edge if the value of that pixel exceeds this number.
+- `sparse_iter: 5`
+    - Total number of iterations of the bilateral median filter
+- `filter_size: [7, 7, 5, 5, 5]`
+    - Window size of the bilateral median filter in each iteration.
+- `sigma_s: 4.0`
+    - Spatial term of the bilateral median filter
+- `sigma_r: 0.5`
+    - Intensity (range) term of the bilateral median filter
+- `redundant_number: 12`
+    - The number that defines short segments. If a depth edge is shorter than this number,
+      it is a short segment and is removed.
+- `background_thickness: 70`
+    - The thickness of the synthesis area.
+- `context_thickness: 140`
+    - The thickness of the context area.
+- `background_thickness_2: 70`
+    - The thickness of the synthesis area in the second inpainting pass.
+- `context_thickness_2: 70`
+    - The thickness of the context area in the second inpainting pass.
+- `discount_factor: 1.00`
+- `log_depth: True`
+    - The scale of depth inpainting. If true, inpainting is performed in log scale.
+      Otherwise, it is performed in linear scale.
+- `largest_size: 512`
+    - The largest size of an inpainted image patch.
+- `depth_edge_dilate: 10`
+    - The thickness of the dilated synthesis area.
+- `depth_edge_dilate_2: 5`
+    - The thickness of the dilated synthesis area in the second inpainting pass.
+- `extrapolate_border: True`
+    - Whether to extrapolate outside the border.
+- `extrapolation_thickness: 60`
+    - The thickness of the extrapolated area.
+- `repeat_inpaint_edge: True`
+    - Whether to apply the depth edge inpainting model repeatedly. Inpainting the depth
+      edge once sometimes results in a short inpainted edge; applying depth edge inpainting repeatedly
+      can help prolong the inpainted depth edge.
+- `crop_border: [0.03, 0.03, 0.05, 0.03]`
+    - The fraction of pixels to crop out around the borders `[top, left, bottom, right]`.
+- `anti_flickering: True`
+    - Whether to avoid the flickering effect in the output video.
+    - This may result in longer computation time in the rendering phase.
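The configuration notes in `DOCUMENTATION.md` state that the five per-trajectory lists must have the same number of elements and that only `straight-line` and `circle` trajectories are provided. A minimal sketch of checking both rules is shown here, assuming the settings have already been loaded into a plain dictionary. The helper name `check_trajectory_lists` and the `config` dictionary are hypothetical and not part of this commit; the values are copied from the documentation.

```python
# Hypothetical helper, not part of the repository: it checks the rule from
# DOCUMENTATION.md that the per-trajectory lists share one length.
TRAJECTORY_KEYS = (
    "x_shift_range",
    "y_shift_range",
    "z_shift_range",
    "traj_types",
    "video_postfix",
)
SUPPORTED_TRAJECTORIES = {"straight-line", "circle"}


def check_trajectory_lists(config):
    """Return the number of camera motions, or raise ValueError."""
    lengths = {key: len(config[key]) for key in TRAJECTORY_KEYS}
    if len(set(lengths.values())) != 1:
        raise ValueError(f"per-trajectory lists differ in length: {lengths}")
    unknown = set(config["traj_types"]) - SUPPORTED_TRAJECTORIES
    if unknown:
        raise ValueError(f"unsupported trajectory types: {sorted(unknown)}")
    return lengths["traj_types"]


# Values copied from the documented defaults.
config = {
    "fps": 40,
    "num_frames": 240,
    "x_shift_range": [-0.03, -0.03, -0.03],
    "y_shift_range": [-0.00, -0.00, -0.03],
    "z_shift_range": [-0.07, -0.07, -0.07],
    "traj_types": ["straight-line", "circle", "circle"],
    "video_postfix": ["zoom-in", "swing", "circle"],
}

num_motions = check_trajectory_lists(config)
clip_seconds = config["num_frames"] / config["fps"]
print(num_motions, clip_seconds)  # prints: 3 6.0
```

With the documented defaults, each of the three camera motions yields a clip of 240 / 40 = 6 seconds. Running a check like this before rendering would surface a mismatched list early rather than partway through the pipeline.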

LICENSE

ADDED

@@ -0,0 +1,50 @@
+
+MIT License
+
+Copyright (c) 2020 Virginia Tech Vision and Learning Lab
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.
+
+------------------ LICENSE FOR MiDaS --------------------
+
+MIT License
+
+Copyright (c) 2019 Intel ISL (Intel Intelligent Systems Lab)
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.
+
+--------------------------- LICENSE FOR EdgeConnect --------------------------------
+
+Attribution-NonCommercial 4.0 International

MiDaS/MiDaS_utils.py

ADDED

@@ -0,0 +1,192 @@
+"""Utils for monoDepth.
+"""
+import sys
+import re
+import numpy as np
+import cv2
+import torch
+import imageio
+
+
+def read_pfm(path):
+    """Read pfm file.
+
+    Args:
+        path (str): path to file
+
+    Returns:
+        tuple: (data, scale)
+    """
+    with open(path, "rb") as file:
+
+        color = None
+        width = None
+        height = None
+        scale = None
+        endian = None
+
+        header = file.readline().rstrip()
+        if header.decode("ascii") == "PF":
+            color = True
+        elif header.decode("ascii") == "Pf":
+            color = False
+        else:
+            raise Exception("Not a PFM file: " + path)
+
+        dim_match = re.match(r"^(\d+)\s(\d+)\s$", file.readline().decode("ascii"))
+        if dim_match:
+            width, height = list(map(int, dim_match.groups()))
+        else:
+            raise Exception("Malformed PFM header.")
+
+        scale = float(file.readline().decode("ascii").rstrip())
+        if scale < 0:
+            # little-endian
+            endian = "<"
+            scale = -scale
+        else:
+            # big-endian
+            endian = ">"
+
+        data = np.fromfile(file, endian + "f")
+        shape = (height, width, 3) if color else (height, width)
+
+        data = np.reshape(data, shape)
+        data = np.flipud(data)
+
+        return data, scale
+
+
+def write_pfm(path, image, scale=1):
+    """Write pfm file.
+
+    Args:
+        path (str): path to file
+        image (array): data
+        scale (int, optional): Scale. Defaults to 1.
+    """
+
+    with open(path, "wb") as file:
+        color = None
+
+        if image.dtype.name != "float32":
+            raise Exception("Image dtype must be float32.")
+
+        image = np.flipud(image)
+
+        if len(image.shape) == 3 and image.shape[2] == 3:  # color image
+            color = True
+        elif (
+            len(image.shape) == 2 or len(image.shape) == 3 and image.shape[2] == 1
+        ):  # greyscale
+            color = False
+        else:
+            raise Exception("Image must have H x W x 3, H x W x 1 or H x W dimensions.")
+
+        file.write(("PF\n" if color else "Pf\n").encode())  # encode both branches; the file is binary
+        file.write("%d %d\n".encode() % (image.shape[1], image.shape[0]))
+
+        endian = image.dtype.byteorder
+
+        if endian == "<" or endian == "=" and sys.byteorder == "little":
+            scale = -scale
+
+        file.write("%f\n".encode() % scale)
+
+        image.tofile(file)
+
+
+def read_image(path):
+    """Read image and output RGB image (0-1).
+
+    Args:
+        path (str): path to file
+
+    Returns:
+        array: RGB image (0-1)
+    """
+    img = cv2.imread(path)
+
+    if img.ndim == 2:
+        img = cv2.cvtColor(img, cv2.COLOR_GRAY2BGR)
+
+    img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB) / 255.0
+
+    return img
+
+
+def resize_image(img):
+    """Resize image and make it fit for network.
+
+    Args:
+        img (array): image
+
+    Returns:
+        tensor: data ready for network
+    """
+    height_orig = img.shape[0]
+    width_orig = img.shape[1]
+    unit_scale = 384.
+
+    if width_orig > height_orig:
+        scale = width_orig / unit_scale
+    else:
+        scale = height_orig / unit_scale
+
+    height = (np.ceil(height_orig / scale / 32) * 32).astype(int)
+    width = (np.ceil(width_orig / scale / 32) * 32).astype(int)
+
+    img_resized = cv2.resize(img, (width, height), interpolation=cv2.INTER_AREA)
+
+    img_resized = (
+        torch.from_numpy(np.transpose(img_resized, (2, 0, 1))).contiguous().float()
+    )
+    img_resized = img_resized.unsqueeze(0)
+
+    return img_resized
+
+
+def resize_depth(depth, width, height):
+    """Resize depth map and bring to CPU (numpy).
+
+    Args:
+        depth (tensor): depth
+        width (int): image width
+        height (int): image height
+
+    Returns:
+        array: processed depth
+    """
+    depth = torch.squeeze(depth[0, :, :, :]).to("cpu")
+    depth = cv2.blur(depth.numpy(), (3, 3))
+    depth_resized = cv2.resize(
+        depth, (width, height), interpolation=cv2.INTER_AREA
+    )
+
+    return depth_resized
+
+def write_depth(path, depth, bits=1):
+    """Write depth map to pfm and png file.
+
+    Args:
+        path (str): filepath without extension
+        depth (array): depth
+    """
+    # write_pfm(path + ".pfm", depth.astype(np.float32))
+
+    depth_min = depth.min()
+    depth_max = depth.max()
+
+    max_val = (2**(8*bits))-1
+
+    if depth_max - depth_min > np.finfo("float").eps:
+        out = max_val * (depth - depth_min) / (depth_max - depth_min)
+    else:
+        out = np.zeros_like(depth)  # keep an array so .astype() below works for a flat depth map
+
+    if bits == 1:
+        cv2.imwrite(path + ".png", out.astype("uint8"))
+    elif bits == 2:
+        cv2.imwrite(path + ".png", out.astype("uint16"))
+
+    return
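The arithmetic in `resize_image` and `write_depth` can be followed without OpenCV or PyTorch. The sketch below reproduces only the number crunching in plain Python: the network input size is the original size scaled so the longer side is about 384 pixels, rounded up to a multiple of 32, and the depth map is min-max normalized to the range of an 8-bit or 16-bit PNG. The function names `network_input_size` and `quantize_depth` are hypothetical, and a flat list of floats stands in for the depth array.

```python
import math
import sys

UNIT_SCALE = 384.0  # longer-side target used by resize_image


def network_input_size(width_orig, height_orig, multiple=32):
    """Return (width, height) following the arithmetic of resize_image."""
    scale = max(width_orig, height_orig) / UNIT_SCALE
    height = math.ceil(height_orig / scale / multiple) * multiple
    width = math.ceil(width_orig / scale / multiple) * multiple
    return width, height


def quantize_depth(values, bits=1):
    """Min-max normalize a flat list of depths to integers in [0, 2**(8*bits) - 1]."""
    max_val = 2 ** (8 * bits) - 1
    lo, hi = min(values), max(values)
    if hi - lo <= sys.float_info.epsilon:
        # A constant depth map carries no contrast, so every pixel maps to 0.
        return [0 for _ in values]
    # int() truncates toward zero, like astype("uint8") on non-negative floats.
    return [int(max_val * (v - lo) / (hi - lo)) for v in values]


print(network_input_size(1920, 1080))         # (384, 224)
print(quantize_depth([0.0, 0.5, 1.0]))        # [0, 127, 255]
print(quantize_depth([0.0, 0.5, 1.0], bits=2))  # [0, 32767, 65535]
```

For a 1920 x 1080 frame the scale is 5, so the height 216 is rounded up to 224 while the width is already a multiple of 32. Rounding up rather than down means the aspect ratio can shift slightly in favor of keeping every dimension divisible by 32.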

MiDaS/model.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:617d916c0864b95880aed0b6be6d0629ce8b4c0d28361a559f8e5193a9bb554d
+size 149751722

MiDaS/monodepth_net.py

ADDED

|

@@ -0,0 +1,186 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""MonoDepthNet: Network for monocular depth estimation trained by mixing several datasets.

|

| 2 |

+

This file contains code that is adapted from

|

| 3 |

+

https://github.com/thomasjpfan/pytorch_refinenet/blob/master/pytorch_refinenet/refinenet/refinenet_4cascade.py

|

| 4 |

+

"""

|

| 5 |

+

import torch

|

| 6 |

+

import torch.nn as nn

|

| 7 |

+

from torchvision import models

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

class MonoDepthNet(nn.Module):

|

| 11 |

+

"""Network for monocular depth estimation.

|

| 12 |

+

"""

|

| 13 |

+

|

| 14 |

+

def __init__(self, path=None, features=256):

|

| 15 |

+

"""Init.

|

| 16 |

+

|

| 17 |

+

Args:

|

| 18 |

+

path (str, optional): Path to saved model. Defaults to None.

|

| 19 |

+

features (int, optional): Number of features. Defaults to 256.

|

| 20 |

+

"""

|

| 21 |

+

super().__init__()

|

| 22 |

+

|

| 23 |

+

resnet = models.resnet50(pretrained=False)

|

| 24 |

+

|

| 25 |

+

self.pretrained = nn.Module()

|

| 26 |

+

self.scratch = nn.Module()

|

| 27 |

+

self.pretrained.layer1 = nn.Sequential(resnet.conv1, resnet.bn1, resnet.relu,

|

| 28 |

+

resnet.maxpool, resnet.layer1)

|

| 29 |

+

|

| 30 |

+

self.pretrained.layer2 = resnet.layer2

|

| 31 |

+

self.pretrained.layer3 = resnet.layer3

|

| 32 |

+

self.pretrained.layer4 = resnet.layer4

|

| 33 |

+

|

| 34 |

+

# adjust channel number of feature maps

|

| 35 |

+

self.scratch.layer1_rn = nn.Conv2d(256, features, kernel_size=3, stride=1, padding=1, bias=False)

|

| 36 |

+

self.scratch.layer2_rn = nn.Conv2d(512, features, kernel_size=3, stride=1, padding=1, bias=False)

|

| 37 |

+

self.scratch.layer3_rn = nn.Conv2d(1024, features, kernel_size=3, stride=1, padding=1, bias=False)

|

| 38 |

+

self.scratch.layer4_rn = nn.Conv2d(2048, features, kernel_size=3, stride=1, padding=1, bias=False)

|

| 39 |

+

|

| 40 |

+

self.scratch.refinenet4 = FeatureFusionBlock(features)

|

| 41 |

+

self.scratch.refinenet3 = FeatureFusionBlock(features)

|

| 42 |

+

self.scratch.refinenet2 = FeatureFusionBlock(features)

|

| 43 |

+

self.scratch.refinenet1 = FeatureFusionBlock(features)

|

| 44 |

+

|

| 45 |

+

# adaptive output module: 2 convolutions and upsampling

|

| 46 |

+

self.scratch.output_conv = nn.Sequential(nn.Conv2d(features, 128, kernel_size=3, stride=1, padding=1),

|

| 47 |

+

nn.Conv2d(128, 1, kernel_size=3, stride=1, padding=1),

|

| 48 |

+

Interpolate(scale_factor=2, mode='bilinear'))

|

| 49 |

+

|

| 50 |

+

# load model

|

| 51 |

+

if path:

|

| 52 |

+

self.load(path)

|

| 53 |

+

|

| 54 |

+

def forward(self, x):

|

| 55 |

+

"""Forward pass.

|

| 56 |

+

|

| 57 |

+

Args:

|

| 58 |

+

x (tensor): input data (image)

|

| 59 |

+

|

| 60 |

+

Returns:

|

| 61 |

+

tensor: depth

|

| 62 |

+

"""

|

| 63 |

+

layer_1 = self.pretrained.layer1(x)

|

| 64 |

+

layer_2 = self.pretrained.layer2(layer_1)

|

| 65 |

+

layer_3 = self.pretrained.layer3(layer_2)

|

| 66 |

+

layer_4 = self.pretrained.layer4(layer_3)

|

| 67 |

+

|

| 68 |

+

layer_1_rn = self.scratch.layer1_rn(layer_1)

|

| 69 |

+

layer_2_rn = self.scratch.layer2_rn(layer_2)

|

| 70 |

+

layer_3_rn = self.scratch.layer3_rn(layer_3)

|

| 71 |

+

layer_4_rn = self.scratch.layer4_rn(layer_4)

|

| 72 |

+

|

| 73 |

+

path_4 = self.scratch.refinenet4(layer_4_rn)

|

| 74 |

+

path_3 = self.scratch.refinenet3(path_4, layer_3_rn)

|

| 75 |

+

path_2 = self.scratch.refinenet2(path_3, layer_2_rn)

|

| 76 |

+

path_1 = self.scratch.refinenet1(path_2, layer_1_rn)

|

| 77 |

+

|

| 78 |

+

out = self.scratch.output_conv(path_1)

|

| 79 |

+

|

| 80 |

+

return out

|

| 81 |

+

|

| 82 |

+

def load(self, path):

|

| 83 |

+

"""Load model from file.

|

| 84 |

+

|

| 85 |

+

Args:

|

| 86 |

+

path (str): file path

|

| 87 |

+

"""

|

| 88 |

+

parameters = torch.load(path, map_location="cpu")  # map to CPU so GPU-saved checkpoints load on CPU-only hosts

|

| 89 |

+

|

| 90 |

+

self.load_state_dict(parameters)

|

| 91 |

+

|

| 92 |

+

|

| 93 |

+

class Interpolate(nn.Module):

|

| 94 |

+

"""Interpolation module.

|

| 95 |

+

"""

|

| 96 |

+

|

| 97 |

+

def __init__(self, scale_factor, mode):

|

| 98 |

+

"""Init.

|

| 99 |

+

|

| 100 |

+

Args:

|

| 101 |

+

scale_factor (float): scaling

|

| 102 |

+

mode (str): interpolation mode

|

| 103 |

+

"""

|

| 104 |

+

super(Interpolate, self).__init__()

|

| 105 |

+

|

| 106 |

+

self.interp = nn.functional.interpolate

|

| 107 |

+

self.scale_factor = scale_factor

|

| 108 |

+

self.mode = mode

|

| 109 |

+

|

| 110 |

+

def forward(self, x):

|

| 111 |

+

"""Forward pass.

|

| 112 |

+

|

| 113 |

+

Args:

|

| 114 |

+

x (tensor): input

|

| 115 |

+

|

| 116 |

+

Returns:

|

| 117 |

+

tensor: interpolated data

|

| 118 |

+

"""

|

| 119 |

+

x = self.interp(x, scale_factor=self.scale_factor, mode=self.mode, align_corners=False)

|

| 120 |

+

|

| 121 |

+

return x

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

class ResidualConvUnit(nn.Module):

|

| 125 |

+

"""Residual convolution module.

|

| 126 |

+

"""

|

| 127 |

+

|

| 128 |

+

def __init__(self, features):

|

| 129 |

+

"""Init.

|

| 130 |

+

|

| 131 |

+

Args:

|

| 132 |

+

features (int): number of features

|

| 133 |

+

"""

|

| 134 |

+

super().__init__()

|

| 135 |

+

|

| 136 |

+

self.conv1 = nn.Conv2d(features, features, kernel_size=3, stride=1, padding=1, bias=True)

|

| 137 |

+

self.conv2 = nn.Conv2d(features, features, kernel_size=3, stride=1, padding=1, bias=False)

|

| 138 |

+

self.relu = nn.ReLU(inplace=True)

|

| 139 |

+

|

| 140 |

+

def forward(self, x):

|

| 141 |

+

"""Forward pass.

|

| 142 |

+

|

| 143 |

+

Args:

|

| 144 |

+

x (tensor): input

|

| 145 |

+

|

| 146 |

+

Returns:

|

| 147 |

+

tensor: output

|

| 148 |

+

"""

|

| 149 |

+

out = self.relu(x)

|

| 150 |

+

out = self.conv1(out)

|

| 151 |

+

out = self.relu(out)

|

| 152 |

+

out = self.conv2(out)

|

| 153 |

+

|

| 154 |

+

return out + x

|

| 155 |

+

|

| 156 |

+

|

| 157 |

+

class FeatureFusionBlock(nn.Module):

|

| 158 |

+

"""Feature fusion block.

|

| 159 |

+

"""

|

| 160 |

+

|

| 161 |

+

def __init__(self, features):

|

| 162 |

+

"""Init.

|

| 163 |

+

|

| 164 |

+

Args:

|

| 165 |

+

features (int): number of features

|

| 166 |

+

"""

|

| 167 |

+

super().__init__()

|

| 168 |

+

|

| 169 |

+

self.resConfUnit = ResidualConvUnit(features)

|

| 170 |

+

|

| 171 |

+

def forward(self, *xs):

|

| 172 |

+

"""Forward pass.

|

| 173 |

+

|

| 174 |

+

Returns:

|

| 175 |

+

tensor: output

|

| 176 |

+

"""

|

| 177 |

+

output = xs[0]

|

| 178 |

+

|

| 179 |

+

if len(xs) == 2:

|

| 180 |

+

output += self.resConfUnit(xs[1])

|

| 181 |

+

|

| 182 |

+

output = self.resConfUnit(output)

|

| 183 |

+

output = nn.functional.interpolate(output, scale_factor=2,

|

| 184 |

+

mode='bilinear', align_corners=True)

|

| 185 |

+

|

| 186 |

+

return output

|
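The decoder in `forward` only works if every pair of tensors handed to a `FeatureFusionBlock` shares a spatial resolution. The sketch below traces those resolutions with plain fractions instead of tensors. It assumes a ResNet-style encoder whose `layer1` to `layer4` outputs sit at 1/4, 1/8, 1/16 and 1/32 of the input size. The encoder definition is not part of this hunk, so these strides are an assumption, and `fuse` and `decoder_scales` are hypothetical helper names used only for illustration.

```python
from fractions import Fraction

# Assumed encoder output scales relative to the input image (not shown in this hunk).
ENCODER_SCALES = {
    "layer_1": Fraction(1, 4),
    "layer_2": Fraction(1, 8),
    "layer_3": Fraction(1, 16),
    "layer_4": Fraction(1, 32),
}


def fuse(*scales):
    """Mimic FeatureFusionBlock at the level of resolutions.

    All inputs must share one resolution (they are added element-wise),
    and the block ends with a bilinear upsampling by a factor of 2.
    """
    if len(set(scales)) != 1:
        raise ValueError(f"resolution mismatch: {scales}")
    return scales[0] * 2


def decoder_scales(enc=ENCODER_SCALES):
    """Follow the same call order as MonoDepthNet.forward."""
    # The layerN_rn convolutions are 3x3, stride 1, padding 1, so they keep the resolution.
    path_4 = fuse(enc["layer_4"])
    path_3 = fuse(path_4, enc["layer_3"])
    path_2 = fuse(path_3, enc["layer_2"])
    path_1 = fuse(path_2, enc["layer_1"])
    out = path_1 * 2  # output_conv ends with Interpolate(scale_factor=2)
    return {"path_4": path_4, "path_3": path_3, "path_2": path_2,
            "path_1": path_1, "out": out}


if __name__ == "__main__":
    for name, scale in decoder_scales().items():
        print(f"{name}: {scale} of the input resolution")
```

Under these assumptions the final output returns to the input resolution. The additions inside the fusion blocks only line up if the input height and width are multiples of 32, so the caller is expected to resize images accordingly.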

MiDaS/run.py

ADDED

|

@@ -0,0 +1,81 @@

|

| 1 |

+

"""Compute depth maps for images in the input folder.

|

| 2 |

+

"""

|

| 3 |

+

import os

|

| 4 |

+

import glob

|

| 5 |

+

import torch

|

| 6 |

+

# from monodepth_net import MonoDepthNet

|

| 7 |

+

# import utils

|

| 8 |

+

import matplotlib.pyplot as plt

|

| 9 |

+

import numpy as np

|

| 10 |

+

import cv2

|

| 11 |

+

import imageio

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

def run_depth(img_names, input_path, output_path, model_path, Net, utils, target_w=None):

|

| 15 |

+

"""Run the given depth network (Net) on img_names and save the depth maps.

|

| 16 |

+

|

| 17 |

+

Args:

|

| 18 |

+

input_path (str): path to input folder (unused here; images come from img_names)

|

| 19 |

+

output_path (str): path to output folder

|

| 20 |

+

model_path (str): path to saved model

|

| 21 |

+

"""

|

| 22 |

+

print("initialize")

|

| 23 |

+

|

| 24 |

+

# select device

|

| 25 |

+

device = torch.device("cpu")

|

| 26 |

+

print("device: %s" % device)

|

| 27 |

+

|

| 28 |

+

# load network

|

| 29 |

+

model = Net(model_path)

|

| 30 |

+

model.to(device)

|

| 31 |

+

model.eval()

|

| 32 |

+

|

| 33 |

+

# get input

|

| 34 |

+

# img_names = glob.glob(os.path.join(input_path, "*"))

|

| 35 |

+

num_images = len(img_names)

|

| 36 |

+

|

| 37 |

+

# create output folder

|

| 38 |

+

os.makedirs(output_path, exist_ok=True)

|

| 39 |

+

|

| 40 |

+

print("start processing")

|

| 41 |

+

|

| 42 |

+

for ind, img_name in enumerate(img_names):

|

| 43 |

+

|

| 44 |

+

print(" processing {} ({}/{})".format(img_name, ind + 1, num_images))

|

| 45 |

+

|

| 46 |

+

# input

|

| 47 |

+

img = utils.read_image(img_name)

|

| 48 |

+

w = img.shape[1]

|

| 49 |

+

scale = 640. / max(img.shape[0], img.shape[1])

|

| 50 |

+

target_height, target_width = int(round(img.shape[0] * scale)), int(round(img.shape[1] * scale))

|

| 51 |

+

img_input = utils.resize_image(img)

|

| 52 |

+

print(img_input.shape)

|

| 53 |

+

img_input = img_input.to(device)

|

| 54 |

+

# compute

|

| 55 |

+

with torch.no_grad():

|

| 56 |

+

out = model(img_input)

|

| 57 |

+

|

| 58 |

+

depth = utils.resize_depth(out, target_width, target_height)

|

| 59 |

+

img = cv2.resize((img * 255).astype(np.uint8), (target_width, target_height), interpolation=cv2.INTER_AREA)

|

| 60 |

+

|

| 61 |

+

filename = os.path.join(

|

| 62 |

+

output_path, os.path.splitext(os.path.basename(img_name))[0]

|

| 63 |

+

)

|

| 64 |

+

np.save(filename + '.npy', depth)

|

| 65 |

+

utils.write_depth(filename, depth, bits=2)

|

| 66 |

+

|

| 67 |

+

print("finished")

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

# if __name__ == "__main__":

|

| 71 |

+

# # set paths

|

| 72 |

+

# INPUT_PATH = "image"

|

| 73 |

+

# OUTPUT_PATH = "output"

|

| 74 |

+

# MODEL_PATH = "model.pt"

|

| 75 |

+

|

| 76 |

+

# # set torch options

|

| 77 |

+

# torch.backends.cudnn.enabled = True

|

| 78 |

+

# torch.backends.cudnn.benchmark = True

|

| 79 |

+

|

| 80 |

+

# # compute depth maps

|

| 81 |

+

# run_depth(INPUT_PATH, OUTPUT_PATH, MODEL_PATH, Net, target_w=640)

|
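Two small pieces of arithmetic in `run_depth` are easy to misread in the diff: the target size, which scales the longer image side to 640 pixels, and the output file stem, which drops the input directory and extension. The following is a minimal sketch of just those two steps using only the standard library. `target_size` and `output_stem` are hypothetical helper names and do not exist in the repository.

```python
import os


def target_size(height, width, long_side=640):
    """Scale (height, width) so that the longer side becomes `long_side`.

    Mirrors `scale = 640. / max(img.shape[0], img.shape[1])` followed by
    rounding each dimension to the nearest integer.
    """
    scale = float(long_side) / max(height, width)
    return int(round(height * scale)), int(round(width * scale))


def output_stem(img_name, output_path):
    """Build the extension-less output path used for the saved depth files."""
    base = os.path.splitext(os.path.basename(img_name))[0]
    return os.path.join(output_path, base)


if __name__ == "__main__":
    print(target_size(1080, 1920))
    print(output_stem(os.path.join("image", "dog.jpg"), "output"))
```

Note that `run_depth` passes the result to `utils.resize_depth` and `cv2.resize` as `(target_width, target_height)`, so the order is swapped relative to the `(height, width)` tuple returned here.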

README.md

CHANGED

|

@@ -1,7 +1,7 @@

|

|

| 1 |

---

|

| 2 |

title: 3D_Photo_Inpainting

|

| 3 |

-

emoji:

|

| 4 |

-

colorFrom:

|

| 5 |

colorTo: red

|

| 6 |

sdk: gradio

|

| 7 |

app_file: app.py

|

|

|

|

| 1 |

---

|

| 2 |

title: 3D_Photo_Inpainting

|

| 3 |

+

emoji: 👁

|

| 4 |

+

colorFrom: purple

|

| 5 |

colorTo: red

|

| 6 |

sdk: gradio

|

| 7 |

app_file: app.py

|

app.py

ADDED

|

@@ -0,0 +1,230 @@

|

| 1 |

+

# Repo source: https://github.com/vt-vl-lab/3d-photo-inpainting

|

| 2 |

+

|

| 3 |

+

#import os

|

| 4 |

+

#os.environ['QT_DEBUG_PLUGINS'] = '1'

|

| 5 |

+

|

| 6 |

+

import subprocess

|

| 7 |

+

#subprocess.run('ldd /home/user/.local/lib/python3.8/site-packages/PyQt5/Qt/plugins/platforms/libqxcb.so', shell=True)

|

| 8 |

+

#subprocess.run('pip list', shell=True)

|

| 9 |

+

subprocess.run('nvidia-smi', shell=True)

|

| 10 |

+

|

| 11 |

+

from pyvirtualdisplay import Display

|

| 12 |

+

display = Display(visible=0, size=(1920, 1080)).start()

|

| 13 |

+

#subprocess.run('echo $DISPLAY', shell=True)

|

| 14 |

+

|

| 15 |

+

# 3d inpainting imports

|

| 16 |

+

import numpy as np

|

| 17 |

+

import argparse

|

| 18 |

+

import glob

|

| 19 |

+

import os

|

| 20 |

+

from functools import partial

|

| 21 |

+

import vispy

|

| 22 |

+

import scipy.misc as misc

|

| 23 |

+

from tqdm import tqdm

|

| 24 |

+

import yaml

|

| 25 |

+

import time

|

| 26 |

+

import sys

|

| 27 |

+

from mesh import write_ply, read_ply, output_3d_photo

|

| 28 |

+

from utils import get_MiDaS_samples, read_MiDaS_depth

|

| 29 |

+

import torch

|

| 30 |

+

import cv2

|

| 31 |

+

from skimage.transform import resize

|

| 32 |

+

import imageio

|

| 33 |

+

import copy

|

| 34 |

+

from networks import Inpaint_Color_Net, Inpaint_Depth_Net, Inpaint_Edge_Net

|

| 35 |

+

from MiDaS.run import run_depth

|

| 36 |

+

from boostmonodepth_utils import run_boostmonodepth

|

| 37 |

+

from MiDaS.monodepth_net import MonoDepthNet

|

| 38 |

+

import MiDaS.MiDaS_utils as MiDaS_utils

|

| 39 |

+

from bilateral_filtering import sparse_bilateral_filtering

|

| 40 |

+

|

| 41 |

+

import torch

|

| 42 |

+

|

| 43 |

+

# gradio imports

|

| 44 |

+