Upload folder using huggingface_hub

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .DS_Store +0 -0

- ._.DS_Store +0 -0

- .gitattributes +91 -0

- LICENSE-CODE +21 -0

- LICENSE-MODEL +91 -0

- README.md +336 -0

- README_WEIGHTS.md +94 -0

- config.json +70 -0

- configuration_deepseek.py +210 -0

- figures/benchmark.png +0 -0

- figures/niah.png +0 -0

- inference/._fp8_cast_bf16.py +0 -0

- inference/._kernel.py +0 -0

- inference/.venv/bin/Activate.ps1 +247 -0

- inference/.venv/bin/activate +69 -0

- inference/.venv/bin/activate.csh +26 -0

- inference/.venv/bin/activate.fish +69 -0

- inference/.venv/bin/convert-caffe2-to-onnx +8 -0

- inference/.venv/bin/convert-onnx-to-caffe2 +8 -0

- inference/.venv/bin/f2py +8 -0

- inference/.venv/bin/huggingface-cli +8 -0

- inference/.venv/bin/isympy +8 -0

- inference/.venv/bin/normalizer +8 -0

- inference/.venv/bin/numpy-config +8 -0

- inference/.venv/bin/pip +8 -0

- inference/.venv/bin/pip3 +8 -0

- inference/.venv/bin/pip3.10 +8 -0

- inference/.venv/bin/proton +8 -0

- inference/.venv/bin/proton-viewer +8 -0

- inference/.venv/bin/python +3 -0

- inference/.venv/bin/python3 +3 -0

- inference/.venv/bin/python3.10 +3 -0

- inference/.venv/bin/torchrun +8 -0

- inference/.venv/bin/tqdm +8 -0

- inference/.venv/bin/transformers-cli +8 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/INSTALLER +1 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/LICENSE.txt +28 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/METADATA +92 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/RECORD +14 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/WHEEL +6 -0

- inference/.venv/lib/python3.10/site-packages/MarkupSafe-3.0.2.dist-info/top_level.txt +1 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/INSTALLER +1 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/LICENSE +20 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/METADATA +46 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/RECORD +43 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/WHEEL +6 -0

- inference/.venv/lib/python3.10/site-packages/PyYAML-6.0.2.dist-info/top_level.txt +2 -0

- inference/.venv/lib/python3.10/site-packages/__pycache__/isympy.cpython-310.pyc +0 -0

- inference/.venv/lib/python3.10/site-packages/__pycache__/typing_extensions.cpython-310.pyc +0 -0

- inference/.venv/lib/python3.10/site-packages/_distutils_hack/__init__.py +132 -0

.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

._.DS_Store

ADDED

|

Binary file (4.1 kB). View file

|

|

|

.gitattributes

CHANGED

|

@@ -33,3 +33,94 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

inference/.venv/bin/python filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

inference/.venv/bin/python3 filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

inference/.venv/bin/python3.10 filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

inference/.venv/lib/python3.10/site-packages/numpy/_core/_multiarray_umath.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

inference/.venv/lib/python3.10/site-packages/numpy/_core/_simd.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

inference/.venv/lib/python3.10/site-packages/numpy/random/_generator.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

inference/.venv/lib/python3.10/site-packages/numpy.libs/libgfortran-040039e1-0352e75f.so.5.0.0 filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

inference/.venv/lib/python3.10/site-packages/numpy.libs/libscipy_openblas64_-6bb31eeb.so filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cublas/lib/libcublas.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cublas/lib/libcublasLt.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_cupti/lib/libcheckpoint.so filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_cupti/lib/libcupti.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_cupti/lib/libnvperf_host.so filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_cupti/lib/libnvperf_target.so filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_nvrtc/lib/libnvrtc-builtins.so.12.1 filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cuda_nvrtc/lib/libnvrtc.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 52 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_adv.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 53 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_cnn.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 54 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_engines_precompiled.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 55 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_engines_runtime_compiled.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 56 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_graph.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 57 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_heuristic.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 58 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_ops.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 59 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cufft/lib/libcufft.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 60 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cufft/lib/libcufftw.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 61 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/curand/lib/libcurand.so.10 filter=lfs diff=lfs merge=lfs -text

|

| 62 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cusolver/lib/libcusolver.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 63 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cusolver/lib/libcusolverMg.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 64 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/cusparse/lib/libcusparse.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 65 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/nccl/lib/libnccl.so.2 filter=lfs diff=lfs merge=lfs -text

|

| 66 |

+

inference/.venv/lib/python3.10/site-packages/nvidia/nvjitlink/lib/libnvJitLink.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 67 |

+

inference/.venv/lib/python3.10/site-packages/regex/_regex.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 68 |

+

inference/.venv/lib/python3.10/site-packages/safetensors/_safetensors_rust.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 69 |

+

inference/.venv/lib/python3.10/site-packages/tokenizers/tokenizers.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 70 |

+

inference/.venv/lib/python3.10/site-packages/torch/bin/protoc filter=lfs diff=lfs merge=lfs -text

|

| 71 |

+

inference/.venv/lib/python3.10/site-packages/torch/bin/protoc-3.13.0.0 filter=lfs diff=lfs merge=lfs -text

|

| 72 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libc10.so filter=lfs diff=lfs merge=lfs -text

|

| 73 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libcusparseLt-f80c68d1.so.0 filter=lfs diff=lfs merge=lfs -text

|

| 74 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libtorch_cpu.so filter=lfs diff=lfs merge=lfs -text

|

| 75 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libtorch_cuda.so filter=lfs diff=lfs merge=lfs -text

|

| 76 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libtorch_cuda_linalg.so filter=lfs diff=lfs merge=lfs -text

|

| 77 |

+

inference/.venv/lib/python3.10/site-packages/torch/lib/libtorch_python.so filter=lfs diff=lfs merge=lfs -text

|

| 78 |

+

inference/.venv/lib/python3.10/site-packages/triton/_C/libproton.so filter=lfs diff=lfs merge=lfs -text

|

| 79 |

+

inference/.venv/lib/python3.10/site-packages/triton/_C/libtriton.so filter=lfs diff=lfs merge=lfs -text

|

| 80 |

+

inference/.venv/lib/python3.10/site-packages/triton/backends/nvidia/bin/nvdisasm filter=lfs diff=lfs merge=lfs -text

|

| 81 |

+

inference/.venv/lib/python3.10/site-packages/triton/backends/nvidia/bin/ptxas filter=lfs diff=lfs merge=lfs -text

|

| 82 |

+

inference/.venv/lib/python3.10/site-packages/yaml/_yaml.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 83 |

+

inference/.venv/lib64/python3.10/site-packages/numpy/_core/_multiarray_umath.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 84 |

+

inference/.venv/lib64/python3.10/site-packages/numpy/_core/_simd.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 85 |

+

inference/.venv/lib64/python3.10/site-packages/numpy/random/_generator.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 86 |

+

inference/.venv/lib64/python3.10/site-packages/numpy.libs/libgfortran-040039e1-0352e75f.so.5.0.0 filter=lfs diff=lfs merge=lfs -text

|

| 87 |

+

inference/.venv/lib64/python3.10/site-packages/numpy.libs/libscipy_openblas64_-6bb31eeb.so filter=lfs diff=lfs merge=lfs -text

|

| 88 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cublas/lib/libcublas.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 89 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cublas/lib/libcublasLt.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 90 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_cupti/lib/libcheckpoint.so filter=lfs diff=lfs merge=lfs -text

|

| 91 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_cupti/lib/libcupti.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 92 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_cupti/lib/libnvperf_host.so filter=lfs diff=lfs merge=lfs -text

|

| 93 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_cupti/lib/libnvperf_target.so filter=lfs diff=lfs merge=lfs -text

|

| 94 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_nvrtc/lib/libnvrtc-builtins.so.12.1 filter=lfs diff=lfs merge=lfs -text

|

| 95 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cuda_nvrtc/lib/libnvrtc.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 96 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_adv.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 97 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_cnn.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 98 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_engines_precompiled.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 99 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_engines_runtime_compiled.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 100 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_graph.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 101 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_heuristic.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 102 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cudnn/lib/libcudnn_ops.so.9 filter=lfs diff=lfs merge=lfs -text

|

| 103 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cufft/lib/libcufft.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 104 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cufft/lib/libcufftw.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 105 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/curand/lib/libcurand.so.10 filter=lfs diff=lfs merge=lfs -text

|

| 106 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cusolver/lib/libcusolver.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 107 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cusolver/lib/libcusolverMg.so.11 filter=lfs diff=lfs merge=lfs -text

|

| 108 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/cusparse/lib/libcusparse.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 109 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/nccl/lib/libnccl.so.2 filter=lfs diff=lfs merge=lfs -text

|

| 110 |

+

inference/.venv/lib64/python3.10/site-packages/nvidia/nvjitlink/lib/libnvJitLink.so.12 filter=lfs diff=lfs merge=lfs -text

|

| 111 |

+

inference/.venv/lib64/python3.10/site-packages/regex/_regex.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 112 |

+

inference/.venv/lib64/python3.10/site-packages/safetensors/_safetensors_rust.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 113 |

+

inference/.venv/lib64/python3.10/site-packages/tokenizers/tokenizers.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 114 |

+

inference/.venv/lib64/python3.10/site-packages/torch/bin/protoc filter=lfs diff=lfs merge=lfs -text

|

| 115 |

+

inference/.venv/lib64/python3.10/site-packages/torch/bin/protoc-3.13.0.0 filter=lfs diff=lfs merge=lfs -text

|

| 116 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libc10.so filter=lfs diff=lfs merge=lfs -text

|

| 117 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libcusparseLt-f80c68d1.so.0 filter=lfs diff=lfs merge=lfs -text

|

| 118 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libtorch_cpu.so filter=lfs diff=lfs merge=lfs -text

|

| 119 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libtorch_cuda.so filter=lfs diff=lfs merge=lfs -text

|

| 120 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libtorch_cuda_linalg.so filter=lfs diff=lfs merge=lfs -text

|

| 121 |

+

inference/.venv/lib64/python3.10/site-packages/torch/lib/libtorch_python.so filter=lfs diff=lfs merge=lfs -text

|

| 122 |

+

inference/.venv/lib64/python3.10/site-packages/triton/_C/libproton.so filter=lfs diff=lfs merge=lfs -text

|

| 123 |

+

inference/.venv/lib64/python3.10/site-packages/triton/_C/libtriton.so filter=lfs diff=lfs merge=lfs -text

|

| 124 |

+

inference/.venv/lib64/python3.10/site-packages/triton/backends/nvidia/bin/nvdisasm filter=lfs diff=lfs merge=lfs -text

|

| 125 |

+

inference/.venv/lib64/python3.10/site-packages/triton/backends/nvidia/bin/ptxas filter=lfs diff=lfs merge=lfs -text

|

| 126 |

+

inference/.venv/lib64/python3.10/site-packages/yaml/_yaml.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

LICENSE-CODE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 DeepSeek

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

LICENSE-MODEL

ADDED

|

@@ -0,0 +1,91 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

DEEPSEEK LICENSE AGREEMENT

|

| 2 |

+

|

| 3 |

+

Version 1.0, 23 October 2023

|

| 4 |

+

|

| 5 |

+

Copyright (c) 2023 DeepSeek

|

| 6 |

+

|

| 7 |

+

Section I: PREAMBLE

|

| 8 |

+

|

| 9 |

+

Large generative models are being widely adopted and used, and have the potential to transform the way individuals conceive and benefit from AI or ML technologies.

|

| 10 |

+

|

| 11 |

+

Notwithstanding the current and potential benefits that these artifacts can bring to society at large, there are also concerns about potential misuses of them, either due to their technical limitations or ethical considerations.

|

| 12 |

+

|

| 13 |

+

In short, this license strives for both the open and responsible downstream use of the accompanying model. When it comes to the open character, we took inspiration from open source permissive licenses regarding the grant of IP rights. Referring to the downstream responsible use, we added use-based restrictions not permitting the use of the model in very specific scenarios, in order for the licensor to be able to enforce the license in case potential misuses of the Model may occur. At the same time, we strive to promote open and responsible research on generative models for content generation.

|

| 14 |

+

|

| 15 |

+

Even though downstream derivative versions of the model could be released under different licensing terms, the latter will always have to include - at minimum - the same use-based restrictions as the ones in the original license (this license). We believe in the intersection between open and responsible AI development; thus, this agreement aims to strike a balance between both in order to enable responsible open-science in the field of AI.

|

| 16 |

+

|

| 17 |

+

This License governs the use of the model (and its derivatives) and is informed by the model card associated with the model.

|

| 18 |

+

|

| 19 |

+

NOW THEREFORE, You and DeepSeek agree as follows:

|

| 20 |

+

|

| 21 |

+

1. Definitions

|

| 22 |

+

"License" means the terms and conditions for use, reproduction, and Distribution as defined in this document.

|

| 23 |

+

"Data" means a collection of information and/or content extracted from the dataset used with the Model, including to train, pretrain, or otherwise evaluate the Model. The Data is not licensed under this License.

|

| 24 |

+

"Output" means the results of operating a Model as embodied in informational content resulting therefrom.

|

| 25 |

+

"Model" means any accompanying machine-learning based assemblies (including checkpoints), consisting of learnt weights, parameters (including optimizer states), corresponding to the model architecture as embodied in the Complementary Material, that have been trained or tuned, in whole or in part on the Data, using the Complementary Material.

|

| 26 |

+

"Derivatives of the Model" means all modifications to the Model, works based on the Model, or any other model which is created or initialized by transfer of patterns of the weights, parameters, activations or output of the Model, to the other model, in order to cause the other model to perform similarly to the Model, including - but not limited to - distillation methods entailing the use of intermediate data representations or methods based on the generation of synthetic data by the Model for training the other model.

|

| 27 |

+

"Complementary Material" means the accompanying source code and scripts used to define, run, load, benchmark or evaluate the Model, and used to prepare data for training or evaluation, if any. This includes any accompanying documentation, tutorials, examples, etc, if any.

|

| 28 |

+

"Distribution" means any transmission, reproduction, publication or other sharing of the Model or Derivatives of the Model to a third party, including providing the Model as a hosted service made available by electronic or other remote means - e.g. API-based or web access.

|

| 29 |

+

"DeepSeek" (or "we") means Beijing DeepSeek Artificial Intelligence Fundamental Technology Research Co., Ltd., Hangzhou DeepSeek Artificial Intelligence Fundamental Technology Research Co., Ltd. and/or any of their affiliates.

|

| 30 |

+

"You" (or "Your") means an individual or Legal Entity exercising permissions granted by this License and/or making use of the Model for whichever purpose and in any field of use, including usage of the Model in an end-use application - e.g. chatbot, translator, etc.

|

| 31 |

+

"Third Parties" means individuals or legal entities that are not under common control with DeepSeek or You.

|

| 32 |

+

|

| 33 |

+

Section II: INTELLECTUAL PROPERTY RIGHTS

|

| 34 |

+

|

| 35 |

+

Both copyright and patent grants apply to the Model, Derivatives of the Model and Complementary Material. The Model and Derivatives of the Model are subject to additional terms as described in Section III.

|

| 36 |

+

|

| 37 |

+

2. Grant of Copyright License. Subject to the terms and conditions of this License, DeepSeek hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable copyright license to reproduce, prepare, publicly display, publicly perform, sublicense, and distribute the Complementary Material, the Model, and Derivatives of the Model.

|

| 38 |

+

|

| 39 |

+

3. Grant of Patent License. Subject to the terms and conditions of this License and where and as applicable, DeepSeek hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable (except as stated in this paragraph) patent license to make, have made, use, offer to sell, sell, import, and otherwise transfer the Model and the Complementary Material, where such license applies only to those patent claims licensable by DeepSeek that are necessarily infringed by its contribution(s). If You institute patent litigation against any entity (including a cross-claim or counterclaim in a lawsuit) alleging that the Model and/or Complementary Material constitutes direct or contributory patent infringement, then any patent licenses granted to You under this License for the Model and/or works shall terminate as of the date such litigation is asserted or filed.

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

Section III: CONDITIONS OF USAGE, DISTRIBUTION AND REDISTRIBUTION

|

| 43 |

+

|

| 44 |

+

4. Distribution and Redistribution. You may host for Third Party remote access purposes (e.g. software-as-a-service), reproduce and distribute copies of the Model or Derivatives of the Model thereof in any medium, with or without modifications, provided that You meet the following conditions:

|

| 45 |

+

a. Use-based restrictions as referenced in paragraph 5 MUST be included as an enforceable provision by You in any type of legal agreement (e.g. a license) governing the use and/or distribution of the Model or Derivatives of the Model, and You shall give notice to subsequent users You Distribute to, that the Model or Derivatives of the Model are subject to paragraph 5. This provision does not apply to the use of Complementary Material.

|

| 46 |

+

b. You must give any Third Party recipients of the Model or Derivatives of the Model a copy of this License;

|

| 47 |

+

c. You must cause any modified files to carry prominent notices stating that You changed the files;

|

| 48 |

+

d. You must retain all copyright, patent, trademark, and attribution notices excluding those notices that do not pertain to any part of the Model, Derivatives of the Model.

|

| 49 |

+

e. You may add Your own copyright statement to Your modifications and may provide additional or different license terms and conditions - respecting paragraph 4.a. – for use, reproduction, or Distribution of Your modifications, or for any such Derivatives of the Model as a whole, provided Your use, reproduction, and Distribution of the Model otherwise complies with the conditions stated in this License.

|

| 50 |

+

|

| 51 |

+

5. Use-based restrictions. The restrictions set forth in Attachment A are considered Use-based restrictions. Therefore You cannot use the Model and the Derivatives of the Model for the specified restricted uses. You may use the Model subject to this License, including only for lawful purposes and in accordance with the License. Use may include creating any content with, finetuning, updating, running, training, evaluating and/or reparametrizing the Model. You shall require all of Your users who use the Model or a Derivative of the Model to comply with the terms of this paragraph (paragraph 5).

|

| 52 |

+

|

| 53 |

+

6. The Output You Generate. Except as set forth herein, DeepSeek claims no rights in the Output You generate using the Model. You are accountable for the Output you generate and its subsequent uses. No use of the output can contravene any provision as stated in the License.

|

| 54 |

+

|

| 55 |

+

Section IV: OTHER PROVISIONS

|

| 56 |

+

|

| 57 |

+

7. Updates and Runtime Restrictions. To the maximum extent permitted by law, DeepSeek reserves the right to restrict (remotely or otherwise) usage of the Model in violation of this License.

|

| 58 |

+

|

| 59 |

+

8. Trademarks and related. Nothing in this License permits You to make use of DeepSeek’ trademarks, trade names, logos or to otherwise suggest endorsement or misrepresent the relationship between the parties; and any rights not expressly granted herein are reserved by DeepSeek.

|

| 60 |

+

|

| 61 |

+

9. Personal information, IP rights and related. This Model may contain personal information and works with IP rights. You commit to complying with applicable laws and regulations in the handling of personal information and the use of such works. Please note that DeepSeek's license granted to you to use the Model does not imply that you have obtained a legitimate basis for processing the related information or works. As an independent personal information processor and IP rights user, you need to ensure full compliance with relevant legal and regulatory requirements when handling personal information and works with IP rights that may be contained in the Model, and are willing to assume solely any risks and consequences that may arise from that.

|

| 62 |

+

|

| 63 |

+

10. Disclaimer of Warranty. Unless required by applicable law or agreed to in writing, DeepSeek provides the Model and the Complementary Material on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied, including, without limitation, any warranties or conditions of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A PARTICULAR PURPOSE. You are solely responsible for determining the appropriateness of using or redistributing the Model, Derivatives of the Model, and the Complementary Material and assume any risks associated with Your exercise of permissions under this License.

|

| 64 |

+

|

| 65 |

+

11. Limitation of Liability. In no event and under no legal theory, whether in tort (including negligence), contract, or otherwise, unless required by applicable law (such as deliberate and grossly negligent acts) or agreed to in writing, shall DeepSeek be liable to You for damages, including any direct, indirect, special, incidental, or consequential damages of any character arising as a result of this License or out of the use or inability to use the Model and the Complementary Material (including but not limited to damages for loss of goodwill, work stoppage, computer failure or malfunction, or any and all other commercial damages or losses), even if DeepSeek has been advised of the possibility of such damages.

|

| 66 |

+

|

| 67 |

+

12. Accepting Warranty or Additional Liability. While redistributing the Model, Derivatives of the Model and the Complementary Material thereof, You may choose to offer, and charge a fee for, acceptance of support, warranty, indemnity, or other liability obligations and/or rights consistent with this License. However, in accepting such obligations, You may act only on Your own behalf and on Your sole responsibility, not on behalf of DeepSeek, and only if You agree to indemnify, defend, and hold DeepSeek harmless for any liability incurred by, or claims asserted against, DeepSeek by reason of your accepting any such warranty or additional liability.

|

| 68 |

+

|

| 69 |

+

13. If any provision of this License is held to be invalid, illegal or unenforceable, the remaining provisions shall be unaffected thereby and remain valid as if such provision had not been set forth herein.

|

| 70 |

+

|

| 71 |

+

14. Governing Law and Jurisdiction. This agreement will be governed and construed under PRC laws without regard to choice of law principles, and the UN Convention on Contracts for the International Sale of Goods does not apply to this agreement. The courts located in the domicile of Hangzhou DeepSeek Artificial Intelligence Fundamental Technology Research Co., Ltd. shall have exclusive jurisdiction of any dispute arising out of this agreement.

|

| 72 |

+

|

| 73 |

+

END OF TERMS AND CONDITIONS

|

| 74 |

+

|

| 75 |

+

Attachment A

|

| 76 |

+

|

| 77 |

+

Use Restrictions

|

| 78 |

+

|

| 79 |

+

You agree not to use the Model or Derivatives of the Model:

|

| 80 |

+

|

| 81 |

+

- In any way that violates any applicable national or international law or regulation or infringes upon the lawful rights and interests of any third party;

|

| 82 |

+

- For military use in any way;

|

| 83 |

+

- For the purpose of exploiting, harming or attempting to exploit or harm minors in any way;

|

| 84 |

+

- To generate or disseminate verifiably false information and/or content with the purpose of harming others;

|

| 85 |

+

- To generate or disseminate inappropriate content subject to applicable regulatory requirements;

|

| 86 |

+

- To generate or disseminate personal identifiable information without due authorization or for unreasonable use;

|

| 87 |

+

- To defame, disparage or otherwise harass others;

|

| 88 |

+

- For fully automated decision making that adversely impacts an individual’s legal rights or otherwise creates or modifies a binding, enforceable obligation;

|

| 89 |

+

- For any use intended to or which has the effect of discriminating against or harming individuals or groups based on online or offline social behavior or known or predicted personal or personality characteristics;

|

| 90 |

+

- To exploit any of the vulnerabilities of a specific group of persons based on their age, social, physical or mental characteristics, in order to materially distort the behavior of a person pertaining to that group in a manner that causes or is likely to cause that person or another person physical or psychological harm;

|

| 91 |

+

- For any use intended to or which has the effect of discriminating against individuals or groups based on legally protected characteristics or categories.

|

README.md

ADDED

|

@@ -0,0 +1,336 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

<!-- markdownlint-disable first-line-h1 -->

|

| 2 |

+

<!-- markdownlint-disable html -->

|

| 3 |

+

<!-- markdownlint-disable no-duplicate-header -->

|

| 4 |

+

|

| 5 |

+

<div align="center">

|

| 6 |

+

<img src="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/logo.svg?raw=true" width="60%" alt="DeepSeek-V3" />

|

| 7 |

+

</div>

|

| 8 |

+

<hr>

|

| 9 |

+

<div align="center" style="line-height: 1;">

|

| 10 |

+

<a href="https://www.deepseek.com/" target="_blank" style="margin: 2px;">

|

| 11 |

+

<img alt="Homepage" src="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/badge.svg?raw=true" style="display: inline-block; vertical-align: middle;"/>

|

| 12 |

+

</a>

|

| 13 |

+

<a href="https://chat.deepseek.com/" target="_blank" style="margin: 2px;">

|

| 14 |

+

<img alt="Chat" src="https://img.shields.io/badge/🤖%20Chat-DeepSeek%20V3-536af5?color=536af5&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 15 |

+

</a>

|

| 16 |

+

<a href="https://huggingface.co/deepseek-ai" target="_blank" style="margin: 2px;">

|

| 17 |

+

<img alt="Hugging Face" src="https://img.shields.io/badge/%F0%9F%A4%97%20Hugging%20Face-DeepSeek%20AI-ffc107?color=ffc107&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 18 |

+

</a>

|

| 19 |

+

</div>

|

| 20 |

+

|

| 21 |

+

<div align="center" style="line-height: 1;">

|

| 22 |

+

<a href="https://discord.gg/Tc7c45Zzu5" target="_blank" style="margin: 2px;">

|

| 23 |

+

<img alt="Discord" src="https://img.shields.io/badge/Discord-DeepSeek%20AI-7289da?logo=discord&logoColor=white&color=7289da" style="display: inline-block; vertical-align: middle;"/>

|

| 24 |

+

</a>

|

| 25 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/qr.jpeg?raw=true" target="_blank" style="margin: 2px;">

|

| 26 |

+

<img alt="Wechat" src="https://img.shields.io/badge/WeChat-DeepSeek%20AI-brightgreen?logo=wechat&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 27 |

+

</a>

|

| 28 |

+

<a href="https://twitter.com/deepseek_ai" target="_blank" style="margin: 2px;">

|

| 29 |

+

<img alt="Twitter Follow" src="https://img.shields.io/badge/Twitter-deepseek_ai-white?logo=x&logoColor=white" style="display: inline-block; vertical-align: middle;"/>

|

| 30 |

+

</a>

|

| 31 |

+

</div>

|

| 32 |

+

|

| 33 |

+

<div align="center" style="line-height: 1;">

|

| 34 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V3/blob/main/LICENSE-CODE" style="margin: 2px;">

|

| 35 |

+

<img alt="Code License" src="https://img.shields.io/badge/Code_License-MIT-f5de53?&color=f5de53" style="display: inline-block; vertical-align: middle;"/>

|

| 36 |

+

</a>

|

| 37 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V3/blob/main/LICENSE-MODEL" style="margin: 2px;">

|

| 38 |

+

<img alt="Model License" src="https://img.shields.io/badge/Model_License-Model_Agreement-f5de53?&color=f5de53" style="display: inline-block; vertical-align: middle;"/>

|

| 39 |

+

</a>

|

| 40 |

+

</div>

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

<p align="center">

|

| 44 |

+

<a href="https://github.com/deepseek-ai/DeepSeek-V3/blob/main/DeepSeek_V3.pdf"><b>Paper Link</b>👁️</a>

|

| 45 |

+

</p>

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

## 1. Introduction

|

| 49 |

+

|

| 50 |

+

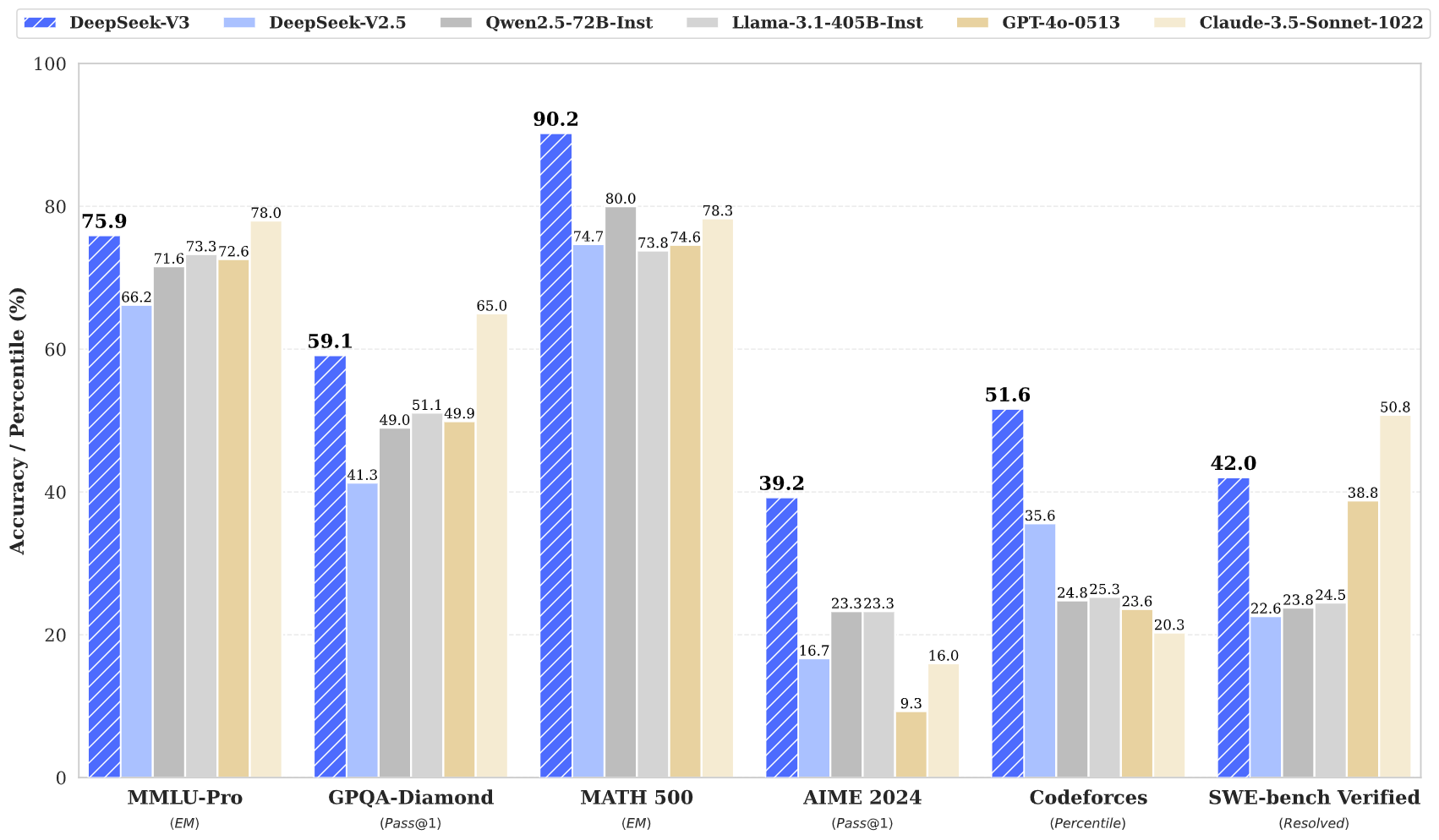

We present DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters with 37B activated for each token.

|

| 51 |

+

To achieve efficient inference and cost-effective training, DeepSeek-V3 adopts Multi-head Latent Attention (MLA) and DeepSeekMoE architectures, which were thoroughly validated in DeepSeek-V2.

|

| 52 |

+

Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and sets a multi-token prediction training objective for stronger performance.

|

| 53 |

+

We pre-train DeepSeek-V3 on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages to fully harness its capabilities.

|

| 54 |

+

Comprehensive evaluations reveal that DeepSeek-V3 outperforms other open-source models and achieves performance comparable to leading closed-source models.

|

| 55 |

+

Despite its excellent performance, DeepSeek-V3 requires only 2.788M H800 GPU hours for its full training.

|

| 56 |

+

In addition, its training process is remarkably stable.

|

| 57 |

+

Throughout the entire training process, we did not experience any irrecoverable loss spikes or perform any rollbacks.

|

| 58 |

+

<p align="center">

|

| 59 |

+

<img width="80%" src="figures/benchmark.png">

|

| 60 |

+

</p>

|

| 61 |

+

|

| 62 |

+

## 2. Model Summary

|

| 63 |

+

|

| 64 |

+

---

|

| 65 |

+

|

| 66 |

+

**Architecture: Innovative Load Balancing Strategy and Training Objective**

|

| 67 |

+

|

| 68 |

+

- On top of the efficient architecture of DeepSeek-V2, we pioneer an auxiliary-loss-free strategy for load balancing, which minimizes the performance degradation that arises from encouraging load balancing.

|

| 69 |

+

- We investigate a Multi-Token Prediction (MTP) objective and prove it beneficial to model performance.

|

| 70 |

+

It can also be used for speculative decoding for inference acceleration.

|

| 71 |

+

|

| 72 |

+

---

|

| 73 |

+

|

| 74 |

+

**Pre-Training: Towards Ultimate Training Efficiency**

|

| 75 |

+

|

| 76 |

+

- We design an FP8 mixed precision training framework and, for the first time, validate the feasibility and effectiveness of FP8 training on an extremely large-scale model.

|

| 77 |

+

- Through co-design of algorithms, frameworks, and hardware, we overcome the communication bottleneck in cross-node MoE training, nearly achieving full computation-communication overlap.

|

| 78 |

+

This significantly enhances our training efficiency and reduces the training costs, enabling us to further scale up the model size without additional overhead.

|

| 79 |

+

- At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model. The subsequent training stages after pre-training require only 0.1M GPU hours.

|

| 80 |

+

|

| 81 |

+

---

|

| 82 |

+

|

| 83 |

+

**Post-Training: Knowledge Distillation from DeepSeek-R1**

|

| 84 |

+

|

| 85 |

+

- We introduce an innovative methodology to distill reasoning capabilities from the long-Chain-of-Thought (CoT) model, specifically from one of the DeepSeek R1 series models, into standard LLMs, particularly DeepSeek-V3. Our pipeline elegantly incorporates the verification and reflection patterns of R1 into DeepSeek-V3 and notably improves its reasoning performance. Meanwhile, we also maintain a control over the output style and length of DeepSeek-V3.

|

| 86 |

+

|

| 87 |

+

---

|

| 88 |

+

|

| 89 |

+

|

| 90 |

+

## 3. Model Downloads

|

| 91 |

+

|

| 92 |

+

<div align="center">

|

| 93 |

+

|

| 94 |

+

| **Model** | **#Total Params** | **#Activated Params** | **Context Length** | **Download** |

|

| 95 |

+

| :------------: | :------------: | :------------: | :------------: | :------------: |

|

| 96 |

+

| DeepSeek-V3-Base | 671B | 37B | 128K | [🤗 HuggingFace](https://huggingface.co/deepseek-ai/DeepSeek-V3-Base) |

|

| 97 |

+

| DeepSeek-V3 | 671B | 37B | 128K | [🤗 HuggingFace](https://huggingface.co/deepseek-ai/DeepSeek-V3) |

|

| 98 |

+

|

| 99 |

+

</div>

|

| 100 |

+

|

| 101 |

+

**NOTE: The total size of DeepSeek-V3 models on HuggingFace is 685B, which includes 671B of the Main Model weights and 14B of the Multi-Token Prediction (MTP) Module weights.**

|

| 102 |

+

|

| 103 |

+

To ensure optimal performance and flexibility, we have partnered with open-source communities and hardware vendors to provide multiple ways to run the model locally. For step-by-step guidance, check out Section 6: [How_to Run_Locally](#6-how-to-run-locally).

|

| 104 |

+

|

| 105 |

+

For developers looking to dive deeper, we recommend exploring [README_WEIGHTS.md](./README_WEIGHTS.md) for details on the Main Model weights and the Multi-Token Prediction (MTP) Modules. Please note that MTP support is currently under active development within the community, and we welcome your contributions and feedback.

|

| 106 |

+

|

| 107 |

+

## 4. Evaluation Results

|

| 108 |

+

### Base Model

|

| 109 |

+

#### Standard Benchmarks

|

| 110 |

+

|

| 111 |

+

<div align="center">

|

| 112 |

+

|

| 113 |

+

|

| 114 |

+

| | Benchmark (Metric) | # Shots | DeepSeek-V2 | Qwen2.5 72B | LLaMA3.1 405B | DeepSeek-V3 |

|

| 115 |

+

|---|-------------------|----------|--------|-------------|---------------|---------|

|

| 116 |

+

| | Architecture | - | MoE | Dense | Dense | MoE |

|

| 117 |

+

| | # Activated Params | - | 21B | 72B | 405B | 37B |

|

| 118 |

+

| | # Total Params | - | 236B | 72B | 405B | 671B |

|

| 119 |

+

| English | Pile-test (BPB) | - | 0.606 | 0.638 | **0.542** | 0.548 |

|

| 120 |

+

| | BBH (EM) | 3-shot | 78.8 | 79.8 | 82.9 | **87.5** |

|

| 121 |

+

| | MMLU (Acc.) | 5-shot | 78.4 | 85.0 | 84.4 | **87.1** |

|

| 122 |

+

| | MMLU-Redux (Acc.) | 5-shot | 75.6 | 83.2 | 81.3 | **86.2** |

|

| 123 |

+

| | MMLU-Pro (Acc.) | 5-shot | 51.4 | 58.3 | 52.8 | **64.4** |

|

| 124 |

+

| | DROP (F1) | 3-shot | 80.4 | 80.6 | 86.0 | **89.0** |

|

| 125 |

+

| | ARC-Easy (Acc.) | 25-shot | 97.6 | 98.4 | 98.4 | **98.9** |

|

| 126 |

+

| | ARC-Challenge (Acc.) | 25-shot | 92.2 | 94.5 | **95.3** | **95.3** |

|

| 127 |

+

| | HellaSwag (Acc.) | 10-shot | 87.1 | 84.8 | **89.2** | 88.9 |

|

| 128 |

+

| | PIQA (Acc.) | 0-shot | 83.9 | 82.6 | **85.9** | 84.7 |

|

| 129 |

+

| | WinoGrande (Acc.) | 5-shot | **86.3** | 82.3 | 85.2 | 84.9 |

|

| 130 |

+

| | RACE-Middle (Acc.) | 5-shot | 73.1 | 68.1 | **74.2** | 67.1 |

|

| 131 |

+

| | RACE-High (Acc.) | 5-shot | 52.6 | 50.3 | **56.8** | 51.3 |

|

| 132 |

+

| | TriviaQA (EM) | 5-shot | 80.0 | 71.9 | **82.7** | **82.9** |

|

| 133 |

+

| | NaturalQuestions (EM) | 5-shot | 38.6 | 33.2 | **41.5** | 40.0 |

|

| 134 |

+

| | AGIEval (Acc.) | 0-shot | 57.5 | 75.8 | 60.6 | **79.6** |

|

| 135 |

+

| Code | HumanEval (Pass@1) | 0-shot | 43.3 | 53.0 | 54.9 | **65.2** |

|

| 136 |

+

| | MBPP (Pass@1) | 3-shot | 65.0 | 72.6 | 68.4 | **75.4** |

|

| 137 |

+

| | LiveCodeBench-Base (Pass@1) | 3-shot | 11.6 | 12.9 | 15.5 | **19.4** |

|

| 138 |

+

| | CRUXEval-I (Acc.) | 2-shot | 52.5 | 59.1 | 58.5 | **67.3** |

|

| 139 |

+

| | CRUXEval-O (Acc.) | 2-shot | 49.8 | 59.9 | 59.9 | **69.8** |

|

| 140 |

+

| Math | GSM8K (EM) | 8-shot | 81.6 | 88.3 | 83.5 | **89.3** |

|

| 141 |

+

| | MATH (EM) | 4-shot | 43.4 | 54.4 | 49.0 | **61.6** |

|

| 142 |

+

| | MGSM (EM) | 8-shot | 63.6 | 76.2 | 69.9 | **79.8** |

|

| 143 |

+

| | CMath (EM) | 3-shot | 78.7 | 84.5 | 77.3 | **90.7** |

|

| 144 |

+

| Chinese | CLUEWSC (EM) | 5-shot | 82.0 | 82.5 | **83.0** | 82.7 |

|

| 145 |

+

| | C-Eval (Acc.) | 5-shot | 81.4 | 89.2 | 72.5 | **90.1** |

|

| 146 |

+

| | CMMLU (Acc.) | 5-shot | 84.0 | **89.5** | 73.7 | 88.8 |

|

| 147 |

+

| | CMRC (EM) | 1-shot | **77.4** | 75.8 | 76.0 | 76.3 |

|

| 148 |

+

| | C3 (Acc.) | 0-shot | 77.4 | 76.7 | **79.7** | 78.6 |

|

| 149 |

+

| | CCPM (Acc.) | 0-shot | **93.0** | 88.5 | 78.6 | 92.0 |

|

| 150 |

+

| Multilingual | MMMLU-non-English (Acc.) | 5-shot | 64.0 | 74.8 | 73.8 | **79.4** |

|

| 151 |

+

|

| 152 |

+

</div>

|

| 153 |

+

|

| 154 |

+

Note: Best results are shown in bold. Scores with a gap not exceeding 0.3 are considered to be at the same level. DeepSeek-V3 achieves the best performance on most benchmarks, especially on math and code tasks.

|

| 155 |

+

For more evaluation details, please check our paper.

|

| 156 |

+

|

| 157 |

+

#### Context Window

|

| 158 |

+

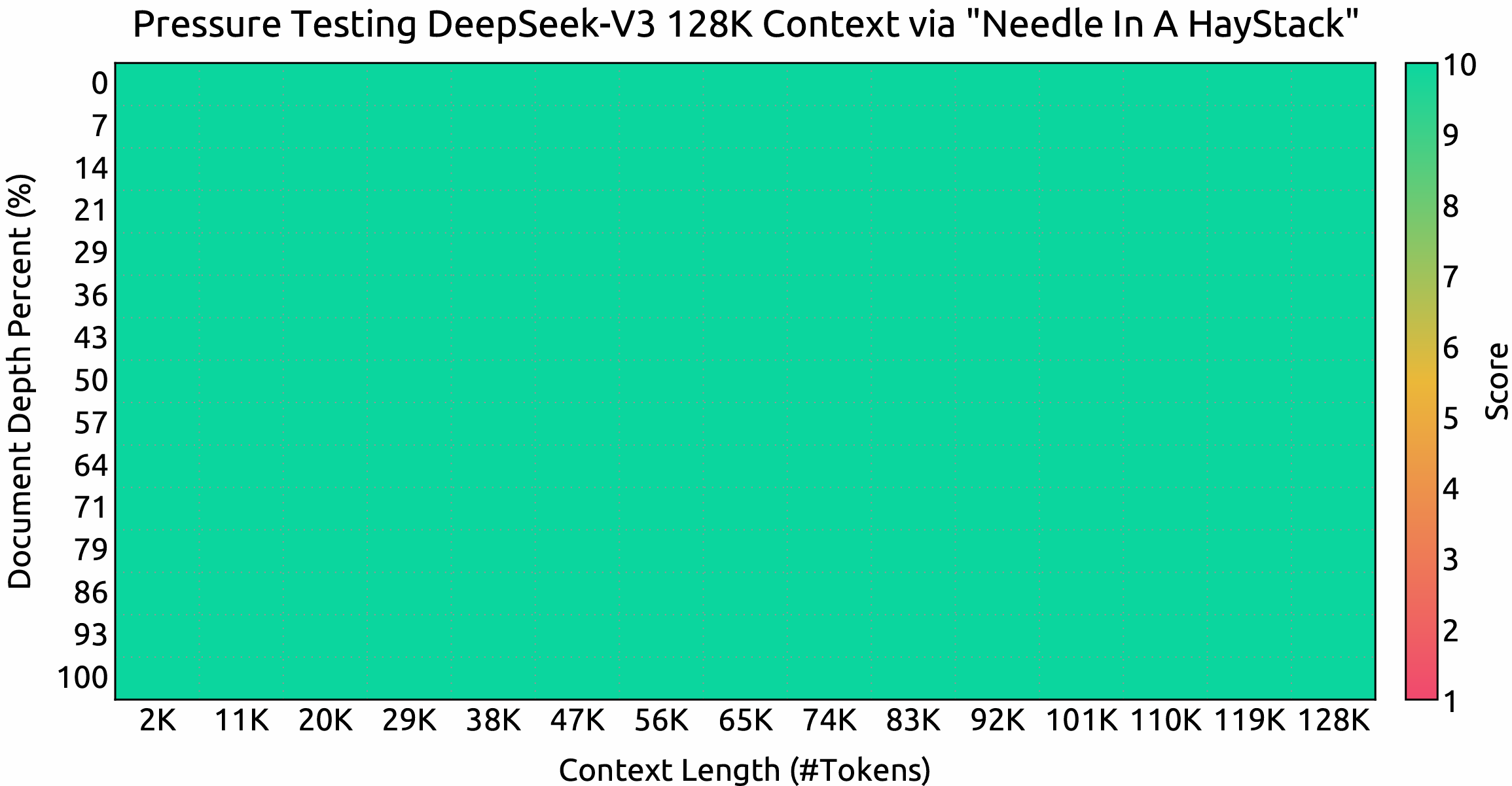

<p align="center">

|

| 159 |

+

<img width="80%" src="figures/niah.png">

|

| 160 |

+

</p>

|

| 161 |

+

|

| 162 |

+

Evaluation results on the ``Needle In A Haystack`` (NIAH) tests. DeepSeek-V3 performs well across all context window lengths up to **128K**.

|

| 163 |

+

|

| 164 |

+

### Chat Model

|

| 165 |

+

#### Standard Benchmarks (Models larger than 67B)

|

| 166 |

+

<div align="center">

|

| 167 |

+

|

| 168 |

+

| | **Benchmark (Metric)** | **DeepSeek V2-0506** | **DeepSeek V2.5-0905** | **Qwen2.5 72B-Inst.** | **Llama3.1 405B-Inst.** | **Claude-3.5-Sonnet-1022** | **GPT-4o 0513** | **DeepSeek V3** |

|

| 169 |

+

|---|---------------------|---------------------|----------------------|---------------------|----------------------|---------------------------|----------------|----------------|

|

| 170 |

+

| | Architecture | MoE | MoE | Dense | Dense | - | - | MoE |

|

| 171 |

+

| | # Activated Params | 21B | 21B | 72B | 405B | - | - | 37B |

|

| 172 |

+

| | # Total Params | 236B | 236B | 72B | 405B | - | - | 671B |

|

| 173 |

+

| English | MMLU (EM) | 78.2 | 80.6 | 85.3 | **88.6** | **88.3** | 87.2 | **88.5** |

|

| 174 |

+

| | MMLU-Redux (EM) | 77.9 | 80.3 | 85.6 | 86.2 | **88.9** | 88.0 | **89.1** |

|

| 175 |

+

| | MMLU-Pro (EM) | 58.5 | 66.2 | 71.6 | 73.3 | **78.0** | 72.6 | 75.9 |

|

| 176 |

+

| | DROP (3-shot F1) | 83.0 | 87.8 | 76.7 | 88.7 | 88.3 | 83.7 | **91.6** |

|

| 177 |

+

| | IF-Eval (Prompt Strict) | 57.7 | 80.6 | 84.1 | 86.0 | **86.5** | 84.3 | 86.1 |

|

| 178 |

+

| | GPQA-Diamond (Pass@1) | 35.3 | 41.3 | 49.0 | 51.1 | **65.0** | 49.9 | 59.1 |

|

| 179 |

+

| | SimpleQA (Correct) | 9.0 | 10.2 | 9.1 | 17.1 | 28.4 | **38.2** | 24.9 |

|

| 180 |

+

| | FRAMES (Acc.) | 66.9 | 65.4 | 69.8 | 70.0 | 72.5 | **80.5** | 73.3 |

|

| 181 |

+

| | LongBench v2 (Acc.) | 31.6 | 35.4 | 39.4 | 36.1 | 41.0 | 48.1 | **48.7** |

|

| 182 |

+

| Code | HumanEval-Mul (Pass@1) | 69.3 | 77.4 | 77.3 | 77.2 | 81.7 | 80.5 | **82.6** |

|

| 183 |

+

| | LiveCodeBench (Pass@1-COT) | 18.8 | 29.2 | 31.1 | 28.4 | 36.3 | 33.4 | **40.5** |

|

| 184 |

+

| | LiveCodeBench (Pass@1) | 20.3 | 28.4 | 28.7 | 30.1 | 32.8 | 34.2 | **37.6** |

|

| 185 |

+

| | Codeforces (Percentile) | 17.5 | 35.6 | 24.8 | 25.3 | 20.3 | 23.6 | **51.6** |

|

| 186 |

+

| | SWE Verified (Resolved) | - | 22.6 | 23.8 | 24.5 | **50.8** | 38.8 | 42.0 |

|

| 187 |

+

| | Aider-Edit (Acc.) | 60.3 | 71.6 | 65.4 | 63.9 | **84.2** | 72.9 | 79.7 |

|

| 188 |

+

| | Aider-Polyglot (Acc.) | - | 18.2 | 7.6 | 5.8 | 45.3 | 16.0 | **49.6** |

|

| 189 |

+

| Math | AIME 2024 (Pass@1) | 4.6 | 16.7 | 23.3 | 23.3 | 16.0 | 9.3 | **39.2** |

|

| 190 |

+

| | MATH-500 (EM) | 56.3 | 74.7 | 80.0 | 73.8 | 78.3 | 74.6 | **90.2** |

|

| 191 |

+

| | CNMO 2024 (Pass@1) | 2.8 | 10.8 | 15.9 | 6.8 | 13.1 | 10.8 | **43.2** |

|

| 192 |

+

| Chinese | CLUEWSC (EM) | 89.9 | 90.4 | **91.4** | 84.7 | 85.4 | 87.9 | 90.9 |

|

| 193 |

+

| | C-Eval (EM) | 78.6 | 79.5 | 86.1 | 61.5 | 76.7 | 76.0 | **86.5** |

|

| 194 |

+

| | C-SimpleQA (Correct) | 48.5 | 54.1 | 48.4 | 50.4 | 51.3 | 59.3 | **64.8** |

|

| 195 |

+

|

| 196 |

+

Note: All models are evaluated in a configuration that limits the output length to 8K. Benchmarks containing fewer than 1000 samples are tested multiple times using varying temperature settings to derive robust final results. DeepSeek-V3 stands as the best-performing open-source model, and also exhibits competitive performance against frontier closed-source models.

|

| 197 |

+

|

| 198 |

+

</div>

|

| 199 |

+

|

| 200 |

+

|

| 201 |

+

#### Open Ended Generation Evaluation

|

| 202 |

+

|

| 203 |

+

<div align="center">

|

| 204 |

+

|

| 205 |

+

|

| 206 |

+

|

| 207 |

+

| Model | Arena-Hard | AlpacaEval 2.0 |

|

| 208 |

+

|-------|------------|----------------|

|

| 209 |

+

| DeepSeek-V2.5-0905 | 76.2 | 50.5 |

|

| 210 |

+

| Qwen2.5-72B-Instruct | 81.2 | 49.1 |

|

| 211 |

+

| LLaMA-3.1 405B | 69.3 | 40.5 |

|

| 212 |

+

| GPT-4o-0513 | 80.4 | 51.1 |

|

| 213 |

+

| Claude-Sonnet-3.5-1022 | 85.2 | 52.0 |

|

| 214 |

+

| DeepSeek-V3 | **85.5** | **70.0** |

|

| 215 |

+

|

| 216 |

+

Note: English open-ended conversation evaluations. For AlpacaEval 2.0, we use the length-controlled win rate as the metric.

|

| 217 |

+

</div>

|

| 218 |

+

|

| 219 |

+

|

| 220 |

+

## 5. Chat Website & API Platform

|

| 221 |

+

You can chat with DeepSeek-V3 on DeepSeek's official website: [chat.deepseek.com](https://chat.deepseek.com/sign_in)

|

| 222 |

+

|

| 223 |

+

We also provide OpenAI-Compatible API at DeepSeek Platform: [platform.deepseek.com](https://platform.deepseek.com/)

|

| 224 |

+

|

| 225 |

+

## 6. How to Run Locally

|

| 226 |

+

|

| 227 |

+

DeepSeek-V3 can be deployed locally using the following hardware and open-source community software:

|

| 228 |

+

|

| 229 |

+

1. **DeepSeek-Infer Demo**: We provide a simple and lightweight demo for FP8 and BF16 inference.

|

| 230 |

+

2. **SGLang**: Fully support the DeepSeek-V3 model in both BF16 and FP8 inference modes.

|

| 231 |

+

3. **LMDeploy**: Enables efficient FP8 and BF16 inference for local and cloud deployment.

|

| 232 |

+

4. **TensorRT-LLM**: Currently supports BF16 inference and INT4/8 quantization, with FP8 support coming soon.

|

| 233 |

+

5. **vLLM**: Support DeekSeek-V3 model with FP8 and BF16 modes for tensor parallelism and pipeline parallelism.

|

| 234 |

+

6. **AMD GPU**: Enables running the DeepSeek-V3 model on AMD GPUs via SGLang in both BF16 and FP8 modes.

|

| 235 |

+

7. **Huawei Ascend NPU**: Supports running DeepSeek-V3 on Huawei Ascend devices.

|

| 236 |

+

|

| 237 |

+

Since FP8 training is natively adopted in our framework, we only provide FP8 weights. If you require BF16 weights for experimentation, you can use the provided conversion script to perform the transformation.

|

| 238 |

+

|

| 239 |

+

Here is an example of converting FP8 weights to BF16:

|

| 240 |

+

|

| 241 |

+

```shell

|

| 242 |

+

cd inference

|

| 243 |

+

python fp8_cast_bf16.py --input-fp8-hf-path /path/to/fp8_weights --output-bf16-hf-path /path/to/bf16_weights

|

| 244 |

+

```

|

| 245 |

+

|

| 246 |

+

**NOTE: Huggingface's Transformers has not been directly supported yet.**

|

| 247 |

+

|

| 248 |

+

### 6.1 Inference with DeepSeek-Infer Demo (example only)

|

| 249 |

+

|

| 250 |

+

#### Model Weights & Demo Code Preparation

|

| 251 |

+

|

| 252 |

+

First, clone our DeepSeek-V3 GitHub repository:

|

| 253 |

+

|

| 254 |

+

```shell

|

| 255 |

+

git clone https://github.com/deepseek-ai/DeepSeek-V3.git

|

| 256 |

+

```

|

| 257 |

+

|

| 258 |

+

Navigate to the `inference` folder and install dependencies listed in `requirements.txt`.

|

| 259 |

+

|

| 260 |

+

```shell

|

| 261 |

+

cd DeepSeek-V3/inference

|

| 262 |

+

pip install -r requirements.txt

|

| 263 |

+

```

|

| 264 |

+

|

| 265 |

+

Download the model weights from HuggingFace, and put them into `/path/to/DeepSeek-V3` folder.

|

| 266 |

+

|

| 267 |

+

#### Model Weights Conversion

|

| 268 |

+

|

| 269 |

+

Convert HuggingFace model weights to a specific format:

|

| 270 |

+

|

| 271 |

+

```shell

|

| 272 |

+

python convert.py --hf-ckpt-path /path/to/DeepSeek-V3 --save-path /path/to/DeepSeek-V3-Demo --n-experts 256 --model-parallel 16

|

| 273 |

+

```

|

| 274 |

+

|

| 275 |

+

#### Run

|

| 276 |

+

|

| 277 |

+

Then you can chat with DeepSeek-V3:

|

| 278 |

+

|

| 279 |

+

```shell

|

| 280 |

+

torchrun --nnodes 2 --nproc-per-node 8 generate.py --node-rank $RANK --master-addr $ADDR --ckpt-path /path/to/DeepSeek-V3-Demo --config configs/config_671B.json --interactive --temperature 0.7 --max-new-tokens 200

|

| 281 |

+

```

|

| 282 |

+

|

| 283 |

+

Or batch inference on a given file:

|

| 284 |

+

|

| 285 |

+

```shell

|

| 286 |

+

torchrun --nnodes 2 --nproc-per-node 8 generate.py --node-rank $RANK --master-addr $ADDR --ckpt-path /path/to/DeepSeek-V3-Demo --config configs/config_671B.json --input-file $FILE

|

| 287 |

+

```

|

| 288 |

+

|

| 289 |

+

### 6.2 Inference with SGLang (recommended)

|

| 290 |

+

|

| 291 |

+

[SGLang](https://github.com/sgl-project/sglang) currently supports MLA optimizations, FP8 (W8A8), FP8 KV Cache, and Torch Compile, delivering state-of-the-art latency and throughput performance among open-source frameworks.

|

| 292 |

+

|

| 293 |

+

Notably, [SGLang v0.4.1](https://github.com/sgl-project/sglang/releases/tag/v0.4.1) fully supports running DeepSeek-V3 on both **NVIDIA and AMD GPUs**, making it a highly versatile and robust solution.

|

| 294 |

+

|

| 295 |

+

Here are the launch instructions from the SGLang team: https://github.com/sgl-project/sglang/tree/main/benchmark/deepseek_v3

|

| 296 |

+

|

| 297 |

+

### 6.3 Inference with LMDeploy (recommended)

|

| 298 |

+

[LMDeploy](https://github.com/InternLM/lmdeploy), a flexible and high-performance inference and serving framework tailored for large language models, now supports DeepSeek-V3. It offers both offline pipeline processing and online deployment capabilities, seamlessly integrating with PyTorch-based workflows.

|

| 299 |

+

|

| 300 |

+

For comprehensive step-by-step instructions on running DeepSeek-V3 with LMDeploy, please refer to here: https://github.com/InternLM/lmdeploy/issues/2960

|

| 301 |

+

|

| 302 |

+

|

| 303 |

+

### 6.4 Inference with TRT-LLM (recommended)

|

| 304 |

+

|

| 305 |

+

[TensorRT-LLM](https://github.com/NVIDIA/TensorRT-LLM) now supports the DeepSeek-V3 model, offering precision options such as BF16 and INT4/INT8 weight-only. Support for FP8 is currently in progress and will be released soon. You can access the custom branch of TRTLLM specifically for DeepSeek-V3 support through the following link to experience the new features directly: https://github.com/NVIDIA/TensorRT-LLM/tree/deepseek/examples/deepseek_v3.

|

| 306 |

+

|

| 307 |

+

### 6.5 Inference with vLLM (recommended)

|

| 308 |

+

|

| 309 |

+