---

license: other

language:

- en

library_name: transformers

tags:

- RLHF

- Nexusflow

- Athene

- Chat Model

---

# Athene-V2-Chat-72B: Rivaling GPT-4o across Benchmarks


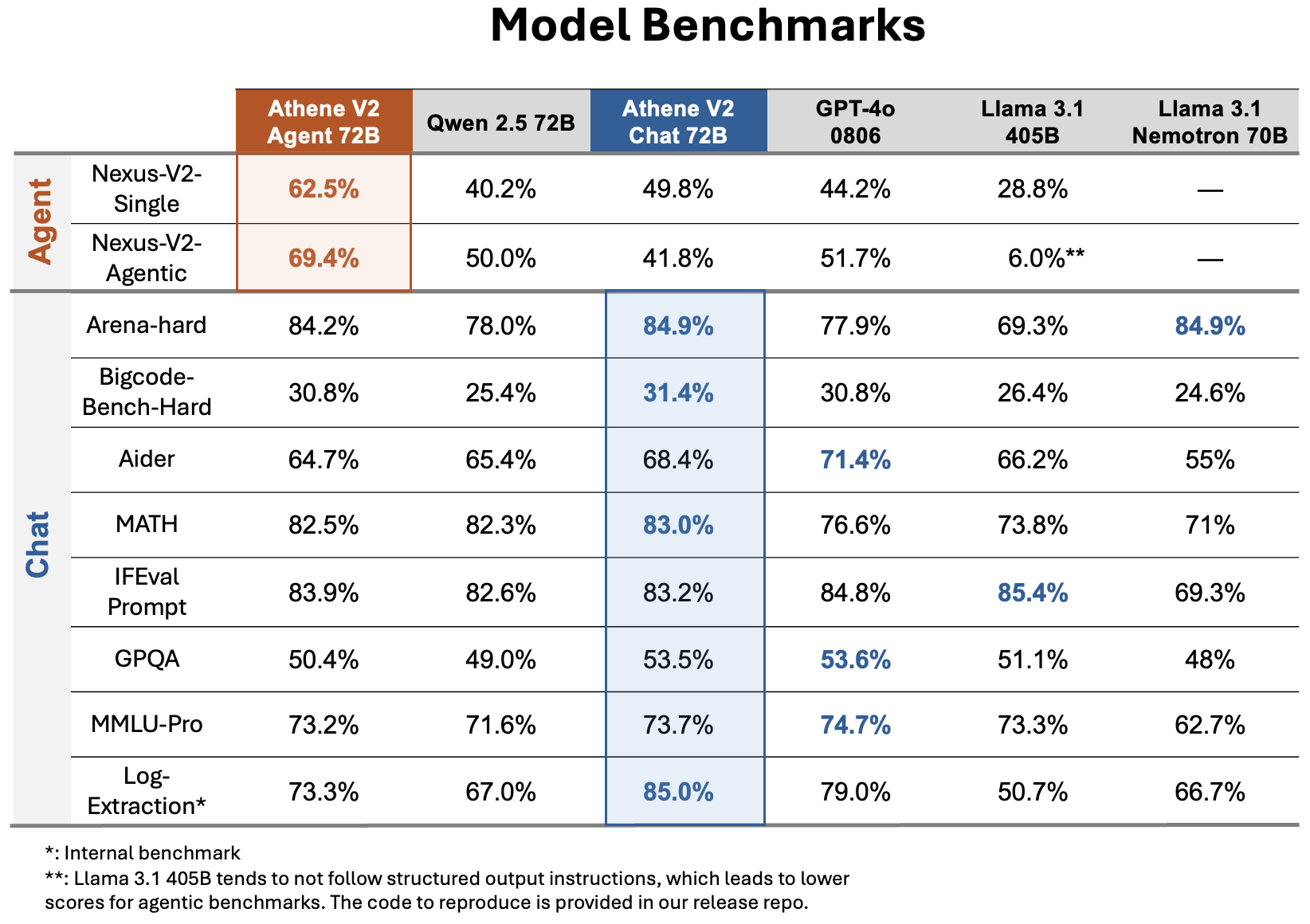

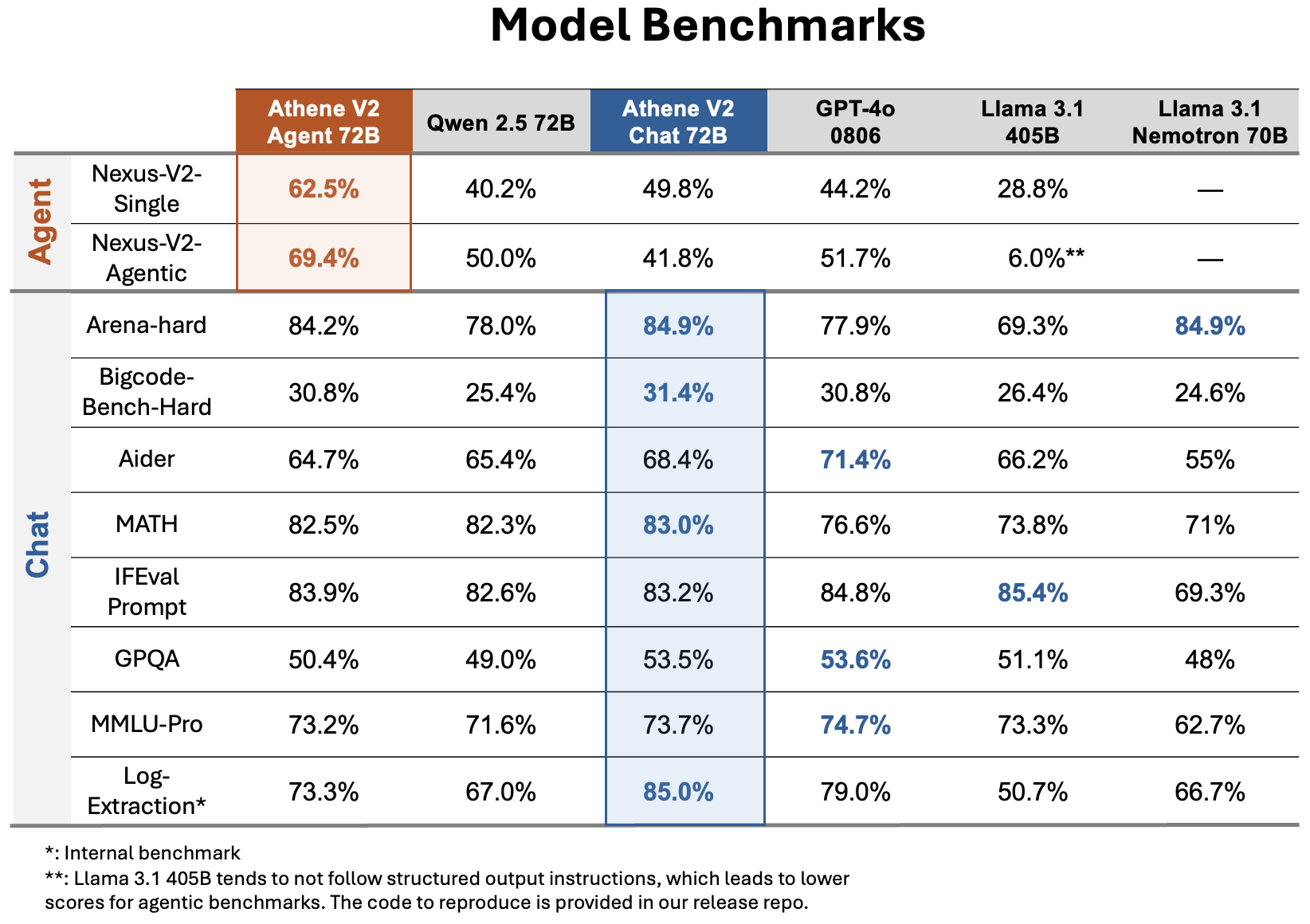

We introduce Athene-V2-Chat-72B, an open-weights LLM that rivals GPT-4o across benchmarks. It is trained with RLHF, starting from Qwen-2.5-72B.

Athene-V2-Chat-72B excels in chat, math, and coding. Its sister model, [Athene-V2-Agent-72B](https://huggingface.co/Nexusflow/Athene-V2-Agent), surpasses GPT-4o in complex function calling and agent applications.

Benchmark performance:

- **Developed by:** The Nexusflow Team

- **Model type:** Chat Model

- **Finetuned from model:** [Qwen 2.5 72B](https://huggingface.co/Qwen/Qwen2.5-72B-Instruct)

- **License:** [Nexusflow Research License](https://huggingface.co/Nexusflow/Athene-V2-Chat/blob/main/Nexusflow_Research_License.pdf)

- **Blog:** https://nexusflow.ai/blogs/athene-V2

## Usage

Athene-V2-Chat uses the same chat template as Qwen 2.5 72B. Below is a simple usage example with the Transformers library.

```Python
import torch
import transformers

model_id = "Nexusflow/Athene-V2-Chat"

# Load the model in bfloat16 and let Accelerate place it across available devices.
pipeline = transformers.pipeline(
    "text-generation",
    model=model_id,
    model_kwargs={"torch_dtype": torch.bfloat16},
    device_map="auto",
)

messages = [
    {"role": "system", "content": "You are an Athene Noctua, you can only speak with owl sounds. Whoooo whooo."},
    {"role": "user", "content": "Whooo are you?"},
]

# Stop on the tokenizer's EOS token or on <|endoftext|>, the end-of-text token
# in the Qwen 2.5 vocabulary (<|end_of_text|> is the Llama 3 spelling).
terminators = [
    pipeline.tokenizer.eos_token_id,
    pipeline.tokenizer.convert_tokens_to_ids("<|endoftext|>"),
]

outputs = pipeline(
    messages,
    max_new_tokens=256,
    eos_token_id=terminators,
    do_sample=True,
    temperature=0.6,
    top_p=0.9,
)

# The pipeline returns the full conversation; the last entry is the assistant's reply.
print(outputs[0]["generated_text"][-1])
```
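Since the model shares Qwen 2.5's chat template, it can help to see roughly what a conversation looks like once rendered into a prompt string. The sketch below is a hand-written approximation of the ChatML layout, not the template shipped with the tokenizer; `render_chatml` is a hypothetical helper, and the real template may add details such as a default system prompt.

```python
# Hand-written approximation of the ChatML layout used by Qwen 2.5 chat templates.
# Assumption: the template bundled with the tokenizer may differ in details,
# so treat this as illustrative only.

def render_chatml(messages, add_generation_prompt=True):
    """Render a list of {"role", "content"} dicts as a ChatML-style prompt string."""
    parts = []
    for message in messages:
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


example_messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Whooo are you?"},
]
prompt = render_chatml(example_messages)
print(prompt)
```

In practice, `pipeline.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)` returns the exact string the model sees, and the pipeline applies it automatically when given a list of messages.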

We found that adding a system prompt that instructs the model to think step by step improves its performance on math and on problems such as counting the `r`s in "strawberry". For fairness, we **do not** include such a system prompt during chat evaluation.
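One way to try the step-by-step setup described above is to prepend a system message before calling the model. This is a minimal sketch: the prompt wording and the `with_step_by_step` helper are assumptions made for illustration, not the prompt used by the authors.

```python
# Hypothetical system prompt; the wording is an assumption, not the authors' prompt.
STEP_BY_STEP_PROMPT = (
    "Think through the problem step by step before giving the final answer."
)


def with_step_by_step(messages, system_prompt=STEP_BY_STEP_PROMPT):
    """Return a new message list whose system message asks for step-by-step reasoning."""
    if messages and messages[0]["role"] == "system":
        # Extend the existing system message rather than adding a second one.
        merged = {
            "role": "system",
            "content": f"{messages[0]['content']}\n{system_prompt}",
        }
        return [merged] + list(messages[1:])
    return [{"role": "system", "content": system_prompt}] + list(messages)


question = [
    {"role": "user", "content": "How many times does the letter r appear in strawberry?"}
]
reasoning_messages = with_step_by_step(question)
print(reasoning_messages)
```

The resulting list can be passed as `messages` to the pipeline call in the Usage section; the original list is left unmodified.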

## Acknowledgment

We would like to thank the [LMSYS Organization](https://lmsys.org/) for their support in testing the model. We would also like to thank Meta AI and the open-source community for their efforts in providing the datasets and base models.